Hypothesis testing is a cornerstone of data-driven business decision-making, providing a formal, statistical process to validate assumptions or claims using sample data.

By framing clear hypotheses and rigorously testing them, organizations minimize risks and make evidence-based choices, optimizing strategies like marketing campaigns, product development, and operational improvements.

Null and Alternative Hypotheses: Formulation and Business Context

Null Hypothesis (H0): Represents the default or status quo assumption, typically indicating no effect or difference.

Alternative Hypothesis (H1 or Ha): Proposes a change or effect that the analysis aims to support.

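In business, for example, a company may hypothesize that a new advertising campaign increases sales (H1), while the null hypothesis assumes the campaign has no impact on sales (H0). The test determines whether the observed data provide enough evidence to reject H0 in favor of H1. Failing to reject H0 does not prove it true; it only means the data were not strong enough to rule it out.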
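As a rough illustration of the advertising example, the sketch below tests H0 (the campaign has no effect on daily sales) against H1 (the campaign increases daily sales) with an exact permutation test. The permutation test is one choice among several and is not prescribed by the text. The sales figures and the function name `permutation_p_value` are hypothetical, made up for the example.

```python
from itertools import combinations

def permutation_p_value(control, treatment):
    """One-sided exact permutation test for H1: mean(treatment) > mean(control)."""
    pooled = control + treatment
    k = len(treatment)
    total = sum(pooled)

    def mean_diff(treat_sum):
        # Difference in means implied by a given sum for the "treatment" group
        return treat_sum / k - (total - treat_sum) / (len(pooled) - k)

    observed = mean_diff(sum(treatment))
    at_least_as_large = 0
    n_splits = 0
    # Under H0 the group labels are arbitrary, so every relabeling is equally likely
    for idx in combinations(range(len(pooled)), k):
        n_splits += 1
        if mean_diff(sum(pooled[i] for i in idx)) >= observed:
            at_least_as_large += 1
    return observed, at_least_as_large / n_splits

# Hypothetical daily sales (units) without and with the campaign
sales_without = [120, 135, 128, 140, 132, 125]
sales_with = [138, 150, 145, 142, 155, 148]

observed_lift, p_value = permutation_p_value(sales_without, sales_with)
ALPHA = 0.05  # significance level chosen before looking at the data
decision = "reject H0" if p_value < ALPHA else "fail to reject H0"
print(f"observed lift = {observed_lift:.2f}, p = {p_value:.4f}, {decision}")
```

With samples this small the full enumeration is cheap (924 relabelings); for larger samples a random subset of relabelings is the usual substitute.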

Type I and Type II Errors: Significance Level and Power in Business Decisions

Type I Error (α): Rejecting the null hypothesis when it is actually true (false positive). The significance level α, commonly set at 5%, is the probability of this error that the analyst agrees to tolerate before running the test.

Type II Error (β): Failing to reject the null hypothesis when the alternative is true (false negative).

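Power of Test (1-β): Probability of correctly rejecting the null hypothesis when a true effect exists. Higher power means a lower Type II error rate; power generally rises with larger samples, larger effects, and less variable data.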

Balancing these errors is crucial in business, where false positives may waste resources and false negatives may miss opportunities.
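The trade-off between α, β, and power can be made concrete with a small calculation. The sketch below assumes a one-sided, one-sample z-test with a known standard deviation, a simplification chosen for illustration; the function name `z_test_power` and the scenario numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)

def z_test_power(effect, sigma, n, alpha=0.05):
    """Power of a one-sided, one-sample z-test (known sigma) to detect `effect`."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)     # rejection threshold under H0
    shift = effect * sqrt(n) / sigma           # how far a true effect moves the statistic
    return 1 - STD_NORMAL.cdf(z_crit - shift)  # P(reject H0 | effect is real)

# Hypothetical scenario: a true lift of 2 units against a standard deviation of 10
for n in (25, 100, 400):
    power = z_test_power(effect=2.0, sigma=10.0, n=n)
    print(f"n={n:4d}  power={power:.3f}  beta={1 - power:.3f}")
```

When the true effect is zero the function returns α itself, which is the Type I error rate. For a fixed α, the main lever for reducing β is collecting more data.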

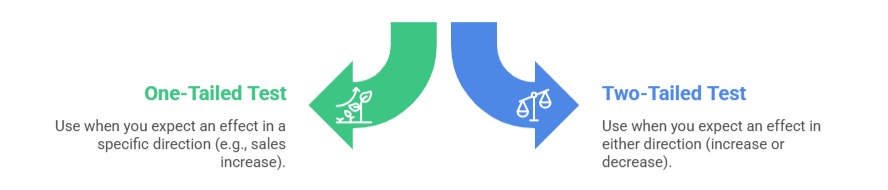

One-Tailed and Two-Tailed Tests: Choosing the Appropriate Direction

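A one-tailed test looks for an effect in one specified direction (for example, sales increased), while a two-tailed test looks for a difference in either direction (sales changed). The choice depends on the business question and the hypotheses, and should be made before looking at the data. One-tailed tests have more power to detect an effect in the specified direction but cannot detect effects in the opposite direction.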
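To see how the direction of the test changes the conclusion, the sketch below computes one-tailed (upper) and two-tailed p-values for the same z statistic. It assumes the test statistic is standard normal under H0; the helper `z_p_values` and the z values are hypothetical.

```python
from statistics import NormalDist

def z_p_values(z):
    """Return (upper-tailed, two-tailed) p-values for a z statistic."""
    cdf = NormalDist().cdf
    p_upper = 1 - cdf(z)           # H1: the effect is positive
    p_two = 2 * (1 - cdf(abs(z)))  # H1: the effect is nonzero, either direction
    return p_upper, p_two

ALPHA = 0.05
for z in (1.8, -1.8):
    p_upper, p_two = z_p_values(z)
    print(
        f"z={z:+.1f}  one-tailed p={p_upper:.4f} (reject: {p_upper < ALPHA})  "
        f"two-tailed p={p_two:.4f} (reject: {p_two < ALPHA})"
    )
```

For a positive statistic the two-tailed p-value is twice the one-tailed one, so a borderline result can be significant one-tailed but not two-tailed. For the negative statistic, the upper-tailed test returns a large p-value even though the effect is just as far from zero.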

P-Values and Statistical Significance Interpretation

P-Value: Probability of observing results at least as extreme as those actually observed, assuming the null hypothesis is true.

Statistical Significance: Typically, p-values below the significance level (e.g., 0.05) lead to rejecting H0.

Interpreting p-values correctly is vital: a small p-value suggests strong evidence against H0, but it is not the probability that H0 is true, and it does not measure effect size or business importance.
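The gap between statistical significance and business importance shows up clearly with very large samples. The sketch below runs a pooled two-proportion z-test (a standard large-sample approximation, used here as an illustrative choice) on hypothetical conversion counts; the function name `two_proportion_z_test` and all figures are assumptions.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-tailed z-test for a difference in conversion rates (pooled variance)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # common rate assumed under H0
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_b - rate_a, z, p_value

# Hypothetical A/B test: 10.0% vs 10.1% conversion, one million visitors per arm
lift, z, p_value = two_proportion_z_test(100_000, 1_000_000, 101_000, 1_000_000)
print(f"absolute lift = {lift:.4f}, z = {z:.2f}, p = {p_value:.4f}")
```

Here the p-value falls below 0.05 even though the lift is only a tenth of a percentage point. Whether that lift justifies the cost of the change is a business question the p-value cannot answer.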