Responsible disclosure is a fundamental practice in cybersecurity that involves the ethical reporting and management of discovered vulnerabilities to ensure timely remediation while minimizing risks to users and organizations.

In AI-augmented environments, where AI components are embedded in larger software systems, the range of vulnerabilities and risks widens, and disclosure practices must adapt to address AI-specific challenges.

Responsible disclosure in these contexts must balance transparency, prompt communication, privacy concerns, and the safeguarding of AI models and data.

Understanding Responsible Disclosure in AI-Augmented Environments

AI-augmented systems combine traditional software components with AI models, data pipelines, and decision-making algorithms. Responsible disclosure here means thoughtfully managing:

1. AI-Specific Vulnerabilities: Issues like adversarial attacks, data poisoning, model extraction, and bias exploitation that may not exist in traditional systems.

2. Complex Stakeholders: Multiple parties, including AI developers, data providers, users, regulatory bodies, and third-party auditors.

3. Data Sensitivity: AI models often encapsulate private or proprietary data, raising privacy concerns during disclosure.

4. Model and System Dependencies: Vulnerabilities may propagate across integrated components, complicating remediation coordination.
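The fourth point, propagation across integrated components, can be made concrete with a small sketch. It assumes the organization keeps a map of which components feed into which; the component names and the `DEPENDENTS` map below are hypothetical, and the breadth-first traversal is just one reasonable way to list what a flaw in one component might reach.

```python
from collections import deque

# Hypothetical dependency map: each key feeds into the components in its list.
DEPENDENTS = {
    "training-data-pipeline": ["fraud-model"],
    "fraud-model": ["scoring-api"],
    "scoring-api": ["customer-dashboard", "alerting-service"],
    "customer-dashboard": [],
    "alerting-service": [],
}


def affected_components(root, dependents):
    """Return every component downstream of `root`, in breadth-first order."""
    seen = {root}
    order = []
    queue = deque([root])
    while queue:
        current = queue.popleft()
        for child in dependents.get(current, []):
            if child not in seen:  # guard against cycles and shared dependencies
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order
```

Calling `affected_components("training-data-pipeline", DEPENDENTS)` lists the model, the API, and both consumers, which suggests who may need to be included when remediation is coordinated.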

Key Steps in Responsible Disclosure for AI Systems

To protect AI systems and stakeholders, organizations must follow a disciplined disclosure workflow. The following steps describe that workflow in order.

1. Identification and Verification: Confirm the vulnerability’s existence, potential impact, and reproducibility within the AI system and related components.

2. Internal Reporting: Notify internal stakeholders, including AI engineers, data scientists, security teams, and management, emphasizing confidentiality and potential business impacts.

3. Risk Assessment: Evaluate the severity, exploitability, and scope, factoring in AI-specific threats such as model inversion or prompt injection.

4. Coordinated Remediation Planning: Collaborate across interdisciplinary teams to develop fixes, which may include model retraining, patching, or policy changes.

5. Selective Disclosure Timing: Balance the urgency of public disclosure with the need to implement mitigations, following established timelines and stakeholder agreements.

6. Public Communication: Prepare clear, responsible advisories or reports that explain the vulnerability, affected components, mitigation steps, and recommendations without exposing attack vectors unnecessarily.

7. Continuous Monitoring: Post-disclosure, monitor for any exploitation attempts or unintended consequences, updating mitigation as necessary.
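One way to keep a case from skipping steps is to track it as a simple ordered state machine. The sketch below is illustrative only: the `Stage` and `DisclosureCase` names are hypothetical, and the 90-day embargo default is an assumption chosen for the example, not a timeline prescribed here. Actual timelines should follow the organization's policy and stakeholder agreements.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from enum import IntEnum


class Stage(IntEnum):
    """The seven steps listed above, in order."""
    IDENTIFICATION = 1
    INTERNAL_REPORTING = 2
    RISK_ASSESSMENT = 3
    REMEDIATION_PLANNING = 4
    DISCLOSURE_TIMING = 5
    PUBLIC_COMMUNICATION = 6
    CONTINUOUS_MONITORING = 7


@dataclass
class DisclosureCase:
    title: str
    reported_on: date
    embargo_days: int = 90  # assumption: illustrative default only
    stage: Stage = Stage.IDENTIFICATION
    history: list = field(default_factory=list)

    def advance(self, note=""):
        """Move to the next stage, recording the completed one; steps cannot be skipped."""
        if self.stage is Stage.CONTINUOUS_MONITORING:
            raise ValueError("case is already in the final stage")
        self.history.append((self.stage, note))
        self.stage = Stage(self.stage + 1)
        return self.stage

    def planned_disclosure_date(self):
        """Planned public disclosure date: report date plus the embargo window."""
        return self.reported_on + timedelta(days=self.embargo_days)
```

A case created with `DisclosureCase("prompt injection in support bot", date(2024, 1, 10))` starts at `IDENTIFICATION`, and each call to `advance()` records the completed step in `history`, giving an audit trail of the process.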

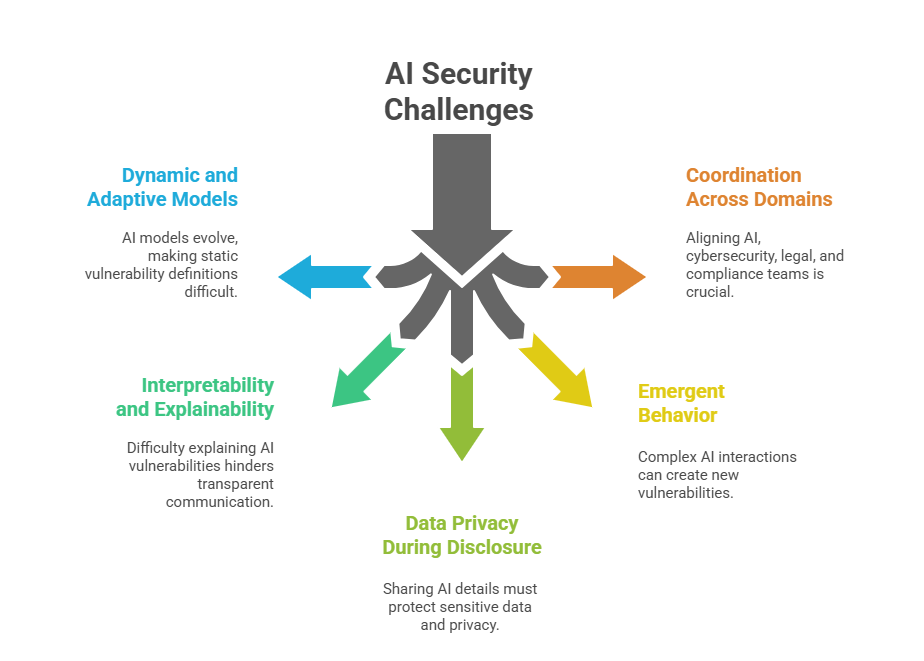

Challenges Unique to AI Environments

Due to their adaptive nature, AI systems require tailored methods for identifying and addressing vulnerabilities.

Best Practices for Responsible Disclosure in AI-Augmented Settings

Given the complexity of AI systems, organizations must implement structured workflows to ensure responsible and ethical disclosure. The following best practices support that goal.

1. Develop Clear Policies: Establish organizational policies detailing AI-specific vulnerability definitions, disclosure workflows, and roles.

2. Engage Multidisciplinary Teams: Include AI researchers, security analysts, legal advisors, and communication experts in managing disclosures.

3. Maintain Confidentiality: Use secure communication channels and access controls to protect sensitive information during the disclosure process.

4. Leverage Standards and Frameworks: Adopt guidelines such as the NIST AI Risk Management Framework (AI RMF), alongside established industry best practices.

5. Educate and Train: Build staff awareness of AI-specific risks and of each person's responsibilities in the disclosure process.

6. Foster External Collaboration: Coordinate with regulatory bodies, vendors, and security researchers for wider vulnerability management.
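The confidentiality practice above, together with the goal of publishing advisories that do not expose attack vectors unnecessarily, can be sketched as an audience-based view of a report. Everything named here is hypothetical: the `VulnerabilityReport` fields, the audience labels, and the `VISIBLE_FIELDS` mapping are assumptions for illustration, and a real deployment would rely on the organization's existing access control system.

```python
from dataclasses import asdict, dataclass

# Hypothetical mapping from audience to the report fields it may see.
VISIBLE_FIELDS = {
    "security_team": {
        "summary", "affected_components", "mitigation",
        "reproduction_steps", "sample_payload",
    },
    "management": {"summary", "affected_components", "mitigation"},
    "public": {"summary", "affected_components", "mitigation"},
}


@dataclass(frozen=True)
class VulnerabilityReport:
    summary: str
    affected_components: tuple
    mitigation: str
    reproduction_steps: str  # sensitive: describes the attack vector
    sample_payload: str      # sensitive: working exploit input


def view_for(report, audience):
    """Return only the fields the audience may see; unknown audiences get nothing."""
    allowed = VISIBLE_FIELDS.get(audience, set())
    return {name: value for name, value in asdict(report).items() if name in allowed}
```

Defaulting unknown audiences to an empty view is a deliberate deny-by-default choice: a new recipient sees nothing until someone explicitly grants access.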
