Generative Artificial Intelligence (AI) has emerged as a transformative technology that creates novel content by learning from vast datasets. In cybersecurity, generative AI enables the emulation of adversary patterns (simulated attacker behaviors and tactics, techniques, and procedures, or TTPs) to improve threat understanding, incident response, and proactive defense.

By generating realistic attack scenarios and actor behaviors, security teams can better anticipate potential threats and evaluate their defenses in simulated environments. However, the use of generative AI in this context must carefully consider ethical boundaries to prevent misuse, respect privacy, and comply with legal and organizational policies.

Generative AI for Emulating Adversary Patterns

Generative AI models, such as Generative Adversarial Networks (GANs) and Large Language Models (LLMs), learn from extensive threat intelligence data, attack histories, and cyber kill chain frameworks to produce synthetic yet realistic representations of adversary actions. Key capabilities include:

1. Attack Scenario Generation: Automatically creating varied and complex cyberattack simulations representing known or emerging TTPs that adversaries might employ.

2. Behavioral Emulation: Mimicking attacker logic, decision-making, and tool usage to produce realistic threat activities that stress-test security controls.

3. Malware Variant Synthesis: Generating polymorphic malware samples to evaluate detection systems against evolving threats.

4. Phishing Campaign Simulation: Crafting convincing phishing emails to test employee awareness and defensive mechanisms.

5. Threat Actor Profiling: Producing detailed adversary personas blending various attack styles and motivations for training and threat hunting exercises.

These generative capabilities facilitate dynamic, scalable, and diverse adversary emulation beyond static, manual exercise scripting.
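To make attack scenario generation concrete, here is a minimal sketch that assembles a tabletop scenario by picking one technique per kill chain phase. It is a deliberately simple stand-in: a seeded random sampler takes the place of a trained GAN or LLM, and the `CATALOG` contents and the `generate_scenario` name are assumptions made for illustration. The technique IDs are MITRE ATT&CK identifiers, but the selection is arbitrary and far from complete.

```python
import random

# Hypothetical mini-catalog: kill chain phase -> (ATT&CK technique ID, name).
# A real system would draw on a full threat intelligence knowledge base.
CATALOG = {
    "initial-access": [("T1566", "Phishing"),
                       ("T1190", "Exploit Public-Facing Application")],
    "execution": [("T1059", "Command and Scripting Interpreter"),
                  ("T1053", "Scheduled Task/Job")],
    "credential-access": [("T1003", "OS Credential Dumping"),
                          ("T1110", "Brute Force")],
    "lateral-movement": [("T1021", "Remote Services")],
    "exfiltration": [("T1041", "Exfiltration Over C2 Channel"),
                     ("T1048", "Exfiltration Over Alternative Protocol")],
}


def generate_scenario(seed=None):
    """Return one scenario: a list of steps, one technique per phase.

    The random choice is a placeholder for a generative model's output.
    Passing a seed makes the scenario reproducible for exercise reviews.
    """
    rng = random.Random(seed)
    steps = []
    for phase, options in CATALOG.items():
        technique_id, name = rng.choice(options)
        steps.append({"phase": phase, "technique_id": technique_id, "name": name})
    return steps


if __name__ == "__main__":
    for step in generate_scenario(seed=7):
        print(f'{step["phase"]:<18} {step["technique_id"]}  {step["name"]}')
```

The output is only a labelled plan for an exercise, not executable attack content. Swapping the sampler for a model-backed generator would keep the same step structure.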

Ethical Boundaries in Generative AI Usage

While generative AI offers powerful benefits for security preparedness, it also introduces ethical considerations and risks that require vigilant management:

1. Avoiding Malicious Use: Strict controls and policies must prevent generative capabilities from being exploited for malicious purposes such as developing real malware or launching live phishing attacks.

2. Data Privacy: Models trained on sensitive or proprietary data must not leak or reproduce confidential information in their outputs.

3. Transparency: Clear documentation and validation of generative outputs help distinguish simulations from actual threats and avoid confusion.

4. Legal Compliance: Adherence to laws governing cybersecurity testing, data usage, and offensive security practices is mandatory.

5. Controlled Environments: Generative adversary simulations should occur within isolated, monitored environments to contain risks and prevent accidental harm.

Embedding these ethical safeguards ensures generative AI supports constructive cybersecurity goals responsibly.
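Two of these safeguards, transparency and controlled environments, can be partly enforced in code. The sketch below assumes a hypothetical isolated lab range (`10.99.0.0/16`) and a hypothetical `[SIMULATION]` tag. It refuses to release a generated artifact for any target outside the lab range and labels everything it does release. The function names are illustrative, not part of any existing tool.

```python
import ipaddress

# Assumption: the organization's isolated test range. Replace with real values.
LAB_NETWORKS = [ipaddress.ip_network("10.99.0.0/16")]
SIM_TAG = "[SIMULATION]"


def in_lab(target: str) -> bool:
    """True if the target IP address falls inside an approved lab network."""
    addr = ipaddress.ip_address(target)
    return any(addr in net for net in LAB_NETWORKS)


def label_artifact(text: str) -> str:
    """Prefix generated content with a tag so it is never mistaken for a real threat."""
    return text if text.startswith(SIM_TAG) else f"{SIM_TAG} {text}"


def authorize_run(target: str, artifact: str) -> str:
    """Release a labelled artifact only when the target is inside the lab."""
    if not in_lab(target):
        raise PermissionError(f"{target} is outside the approved lab range")
    return label_artifact(artifact)


if __name__ == "__main__":
    print(authorize_run("10.99.3.4", "Password reset reminder (awareness test)"))
```

A check like this complements, but does not replace, network-level isolation and human approval of each exercise.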

Benefits of Generative AI for Adversary Emulation

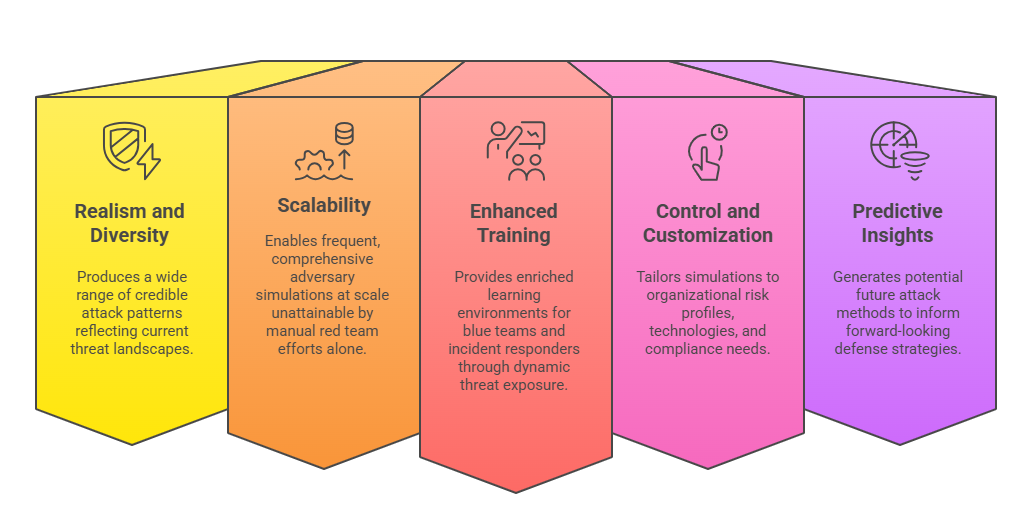

The figure above summarizes the major benefits organizations can realize when using generative AI to emulate attacker behavior. These advantages reflect its ability to scale, customize, and anticipate advanced threats.

Challenges and Best Practices

The following points summarize the main hurdles organizations face, and the practices they should adopt, when using generative AI for adversary emulation. Addressing them supports a more controlled, transparent, and efficient implementation.

1. Model Bias and Errors: Generative models may produce unrealistic or biased patterns without careful tuning and validation.

2. Resource Intensity: Training and deploying generative models requires substantial computational power and specialized expertise.

3. Oversight: Continuous monitoring and governance are essential to prevent misuse or drift into harmful applications.

4. Collaboration: Engagement between AI developers, cybersecurity experts, and legal teams fosters responsible practices.
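
As a small illustration of the validation that the first and third points call for, the sketch below reviews a batch of generated scenarios. It flags technique IDs missing from a reference set, which may indicate model errors, and techniques that dominate the batch, which may indicate bias. The reference set, the 40% threshold, and the `review_batch` name are assumptions chosen for the example.

```python
from collections import Counter

# Assumption: a vetted reference set of technique IDs the team accepts.
KNOWN_TECHNIQUES = {"T1566", "T1190", "T1059", "T1003", "T1021", "T1041"}


def review_batch(scenarios, max_share=0.4):
    """Summarize a batch of scenarios, each given as a list of technique IDs.

    Returns the IDs not found in the reference set and the IDs whose share
    of all generated steps exceeds max_share.
    """
    counts = Counter(tid for scenario in scenarios for tid in scenario)
    total = sum(counts.values())
    unknown = sorted(tid for tid in counts if tid not in KNOWN_TECHNIQUES)
    dominant = sorted(
        tid for tid, n in counts.items() if total and n / total > max_share
    )
    return {"unknown": unknown, "dominant": dominant}


if __name__ == "__main__":
    batch = [["T1566", "T1059"], ["T1566", "T9999"], ["T1566", "T1003"]]
    print(review_batch(batch))
```

In this example batch, `T9999` would be reported as unknown and `T1566` as dominant, since it accounts for half of all steps. A human reviewer would then decide whether to retune the model or widen the reference set.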
