As organizations increasingly adopt AI for automating report generation, decision-making, and insights dissemination, ensuring the accuracy and integrity of these AI-generated outputs becomes paramount.

While AI offers remarkable efficiencies, it also introduces risks related to bias, misinformation, and errors that can compromise the trustworthiness of reports.

Biases embedded in training data, model shortcomings, or unintentional misalignments with organizational objectives can lead to flawed conclusions or unfair treatment of stakeholders.

Consequently, organizations must establish robust processes for verifying accuracy, reducing bias, and validating the outputs of AI-generated reports.

Importance of Accuracy in AI-Generated Reports

Accuracy refers to the degree to which an AI-generated report reflects the true state of the underlying data and the insights it aims to communicate. Four factors drive it:

1. Data Quality: High-quality, relevant, and correctly preprocessed data underpin accurate AI outputs. The garbage in, garbage out (GIGO) principle applies fully here: a model cannot correct for inputs that are incomplete, duplicated, or wrong.

2. Model Precision: Advanced models tuned specifically for the domain, with thoroughly validated algorithms, reduce errors.

3. Context Awareness: Incorporating contextual understanding ensures reports are relevant, nuanced, and correctly framed within organizational priorities.

4. Regular Updates: Continually retraining models with fresh, real-world data maintains relevance and correctness amid changing conditions.
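As an illustration of the data-quality factor above, here is a minimal sketch of a pre-generation quality gate. The function name `check_quality`, the `QualityReport` class, the field names, and the 5% missing-value threshold are all hypothetical choices for this example, not part of any particular tool; it assumes the input is a list of dictionaries with hashable values.

```python
from dataclasses import dataclass


@dataclass
class QualityReport:
    """Summary of basic data-quality findings (hypothetical structure)."""
    total_rows: int
    missing_by_field: dict
    duplicate_rows: int

    def passes(self, max_missing_ratio: float = 0.05) -> bool:
        # Assumed policy: no duplicates, and no field missing in more
        # than max_missing_ratio of rows. Adjust to your own standards.
        if self.total_rows == 0:
            return False
        worst = max(self.missing_by_field.values(), default=0)
        return self.duplicate_rows == 0 and worst / self.total_rows <= max_missing_ratio


def check_quality(rows, required_fields):
    """Count missing values per required field and exact duplicate rows."""
    missing = {field: 0 for field in required_fields}
    seen = set()
    duplicates = 0
    for row in rows:
        for field in required_fields:
            # Treat None and empty strings as missing; 0 is a valid value.
            if row.get(field) in (None, ""):
                missing[field] += 1
        key = tuple(row.get(field) for field in required_fields)
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
    return QualityReport(len(rows), missing, duplicates)


if __name__ == "__main__":
    sample = [
        {"region": "EU", "revenue": 100},
        {"region": "EU", "revenue": 100},   # exact duplicate
        {"region": "US", "revenue": None},  # missing value
    ]
    report = check_quality(sample, ["region", "revenue"])
    print(report, "passes:", report.passes())
```

A gate like this would typically run before the data reaches the model, so that a failing dataset blocks report generation rather than producing a flawed report.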

Practices for Ensuring Accuracy

Improving accuracy in AI-generated insights requires combining automated checks with expert review.

Addressing and Reducing Bias

Bias in AI can stem from skewed training data, biased feature engineering, or unintended model associations. Biases can lead to disparities and unfair outcomes, impacting both credibility and compliance.

1. Bias Detection: Use statistical and exploratory analysis tools to identify potential biases across protected or otherwise sensitive attributes such as gender, race, or geography.

2. Diverse and Representative Data: Gather data from varied sources that reflect all relevant stakeholder groups and scenarios.

3. Fairness-Aware Algorithms: Apply techniques such as re-weighting, adversarial debiasing, or fairness constraints during training.

4. Bias Audits: Conduct periodic audits, especially when new data or models are introduced.

5. Transparency and Documentation: Clearly document data sources, assumptions, and known biases to inform report users.
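Two of the steps above, bias detection and re-weighting, can be sketched with the standard library alone. This is a simplified illustration under stated assumptions: outcomes are binary (1 = favorable), each record has a single group label, and the function names are hypothetical. The detection metric is the demographic parity difference (the gap between the highest and lowest group selection rates), and the weights follow a common re-weighting scheme, w(g, y) = P(g) · P(y) / P(g, y), which up-weights group and outcome combinations that are under-represented relative to statistical independence.

```python
from collections import Counter


def selection_rates(groups, outcomes):
    """Share of favorable outcomes (1) within each group."""
    totals = Counter(groups)
    positives = Counter(g for g, y in zip(groups, outcomes) if y == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rates (0 = parity)."""
    return max(rates.values()) - min(rates.values())


def reweighing_weights(groups, outcomes):
    """Weight per (group, outcome) pair: P(g) * P(y) / P(g, y).

    Only pairs that actually occur in the data receive a weight.
    """
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(outcomes)
    joint_counts = Counter(zip(groups, outcomes))
    return {
        (g, y): (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for (g, y) in joint_counts
    }


if __name__ == "__main__":
    # Toy data: group "a" receives favorable outcomes far more often than "b".
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    outcomes = [1, 1, 1, 0, 1, 0, 0, 0]

    rates = selection_rates(groups, outcomes)
    print("selection rates:", rates)
    print("parity difference:", demographic_parity_difference(rates))
    print("weights:", reweighing_weights(groups, outcomes))
```

What counts as an acceptable parity difference is a policy decision rather than a property of the metric, and demographic parity is only one of several fairness definitions; a bias audit would normally report more than one.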

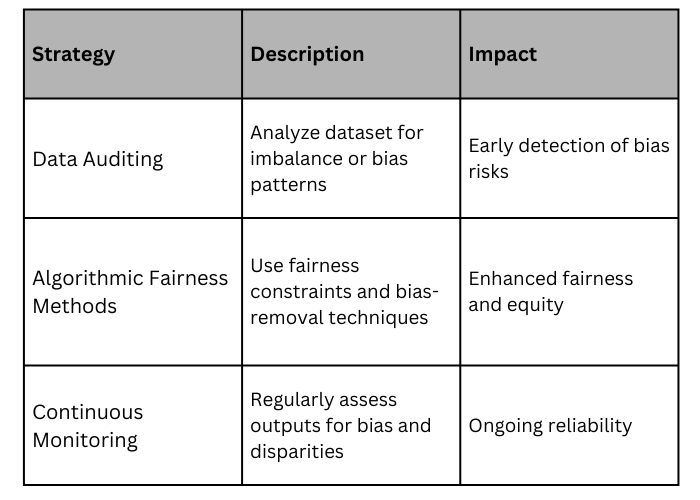

Best Practices for Bias Mitigation

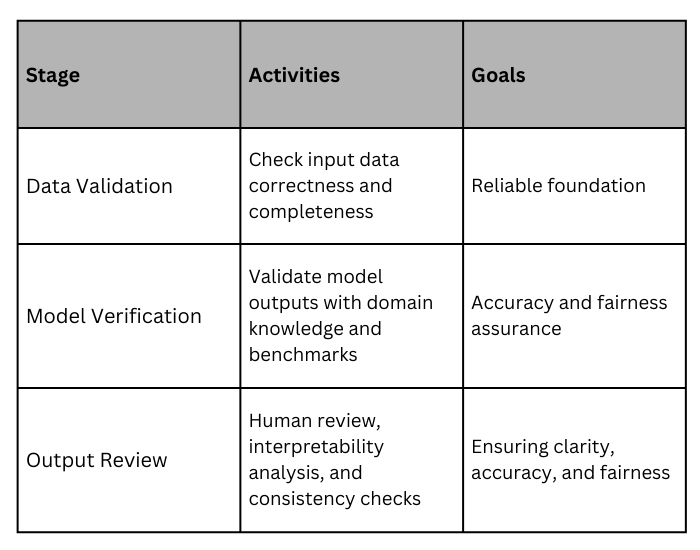

Verification and Validation of AI Outputs

Verification involves systematically reviewing and confirming the correctness and fairness of AI-generated reports before they are distributed. The following techniques are commonly combined:

1. Human-in-the-Loop: Incorporate domain experts to review and validate key findings and recommendations from AI systems.

2. Automated Checks: Use rule-based verification, anomaly detection, and consistency checks to identify obvious errors or inconsistencies.

3. Explainability and Transparency: Utilize interpretability tools (e.g., SHAP, LIME) to understand why specific outputs were generated, aiding validation.

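4. Cross-Validation: Run multiple models or datasets against the same question to verify that outputs are consistent and robust across different scenarios. The term is used here in the broad sense of cross-checking, not the k-fold procedure used during model training.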

5. Benchmarking: Compare AI-generated outputs against historical or known high-quality reports to measure deviations and correctness.
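The automated checks and benchmarking steps above might look like the following sketch. The report fields (`total_revenue`, `revenue_by_region`, `churn_rate`), the function name `check_report`, and the z-score threshold of 3.0 are assumptions made for illustration; a real report schema and tolerance would differ.

```python
from statistics import mean, stdev


def check_report(report, history, z_threshold=3.0):
    """Return a list of human-readable issues found in a generated report.

    report  -- dict with hypothetical keys: total_revenue,
               revenue_by_region (dict), churn_rate
    history -- total_revenue values from previously verified reports
    """
    issues = []

    # Consistency check: regional figures must add up to the stated total.
    parts_sum = sum(report["revenue_by_region"].values())
    if abs(parts_sum - report["total_revenue"]) > 1e-6:
        issues.append(
            f"regional revenue sums to {parts_sum}, "
            f"but total_revenue is {report['total_revenue']}"
        )

    # Rule-based range check: a rate must lie between 0 and 1.
    if not 0.0 <= report["churn_rate"] <= 1.0:
        issues.append(f"churn_rate {report['churn_rate']} is outside [0, 1]")

    # Benchmarking: flag totals far from the historical baseline (z-score).
    if len(history) >= 2:
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(report["total_revenue"] - mu) / sigma > z_threshold:
            issues.append(
                f"total_revenue {report['total_revenue']} deviates strongly "
                f"from historical mean {mu:.1f}"
            )

    return issues


if __name__ == "__main__":
    history = [100, 102, 98, 101, 99]
    suspicious = {
        "total_revenue": 150,
        "revenue_by_region": {"EU": 90, "US": 50},
        "churn_rate": 1.2,
    }
    for issue in check_report(suspicious, history):
        print("FLAG:", issue)
```

A flagged report is not necessarily wrong; a genuine change in the business can also trigger the baseline check. For that reason, flags like these are better used to route a report to a human reviewer than to reject it automatically.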

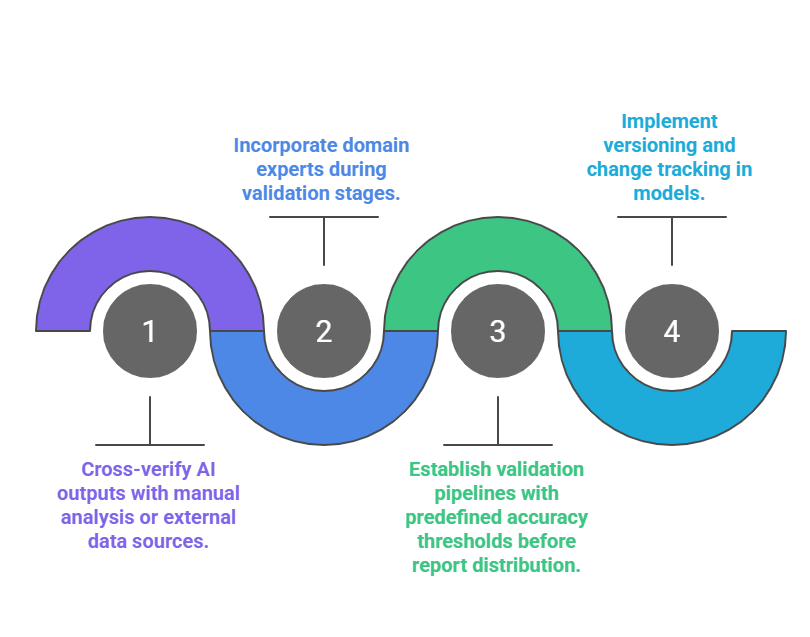

Verification Workflow

Best Practices for Ensuring Reliability in AI-Generated Reports

Maintaining the integrity of AI-generated reports demands careful planning and rigorous checks. Here’s a list of key practices that support dependable outcomes.

1. Clear Governance: Establish policies for AI usage, review cycles, and accountability.

2. Transparency and Documentation: Maintain comprehensive records of data sources, models, and decision criteria.

3. Regular Audits: Conduct periodic accuracy, bias, and fairness audits aligned with whichever regulations apply to the data involved, such as GDPR or HIPAA.

4. Stakeholder Engagement: Engage end-users, domain experts, and ethicists in the review process.

5. Training and Awareness: Educate teams about AI limitations, bias risks, and verification procedures.