Managing and interpreting the vast volumes of log data generated in modern IT environments is a core challenge in cybersecurity monitoring and incident response. Traditional log parsing methods often rely on fixed rules and regex patterns, which can be brittle and inefficient when faced with diverse log formats and evolving event types.

Large Language Models (LLMs), an advanced class of natural language processing models, have emerged as powerful tools for automating and enhancing log parsing. Because they model the semantic context and natural language patterns in logs, LLMs can extract and normalize relevant information more accurately than fixed rules alone.

LLMs also support alert reduction: by correlating and summarizing log events, they cut noise and alert fatigue, which improves security analyst efficiency and response times.

LLM-Enhanced Log Parsing: Understanding and Structuring Log Data

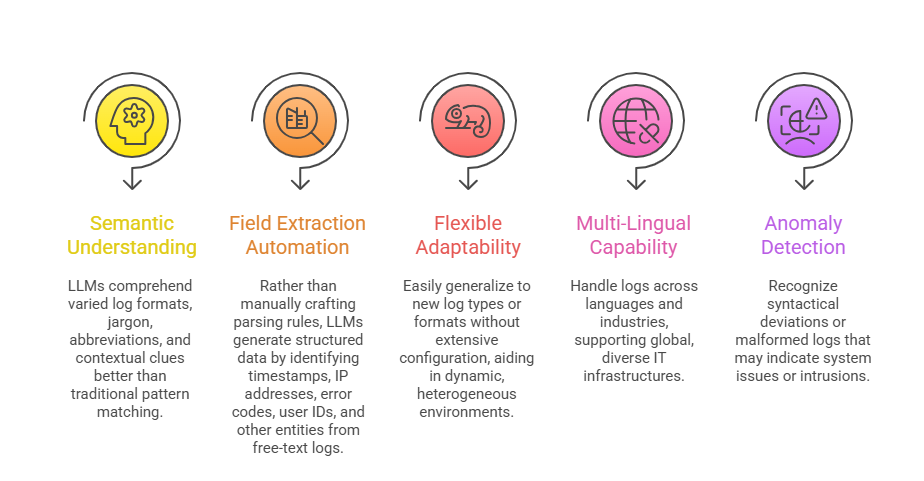

Log parsing entails extracting key fields and events from raw text log entries produced by systems, applications, and devices. LLMs improve this through:

This capability enables comprehensive and accurate log normalization to feed higher-level analytics effectively.
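
As a rough illustration of LLM-based field extraction, the sketch below builds a prompt, passes it to a model, and validates the reply before accepting it. Everything named here is an assumption for illustration: the target fields in `REQUIRED_FIELDS`, the prompt wording, and the `complete` callable, which stands in for whatever model client is actually used. It is not a specific vendor API.

```python
import json
from typing import Callable, Optional

# Hypothetical target schema; these field names are assumptions.
REQUIRED_FIELDS = {"timestamp", "host", "event_type", "message"}

PROMPT_TEMPLATE = (
    "Extract the following fields from the log line and reply with a single "
    "JSON object containing exactly these keys: {fields}.\n"
    "Use null for any field that is not present.\n"
    "Log line: {line}"
)


def build_prompt(line: str) -> str:
    """Fill the prompt template with the field list and the raw log line."""
    return PROMPT_TEMPLATE.format(
        fields=", ".join(sorted(REQUIRED_FIELDS)), line=line
    )


def parse_log_line(line: str, complete: Callable[[str], str]) -> Optional[dict]:
    """Ask the model for structured fields; return None if the reply is unusable.

    `complete` is a placeholder: any function that takes a prompt string
    and returns the model's text reply.
    """
    reply = complete(build_prompt(line))
    try:
        record = json.loads(reply)
    except json.JSONDecodeError:
        return None  # model did not return valid JSON
    if not isinstance(record, dict) or set(record) != REQUIRED_FIELDS:
        return None  # missing or unexpected keys
    return record
```

Rejecting replies that are not valid JSON or that do not match the expected keys keeps malformed model output from reaching downstream analytics; rejected lines can fall back to an existing rule-based parser.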

Reducing Alert Overload through LLM Summarization and Correlation

Security teams often contend with overwhelming volumes of alerts generated by log monitoring tools, many of which are duplicates, low priority, or false positives. LLMs assist in alert reduction by:

1. Clustering and Correlation: Grouping related log events and alerts into coherent incident clusters, recognizing patterns across time and sources.

2. Contextual Summarization: Automatically generating concise, human-readable summaries of correlated alerts, reducing information overload.

3. Priority Assignment: Using semantic insights combined with threat intelligence to score and prioritize alerts based on severity and relevance.

4. Redundancy Reduction: Filtering out repetitive or low-risk alerts so that analysts can focus on actionable threats.

5. Feedback Loop: Learning from analyst behavior and past investigations to refine alert handling and improve future reduction.

Such automation streamlines Security Operations Center (SOC) workflows and accelerates incident response.
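
The clustering, prioritization, and summarization steps above can be sketched as follows. This is a simplified stand-in, not an LLM-based correlator: it groups alerts by a plain rule (same host and rule name within a time window), ranks clusters by peak severity, and only then builds a prompt that a model could summarize. The `Alert` fields, the 1 to 5 severity scale, the 300-second window, and the prompt wording are all assumptions for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Alert:
    timestamp: float  # seconds since epoch
    host: str
    rule: str
    severity: int     # assumed scale: 1 (low) to 5 (critical)


def cluster_alerts(alerts: List[Alert], window: float = 300.0) -> List[List[Alert]]:
    """Group alerts with the same host and rule when each one arrives
    within `window` seconds of the previous alert in the group."""
    by_key: Dict[Tuple[str, str], List[Alert]] = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        by_key[(alert.host, alert.rule)].append(alert)

    clusters: List[List[Alert]] = []
    for group in by_key.values():
        current = [group[0]]
        for alert in group[1:]:
            if alert.timestamp - current[-1].timestamp <= window:
                current.append(alert)
            else:
                clusters.append(current)
                current = [alert]
        clusters.append(current)
    return clusters


def prioritize(clusters: List[List[Alert]], min_severity: int = 2) -> List[List[Alert]]:
    """Drop clusters whose peak severity is below the threshold, then
    order the rest by peak severity and size, highest first."""
    kept = [c for c in clusters if max(a.severity for a in c) >= min_severity]
    return sorted(
        kept,
        key=lambda c: (max(a.severity for a in c), len(c)),
        reverse=True,
    )


def summary_prompt(cluster: List[Alert]) -> str:
    """Build a prompt asking a model to summarize one cluster."""
    first, last = cluster[0], cluster[-1]
    peak = max(a.severity for a in cluster)
    return (
        f"Summarize for a SOC analyst: {len(cluster)} alerts of rule "
        f"'{first.rule}' on host '{first.host}' between t={first.timestamp} "
        f"and t={last.timestamp}, peak severity {peak}."
    )
```

Doing the cheap grouping and filtering before any model call means the LLM sees one prompt per cluster rather than one per raw alert, which also limits inference cost.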

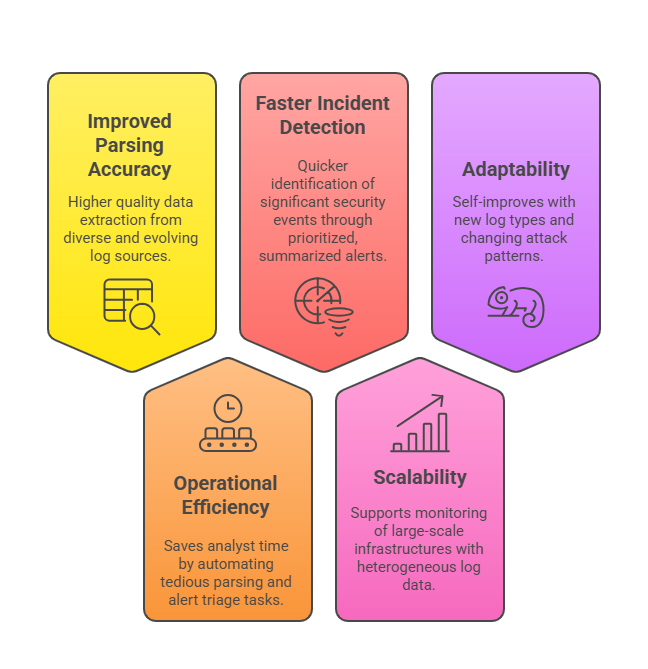

Benefits of LLMs in Log Parsing and Alert Reduction

The list below highlights how LLM-driven log parsing boosts operational performance and alert quality. These advantages make security monitoring more scalable, responsive, and effective.

Challenges and Considerations

Outlined here are the primary hurdles in integrating LLMs into existing monitoring ecosystems. Addressing them helps ensure accuracy, compatibility, and continuous improvement.

1. Computational Resources: Large models require significant processing power and optimization.

2. Model Explainability: Analysts need clear reasoning behind alert prioritization and summarization.

3. Data Privacy: Logs may contain sensitive information requiring secure processing and compliance.

4. Integration Complexity: Seamless deployment with existing SIEM and SOAR platforms requires careful engineering.

5. Continuous Learning: Models must be periodically retrained to keep pace with evolving log formats and threat landscapes.
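
For the data privacy point above, one common mitigation is to mask obvious identifiers before a log line leaves the local environment. The sketch below is a minimal example that assumes only IPv4 addresses and email addresses need masking; the patterns and the `<IP>` and `<EMAIL>` placeholders are illustrative and would need extending for usernames, tokens, and other sensitive fields in a real deployment.

```python
import re

# Simple illustrative patterns; they do not validate octet ranges or
# cover every legal email form.
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def redact(line: str) -> str:
    """Replace email addresses and IPv4 addresses with fixed placeholders."""
    line = EMAIL.sub("<EMAIL>", line)
    line = IPV4.sub("<IP>", line)
    return line


print(redact("Failed login for alice@example.com from 10.0.0.5 port 22"))
# prints: Failed login for <EMAIL> from <IP> port 22
```

Fixed placeholders preserve the structure of the line, so a model can still recognize the event type, but they also discard the ability to correlate by address. Where correlation matters, a consistent pseudonym per value is an alternative design.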