Artificial Intelligence (AI) tools have become integral to modern cybersecurity, enhancing capabilities in threat detection, analysis, and response automation. However, their deployment comes with inherent limitations, risks, and ethical boundaries that organizations and professionals must carefully manage.

While AI can process large data sets faster than human analysts and identify patterns they would miss, it is not infallible and can introduce new vulnerabilities, biases, and ethical dilemmas. Understanding these challenges is essential to harnessing AI responsibly for ethical hacking and cyber defense without compromising privacy, fairness, or security.

Limitations of AI Tools in Cybersecurity

AI systems rely heavily on data quality and model design, which naturally constrains their effectiveness. Key limitations include:

1. Data Dependency: AI models require vast amounts of high-quality and representative data to learn effectively. Poor, incomplete, or biased data results in inaccurate or unfair decisions.

2. False Positives and Negatives: Imperfect detection models can flag benign activity as malicious (false positives) or miss malicious activity entirely (false negatives). False positives overwhelm security teams and cause alert fatigue, while false negatives leave breaches undetected.

3. Adversarial Vulnerabilities: Attackers can craft inputs designed to deceive a model, known as adversarial attacks, manipulating its outputs to circumvent security controls.

4. Computational Costs: Real-time AI threat detection and continuous model retraining demand significant processing power, increasing operational expenses and infrastructure complexity.

5. Opaque Decision-Making: Many AI models act as “black boxes,” lacking transparency in their internal reasoning, thereby complicating trust, auditing, and regulatory compliance.
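The alert-fatigue point in item 2 can be made concrete with a small calculation. The sketch below is illustrative only: the class name, function names, and traffic counts are hypothetical and not taken from any real deployment. It assumes a detector that catches 95% of attacks with a 1% false positive rate, run against a day of traffic in which only 100 of 1,000,000 events are malicious.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Outcome counts for a binary detector (hypothetical container)."""
    true_positives: int
    false_positives: int
    true_negatives: int
    false_negatives: int


def precision(c: ConfusionCounts) -> float:
    """Share of raised alerts that were actually malicious."""
    flagged = c.true_positives + c.false_positives
    return c.true_positives / flagged if flagged else 0.0


def recall(c: ConfusionCounts) -> float:
    """Share of malicious events that the detector caught."""
    malicious = c.true_positives + c.false_negatives
    return c.true_positives / malicious if malicious else 0.0


def false_positive_rate(c: ConfusionCounts) -> float:
    """Share of benign events that were wrongly flagged."""
    benign = c.false_positives + c.true_negatives
    return c.false_positives / benign if benign else 0.0


# Assumed numbers: 100 malicious and 999,900 benign events in one day.
counts = ConfusionCounts(
    true_positives=95,       # attacks caught
    false_negatives=5,       # attacks missed
    false_positives=9_999,   # benign events flagged (1% of 999,900)
    true_negatives=989_901,  # benign events correctly ignored
)

if __name__ == "__main__":
    print(f"recall:              {recall(counts):.2%}")
    print(f"false positive rate: {false_positive_rate(counts):.2%}")
    print(f"precision:           {precision(counts):.2%}")
```

Under these assumed numbers, fewer than one alert in a hundred corresponds to a real attack, even though the detector's recall and false positive rate look strong in isolation. This is why the rarity of malicious events matters as much as the model's headline accuracy.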

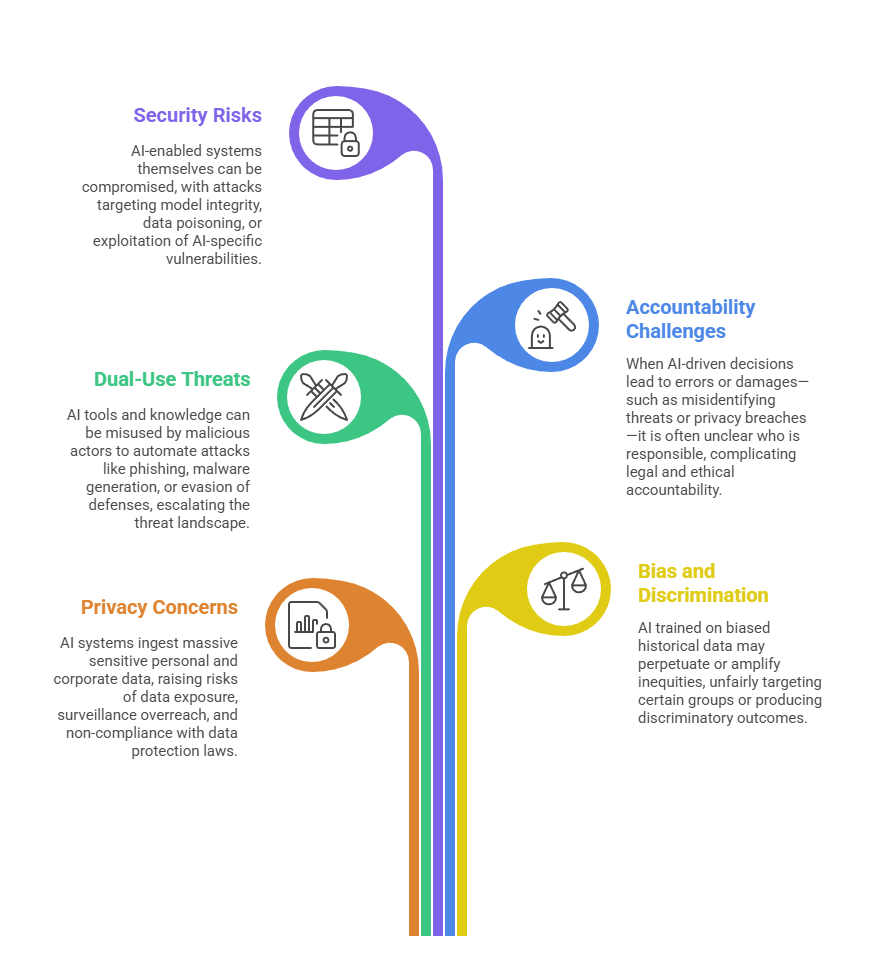

Primary Risks Associated with AI Tools

Deploying AI in cybersecurity also introduces risks that go beyond the technical limitations described above.

Ethical Boundaries and Responsible Use

Ethical guardrails are critical in AI cybersecurity applications to foster trust, fairness, and compliance:

1. Transparency: AI decision processes should be explainable to allow audits, build user trust, and meet regulatory requirements. Explainable AI (XAI) techniques help mitigate “black box” concerns.

2. Fairness and Bias Mitigation: Diverse training data and ongoing bias audits help detect and reduce discriminatory outcomes. Ethical frameworks guide equitable AI model development and deployment.

3. Privacy Protection: Strict data governance, anonymization, and adherence to privacy regulations (e.g., GDPR, HIPAA) help ensure that data subjects' rights and confidentiality are respected.

4. Human Oversight: AI tools should augment, not replace, human judgment. Security experts must retain control for critical decisions and ethical evaluations.

5. Usage Policies and Access Controls: Clear definitions of permissible AI usage and robust access restrictions prevent misuse and unauthorized applications, especially given AI’s dual-use nature.

6. Continuous Monitoring and Updates: AI systems require ongoing evaluation and updates to respond to emerging threats, minimize risks, and address ethical concerns as they arise.
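One way to picture the human oversight principle in item 4 is a routing rule that decides which model verdicts may trigger an automated response and which must go to an analyst. The following is a minimal sketch under stated assumptions: the `Alert` fields, the action names, and the thresholds are all hypothetical, and a real policy would be set by the organization's own risk appetite.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    LOG_ONLY = "log_only"              # record the event, take no action
    ANALYST_REVIEW = "analyst_review"  # a human decides
    AUTO_RESPOND = "auto_respond"      # automation may act on its own


@dataclass(frozen=True)
class Alert:
    alert_id: str
    score: float          # model's estimated probability of malice, 0.0 to 1.0
    proposed_action: str  # response the tooling would like to take


# Assumption: actions that disrupt users or systems always need human sign-off.
HIGH_IMPACT_ACTIONS = frozenset({"isolate_host", "disable_account"})


def route_alert(alert: Alert, low: float = 0.2, high: float = 0.9) -> Route:
    """Decide who handles an alert; thresholds are illustrative, not tuned."""
    if not 0.0 <= alert.score <= 1.0:
        raise ValueError(f"score out of range: {alert.score}")
    if alert.score < low:
        return Route.LOG_ONLY
    if alert.proposed_action in HIGH_IMPACT_ACTIONS:
        # Critical decisions stay with a human, however confident the model is.
        return Route.ANALYST_REVIEW
    if alert.score >= high:
        return Route.AUTO_RESPOND
    return Route.ANALYST_REVIEW


if __name__ == "__main__":
    for alert in (
        Alert("a1", 0.95, "isolate_host"),
        Alert("a2", 0.95, "quarantine_email"),
        Alert("a3", 0.50, "quarantine_email"),
    ):
        print(alert.alert_id, route_alert(alert).value)
```

The design choice here is that model confidence alone never authorizes a high-impact action; automation is limited to low-impact responses, and everything uncertain defaults to analyst review.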