Threat modeling is an essential step in cybersecurity for identifying, understanding, and prioritizing potential attack vectors and adversary behaviors to design effective defenses. The MITRE ATT&CK framework, a globally recognized knowledge base of adversary tactics, techniques, and procedures (TTPs), provides a structured way to analyze and communicate threats.

AI-based threat modeling uses techniques such as natural language processing and machine learning to map observed or potential TTPs to the MITRE ATT&CK framework automatically, with the goal of making the mapping faster, more accurate, and more complete than manual analysis alone.

This approach empowers security teams to gain deeper insights into attack patterns, predict adversary actions, and implement proactive mitigations aligned with realistic threat scenarios.

Understanding Threat Modeling and MITRE ATT&CK

Threat modeling is a systematic process to identify system vulnerabilities, determine the ways adversaries could exploit them, and evaluate the risks involved. The MITRE ATT&CK framework organizes adversary behaviors into categories:

Tactics: High-level adversary goals such as initial access, persistence, privilege escalation, and defense evasion.

Techniques: Specific methods used to achieve these goals (e.g., spearphishing, credential dumping, process injection).

Procedures: Detailed, often context-specific implementations of techniques.

Mapping observed behaviors to ATT&CK enables standardized threat intelligence sharing and targeted defense strategies.
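The relationship between tactics, techniques, and procedures can be sketched as a small data model. This is only an illustration: the `Technique` and `Observation` classes and the `group_by_tactic` helper are hypothetical names, and the catalogue holds a hand-picked subset of ATT&CK Enterprise techniques rather than the full knowledge base.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Technique:
    technique_id: str          # ATT&CK technique ID, e.g. "T1566"
    name: str
    tactics: Tuple[str, ...]   # one technique can serve several tactics


# Hand-picked subset for illustration; not the full ATT&CK catalogue.
TECHNIQUES: Dict[str, Technique] = {
    "T1566": Technique("T1566", "Phishing", ("Initial Access",)),
    "T1003": Technique("T1003", "OS Credential Dumping", ("Credential Access",)),
    "T1055": Technique(
        "T1055", "Process Injection", ("Defense Evasion", "Privilege Escalation")
    ),
}


@dataclass(frozen=True)
class Observation:
    description: str    # the procedure: what was actually seen
    technique_id: str   # the technique it was mapped to


def group_by_tactic(observations: List[Observation]) -> Dict[str, List[str]]:
    """Group observed procedures under every tactic their technique supports."""
    grouped: Dict[str, List[str]] = {}
    for obs in observations:
        technique = TECHNIQUES[obs.technique_id]
        for tactic in technique.tactics:
            grouped.setdefault(tactic, []).append(obs.description)
    return grouped
```

Calling `group_by_tactic` on a list of observations returns a dictionary keyed by tactic name, which is a convenient shape for reporting coverage per tactic. An observation mapped to a multi-tactic technique such as T1055 appears under each of its tactics.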

AI Approaches in Mapping TTPs to MITRE ATT&CK

AI enhances threat modeling by automating the mapping process through:

1. Natural Language Processing (NLP): Extracts TTP indicators from unstructured security data sources such as incident reports, logs, and threat intelligence feeds.

2. Machine Learning Classification: Classifies detected activities or alerts into ATT&CK techniques based on historical labeled data.

3. Pattern Recognition: Identifies sequences or combinations of low-level events that correspond to higher-level ATT&CK tactics.

4. Graph Analysis: Models TTPs as nodes and their relationships as edges, exposing adversary kill chains and potential attack paths.

5. Anomaly Detection: Flags deviations from baseline behavior that may indicate unknown or emerging techniques, which can then be placed under the relevant ATT&CK tactics.
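As a rough illustration of items 1 and 2 above, the sketch below classifies a short free-text description into an ATT&CK technique by comparing its bag-of-words vector with per-technique centroids built from labeled snippets. It is a deliberately simple stand-in for a trained NLP model: the labeled snippets are invented for the example, and `build_centroids`, `classify`, and the `min_score` threshold are hypothetical names and values.

```python
import math
import re
from collections import Counter

# Invented labeled snippets; a real system would train on a large labeled corpus.
LABELED_EXAMPLES = [
    ("T1566", "user opened a malicious email attachment from a spearphishing message"),
    ("T1566", "phishing email with a link to a fake login page"),
    ("T1003", "attacker dumped credentials from lsass memory"),
    ("T1003", "password hashes were extracted from the sam database"),
    ("T1059", "powershell script executed an encoded command"),
    ("T1059", "command shell was used to run a batch script"),
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_centroids(examples):
    """Sum the token counts of all examples that share a technique label."""
    centroids = {}
    for label, text in examples:
        centroids.setdefault(label, Counter()).update(tokenize(text))
    return centroids


def cosine(a, b):
    """Cosine similarity between two sparse token-count vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def classify(text, centroids, min_score=0.1):
    """Return (technique_id, score), or (None, score) if nothing is close enough."""
    vector = Counter(tokenize(text))
    best_score, best_label = max(
        (cosine(vector, centroid), label) for label, centroid in centroids.items()
    )
    if best_score < min_score:
        return None, best_score
    return best_label, best_score


CENTROIDS = build_centroids(LABELED_EXAMPLES)
```

Returning `None` below the threshold, rather than forcing a label, leaves room for the anomaly-detection case in which an event fits no known technique well.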

Used together, these AI techniques can speed up threat modeling and improve the accuracy of the resulting mappings compared with fully manual analysis.
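The pattern-recognition and graph-analysis ideas can be sketched in the same spirit. Assuming alerts have already been mapped to technique IDs, the example below orders them per host and counts transitions between tactics, giving a small weighted graph of observed attack paths. The event tuple layout, the `build_transition_graph` name, and the one-tactic-per-technique simplification are assumptions made for illustration.

```python
from collections import defaultdict

# Simplified: each technique is tied to a single tactic here, although
# ATT&CK allows a technique to belong to several tactics.
TECHNIQUE_TO_TACTIC = {
    "T1566": "Initial Access",
    "T1059": "Execution",
    "T1003": "Credential Access",
    "T1021": "Lateral Movement",
}


def build_transition_graph(events):
    """Count tactic-to-tactic transitions per host.

    events: iterable of (timestamp, host, technique_id) tuples.
    Returns a dict mapping (source_tactic, target_tactic) to a count.
    """
    per_host = defaultdict(list)
    # Sorting the tuples orders events by timestamp first.
    for _timestamp, host, technique_id in sorted(events):
        per_host[host].append(TECHNIQUE_TO_TACTIC[technique_id])

    graph = defaultdict(int)
    for tactics in per_host.values():
        for source, target in zip(tactics, tactics[1:]):
            if source != target:  # ignore repeats within the same tactic
                graph[(source, target)] += 1
    return dict(graph)
```

Edges with high counts point to tactic transitions seen on many hosts, which is one simple way to surface a recurring kill chain.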

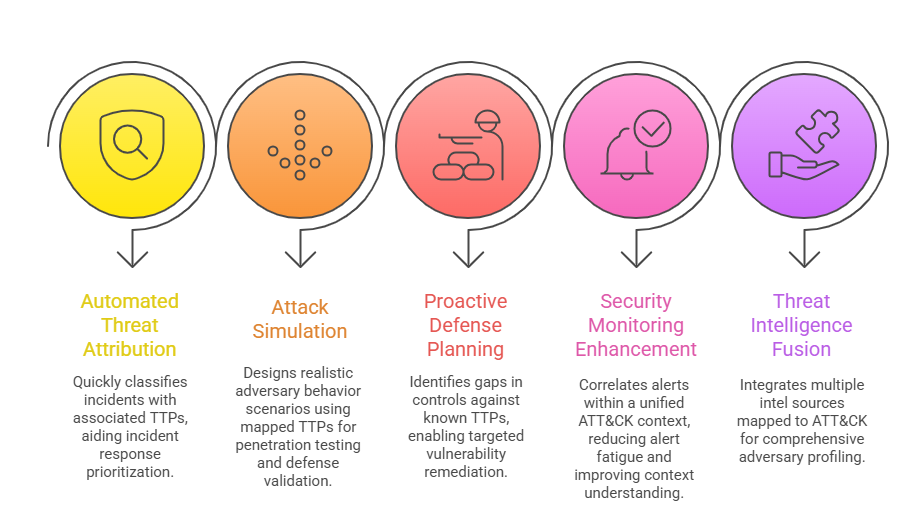

Applications and Benefits

Using structured TTP intelligence allows teams to strengthen monitoring, simulation, and response workflows.

Challenges and Considerations

Despite its advantages, AI-assisted adversary mapping is influenced by evolving threats and integration constraints. The following list highlights core considerations that shape model performance and trustworthiness.

1. Data Quality and Volume: NLP and ML models require high-quality, labeled data; noisy or partial data reduce effectiveness.

2. Evolving Techniques: ATT&CK is continuously updated; AI models must adapt to new techniques and emerging threats.

3. Explainability: Security teams require interpretable AI outputs to trust and act on modeling results.

4. Integration Complexity: Integrating AI-driven mappings into existing SOC workflows and tools demands standardized data formats and APIs.
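Several of these considerations can be addressed in the shape of the mapping output itself. The sketch below builds a JSON-serializable record that carries the evidence behind a mapping (explainability), validates the technique ID format, and flags results for analyst review when the ID is not in the currently loaded catalogue or the confidence is low. The field names, the `make_mapping_record` helper, and the 0.5 review threshold are hypothetical, not a standard schema.

```python
import json
import re

# ATT&CK technique IDs look like T1003, with an optional sub-technique suffix (T1003.001).
TECHNIQUE_ID_PATTERN = re.compile(r"^T\d{4}(\.\d{3})?$")


def make_mapping_record(alert_id, technique_id, confidence, evidence, known_ids):
    """Build a JSON-serializable mapping record for downstream SOC tools."""
    if not TECHNIQUE_ID_PATTERN.match(technique_id):
        raise ValueError(f"not a valid technique ID: {technique_id!r}")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return {
        "alert_id": alert_id,
        "technique_id": technique_id,
        "confidence": round(confidence, 3),
        # Evidence lets an analyst see why the model chose this technique.
        "evidence": list(evidence),
        # Send to a human if the ID is missing from the loaded catalogue
        # (for example after a framework update) or the score is weak.
        "needs_review": technique_id not in known_ids or confidence < 0.5,
    }


def to_json(record):
    """Serialize a record with stable key order for logging or API transport."""
    return json.dumps(record, sort_keys=True)
```

Keeping `known_ids` as an argument means the catalogue can be refreshed when ATT&CK is updated without changing the mapping code.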