Responsible use of AI in cybersecurity and ethical hacking means applying these technologies in ways that are ethical, lawful, and respectful of user rights.

As AI systems become more embedded in security processes, organizations must establish clear guidelines and frameworks to govern their development, deployment, and operation. These guidelines help prevent misuse, mitigate risks associated with bias and privacy, and foster trust among stakeholders.

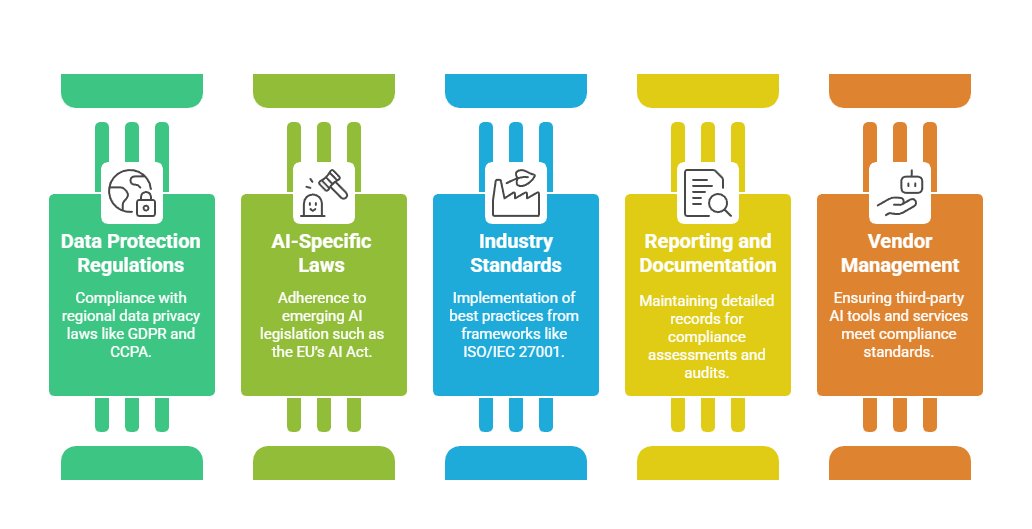

Compliance with legal and regulatory standards forms a critical pillar of responsible AI use, ensuring that organizations align with global and regional policies on data protection, transparency, and accountability.

Guidelines for Responsible AI Usage

Responsible AI usage spans ethical, operational, and technical considerations:

1. Transparency and Explainability

AI systems should be designed to provide clear explanations of their decisions and actions.

Explainable AI (XAI) methods enable stakeholders to understand AI outputs, fostering trust and facilitating audits.

Transparency reduces “black box” risks and supports accountability mechanisms.
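
As a minimal sketch of what an explanation can look like, the example below breaks a linear risk score into per-feature contributions so an analyst can see why an event was flagged. The feature names, weights, and the `explain_score` helper are hypothetical and chosen only for illustration. Non-linear models usually need dedicated attribution methods, which this sketch does not cover.

```python
def explain_score(weights, features):
    """Return (score, contributions) for a linear risk score.

    contributions is a list of (feature, weight * value) pairs,
    sorted by absolute contribution, largest first.
    """
    contributions = [
        (name, weights[name] * features.get(name, 0.0)) for name in weights
    ]
    contributions.sort(key=lambda item: abs(item[1]), reverse=True)
    score = sum(value for _, value in contributions)
    return score, contributions


# Hypothetical weights and a single login event (illustrative values only).
WEIGHTS = {"failed_logins": 0.5, "new_device": 1.5, "off_hours": 0.25}
event = {"failed_logins": 6, "new_device": 1, "off_hours": 0}

score, contributions = explain_score(WEIGHTS, event)
for name, value in contributions:
    print(f"{name}: {value:+.2f}")
print(f"total score: {score:.2f}")
```

Storing the contribution list alongside each alert gives auditors a record of which inputs drove the decision.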

2. Fairness and Bias Mitigation

Implement processes to identify and reduce biases in training data and models.

Use diverse, representative datasets and run regular bias audits to check that all demographic groups are treated equitably.

Avoid discriminatory outcomes that could harm individuals or groups based on race, gender, or other sensitive attributes.
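
One simple check a bias audit might include is comparing false positive rates across groups. The sketch below assumes each record is a hypothetical `(group, actual_malicious, predicted_malicious)` tuple. The group labels and data are invented for illustration, and a real audit would look at more than one metric.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return {group: FPR}, where FPR = false positives / actual negatives."""
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, actual, predicted in records:
        if not actual:  # only benign events can yield false positives
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items() if n > 0}


def fpr_gap(rates):
    """Largest difference in false positive rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: (group, actual_malicious, predicted_malicious)
records = [
    ("region_a", False, True),
    ("region_a", False, False),
    ("region_a", False, False),
    ("region_a", False, False),
    ("region_b", False, True),
    ("region_b", False, True),
    ("region_b", False, False),
    ("region_b", False, False),
    ("region_b", True, True),
]

rates = false_positive_rates(records)
print(rates)           # per-group false positive rates
print(fpr_gap(rates))  # a large gap suggests one group is flagged more often
```

What counts as an acceptable gap is a policy decision for the organization, not something the code can settle.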

3. Privacy and Data Protection

Comply with the data protection regulations that apply to your data and jurisdiction, such as GDPR, HIPAA, and CCPA.

Employ data minimization, anonymization, and secure storage practices.

Obtain informed consent, or establish another valid legal basis, when using personal data for AI training or analysis.

Monitor data usage to prevent unauthorized access or leaks.
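
The following sketch illustrates data minimization combined with keyed pseudonymization before log records are used for training or analysis. The field names, the `ALLOWED_FIELDS` allow-list, and the inline key are assumptions for illustration. Note that keyed hashing is pseudonymization rather than full anonymization: whoever holds the key can re-link identifiers, so the key needs the same protection as the original data.

```python
import hashlib
import hmac

# Hypothetical allow-list: only these fields are needed downstream.
ALLOWED_FIELDS = ("timestamp", "event_type", "user_id")


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed HMAC-SHA256 digest."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, key: bytes) -> dict:
    """Drop fields outside the allow-list and pseudonymize user_id."""
    kept = {k: record[k] for k in ALLOWED_FIELDS if k in record}
    if "user_id" in kept:
        kept["user_id"] = pseudonymize(kept["user_id"], key)
    return kept


# Illustrative only: in practice the key would come from a secrets manager.
key = b"example-key-not-for-production"
raw = {
    "timestamp": "2024-01-01T00:00:00Z",
    "event_type": "login_failed",
    "user_id": "alice",
    "email": "alice@example.com",
    "ip": "203.0.113.7",
}
print(minimize_record(raw, key))
```

Because the digest is deterministic for a given key, events from the same user can still be correlated without exposing the identifier itself.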

4. Human Oversight and Control

Maintain human-in-the-loop control, especially for high-stakes or sensitive decisions.

Empower security professionals to validate AI outputs and override automated actions if necessary.

Promote collaboration between AI systems and human expertise.
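
A human-in-the-loop policy can be expressed as a simple routing rule: high-impact actions and low-confidence recommendations go to an analyst, and only the remainder is automated. The sketch below is one possible shape for such a gate. The action names, the `Recommendation` type, and the 0.95 threshold are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical set of actions that always require analyst approval.
HIGH_IMPACT_ACTIONS = {"isolate_host", "disable_account"}


@dataclass
class Recommendation:
    action: str
    target: str
    confidence: float  # model confidence in the range [0, 1]


def route(rec: Recommendation, auto_threshold: float = 0.95) -> str:
    """Decide whether a recommendation may run without human approval."""
    if rec.action in HIGH_IMPACT_ACTIONS or rec.confidence < auto_threshold:
        return "needs_human_review"
    return "auto_execute"


print(route(Recommendation("isolate_host", "srv-01", 0.99)))
print(route(Recommendation("add_watchlist_entry", "198.51.100.4", 0.97)))
print(route(Recommendation("add_watchlist_entry", "198.51.100.4", 0.60)))
```

The first and third recommendations are routed to review (high impact and low confidence, respectively), while the second is allowed to run automatically.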

5. Security and Robustness

Design AI systems with security in mind so they resist adversarial attacks such as data poisoning and evasion.

Regularly retrain or update AI models, and patch the software around them, to address vulnerabilities.

Test AI systems against a wide range of attack scenarios to verify their resilience.

6. Accountability and Governance

Establish clear roles and responsibilities for AI development and deployment.

Document AI model design, data sources, decision processes, and outcomes.

Maintain audit trails for compliance verification and incident investigations.
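
One way to make an audit trail tamper-evident is to chain entries by hash, so that altering any past entry invalidates every hash after it. The sketch below shows the idea with an in-memory list. The entry layout and the helper names `append_entry` and `verify_chain` are assumptions, and a production system would also need timestamps, durable storage, and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash and confirm the links are intact."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if _digest(entry["event"], prev_hash) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"actor": "model-v1", "action": "flag_alert", "alert_id": 101})
append_entry(audit_log, {"actor": "analyst-7", "action": "override", "alert_id": 101})
print(verify_chain(audit_log))  # intact chain

audit_log[0]["event"]["action"] = "ignore_alert"  # simulate tampering
print(verify_chain(audit_log))  # tampering is detected
```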

7. Ethical Use and Social Responsibility

Align AI applications with ethical principles such as beneficence, non-maleficence, justice, and respect for autonomy.

Avoid deploying AI in ways that cause harm, enable surveillance overreach, or violate human rights.

Foster an organizational culture that prioritizes ethical AI innovation.

Compliance Requirements for AI in Cybersecurity

Navigating the complex landscape of AI compliance requires attention to relevant laws, standards, and frameworks: