In the rapidly evolving landscape of artificial intelligence (AI), deploying models securely is paramount to safeguarding organizational assets, data privacy, and system integrity. As AI models, especially large-scale ones like language models and neural networks, become integral to business operations and services, they also present new attack vectors and security challenges.

Secure model deployment and stringent access control are essential to prevent unauthorized access, model theft, adversarial manipulation, and data breaches. Implementing best practices in these areas helps keep the AI infrastructure resilient against both external threats and internal misuse, while also complying with regulatory and ethical standards.

The Need for Secure Deployment

Models are vulnerable throughout their lifecycle, from development and training through deployment and ongoing management. Because they are often exposed via APIs, hosted on cloud platforms, or embedded within applications, their security posture must be proactively managed. Unsecured deployment environments can lead to unauthorized model access, intellectual property theft, adversarial attacks, or data breaches.

Key Aspects in Secure Deployment

1. Protected Infrastructure: Ensuring that underlying hardware, cloud environments, and networking components are secured with firewalls, VPNs, and segmentation.

2. Secure Development and Testing: Adopting secure coding practices, vulnerability scanning, and penetration testing specific to AI components.

3. Model Hardening Against Attacks: Implementing techniques like adversarial training, model watermarking, and robustness testing before production deployment.

4. Deployment Environment Control: Isolating models within secure enclaves, containers, or dedicated virtual environments to prevent lateral movement and unauthorized access.

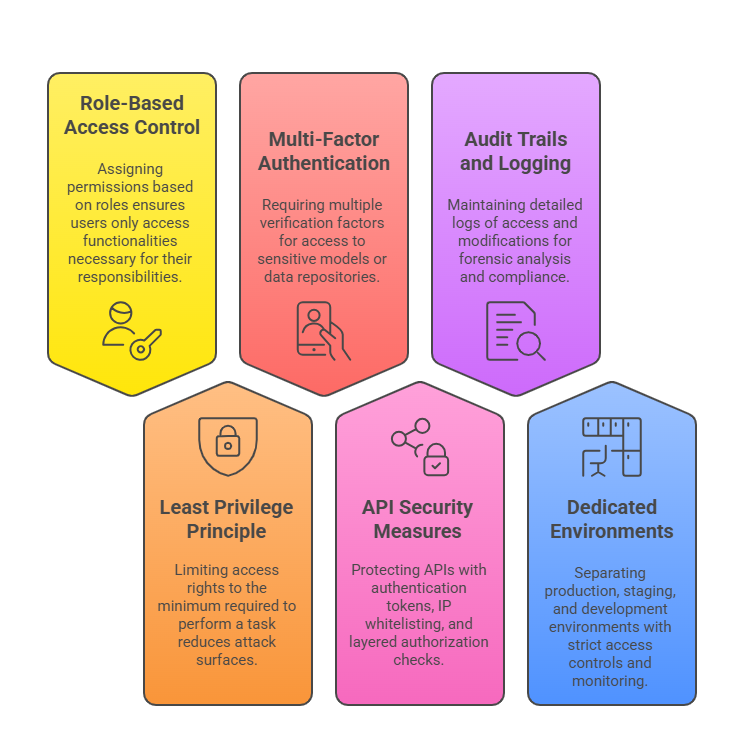

Best Practices in Access Control

Controlling access to AI models is crucial to prevent misuse, leakage, or unauthorized modification. Proper access controls encompass technical measures, operational procedures, and policy enforcement.

Operational Policies for Access Management

Ensuring secure access to systems requires structured operational policies. The following approaches help define roles, enforce limits, and maintain compliance.

1. Establish clear policies outlining authorized user roles, responsibilities, and access limits.

2. Implement periodic review and validation of access permissions.

3. Enforce strict onboarding and offboarding procedures aligned with organizational policies.

4. Provide training on data privacy, security policies, and responsible AI usage.
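The first three policies above can be sketched in code. The following is a minimal illustration, not a production access system: the role names, the permission sets, the 90-day review interval, and the `AccessRegistry` class are all hypothetical. It also assumes one particular design choice, namely that a grant overdue for review is denied until someone revalidates it.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "viewer": {"predict"},
    "ml_engineer": {"predict", "deploy"},
    "admin": {"predict", "deploy", "manage_access"},
}

# Assumed review cadence; choose a value that matches your own policy.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass
class Grant:
    user: str
    role: str
    last_reviewed: date


@dataclass
class AccessRegistry:
    grants: dict = field(default_factory=dict)

    def onboard(self, user, role, today):
        """Grant a known role to a user; the grant counts as reviewed today."""
        if role not in ROLE_PERMISSIONS:
            raise ValueError(f"unknown role: {role}")
        self.grants[user] = Grant(user, role, today)

    def offboard(self, user):
        """Remove all access for a user (no error if already removed)."""
        self.grants.pop(user, None)

    def is_allowed(self, user, action, today):
        """Deny by default; also deny when the grant is overdue for review."""
        grant = self.grants.get(user)
        if grant is None:
            return False
        if today - grant.last_reviewed > REVIEW_INTERVAL:
            return False
        return action in ROLE_PERMISSIONS[grant.role]

    def due_for_review(self, today):
        """List users whose grants have not been reviewed within the interval."""
        return sorted(
            user
            for user, grant in self.grants.items()
            if today - grant.last_reviewed > REVIEW_INTERVAL
        )
```

In this sketch, a caller would check `registry.is_allowed(user, "deploy", date.today())` before performing a deployment, and a scheduled job could report `registry.due_for_review(date.today())` to whoever owns the access review.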

Additional Best Practices for Secure Deployment

Deploying AI safely requires vigilance, continuous monitoring, and adherence to security protocols. Listed here are best practices to safeguard both models and users.

1. Encryption: Encrypt data at rest and in transit to protect sensitive model and user data.

2. Regular Security Audits: Conduct vulnerability assessments and penetration tests specific to AI infrastructure.

3. Monitoring and Anomaly Detection: Use AI and traditional tools to monitor for abnormal access patterns or suspicious behaviors.

4. Patch and Update Management: Keep all hardware, software, and AI frameworks updated against known vulnerabilities.

5. Robust Incident Response Plans: Develop procedures for responding to potential security breaches involving AI models, including protocols for model rollback, data recovery, and forensic analysis.
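As one illustration of the monitoring practice in item 3, the sketch below flags a client whose request count within a sliding time window exceeds a threshold. It is a simple rule-based check, not a full anomaly detection system. The `AccessMonitor` class, the window length, and the request limit are hypothetical, and the code assumes timestamps arrive in non-decreasing order for each client.

```python
from collections import defaultdict, deque


class AccessMonitor:
    """Flags clients that exceed a request limit within a sliding window."""

    def __init__(self, window_seconds=60.0, max_requests=100):
        # Both defaults are placeholder values, not recommendations.
        self.window_seconds = window_seconds
        self.max_requests = max_requests
        self._events = defaultdict(deque)  # client_id -> recent timestamps

    def record(self, client_id, timestamp):
        """Record one request; return True if the client is over the limit."""
        events = self._events[client_id]
        events.append(timestamp)
        # Drop timestamps that have fallen out of the window.
        cutoff = timestamp - self.window_seconds
        while events and events[0] <= cutoff:
            events.popleft()
        return len(events) > self.max_requests
```

A serving layer could call `monitor.record(api_key, time.time())` on each request and raise an alert, throttle, or log the event for review when the result is `True`. Real deployments would likely combine a check like this with other signals, such as unusual query content or access from unexpected locations.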
