As Artificial Intelligence (AI) increasingly automates critical business and operational decisions, building ethical and explainable AI automations becomes imperative.

Ethical AI ensures that automation systems act fairly, transparently, and responsibly, minimizing harm and respecting human values. Explainability, a fundamental aspect of ethical AI, is the ability to open up the "black box" of complex AI algorithms so that stakeholders can understand how decisions are made.

Understanding Ethical AI Automations

Ethical AI automations align AI decision-making with human values, fairness, and societal norms. Key facets include:

1. Fairness and Bias Mitigation: AI systems must avoid perpetuating or amplifying biases that lead to unfair treatment based on race, gender, or other protected attributes.

2. Accountability: Clear assignment of responsibility for AI-driven decisions and actions is vital to maintain governance and legal compliance.

3. Transparency: Stakeholders should be informed about how and why AI systems make specific decisions.

4. Privacy Preservation: Ethical AI respects data privacy, minimizing data collection and ensuring secure, purpose-limited use.

5. Human-Centric Design: Systems should augment rather than replace human judgment, enabling human oversight and intervention.

Frameworks and regulations such as IEEE’s Ethically Aligned Design and the EU AI Act guide organizations toward responsible AI automation.

Explainability in AI: Making Decisions Understandable

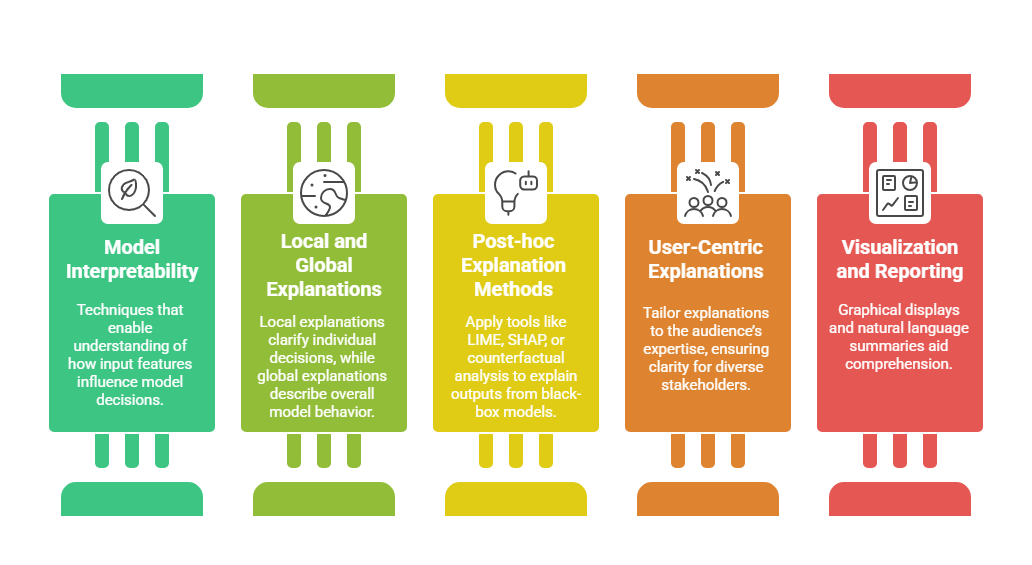

Explainable AI (XAI) demystifies complex AI models by providing insight into their internal processes and outputs.

Explainability fosters trust, supports validation, and aids debugging and compliance.

Best Practices for Building Ethical, Explainable AI Automations

Creating AI that users can trust relies on embedding ethics and interpretability from the start. Below is a set of practices designed to support safe and explainable automation.

1. Bias Detection and Mitigation

Use diverse, representative training datasets and fairness metrics to identify and reduce bias.

Conduct regular bias audits and engage diverse stakeholder perspectives.
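As an illustration of what a fairness metric can look like in practice, the sketch below computes per-group selection rates and the demographic parity difference (the largest gap in positive-prediction rate between groups). This is only one of several fairness metrics, and the function names, group labels, and sample data are hypothetical, chosen for this example.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the fraction of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: 1 = approved, 0 = denied.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(preds, groups)
gap = demographic_parity_difference(preds, groups)
print(f"selection rates: {rates}, parity gap: {gap:.2f}")
```

A gap near zero suggests similar treatment across groups on this one metric; what counts as an acceptable gap is a policy decision that a bias audit would need to set.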

2. Transparency by Design

Document AI model development, data provenance, and decision logic.

Employ XAI tools early in the design phase to ensure interpretability.
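To make the idea of an explanation concrete, here is a minimal sketch of one simple technique: for a linear scoring model, each feature's contribution can be reported as its weight times the feature's deviation from a baseline input. The model, feature names, weights, and baseline values are all assumptions made up for this example; real XAI tooling handles far more complex models.

```python
def explain_linear(weights, bias, features, baseline):
    """Attribute a linear model's score to features, relative to a baseline."""
    contributions = {
        name: weights[name] * (features[name] - baseline[name])
        for name in weights
    }
    # Score the baseline input, then add the per-feature contributions.
    base_score = bias + sum(weights[n] * baseline[n] for n in weights)
    score = base_score + sum(contributions.values())
    return score, base_score, contributions


# Hypothetical credit-scoring model and applicant.
weights = {"income": 0.5, "debt_ratio": -2.0, "years_employed": 0.25}
baseline = {"income": 4.0, "debt_ratio": 0.5, "years_employed": 4.0}
applicant = {"income": 6.0, "debt_ratio": 0.75, "years_employed": 3.0}

score, base_score, contribs = explain_linear(weights, 0.0, applicant, baseline)

print(f"baseline score {base_score}, applicant score {score}")
# List features from most to least influential.
for name, value in sorted(contribs.items(), key=lambda kv: -abs(kv[1])):
    print(f"  {name}: {value:+.2f}")
```

Because the contributions sum exactly to the difference between the applicant's score and the baseline score, a reviewer can see which inputs moved the decision and by how much.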

3. Human-in-the-Loop (HITL)

Integrate human review for critical decisions and exception cases.

Enable override capabilities so that automated errors can be caught before they adversely affect users.
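One way to picture human review and override is a routing rule that sends low-confidence or high-impact cases to a person. The sketch below assumes a hypothetical confidence threshold of 0.9 and a `reviewer` callback standing in for a real review queue; neither comes from a specific product.

```python
from dataclasses import dataclass
from typing import Callable

REVIEW_THRESHOLD = 0.9  # assumed cut-off; tune per use case


@dataclass
class Decision:
    outcome: str
    confidence: float
    decided_by: str = "model"


def needs_review(confidence: float, high_impact: bool) -> bool:
    """Route to a human when the case is high impact or the model is unsure."""
    return high_impact or confidence < REVIEW_THRESHOLD


def decide(model_outcome: str, confidence: float, high_impact: bool,
           reviewer: Callable[[str, float], str]) -> Decision:
    """Return the model's decision, or the reviewer's if review is required."""
    if needs_review(confidence, high_impact):
        # The reviewer may confirm or override the model's suggestion.
        human_outcome = reviewer(model_outcome, confidence)
        return Decision(human_outcome, confidence, decided_by="human")
    return Decision(model_outcome, confidence)


def demo_reviewer(suggested: str, confidence: float) -> str:
    """Stand-in for a human reviewer who approves every case they see."""
    return "approve"


overridden = decide("deny", 0.62, high_impact=False, reviewer=demo_reviewer)
automated = decide("approve", 0.97, high_impact=False, reviewer=demo_reviewer)
print(overridden)
print(automated)
```

Recording `decided_by` alongside the outcome keeps an audit trail of which decisions were automated and which were reviewed.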

4. Privacy and Security Safeguards

Implement data minimization, encryption, and anonymization techniques.

Comply with data protection regulations and ethical data sourcing.
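The following sketch shows data minimization and pseudonymization using only the Python standard library: fields outside an allow-list are dropped, and the direct identifier is replaced by a keyed hash. The field names and the key are hypothetical, and keyed hashing is pseudonymization rather than full anonymization, since anyone holding the key can re-link records.

```python
import hashlib
import hmac

# Assumed allow-list: only the fields the model actually needs.
ALLOWED_FIELDS = {"age_band", "region", "account_tenure_months"}


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, identifier.encode("utf-8"),
                    hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and swap the email for a pseudonym."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    reduced["subject_id"] = pseudonymize(record["email"], secret_key)
    return reduced


# Hypothetical record and key; in practice the key lives in a secrets manager.
key = b"example-key-do-not-use-in-production"
raw = {
    "email": "user@example.com",
    "full_name": "Example User",
    "age_band": "30-39",
    "region": "EU",
    "account_tenure_months": 18,
}

safe = minimize(raw, key)
print(safe)
```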

5. Continuous Monitoring and Feedback

Monitor AI outputs for fairness, accuracy, and unintended consequences.

Incorporate user feedback to improve system behavior and explanations.
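Continuous monitoring can start as simply as tracking a rolling accuracy over recent decisions and flagging when it falls below a floor. The sketch below assumes ground-truth outcomes eventually become available; the class name, window size, and threshold are illustrative choices, and the same pattern could be run per group to watch fairness as well.

```python
from collections import deque


class OutcomeMonitor:
    """Track accuracy over a sliding window of recent decisions."""

    def __init__(self, window=100, min_accuracy=0.8):
        # deque with maxlen discards the oldest result automatically.
        self.results = deque(maxlen=window)
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual):
        """Store whether the latest prediction matched the real outcome."""
        self.results.append(predicted == actual)

    def accuracy(self):
        """Return windowed accuracy, or None if nothing is recorded yet."""
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def alert(self):
        """True when windowed accuracy has dropped below the floor."""
        acc = self.accuracy()
        return acc is not None and acc < self.min_accuracy


monitor = OutcomeMonitor(window=4, min_accuracy=0.75)
for _ in range(4):
    monitor.record("approve", "approve")  # four correct predictions
healthy = monitor.alert()

monitor.record("approve", "deny")  # two misses push out two older hits
monitor.record("deny", "approve")
degraded = monitor.alert()
print(f"alert before drift: {healthy}, after drift: {degraded}")
```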

6. Ethical Governance Structures

Establish cross-functional AI ethics committees to guide development and deployment.

Define clear accountability and escalation paths for AI-related issues.

Challenges and Considerations

Organizations face both conceptual and operational barriers when integrating XAI into workflows. The points below outline the key issues that must be managed.

1. Trade-off Between Explainability and Performance: Some highly accurate AI models (e.g., deep neural networks) are less interpretable; balancing transparency with predictive power is crucial.

2. Complexity of Ethical Judgments: Ethical principles may conflict or be context-dependent, requiring nuanced application.

3. Evolving Standards and Regulations: AI ethics frameworks and compliance requirements are rapidly developing and vary globally.

4. User Diversity: Different stakeholders need different explanation types, demanding adaptable XAI methods.