With the proliferation of cloud-native applications, microservices, and digital integration, Application Programming Interfaces (APIs) have become pivotal in enabling communication and data exchange between software systems.

While APIs enhance flexibility and agility, they also introduce unique security challenges, including misconfigurations that can lead to unauthorized access, data breaches, and service disruptions.

Traditional API security reviews, which are often manual and static, may fail to keep pace with the scale and complexity of diverse API environments. Artificial intelligence (AI) can automate much of API security review and misconfiguration detection.

AI-powered tools analyze API usage patterns, configurations, and traffic in real time to identify vulnerabilities, misconfigurations, and suspicious behavior, enabling organizations to fortify their API ecosystems proactively.

Understanding API Security Challenges and Misconfigurations

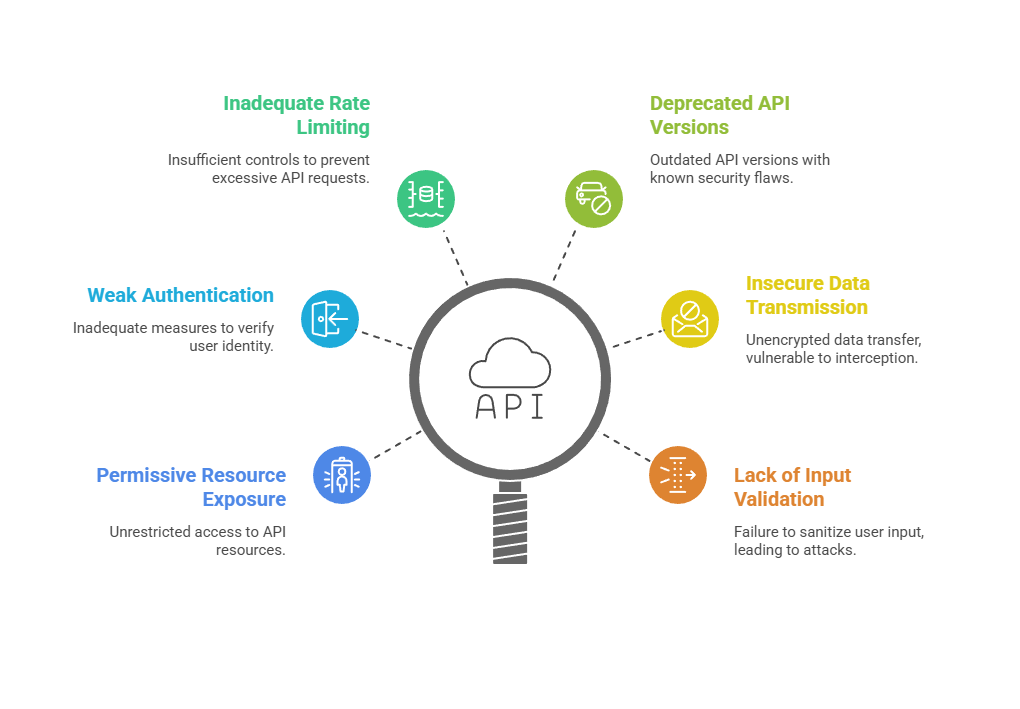

APIs enable seamless integration but can become security weak points if not properly designed, configured, or monitored. Common API misconfigurations include:

These weaknesses create opportunities for attackers to exploit APIs for data exfiltration, privilege escalation, and denial of service.


AI-Powered API Security Review

AI automates repetitive and complex aspects of API security review by employing several techniques:

1. Static API Specification Analysis: AI parses API descriptions (e.g., OpenAPI/Swagger documents) to verify adherence to security standards and detect configuration anomalies.

2. Machine Learning for Behavioral Profiling: AI models learn normal API usage patterns, detecting deviations indicative of abuse or misconfigurations.

3. Natural Language Processing (NLP): Analyzes API documentation, comments, and change logs to identify potential security gaps or unclear definitions.

4. Automated Vulnerability Detection: Identifies known vulnerability patterns like improper authorization checks or data exposure.

5. Continuous Monitoring and Alerting: Analyzes API traffic logs and metadata in real time to detect anomalies and the effects of misconfigurations.

These capabilities enable continuous, scalable, and detailed API security assessments beyond manual capacities.
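As a rough illustration of the static specification analysis described above, the sketch below scans an OpenAPI 3 document (already loaded into a Python dictionary) for two common problems: operations with no security requirement and servers exposed over plain HTTP. It is a simple rule-based check standing in for the AI-driven analysis, not a description of any particular product. The function name `find_spec_issues` and the example specification are hypothetical.

```python
from typing import Any

# HTTP methods that can appear as operation keys under an OpenAPI path item.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "options", "head", "trace"}


def find_spec_issues(spec: dict[str, Any]) -> list[str]:
    """Return human-readable findings for an OpenAPI 3 spec (hypothetical helper)."""
    issues: list[str] = []

    # Flag servers that are reachable over unencrypted HTTP.
    for server in spec.get("servers", []):
        url = server.get("url", "")
        if url.startswith("http://"):
            issues.append(f"server {url} uses plain HTTP")

    # In OpenAPI 3, an operation-level "security" overrides the top-level one.
    global_security = spec.get("security", [])
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters"
            security = operation.get("security", global_security)
            # An empty list disables auth; an empty object allows anonymous access.
            if not security or any(req == {} for req in security):
                issues.append(f"{method.upper()} {path} has no security requirement")
    return issues


# Hypothetical example specification, for illustration only.
example_spec = {
    "openapi": "3.0.3",
    "servers": [{"url": "http://api.example.com"}],
    "security": [{"bearerAuth": []}],
    "paths": {
        "/users": {
            "get": {"responses": {"200": {"description": "ok"}}},
            "delete": {"security": [], "responses": {"204": {"description": "deleted"}}},
        }
    },
}

issues = find_spec_issues(example_spec)
for issue in issues:
    print(issue)
```

In this example the GET operation inherits the global bearer requirement and is not flagged, while the DELETE operation explicitly clears it and is. A real review would cover many more rules and would typically combine such checks with the learned models described in the list.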

AI Techniques for Misconfiguration Identification

Advanced AI techniques analyze behavior, configuration changes, and endpoint relationships to maintain secure API environments. Here’s an overview of the main techniques employed for detecting API misconfigurations.

1. Anomaly Detection Models: Spot unusual API behavior such as unexpected endpoint access, incorrect HTTP methods, or irregular rate patterns.

2. Configuration Drift Detection: AI identifies deviations from baseline secure configurations over time, signaling potential misconfigurations introduced in updates.

3. Correlation with Threat Intelligence: Matches detected anomalies with known threats or attacker patterns targeting APIs.

4. Risk Scoring and Prioritization: Quantifies potential impact of detected misconfigurations to prioritize remediation efforts.

5. Graph-Based Analysis: Models API endpoint relationships and data flows, highlighting risky connection points and excessive privileges.
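Two of the techniques above, anomaly detection and configuration drift detection, can be sketched in a few lines. The example below is a minimal statistical stand-in rather than a trained model: it flags clients whose request rate is a robust outlier (using the median absolute deviation), and it reports settings that differ from a known-good baseline. The function names, the 3.5 threshold, and all sample data are assumptions made for illustration.

```python
from statistics import median


def rate_outliers(requests_per_minute: dict[str, float], threshold: float = 3.5) -> list[str]:
    """Flag clients whose request rate is a robust outlier (modified z-score)."""
    values = list(requests_per_minute.values())
    med = median(values)
    # Median absolute deviation: a spread measure that outliers barely affect.
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no measurable spread, so nothing can be ranked as unusual
    return [
        client
        for client, value in requests_per_minute.items()
        if 0.6745 * abs(value - med) / mad > threshold
    ]


def config_drift(baseline: dict, current: dict) -> dict[str, tuple]:
    """Return {setting: (baseline_value, current_value)} for every changed setting.

    A missing key is treated the same as a value of None in this sketch.
    """
    drift = {}
    for key in sorted(baseline.keys() | current.keys()):
        old, new = baseline.get(key), current.get(key)
        if old != new:
            drift[key] = (old, new)
    return drift


# Hypothetical traffic sample: requests per minute by client.
rates = {"client-a": 40, "client-b": 45, "client-c": 38, "client-d": 42, "client-e": 900}
outliers = rate_outliers(rates)

# Hypothetical gateway settings before and after a deployment.
baseline = {
    "auth_required": True,
    "rate_limit_per_min": 100,
    "cors_allow_origin": "https://app.example.com",
}
current = {
    "auth_required": True,
    "rate_limit_per_min": None,
    "cors_allow_origin": "*",
}
drift = config_drift(baseline, current)

print(outliers)
print(drift)
```

The median-based score is used here because a single abusive client would inflate a mean and standard deviation enough to hide itself. In practice the drift report would feed a risk score so that, for example, a removed rate limit or a wildcard CORS origin is prioritized over cosmetic changes.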

Benefits of AI in API Security Review and Misconfiguration Detection

By combining anomaly detection, scalability, and DevSecOps integration, AI enhances both preventive and corrective API security measures. The following points highlight the main benefits of AI in API misconfiguration detection.

1. Improved Accuracy: Detects complex misconfigurations and subtle security gaps often missed by manual reviews.

2. Scalability: Handles large volumes of APIs across dynamic environments with automated continuous assessment.

3. Real-Time Threat Detection: Identifies suspicious behavior early, so teams can contain attacks before their impact grows.

4. Reduced Operational Overhead: Frees security teams from manual audits so they can focus on critical remediation.

5. Comprehensive Visibility: Provides insights into API usage, security posture, and configuration health.

6. Integration with DevSecOps: Embeds security checks early in development pipelines to catch misconfigurations pre-deployment.

Challenges and Considerations

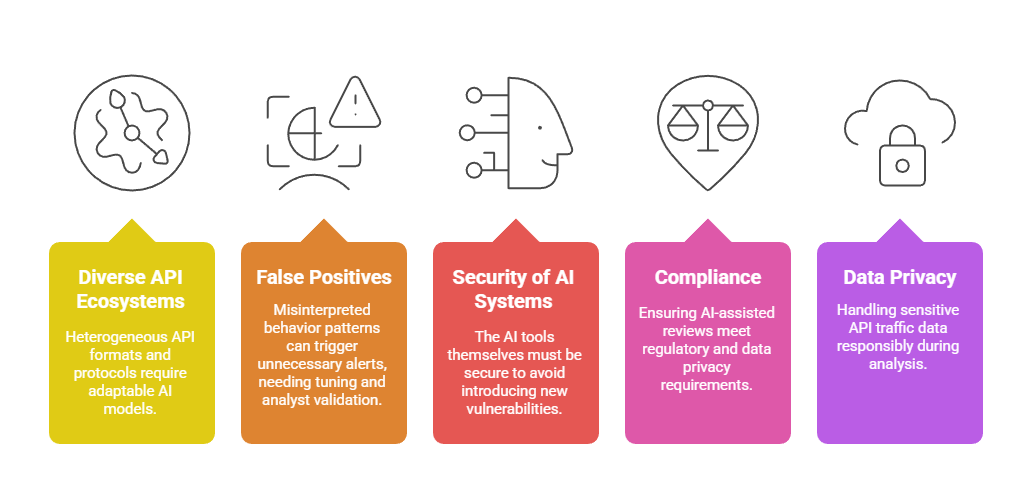

Deploying AI for API security requires balancing automation with human oversight, model adaptability, and regulatory requirements. Here are the main challenges and considerations for successful implementation.