Penetration testing (pentesting) is a cornerstone of cybersecurity, involving simulated attacks to identify vulnerabilities before malicious actors can exploit them. Traditionally, pentesting has relied heavily on manual techniques, expertise, and standardized tools to evaluate system security.

However, the advent of artificial intelligence (AI) has introduced AI-augmented pentesting, which leverages AI technologies to enhance, automate, and scale the pentesting process.

Understanding the differences between traditional and AI-augmented pentesting is essential for cybersecurity professionals aiming to adopt advanced methodologies and improve assessment efficiency and effectiveness.

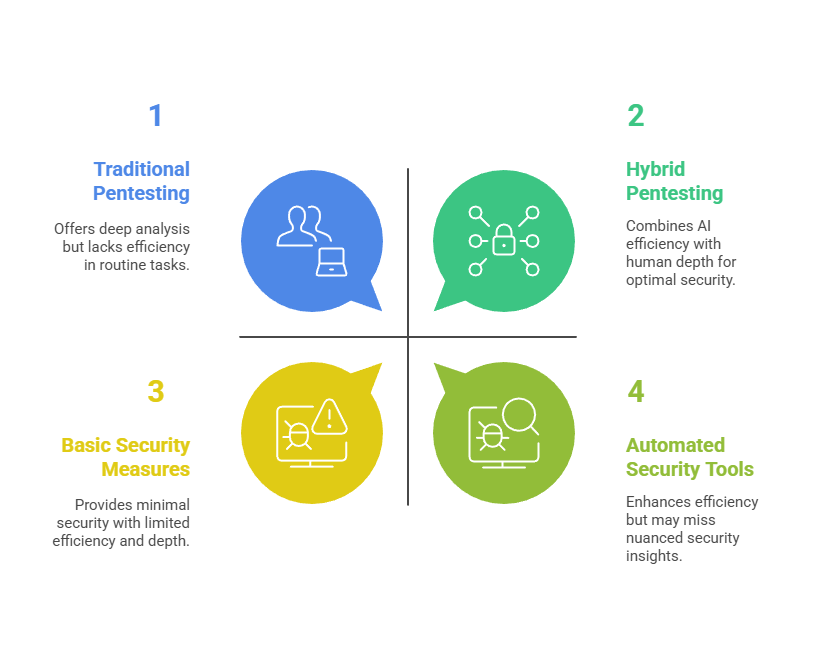

Traditional Pentesting

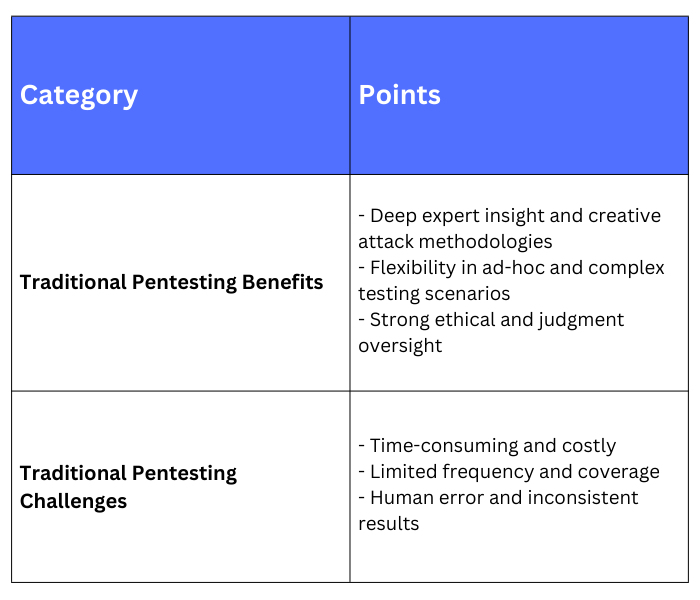

Traditional pentesting primarily consists of manual and semi-automated activities conducted by ethical hackers using specialized tools and techniques. Key characteristics include:

1. Manual Reconnaissance: Ethical hackers collect information through open-source intelligence (OSINT) and active scanning, analyzing targets based on their skills and experience.

2. Tool-Based Scanning: Testers run vulnerability scanners, port scanners, and exploit frameworks in a controlled manner.

3. Expert-Driven Analysis: Pentesters interpret scan results, prioritize vulnerabilities, and craft custom exploits if needed.

4. Time-Intensive: Manual assessments often require significant time and human effort, especially for complex environments.

5. Limited Scalability: Resource constraints can limit the frequency and coverage of pentests.

6. Human Creativity: Manual testing benefits from the creativity and intuition of skilled testers to uncover subtle or complex vulnerabilities.

AI-Augmented Pentesting

AI-augmented pentesting integrates artificial intelligence and machine learning algorithms to automate and enhance various pentesting phases. Its main features include:

1. Automated Reconnaissance: AI tools automate data gathering by scanning multiple sources at scale, identifying patterns and enriching OSINT faster than manual methods.

2. Intelligent Vulnerability Detection: Machine learning models prioritize vulnerabilities based on risk scoring and historical attack data, reducing false positives.

3. Predictive Analysis: AI predicts potential attack vectors by analyzing trends and configuration changes and by detecting anomalies.

4. Scenario Simulation: Generative AI can simulate complex attack scenarios, including AI-driven red teaming exercises.

5. Continuous Testing: AI enables frequent, automated pentests integrated into DevSecOps pipelines for real-time security insights.

6. Efficiency and Coverage: AI reduces manual labor, accelerates testing cycles, and expands scope to cover dynamic and large-scale environments.
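The risk-based prioritization described in item 2 can be sketched in a few lines. This is only an illustration: a hand-written weighted heuristic stands in for a trained model, and the `Finding` fields, the weights, and the `min_score` cutoff are all hypothetical choices, not values from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    cvss: float                # base severity on a 0.0-10.0 scale
    exploit_seen: bool         # assumed flag: exploitation appears in historical attack data
    asset_criticality: float   # assumed weight, 0.0 (lab box) to 1.0 (crown jewels)
    scanner_confidence: float  # assumed 0.0-1.0 confidence that the finding is real


def risk_score(f: Finding) -> float:
    """Combine severity, confidence, and context into a 0.0-1.0 score."""
    score = (f.cvss / 10.0) * f.scanner_confidence
    # Scale by asset importance, but never below half the raw score.
    score *= 0.5 + 0.5 * f.asset_criticality
    if f.exploit_seen:
        # Boost findings with known exploitation, capped at 1.0.
        score = min(1.0, score * 1.5)
    return round(score, 3)


def prioritize(findings, min_score=0.2):
    """Drop low-scoring findings (likely noise) and sort the rest, highest risk first."""
    scored = [(risk_score(f), f) for f in findings]
    kept = [pair for pair in scored if pair[0] >= min_score]
    return [f for _, f in sorted(kept, key=lambda pair: pair[0], reverse=True)]


findings = [
    Finding("info-banner", 2.0, False, 0.2, 0.5),
    Finding("weak-tls", 5.3, False, 0.5, 0.8),
    Finding("sqli", 9.8, True, 1.0, 0.9),
]
ranked = [f.name for f in prioritize(findings)]
```

In a real deployment the scoring function would more plausibly be a model trained on labeled findings. The surrounding flow of scoring, filtering, and sorting would stay much the same.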

Benefits and Challenges in Traditional Pentesting

AI-Augmented Pentesting Benefits and Challenges

Benefits: AI-augmented pentesting offers faster, more scalable assessments with broader coverage, and it can improve detection accuracy and risk prioritization. It also enables continuous security validation rather than relying on periodic checks, so issues can surface closer to real time.

Additionally, predictive analytics can uncover novel attack vectors that traditional methods might miss, strengthening overall cybersecurity resilience.
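As a rough illustration of continuous validation, the sketch below shows a gate that a pipeline step might run after each automated scan. It assumes findings arrive as dictionaries with a `severity` field. The field names, the severity labels, and the `fail_at` default are hypothetical and would need to match whatever the scanner in use actually emits.

```python
# Assumed severity labels, ordered from least to most serious.
SEVERITY_ORDER = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def gate(findings, fail_at="high"):
    """Return (passed, blocking): passed is False if any finding meets the threshold."""
    threshold = SEVERITY_ORDER[fail_at]
    blocking = [f for f in findings if SEVERITY_ORDER[f["severity"]] >= threshold]
    return (len(blocking) == 0, blocking)


# Example scan output (made up for illustration).
scan_results = [
    {"id": "a", "severity": "low"},
    {"id": "b", "severity": "critical"},
]
passed, blocking = gate(scan_results)
```

A pipeline step could exit with a non-zero status when `passed` is false. That blocks the deployment until the listed findings are fixed or explicitly accepted.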

Challenges: AI-augmented pentesting depends heavily on data quality and model accuracy, which directly affect the usefulness of its results. There is also a risk of over-reliance on automation, which can cause nuances that a human tester would catch to be overlooked.

Ethical concerns arise around the transparency of AI-based decisions, especially when interpreting findings. Additionally, organizations may face significant initial investment and complexity when adopting advanced AI-powered tools.

Integrating Both Approaches

The most effective security programs often blend traditional and AI-augmented pentesting. AI serves as a force multiplier, automating routine tasks and providing insights, while human experts apply critical thinking, creativity, and ethical judgment to interpret findings and plan remediation. This hybrid approach enhances efficiency without sacrificing depth or control.
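One way to picture this division of labor is a simple routing rule, sketched below under stated assumptions: low-impact findings that the automated tooling reports with high confidence are ticketed directly, and everything else goes to a human tester. The `severity` and `confidence` fields, the queue names, and the 0.9 cutoff are hypothetical.

```python
def route(finding, auto_confidence=0.9):
    """Decide whether a finding is ticketed automatically or sent to a human tester."""
    # High-impact findings always get expert review, whatever the model's confidence.
    if finding["severity"] in ("high", "critical"):
        return "human_review"
    # Routine findings the tooling is confident about can be ticketed directly.
    if finding["confidence"] >= auto_confidence:
        return "auto_ticket"
    # Anything uncertain falls back to a human.
    return "human_review"


queues = [
    route({"severity": "critical", "confidence": 0.99}),
    route({"severity": "low", "confidence": 0.95}),
    route({"severity": "medium", "confidence": 0.50}),
]
```

The design choice here is that automation never gets the last word on high-impact findings, so the efficiency gain comes from the routine cases.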