Security control testing is a vital process in cybersecurity that evaluates whether implemented security measures, such as policies, configurations, and technical safeguards, effectively protect an organization’s assets.

Traditional manual or rule-based testing approaches can be time-consuming and inconsistent, and they often cannot keep pace with modern, complex IT environments. Artificial intelligence (AI) enhances security control testing by automating gap analysis, identifying misconfigurations, and continuously assessing policy enforcement.

With AI, organizations can evaluate security controls more comprehensively, more accurately, and in near real time, which supports better compliance, lower risk, and stronger defenses.

AI-Driven Identification of Policy Gaps

Policy gaps arise when implemented controls do not meet defined security policies or fail to address emerging risks adequately. AI identifies these gaps through:

1. Automated Policy Analysis: AI systems parse written policies and regulatory standards, extracting rules and conditions for compliance and control expectations.

2. Configuration Comparison: AI compares actual system, network, and application configurations against policy requirements to highlight deviations.

3. Continuous Compliance Monitoring: Machine learning models monitor changes in infrastructure or configurations to detect newly introduced gaps proactively.

4. Risk-Based Prioritization: AI assesses the potential impact of each gap to prioritize remediation efforts based on organizational risk profiles.

5. Natural Language Processing (NLP): NLP techniques extract actionable controls and compliance criteria from unstructured policy documents and audit reports.

These techniques provide a scalable way to manage complex and evolving policy landscapes consistently.
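The configuration comparison and risk-based prioritization steps above can be sketched in Python. This is a minimal illustration, not a production engine: the policy rules are written by hand here (in the workflow described above they would come from automated policy analysis or NLP extraction), and the names `PolicyRule`, `Gap`, and `find_gaps`, the 1 to 5 severity scale, and the risk formula (rule severity multiplied by an asset-criticality weight) are all hypothetical assumptions.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class PolicyRule:
    """Hypothetical machine-readable form of one policy requirement."""
    rule_id: str
    setting: str                  # configuration key the rule constrains
    check: Callable[[Any], bool]  # returns True when a value complies
    severity: int                 # assumed scale: 1 (low) to 5 (critical)


@dataclass(frozen=True)
class Gap:
    rule_id: str
    asset: str
    setting: str
    actual: Any                   # None when the setting is missing
    risk: int


def find_gaps(rules: List[PolicyRule],
              configs: Dict[str, Dict[str, Any]],
              criticality: Dict[str, int]) -> List[Gap]:
    """Compare every asset's configuration with every rule, highest risk first."""
    gaps = []
    for asset, config in configs.items():
        for rule in rules:
            actual = config.get(rule.setting)
            # A missing setting cannot be verified, so it counts as a gap
            if actual is None or not rule.check(actual):
                # Assumed risk model: rule severity x asset criticality
                risk = rule.severity * criticality.get(asset, 1)
                gaps.append(Gap(rule.rule_id, asset, rule.setting, actual, risk))
    return sorted(gaps, key=lambda g: g.risk, reverse=True)


# Hypothetical rules, assets, and weights for illustration only
rules = [
    PolicyRule("PW-01", "min_password_length", lambda v: v >= 12, severity=4),
    PolicyRule("NET-02", "ssh_root_login", lambda v: v is False, severity=5),
    PolicyRule("LOG-03", "audit_logging", lambda v: v is True, severity=3),
]
configs = {
    "web-01": {"min_password_length": 8, "ssh_root_login": False, "audit_logging": True},
    "db-01": {"min_password_length": 14, "ssh_root_login": True},
}
criticality = {"web-01": 2, "db-01": 5}  # assumed business-impact weights

gaps = find_gaps(rules, configs, criticality)
for gap in gaps:
    print(gap.risk, gap.asset, gap.rule_id, gap.actual)
```

Treating a missing setting as a gap is a deliberate design choice in this sketch: a control that cannot be verified is not counted as compliant. Here the database server's root SSH login ranks first because both the rule severity and the asset weight are high.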

Detecting Misconfigurations with AI

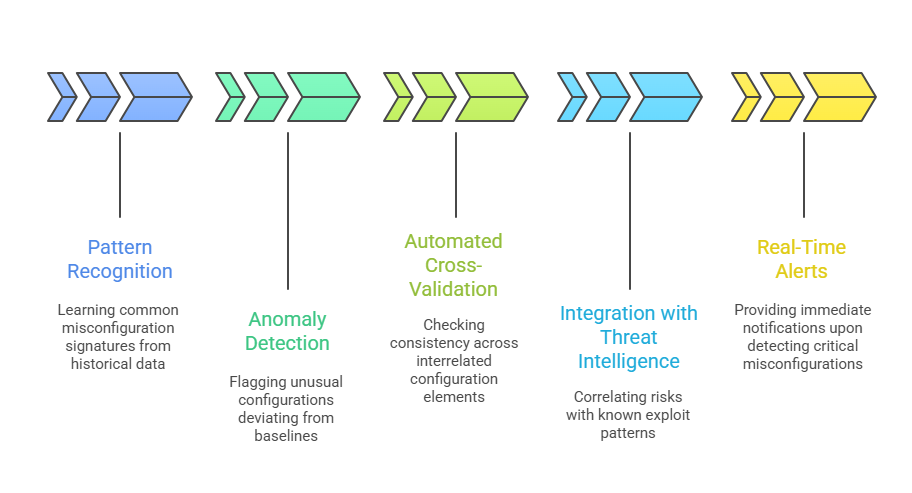

Misconfigurations are among the most common security vulnerabilities, often arising from human errors, inconsistent processes, or rapid infrastructure changes. AI improves misconfiguration detection by:

AI-powered detection enhances accuracy and reduces reliance on manual audits.
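One simple way to surface likely misconfigurations without writing a rule for every setting is to compare each host against its peers and flag values that disagree with a strong majority. The sketch below uses plain majority voting rather than a trained model, so treat it as a stand-in for the learned baselines an AI system might build; `peer_outliers`, the `min_agreement` threshold, and the sample settings are illustrative assumptions.

```python
from collections import Counter
from typing import Any, Dict, List, Tuple


def peer_outliers(configs: Dict[str, Dict[str, Any]],
                  min_agreement: float = 0.8) -> List[Tuple[str, str, Any, Any]]:
    """Flag hosts whose setting differs from a strong majority of their peers.

    configs maps host name -> {setting: value}; all hosts are assumed to share
    one role, so they are expected to be configured alike.
    Returns (host, setting, actual_value, baseline_value) tuples.
    """
    settings = sorted({key for cfg in configs.values() for key in cfg})
    findings = []
    for setting in settings:
        values = [cfg.get(setting) for cfg in configs.values()]
        baseline, count = Counter(values).most_common(1)[0]
        if count / len(values) < min_agreement:
            continue  # no clear baseline, so a deviation is not meaningful
        for host, cfg in configs.items():
            if cfg.get(setting) != baseline:
                findings.append((host, setting, cfg.get(setting), baseline))
    return findings


# Hypothetical fleet of five identically configured web servers
base = {"tls_min_version": "1.2", "debug_mode": False, "port": 443}
fleet = {f"web-0{i}": dict(base) for i in range(1, 6)}
fleet["web-03"]["debug_mode"] = True          # simulated manual change
fleet["web-04"]["tls_min_version"] = "1.0"    # simulated legacy setting

for finding in peer_outliers(fleet):
    print(finding)
```

Raising `min_agreement` reduces noise in heterogeneous fleets at the cost of missing deviations when several hosts share the same mistake.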


Benefits of AI for Security Control Testing

AI-driven control testing strengthens defenses by automating assessments and improving detection quality. The points below outline the core advantages offered by this approach.

1. Efficiency and Scalability: Automates labor-intensive assessment tasks across diverse, large-scale environments.

2. Early and Continuous Detection: Identifies gaps and misconfigurations promptly to prevent exploitation.

3. Improved Accuracy: Reduces false positives and human errors through data-driven analysis and learning.

4. Risk Prioritization: Focuses resources on high-impact issues to optimize security efforts and budget.

5. Compliance Assurance: Supports adherence to regulatory frameworks and internal policies with structured reporting.

6. Dynamic Adaptability: Maintains relevance amid evolving infrastructure and threat landscapes.
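Early and continuous detection often starts with noticing what changed. A minimal sketch, assuming configuration snapshots are available as flat key-value dictionaries from some inventory source, diffs two snapshots and raises alerts only for changes to settings marked as security-relevant. The function names, the sample snapshots, and the `watched` set are hypothetical.

```python
from typing import Any, Dict, List, Set


def config_drift(previous: Dict[str, Any], current: Dict[str, Any]):
    """Return (added, removed, changed) settings between two snapshots."""
    added = {k: current[k] for k in current.keys() - previous.keys()}
    removed = {k: previous[k] for k in previous.keys() - current.keys()}
    changed = {
        k: (previous[k], current[k])
        for k in previous.keys() & current.keys()
        if previous[k] != current[k]
    }
    return added, removed, changed


def security_alerts(previous: Dict[str, Any], current: Dict[str, Any],
                    watched: Set[str]) -> List[str]:
    """List watched (security-relevant) settings touched by any drift."""
    added, removed, changed = config_drift(previous, current)
    touched = set(added) | set(removed) | set(changed)
    return sorted(touched & watched)


# Hypothetical snapshots taken before and after a change
previous = {"firewall_enabled": True, "ssh_port": 22, "banner": "v1"}
current = {"firewall_enabled": False, "ssh_port": 22, "banner": "v2",
           "telnet_enabled": True}
watched = {"firewall_enabled", "telnet_enabled", "ssh_port"}  # assumed list

print(config_drift(previous, current))
print(security_alerts(previous, current, watched))
```

Restricting re-evaluation to drifted settings keeps each check cheap enough to run on every change rather than on a periodic audit schedule; the cosmetic `banner` change is detected as drift but does not raise an alert.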

Challenges and Best Practices

To maximize AI effectiveness, teams must be aware of potential obstacles and follow structured practices. Here are the major challenges and the best practices that help mitigate them.

1. Complex Policy Landscape: AI models must be trained to understand diverse and evolving compliance requirements.

2. Data Quality and Integration: Effective analysis requires accurate configuration and inventory data integrated across systems.

3. Model Explainability: Transparent AI operations help security teams understand findings and build trust.

4. Human Oversight: Expert review remains essential to validate AI conclusions and manage exceptional cases.

5. Continuous Model Updating: Regular updates to AI algorithms and data inputs help maintain effectiveness as environments and requirements change.
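Human oversight, explainability, and model updating can be tied together in a small feedback loop: each finding carries the evidence behind it, low-confidence findings go to an analyst instead of being reported automatically, and analyst verdicts are tracked to spot declining precision. The sketch below is illustrative only; the `Finding` fields, the 0.9 review threshold, the sample findings and verdicts, and the assumption that model confidence scores are calibrated are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Finding:
    finding_id: str
    description: str
    evidence: str      # why the finding was raised, shown to the reviewer
    confidence: float  # model score in [0, 1], assumed to be calibrated


def triage(findings: List[Finding],
           review_threshold: float = 0.9) -> Tuple[List[Finding], List[Finding]]:
    """Split findings into auto-reported ones and ones queued for an analyst."""
    auto, review = [], []
    for f in findings:
        (auto if f.confidence >= review_threshold else review).append(f)
    return auto, review


def reviewed_precision(verdicts: Dict[str, bool]) -> Optional[float]:
    """Fraction of reviewed findings that analysts confirmed as real issues.

    A falling value is one signal that the model or its input data needs updating.
    """
    if not verdicts:
        return None
    return sum(verdicts.values()) / len(verdicts)


# Hypothetical findings produced by an upstream detection model
findings = [
    Finding("F1", "Storage bucket allows public read", "ACL grants read to all users", 0.97),
    Finding("F2", "Admin role without MFA", "mfa_required=false on admin role", 0.95),
    Finding("F3", "Unusual firewall rule", "rule differs from 80% of peer hosts", 0.62),
]
auto, review = triage(findings)
for f in review:
    print(f"REVIEW {f.finding_id}: {f.description} (evidence: {f.evidence})")

verdicts = {"F3": False, "F4": True, "F5": True, "F6": True}  # sample analyst verdicts
print(reviewed_precision(verdicts))
```

Keeping the evidence string on every finding means the analyst sees why something was flagged, which supports both validation and trust in the system.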