Graph Neural Networks (GNNs) are a powerful class of deep learning architectures designed to learn from data that is naturally interconnected or structured as graphs.

Unlike conventional models that assume data points are independent of one another, GNNs capture relationships between entities through nodes, edges, and their attributes.

This makes them ideal for domains where interactions, dependencies, and network structures are central to the problem.

In relational datasets such as social networks, biological pathways, communication networks, knowledge graphs, or financial transaction webs, GNNs help uncover hidden patterns that traditional ML models often struggle to detect.

GNNs operate by iteratively aggregating information from neighboring nodes, gradually forming complex representations that reflect both local and global structure.

This ability enables accurate modeling of community patterns, influence propagation, anomaly detection, and link prediction.

Modern applications include recommendation engines, fraud detection, drug discovery, and semantic reasoning, making GNNs one of the most rapidly advancing areas in AI research.

Fundamentals and Applications of Graph Neural Networks (GNNs)

Graph Neural Networks (GNNs) enable learning from graph-structured data by capturing both node attributes and relational patterns.

They power a wide range of applications, from node classification and link prediction to community detection and recommendation systems.

1 Graph Representation Learning

Graph representation learning revolves around transforming nodes, edges, or entire subgraphs into dense vector embeddings that capture structural and semantic properties.

These embeddings reflect similarity patterns, neighborhood influence, and global graph topology.

By converting complex networks into numerical forms, downstream tasks such as classification, clustering, or search become computationally tractable.

For instance, in a citation network, embeddings learned for papers help identify related literature even when keywords differ.

GNN-based embedding techniques often outperform traditional graph algorithms that rely on structure alone, because they incorporate both node attributes and graph structure in the learning process.
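
To make the citation example concrete, here is a minimal sketch of how learned embeddings might be compared. The paper names and the three-dimensional vectors are made-up values for illustration, and cosine similarity is only one of several reasonable similarity measures.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical paper embeddings (illustrative values, not learned from real data).
embeddings = {
    "paper_a": [0.9, 0.1, 0.0],
    "paper_b": [0.8, 0.2, 0.1],
    "paper_c": [0.0, 0.1, 0.9],
}

def most_similar(query, embeddings):
    """Return the other paper whose embedding is closest to the query's."""
    candidates = [name for name in embeddings if name != query]
    return max(
        candidates,
        key=lambda name: cosine_similarity(embeddings[query], embeddings[name]),
    )

related = most_similar("paper_a", embeddings)
```

Here `paper_b` is returned for `paper_a` because their vectors point in nearly the same direction, regardless of whether the two papers share any keywords.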

2 Message Passing and Aggregation Mechanisms

The message-passing framework allows nodes to share information with their immediate neighbors across multiple iterations (known as layers).

Each node updates its representation based on aggregated messages, capturing increasing levels of relational context.

Aggregation strategies include mean pooling, attention-based weighting, max pooling, and summation, each influencing how information flows through the graph.

For example, in molecular graphs, message passing lets atoms exchange chemical property information, helping the network predict molecular stability or toxicity.

The multi-hop aggregation capability makes GNNs ideal for relational learning tasks where connections carry significant meaning.
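
The idea of multi-hop aggregation can be sketched with plain mean pooling. This is a simplified illustration with no learned weights or nonlinearity, which a real GNN layer would include; the three-node path graph and its features are assumptions chosen to keep the arithmetic easy to follow.

```python
def mean_aggregate(features, neighbors):
    """One message-passing round: each node averages its own vector with its neighbors'."""
    updated = {}
    for node, own in features.items():
        messages = [features[n] for n in neighbors[node]] + [own]
        dim = len(own)
        updated[node] = [
            sum(m[i] for m in messages) / len(messages) for i in range(dim)
        ]
    return updated

# Path graph a - b - c; only node "a" starts with a non-zero feature.
neighbors = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
features = {"a": [1.0], "b": [0.0], "c": [0.0]}

one_hop = mean_aggregate(features, neighbors)  # information reaches b
two_hop = mean_aggregate(one_hop, neighbors)   # information reaches c
```

After one round, node `c` still sees nothing from `a`; after two rounds it does, which is why the number of layers determines how many hops of context each node receives.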

3 Graph Convolutional Networks (GCNs)

GCNs extend the idea of convolution—common in CNNs—to graph-structured data by smoothing node features based on local neighborhoods.

Each GCN layer blends node attributes with information from adjacent nodes, enabling powerful relational reasoning.

This makes GCNs effective for semi-supervised classification tasks where only a small portion of nodes are labeled.

A typical example is classifying users in a social network based on shared interests and interaction patterns.

GCNs simplify real-world relational problems by providing an efficient way to model dependencies without manually constructing feature maps.
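
A single GCN-style layer can be sketched in a few lines. The version below uses the commonly described symmetric normalization with self-loops, followed by a linear transform and ReLU; the adjacency matrix, features, and identity weight matrix are illustrative assumptions (in practice the weights are learned and a library with sparse operations would be used).

```python
import math

def gcn_layer(adjacency, features, weights):
    """One GCN-style layer: ReLU(D^-1/2 (A + I) D^-1/2 X W) on dense Python lists."""
    n = len(adjacency)
    # Add self-loops so each node keeps part of its own features.
    a_hat = [
        [adjacency[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
        for i in range(n)
    ]
    degree = [sum(row) for row in a_hat]
    # Symmetric normalization by the square roots of both endpoint degrees.
    norm = [
        [a_hat[i][j] / math.sqrt(degree[i] * degree[j]) for j in range(n)]
        for i in range(n)
    ]
    in_dim, out_dim = len(features[0]), len(weights[0])
    # Neighborhood aggregation: norm @ features.
    aggregated = [
        [sum(norm[i][k] * features[k][d] for k in range(n)) for d in range(in_dim)]
        for i in range(n)
    ]
    # Linear transform followed by ReLU.
    return [
        [
            max(0.0, sum(aggregated[i][d] * weights[d][o] for d in range(in_dim)))
            for o in range(out_dim)
        ]
        for i in range(n)
    ]

# Path graph 0 - 1 - 2 with two features per node (illustrative values).
adjacency = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights = [[1.0, 0.0], [0.0, 1.0]]  # identity stand-in for learned weights

hidden = gcn_layer(adjacency, features, weights)
```

With identity weights, each output row is simply the degree-normalized blend of a node's features with those of its neighbors, which is the "smoothing" effect described above.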

4 Graph Attention Networks (GATs)

GATs integrate attention mechanisms into graph learning, allowing nodes to focus more on relevant neighbors during aggregation.

This dynamic weighting improves model accuracy when some connections are more informative than others.

The attention coefficients help prioritize influential relationships, such as key protein interactions responsible for specific biological functions.

In recommendation systems, GATs highlight user–item interactions that significantly influence purchasing behavior.

The attention mechanism adds a degree of interpretability, since the learned weights indicate which links the model emphasized when making a prediction.
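
The sketch below shows how GAT-style attention coefficients could be computed for one node. It is a simplification: the learned linear transform applied to node features before scoring is omitted, and the attention vector and feature values are hypothetical stand-ins for learned parameters.

```python
import math

def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def attention_coefficients(node, neighbors, features, attn_vector):
    """Softmax-normalized attention of `node` over its neighbors."""
    scores = []
    for n in neighbors:
        concat = features[node] + features[n]  # list concatenation [h_i || h_j]
        raw = sum(a * x for a, x in zip(attn_vector, concat))
        scores.append(leaky_relu(raw))
    # Subtract the max score for numerical stability before exponentiating.
    max_score = max(scores)
    exps = [math.exp(s - max_score) for s in scores]
    total = sum(exps)
    return {n: e / total for n, e in zip(neighbors, exps)}

# Illustrative features; "v" resembles "u", "w" does not.
features = {"u": [1.0, 0.0], "v": [1.0, 0.0], "w": [0.0, 1.0]}
attn_vector = [0.5, 0.5, 1.0, -1.0]  # hypothetical; learned in a real model

coeffs = attention_coefficients("u", ["v", "w"], features, attn_vector)
```

The coefficients sum to one over the neighborhood, and here neighbor `v` receives the larger weight, so its message would dominate the aggregation for `u`.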

5 Graph-Based Link Prediction

Link prediction identifies missing or future connections in networks by analyzing node representations and graph structure.

This is crucial in applications like drug-target interaction discovery, social media friend suggestions, and fraud ring detection.

GNN-driven link prediction leverages multi-hop dependencies to infer subtle relational patterns that rule-based systems cannot detect.

For example, in supply chain networks, the model can reveal hidden supplier relationships that may introduce risk or inefficiencies.

Accurate link prediction supports proactive decision-making by anticipating interactions before they occur.
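
One simple way to turn node embeddings into link predictions is a dot-product decoder passed through a sigmoid. The sketch below assumes embeddings have already been produced by some GNN encoder; the node names and vectors are invented for illustration, and other decoders (for example, a small MLP over the concatenated pair) are equally plausible.

```python
import math

def link_score(u, v):
    """Score a candidate edge as sigmoid(u . v), a value strictly between 0 and 1."""
    dot = sum(a * b for a, b in zip(u, v))
    return 1.0 / (1.0 + math.exp(-dot))

def rank_candidates(source, embeddings, existing_edges):
    """Rank nodes not yet linked to `source` by predicted link score, best first."""
    candidates = [
        n for n in embeddings
        if n != source
        and (source, n) not in existing_edges
        and (n, source) not in existing_edges
    ]
    return sorted(
        candidates,
        key=lambda n: link_score(embeddings[source], embeddings[n]),
        reverse=True,
    )

# Hypothetical embeddings and one known edge.
embeddings = {
    "a": [1.0, 0.5],
    "b": [0.9, 0.4],
    "c": [-1.0, 0.2],
    "d": [0.8, 0.6],
}
existing_edges = {("a", "b")}

ranked = rank_candidates("a", embeddings, existing_edges)
```

Node `b` is excluded because the edge already exists, and `d` ranks ahead of `c` because its embedding is better aligned with that of `a`.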

6 Node Classification and Community Detection

GNNs categorize nodes into meaningful groups by analyzing feature patterns and relational signals simultaneously.

For community detection, GNNs identify dense clusters of interconnected nodes that share common characteristics.

In telecommunications, node classification helps detect malfunctioning devices or unusual traffic behavior.

In e-commerce, it supports segmenting customers based on browsing and buying habits.

Modeling both attributes and connectivity enables GNNs to outperform traditional clustering methods that ignore relational pathways.

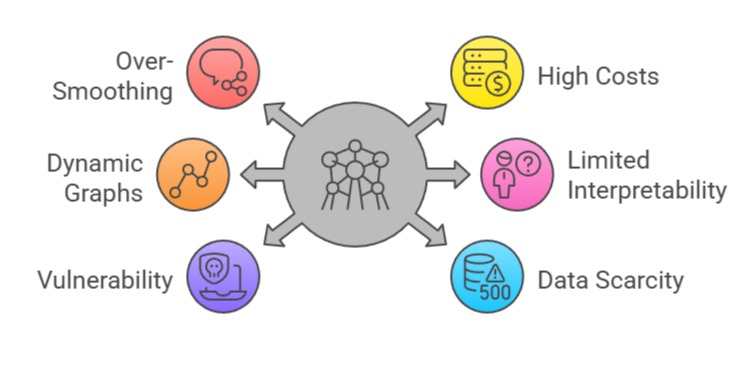

Challenges & Limitations of GNNs

1 Over-Smoothing in Deep Graph Models

As the number of GNN layers increases, node representations gradually become indistinguishable because they repeatedly aggregate overlapping information from neighbors.

This leads to a phenomenon called over-smoothing, where all nodes converge to similar embeddings, reducing predictive power.

Deep GNNs often struggle to preserve meaningful variation in large or densely connected graphs. This limits their ability to model long-range dependencies.

For example, in social networks, users with distinct interests may end up with nearly identical embeddings due to excessive message passing.
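
Over-smoothing can be observed directly on a toy graph. The sketch below stacks 20 rounds of plain mean aggregation (no learned weights, which is a deliberate simplification) on a four-node path graph and tracks the gap between the largest and smallest node value after each round.

```python
def mean_aggregate(values, neighbors):
    """One round of mean aggregation over each node and its neighbors."""
    return [
        (values[i] + sum(values[j] for j in neighbors[i])) / (1 + len(neighbors[i]))
        for i in range(len(values))
    ]

def spread(values):
    """Gap between the largest and smallest node value."""
    return max(values) - min(values)

# Path graph 0 - 1 - 2 - 3; only node 0 starts with a non-zero feature.
neighbors = [[1], [0, 2], [1, 3], [2]]
values = [1.0, 0.0, 0.0, 0.0]

spreads = [spread(values)]
for _ in range(20):
    values = mean_aggregate(values, neighbors)
    spreads.append(spread(values))
```

The entries of `spreads` never increase and shrink toward zero, meaning all four nodes end up with almost the same value even though they started out clearly distinct.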

2 High Computational and Memory Costs

Graph operations, especially neighborhood aggregation, become expensive for large-scale graphs with millions of nodes and edges.

Unlike images or text, graph structures lack regularity, making batching and parallelization harder.

Memory consumption spikes when storing multi-hop neighborhoods, leading to slow training cycles.

Real-time applications such as fraud monitoring or dynamic recommendation systems face bottlenecks due to these overheads.

Scaling GNNs efficiently often requires graph partitioning or distributed training setups.

3 Difficulty Handling Dynamic and Evolving Graphs

Many real-world graphs evolve over time (transactions occur, friendships form, biological interactions shift), yet traditional GNNs assume static structures.

Capturing temporal changes requires specialized models like Temporal GNNs or Graph Recurrent Networks. Frequent graph updates break caching strategies and complicate incremental learning.

For example, cybersecurity networks continuously evolve as new threats emerge, making static GNNs struggle to maintain up-to-date representations.

4 Limited Interpretability and Transparency

Despite improvements through attention-based GNNs, understanding how graph structures influence predictions remains challenging.

Node embeddings and message-passing operations create complex interactions that are difficult to visualize or explain.

Applications like drug discovery or financial risk modeling require detailed interpretability for regulatory compliance.

The limited transparency can hinder adoption in industries where explainability is mandatory.

5 Vulnerability to Graph Attacks and Noise

GNNs are highly sensitive to small structural manipulations, such as adding or removing crucial edges.

Attackers can poison graphs by slightly altering the connectivity to mislead predictions—especially in fraud, spam detection, or social networks.

Noisy graphs exacerbate this, as irrelevant edges distort message aggregation. As GNNs rely heavily on structure, even subtle modifications affect model stability.

6 Data Scarcity and Label Imbalance

Many graph tasks suffer from limited labeled nodes because manual annotation is expensive.

Semi-supervised GNNs mitigate this somewhat, but performance still suffers when labels are sparse or imbalanced.

In biological networks, only a small number of proteins may be labeled as disease-associated, making classification difficult.

GNNs also struggle when the signal needed to classify a node comes from labeled nodes many hops away, since shallow models cannot reach them.

Best Practices for Building High-Quality GNN Models

Building high-quality Graph Neural Network (GNN) models requires careful consideration of architecture, scalability, and graph-specific dynamics.

Following best practices ensures robust, interpretable, and efficient models capable of handling large, dynamic, and heterogeneous graph data.

1 Use Shallow Architectures with Residual or Skip Connections

To counter over-smoothing, limit GNNs to a few layers, typically two to four. Incorporate skip connections, residual links, or dilated message passing to preserve useful information across layers.

These architectures maintain expressiveness while avoiding degraded embeddings.

For example, adding residual connections to a GCN can improve node classification performance in citation networks, particularly as the model gets deeper.
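
The effect of a skip connection can be illustrated on a toy graph. The sketch below uses an "initial residual" variant, where a fraction `alpha` of the input features is mixed back in after every aggregation round; this is only one of several possible skip-connection designs, and the graph, features, and `alpha` value are assumptions for illustration.

```python
def mean_aggregate(values, neighbors):
    """One round of mean aggregation over each node and its neighbors."""
    return [
        (values[i] + sum(values[j] for j in neighbors[i])) / (1 + len(neighbors[i]))
        for i in range(len(values))
    ]

def propagate(initial, neighbors, layers, alpha=0.0):
    """Stack `layers` aggregation rounds; alpha > 0 mixes the input features back in."""
    values = list(initial)
    for _ in range(layers):
        aggregated = mean_aggregate(values, neighbors)
        values = [(1 - alpha) * a + alpha * x0 for a, x0 in zip(aggregated, initial)]
    return values

# Path graph 0 - 1 - 2 - 3; only node 0 starts with a non-zero feature.
neighbors = [[1], [0, 2], [1, 3], [2]]
initial = [1.0, 0.0, 0.0, 0.0]

plain = propagate(initial, neighbors, layers=20)
residual = propagate(initial, neighbors, layers=20, alpha=0.5)
```

After 20 rounds the plain version has collapsed to nearly identical values, while the residual version still keeps node 0 clearly separated from node 3.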

2 Employ Graph Sampling Techniques for Large Graphs

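For massive graphs, use sampling strategies such as GraphSAGE-style neighbor sampling or cluster-based mini-batches (as in Cluster-GCN) to reduce computational load.

Sampling improves scalability while largely preserving the relational patterns the model needs, at the cost of some variance in the aggregated messages.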

It also allows training on GPUs with limited memory. For example, in e-commerce recommendation systems, neighbor sampling prevents exponential neighborhood growth.
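
Neighbor sampling can be sketched as follows. The function names, the star-shaped toy graph, and the fanout values are assumptions for illustration; production libraries implement the same idea with far more efficient data structures.

```python
import random

def sample_neighbors(neighbors, node, fanout, rng):
    """Keep at most `fanout` randomly chosen neighbors of `node`."""
    candidates = neighbors[node]
    if len(candidates) <= fanout:
        return list(candidates)
    return rng.sample(candidates, fanout)

def sample_subgraph(neighbors, seeds, fanouts, rng):
    """Expand from the seed nodes hop by hop, sampling a bounded number of neighbors."""
    visited = set(seeds)
    frontier = set(seeds)
    for fanout in fanouts:
        next_frontier = set()
        for node in sorted(frontier):  # sorted for reproducible sampling order
            next_frontier.update(sample_neighbors(neighbors, node, fanout, rng))
        visited |= next_frontier
        frontier = next_frontier
    return visited

# Star graph: node 0 is a hub connected to 100 leaves.
neighbors = {0: list(range(1, 101))}
neighbors.update({leaf: [0] for leaf in range(1, 101)})

sampled = sample_subgraph(neighbors, seeds=[0], fanouts=[5, 5], rng=random.Random(0))
```

Even though the hub has 100 neighbors, the two-hop sample contains only the hub and five leaves, so the per-batch cost is bounded by the fanouts rather than by node degree.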

3 Incorporate Attention Mechanisms for Better Relevance Weighting

Integrating attention modules helps models prioritize the most informative neighbors.

This enhances interpretability and improves robustness in noisy graphs.

GAT layers let the model adaptively learn which relationships matter most, reducing dependence on manually crafted features.

In molecular graphs, attention helps focus on key atomic interactions contributing to chemical behavior.

4 Normalize Features and Use Regularization Techniques

Feature normalization ensures stable training, especially when node attributes vary widely. Combine batch normalization, layer normalization, and dropout to reduce overfitting and stabilize message propagation.

Regularization is crucial when dealing with small labeled datasets. Techniques like edge dropout help models generalize better in real-world noisy graphs.
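
Two of the techniques above, feature standardization and edge dropout, are simple enough to sketch directly. The function names and sample data are hypothetical, and the edge dropout shown here is the basic form in which each edge is independently removed with a fixed probability during a training step.

```python
import random
import statistics

def standardize(column):
    """Rescale one feature column to zero mean and unit variance."""
    mean = statistics.fmean(column)
    std = statistics.pstdev(column)
    if std == 0:
        return [0.0] * len(column)  # constant feature carries no signal
    return [(x - mean) / std for x in column]

def drop_edges(edges, drop_prob, rng):
    """Randomly remove each edge with probability `drop_prob` (training time only)."""
    return [edge for edge in edges if rng.random() >= drop_prob]

# Illustrative node attribute with a wide range, e.g. account balances.
balances = [10.0, 2000.0, 350.0, 75000.0]
scaled = standardize(balances)

edges = [(0, 1), (1, 2), (2, 3), (3, 0)]
kept = drop_edges(edges, drop_prob=0.25, rng=random.Random(0))
```

A fresh random subset of edges would typically be drawn at every training step, while the full edge list is used at evaluation time.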

5 Apply Temporal or Dynamic GNNs for Evolving Graphs

Use specialized architectures like TGAT, DySAT, or Temporal Graph Networks for time-sensitive applications.

These models incorporate time-stamped edges and evolving structures, enabling accurate modeling of changing interactions.

In fraud detection, temporal GNNs detect evolving fraudulent patterns that static models miss.
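
The intuition behind time-aware aggregation can be sketched with a simple recency weighting. This is not TGAT, DySAT, or Temporal Graph Networks, which learn their temporal encodings; it is a hand-written exponential decay, with hypothetical account names and a `half_life` parameter chosen purely for illustration.

```python
def time_decayed_mean(events, features, now, half_life):
    """Average neighbor features, halving an edge's weight every `half_life` time units."""
    dim = len(next(iter(features.values())))
    totals = [0.0] * dim
    weight_sum = 0.0
    for neighbor, timestamp in events:
        weight = 0.5 ** ((now - timestamp) / half_life)
        weight_sum += weight
        for d in range(dim):
            totals[d] += weight * features[neighbor][d]
    if weight_sum == 0.0:
        return [0.0] * dim  # no interactions to aggregate
    return [t / weight_sum for t in totals]

# Timestamped interactions of one node: (neighbor, time of interaction).
features = {"old_account": [0.0], "new_account": [1.0]}
events = [("old_account", 0.0), ("new_account", 10.0)]

recent_view = time_decayed_mean(events, features, now=10.0, half_life=1.0)
static_view = time_decayed_mean(events, features, now=10.0, half_life=1e9)
```

With a short half-life the aggregate is dominated by the most recent interaction, whereas a very long half-life treats both edges almost equally, which is effectively what a static model does.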

6 Validate with Task-Specific Metrics and Structural Tests

Graph tasks often call for different evaluation metrics than standard ML tasks. Use ranking metrics such as AUC and Hits@K for link prediction, and accuracy or F1-score for node-level and graph-level classification.

Additionally, perform robustness tests by randomly removing edges or adding noise.

This ensures the GNN model is stable and not overly dependent on specific graph fragments.
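
The link prediction metrics mentioned above can be computed from two lists of scores, one for true (positive) edges and one for sampled non-edges (negatives). The sketch below follows one common definition of Hits@K, counting a positive edge as a hit when it scores above the K-th best negative; other definitions exist, and the scores are invented for illustration.

```python
def hits_at_k(pos_scores, neg_scores, k):
    """Fraction of positive edges scored above the k-th best negative edge."""
    if len(neg_scores) < k:
        return 1.0
    threshold = sorted(neg_scores, reverse=True)[k - 1]
    return sum(score > threshold for score in pos_scores) / len(pos_scores)

def auc(pos_scores, neg_scores):
    """Probability that a random positive outscores a random negative (ties count half)."""
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))

# Hypothetical model scores for held-out true edges and sampled non-edges.
pos_scores = [0.9, 0.6, 0.3]
neg_scores = [0.8, 0.5, 0.2, 0.1]

hits1 = hits_at_k(pos_scores, neg_scores, k=1)
hits2 = hits_at_k(pos_scores, neg_scores, k=2)
area = auc(pos_scores, neg_scores)
```

For a robustness check, the same metrics can be recomputed after randomly removing a fraction of edges from the input graph and compared against the unperturbed scores.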

7 Combine GNNs with Other Architectures (Hybrid Models)

Hybrid models (GNN + Transformer, GNN + CNN, or GNN + MLP) often outperform standalone GNNs because they capture multi-modal patterns.

For instance, combining GNNs with Transformers allows integrating both graph structure and sequential or textual context.

In recommendation engines, hybrid models enhance personalization by blending relational and behavioral signals.
