Time Series Analysis is a specialized branch of data science focused on understanding patterns that evolve over time, such as seasonality, cyclical fluctuations, and long-term shifts.

Unlike static datasets, time series emphasize the temporal order of observations, making them essential for domains where timing, sequence, and trends influence future outcomes.

Forecasting methods built on time series principles allow organizations to anticipate demand, detect anomalies, predict financial fluctuations, and optimize operational decisions with greater precision.

Modern time series approaches integrate statistical foundations with machine learning innovations, enabling systems to learn complex temporal behaviors efficiently.

These methods allow data scientists to capture autocorrelations, lagged dependencies, and external influences, supporting dynamic decision-making in volatile environments.

From classical linear models to advanced neural architectures, forecasting techniques have evolved into powerful tools capable of modeling both short-range and long-range temporal patterns.

Industries such as healthcare, energy, transportation, retail, and climate science now rely heavily on robust time-series forecasting to improve planning accuracy and reduce uncertainty in mission-critical applications.

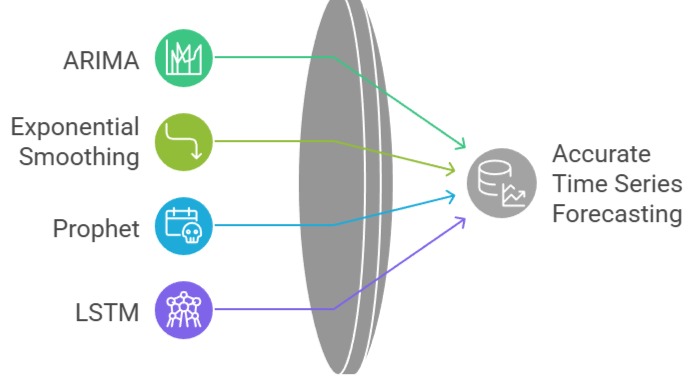

Key Forecasting Methods

1. ARIMA (Autoregressive Integrated Moving Average)

ARIMA combines autoregression, differencing, and moving-average components to capture linear temporal relationships. It excels when the dataset shows gradual trends without abrupt structural breaks.

The model estimates how past values and previous errors influence upcoming observations, making it effective for stable environments.

Its ARMA core assumes a stationary series. The "integrated" part handles this by differencing the data d times, and a logarithmic transform is often applied first to stabilize variance that grows with the level.
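Differencing and its inverse are simple enough to write out. The sketch below uses plain Python with hypothetical helper names (`difference`, `undifference`). It shows how a log transform turns steady percentage growth into a linear trend, and how one difference then removes that trend. It also rebuilds the original levels from the differences, which is the step needed to turn forecasts of differences back into forecasts of the series.

```python
import math

def difference(series, lag=1):
    """Return series[t] - series[t - lag]; lag=12 is a seasonal difference for monthly data."""
    return [series[t] - series[t - lag] for t in range(lag, len(series))]

def undifference(last_values, diffs, lag=1):
    """Invert difference(): rebuild levels from the last `lag` observed values."""
    history = list(last_values)
    for d in diffs:
        history.append(history[-lag] + d)  # y[t] = y[t - lag] + diff[t]
    return history[len(last_values):]

# Hypothetical series growing 10% per step: the log turns growth into a linear trend,
# and one difference then removes that trend.
y = [100.0, 110.0, 121.0, 133.1, 146.41]
log_y = [math.log(v) for v in y]
d1 = difference(log_y)  # each value is close to log(1.1)
```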

ARIMA is particularly useful when the dataset is relatively small but regular, where it tends to offer reliable short-term projections.
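To make the "integrated" and "autoregressive" parts concrete, here is a minimal ARIMA(1,1,0) sketch in plain Python. It differences the series once (d = 1) and fits an AR(1) model to the differences. The fit uses conditional least squares, not the maximum-likelihood estimation that statistical libraries typically use. It then adds the forecast differences back onto the last observed level. The function names are hypothetical. To keep it short there is no moving-average term (q = 0), and the sketch assumes at least a few non-constant differences.

```python
def fit_arima_110(series):
    """Fit ARIMA(1,1,0) by conditional least squares on dy[t] = c + phi * dy[t-1] + e[t]."""
    dy = [series[t] - series[t - 1] for t in range(1, len(series))]  # d = 1
    x, z = dy[:-1], dy[1:]             # regress each difference on the previous one
    n = len(x)
    mx, mz = sum(x) / n, sum(z) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxz = sum((a - mx) * (b - mz) for a, b in zip(x, z))
    phi = sxz / sxx                    # AR(1) coefficient
    c = mz - phi * mx                  # intercept (drift in the differences)
    return c, phi, dy[-1]

def forecast_arima_110(series, steps):
    """Forecast differences recursively, then integrate them back to levels."""
    c, phi, dy = fit_arima_110(series)
    level, out = series[-1], []
    for _ in range(steps):
        dy = c + phi * dy              # expected next difference
        level += dy                    # undo the differencing
        out.append(level)
    return out
```

For real work, statsmodels provides `ARIMA` in `statsmodels.tsa.arima.model`. There, `order=(p, d, q)` sets the non-seasonal terms and `seasonal_order=(P, D, Q, s)` adds the SARIMA terms.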

It also supports extensions like SARIMA for handling seasonal influences.

Example: Forecasting monthly electricity consumption for households where demand follows a consistent seasonal pattern.

2. Exponential Smoothing (Holt–Winters Method)

Exponential smoothing assigns higher weight to recent data, allowing the model to react quickly to current changes.
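The recursion behind simple exponential smoothing is short enough to write out. The sketch below uses plain Python with hypothetical function names. It updates a single level using a smoothing parameter `alpha`. It also shows that the weight on an observation j steps back is alpha(1 - alpha)^j, which is where the exponential decay in weights comes from.

```python
def simple_exponential_smoothing(series, alpha):
    """Smoothed level at each step: level = alpha * y + (1 - alpha) * previous level."""
    level = series[0]  # initialise with the first observation (one common choice)
    levels = [level]
    for y in series[1:]:
        level = alpha * y + (1 - alpha) * level
        levels.append(level)
    return levels  # the last level is the flat forecast for every future step

def ses_weights(alpha, k):
    """Implied weight on the observation j steps back, for j = 0..k-1."""
    return [alpha * (1 - alpha) ** j for j in range(k)]
```

A larger `alpha` puts more weight on the latest observations, so the forecast reacts faster but is noisier.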

The Holt–Winters version incorporates trend and seasonality, making it suitable for datasets that repeatedly fluctuate in predictable cycles.
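Below is a minimal additive Holt–Winters sketch. It assumes a fixed season length `m` and hand-picked smoothing parameters: `alpha` for the level, `beta` for the trend, and `gamma` for the seasonality. Libraries usually estimate these parameters by minimizing in-sample error. The initialization from the first two seasons is one simple textbook choice, not the only one, and the function name is hypothetical.

```python
def holt_winters_additive(series, m, alpha, beta, gamma, horizon):
    """Additive Holt-Winters forecast; needs at least two full seasons of data."""
    if len(series) < 2 * m:
        raise ValueError("need at least two full seasonal cycles")
    first, second = series[:m], series[m:2 * m]
    level = sum(first) / m                          # initial level: mean of season 1
    trend = (sum(second) - sum(first)) / (m * m)    # average per-step change between seasons
    season = [y - level for y in first]             # initial seasonal offsets
    for t in range(m, len(series)):
        y, s = series[t], season[t % m]
        prev_level = level
        level = alpha * (y - s) + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
        season[t % m] = gamma * (y - level) + (1 - gamma) * s
    n = len(series)
    # h-step forecast: extrapolate level and trend, add the matching seasonal offset
    return [level + h * trend + season[(n - 1 + h) % m] for h in range(1, horizon + 1)]
```

If a fitted implementation is preferred, statsmodels offers `ExponentialSmoothing` in `statsmodels.tsa.holtwinters`, with `trend`, `seasonal`, and `seasonal_periods` arguments.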

It is computationally lightweight and works with relatively short histories, although the seasonal version typically needs at least two full seasonal cycles to initialize.

The method is known for producing smooth, interpretable forecasts without requiring extensive parameter tuning.

With larger smoothing parameters, it adapts quickly when sudden changes occur, at the cost of noisier forecasts.

Example: Retailers use Holt–Winters to forecast weekly sales of fast-moving products that exhibit repeating seasonal spikes.

3. Prophet (Facebook/Meta)

Prophet is built to handle real-world challenges such as missing data, irregular cycles, holiday effects, and trend shifts. Holidays and special events are supplied as a list of known dates rather than detected automatically.

It decomposes time series into components—growth, seasonality, and special events—making it highly interpretable.
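For reference, the model described in Prophet's original paper is additive, with each seasonal component written as a truncated Fourier series:

```latex
y(t) = g(t) + s(t) + h(t) + \varepsilon_t

s(t) = \sum_{n=1}^{N} \left( a_n \cos\frac{2\pi n t}{P} + b_n \sin\frac{2\pi n t}{P} \right)
```

Here g(t) is the trend (piecewise linear or logistic growth with changepoints), s(t) the periodic seasonality, h(t) the user-supplied holiday and event effects, and ε_t the error term. The period P is, for example, 365.25 for yearly seasonality on daily data and 7 for weekly seasonality. In the Python package, the input is a DataFrame with a `ds` (date) column and a `y` (value) column, passed to `Prophet().fit()`. Calling `make_future_dataframe()` and then `predict()` produces the forecast and its individual components.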

Prophet automatically detects changepoints, which is beneficial when historical behavior undergoes abrupt transitions.

It requires minimal manual configuration, making it popular among business analysts and non-specialists.

The tool scales to large datasets and can model several seasonal patterns at once, such as daily, weekly, and yearly cycles.

Example: Online platforms use Prophet to forecast website traffic influenced by campaigns, promotions, and holiday activity spikes.

4. LSTM (Long Short-Term Memory Networks)

LSTMs are deep learning models designed to learn long-range dependencies using memory cells and gating mechanisms.
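To show what the gating mechanisms do, here is one forward step of an LSTM cell written in plain Python, with a scalar input and a scalar hidden state. The weights are hypothetical and hand-picked. Real models learn vectors and matrices of weights by backpropagation through time, usually with a framework such as PyTorch or Keras.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, W):
    """One step of a scalar LSTM cell; W maps gate name -> (w_x, w_h, bias)."""
    def pre(gate):
        w_x, w_h, b = W[gate]
        return w_x * x + w_h * h_prev + b
    f = sigmoid(pre("forget"))        # how much of the old memory to keep
    i = sigmoid(pre("input"))         # how much new information to write
    o = sigmoid(pre("output"))        # how much of the memory to expose
    g = math.tanh(pre("candidate"))   # candidate memory content
    c = f * c_prev + i * g            # updated cell state (long-range memory)
    h = o * math.tanh(c)              # new hidden state passed to the next step
    return h, c

# Hypothetical weights, chosen by hand for illustration only
W = {"forget": (0.5, 0.1, 1.0), "input": (0.8, 0.2, 0.0),
     "output": (0.6, 0.3, 0.0), "candidate": (1.0, 0.4, 0.0)}
h, c = 0.0, 0.0
for x in [0.2, 0.4, 0.1, 0.9]:
    h, c = lstm_step(x, h, c, W)
```

The cell state `c` is updated additively. When the forget gate stays near 1 and the input gate near 0, information can persist across many steps, which is what lets LSTMs capture long-range dependencies.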

They excel when the data contains nonlinear patterns, multi-step interactions, or long-duration effects.

LSTMs scale to large volumes of sequential data, and typically need that volume to train well. In return, they can capture complex dynamics that traditional statistical models miss.

They are flexible enough to integrate external factors such as weather, promotions, or sensor signals.
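LSTMs consume fixed-length input sequences, so a series and its external drivers are usually reshaped into sliding windows first. The hypothetical `make_windows` below produces nested lists with the layout (samples, timesteps, features). This is the layout Keras' `LSTM` layer expects by default. The sales and promotion values are made-up example data.

```python
def make_windows(target, exog, lookback):
    """Return X with shape (samples, lookback, 2) and y with shape (samples,)."""
    if len(target) != len(exog):
        raise ValueError("target and exog must be aligned")
    X, y = [], []
    for t in range(lookback, len(target)):
        # each timestep carries two features: the past target value and the external signal
        X.append([[target[j], exog[j]] for j in range(t - lookback, t)])
        y.append(target[t])  # next value to predict
    return X, y

# Hypothetical daily sales with a 0/1 promotion flag
sales = [20, 22, 35, 21, 38, 23]
promo = [0, 0, 1, 0, 1, 0]
X, y = make_windows(sales, promo, lookback=2)
```

Some external factors are known in advance, such as a scheduled promotion. For these, the value at the forecast step itself can also be appended as an extra input.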

LSTMs are widely adopted in scenarios requiring high accuracy and adaptability in rapidly changing environments.

Example: Predicting stock market movements where price sequences depend on intricate short-term and long-term relationships.

Real-World Case Studies in Time Series Forecasting

Real-world case studies in time series forecasting showcase how organizations leverage temporal data to make accurate predictions and informed decisions.

These examples highlight applications across retail, transportation, energy, aviation, and healthcare, demonstrating tangible business and operational impact.

1. Amazon – Demand Forecasting for Inventory Optimization

Amazon uses advanced time-series models to anticipate product demand across thousands of warehouses.

By combining LSTM networks with traditional statistical baselines, Amazon predicts how customer preferences shift due to seasons, regional holidays, or global events.

These forecasts determine inventory placement, reducing delays and minimizing overstock.

Their system processes real-time signals like browsing patterns, weather forecasts, pricing changes, and supply chain disruptions.

This hybrid forecasting engine allows Amazon to maintain high service levels while reducing fulfillment costs.

2. Uber – Predicting Rider Demand and Surge Pricing

Uber applies Prophet-like models and custom deep learning architectures to predict how demand fluctuates across cities.

Their system considers location-based activity, special events, traffic flow, and temporal patterns.

Accurate forecasts help position drivers before peak hours and dynamically adjust rates to balance supply and demand.

Time series models also help detect anomalies such as unexpected traffic jams or city-wide outages, which keeps service available.

3. Power Grid Operators – Load and Energy Consumption Forecasting

Electricity providers rely on ARIMA, Holt–Winters, and LSTM networks to predict daily and hourly energy requirements.

These forecasts help balance generation and consumption, preventing power shortages or excessive production.

Renewable sources like wind and solar produce variable output, so forecasting that output supports the planning decisions that keep the grid stable.

Long-range forecasts also guide infrastructure investments and pricing strategies.

4. Airlines – Flight Pricing and Passenger Volume Forecasting

Airlines analyze long historical patterns to estimate passenger flow, fare fluctuations, and booking spikes.

Time series forecasting feeds into revenue management systems, adjusting prices dynamically.

Seasonal travel peaks, competitor actions, and global events influence predictions.

Forecasting accuracy directly affects profitability, as airlines must allocate aircraft appropriately and predict high-demand travel windows.

5. Healthcare – Disease Outbreak and Hospital Resource Forecasting

Hospitals use forecasting models to predict patient admissions, disease outbreak trends, and ICU occupancy.

During flu season or pandemics, LSTM-based models offer early warnings of rising cases.

These insights help hospitals allocate ventilators, beds, and staff efficiently.

Healthcare forecasting improves emergency response and reduces system overload during critical periods.

Implementation Best Practices for Time Series Forecasting

Implementation best practices for time series forecasting guide practitioners in building accurate, reliable, and maintainable models.

Following these strategies helps ensure that models handle temporal dynamics effectively and deliver actionable insights in real-world scenarios.

1. Ensure Proper Stationarity Checks

Time series models often require stationary data for reliable forecasting.

Techniques like differencing, log transforms, or seasonal adjustments help stabilize variance and mean.

Without stationarity, statistical models may misinterpret temporal dependencies and produce misleading results.

Even with deep learning methods, bringing the data closer to stationarity (or at least normalizing it) can improve convergence and reduce training instability.
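Formal checks such as the augmented Dickey–Fuller test (`adfuller` in `statsmodels.tsa.stattools`) are the usual tool. As a dependency-free illustration of what such tests look for, the heuristic below splits a series into equal segments and flags drift in the segment means or variances. The thresholds `mean_tol` and `var_ratio_max` are arbitrary assumptions, and the function names are hypothetical. This is not a substitute for a statistical test.

```python
from statistics import mean, pvariance

def segment_stats(series, n_segments=4):
    """Mean and variance of consecutive, equal-sized segments."""
    size = len(series) // n_segments
    segments = [series[k * size:(k + 1) * size] for k in range(n_segments)]
    return [(mean(s), pvariance(s)) for s in segments]

def looks_stationary(series, n_segments=4, mean_tol=0.5, var_ratio_max=2.0):
    """Heuristic: segment means within mean_tol overall std devs, variances within a ratio."""
    stats = segment_stats(series, n_segments)
    means = [m for m, _ in stats]
    variances = [v for _, v in stats]
    scale = pvariance(series) ** 0.5 or 1.0  # avoid dividing by zero for a constant series
    mean_spread = (max(means) - min(means)) / scale
    var_ratio = max(variances) / max(min(variances), 1e-12)
    return mean_spread <= mean_tol and var_ratio <= var_ratio_max
```

A linear trend fails this check, while its first difference passes. This mirrors why differencing is the standard first remedy.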

2. Use Rolling or Expanding Windows for Validation

Random train-test splits break temporal order and distort performance evaluation. Instead, use time-aware validation techniques such as walk-forward validation.

This approach simulates real-world deployment: training on past data and predicting the future.

This gives an accuracy estimate that reflects how well the model generalizes as patterns evolve.
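The sketch below generates expanding-window splits, or rolling ones when `window` is set, and scores a forecaster on each successive future step. The naive last-value forecaster is only a placeholder baseline. Any function with the same `(train, horizon) -> list` shape could be swapped in. All names here are illustrative.

```python
def walk_forward_splits(n, initial, horizon=1, step=1, window=None):
    """Yield (train_idx, test_idx); expanding window by default, rolling if `window` is set."""
    end = initial
    while end + horizon <= n:
        start = 0 if window is None else max(0, end - window)
        yield list(range(start, end)), list(range(end, end + horizon))
        end += step  # move the forecast origin forward in time

def walk_forward_mae(series, forecast_fn, initial, horizon=1):
    """Mean absolute error over all walk-forward test points."""
    errors = []
    for train_idx, test_idx in walk_forward_splits(len(series), initial, horizon):
        train = [series[i] for i in train_idx]   # only past data is visible
        preds = forecast_fn(train, len(test_idx))
        errors += [abs(series[i] - p) for i, p in zip(test_idx, preds)]
    return sum(errors) / len(errors)

def naive(train, h):
    """Baseline: repeat the last observed value."""
    return [train[-1]] * h
```

If scikit-learn is already in use, its `TimeSeriesSplit` implements a similar expanding-window scheme.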

3. Incorporate Exogenous Variables (External Features)

External signals such as weather, holidays, promotions, and economic indicators often influence time-based behavior significantly. Incorporating them enhances forecasting accuracy, especially in volatile environments.

Feature engineering for temporal data should include lag features, moving averages, rolling statistics, Fourier features for seasonality, and holiday embeddings.
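Here is a plain-Python sketch of those features for a daily series with weekly seasonality. The settings are illustrative assumptions: lags 1 and 7, a 7-step trailing mean, two Fourier harmonics, and a hypothetical set of holiday indices. Every value-based feature at step t uses only observations before t, so nothing leaks from the target being predicted.

```python
import math

def make_features(series, lags=(1, 7), roll=7, period=7.0, n_harmonics=2, holidays=frozenset()):
    """One feature dict per step t; steps without enough history are skipped."""
    start = max(max(lags), roll)
    rows = []
    for t in range(start, len(series)):
        row = {f"lag_{k}": series[t - k] for k in lags}             # lagged values
        row[f"roll_mean_{roll}"] = sum(series[t - roll:t]) / roll  # trailing mean of past values
        for n in range(1, n_harmonics + 1):                         # Fourier seasonal terms
            row[f"sin_{n}"] = math.sin(2 * math.pi * n * t / period)
            row[f"cos_{n}"] = math.cos(2 * math.pi * n * t / period)
        row["is_holiday"] = int(t in holidays)                      # hypothetical holiday flag
        row["target"] = series[t]
        rows.append(row)
    return rows
```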

4. Apply Seasonality Decomposition Before Modeling

Decomposing signals into trend, seasonal, and residual components helps reveal hidden structures. It guides method selection:

Strong seasonality → Holt–Winters, Prophet

Complex nonlinearities → LSTM/Transformer models

Decomposition also makes diagnostics easier and reduces noise affecting the model.
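Classical additive decomposition is simple enough to sketch directly. A centred moving average estimates the trend. Averaging the detrended values at each seasonal position gives the seasonal indices, and whatever is left is the residual. This is the textbook method, not the more robust STL procedure. statsmodels provides both, as `seasonal_decompose` and `STL` in `statsmodels.tsa.seasonal`. The sketch assumes at least two full periods of data, and the function name is hypothetical.

```python
def classical_decompose(series, period):
    """Additive decomposition into (trend, seasonal, residual); edges of trend are None."""
    n = len(series)
    half = period // 2
    trend = [None] * n
    for t in range(half, n - half):
        window = series[t - half:t + half + 1]
        if period % 2 == 1:
            trend[t] = sum(window) / period
        else:
            # 2 x period moving average: half weight on the two end points
            trend[t] = (0.5 * window[0] + sum(window[1:-1]) + 0.5 * window[-1]) / period
    detrended = {}
    for t in range(n):
        if trend[t] is not None:
            detrended.setdefault(t % period, []).append(series[t] - trend[t])
    idx = [sum(detrended[p]) / len(detrended[p]) for p in range(period)]
    shift = sum(idx) / period
    idx = [v - shift for v in idx]  # seasonal indices sum to zero
    seasonal = [idx[t % period] for t in range(n)]
    resid = [None if trend[t] is None else series[t] - trend[t] - seasonal[t] for t in range(n)]
    return trend, seasonal, resid
```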

5. Regularize and Smooth the Data When Needed

Sudden spikes or outliers can mislead forecasting models.

Techniques like winsorization, smoothing filters, or robust scaling mitigate noise.

Regularizing model weights or tuning smoothing parameters prevents overfitting and enhances long-term stability.
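As an example of the first technique, the sketch below winsorizes a series by clipping it to empirical quantiles, using 5% and 95% as assumed defaults. It splits fitting and applying into separate steps. In a forecasting pipeline, the bounds should be computed on the training window only and then reused, so that future values do not influence preprocessing. Function names are hypothetical.

```python
def quantile_bounds(train, lower_q=0.05, upper_q=0.95):
    """Nearest-rank quantile bounds computed on training data only."""
    ordered = sorted(train)
    last = len(ordered) - 1
    return ordered[round(lower_q * last)], ordered[round(upper_q * last)]

def winsorize(series, bounds):
    """Clip each value into [low, high]; isolated spikes are pulled back to the bound."""
    low, high = bounds
    return [min(max(v, low), high) for v in series]
```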

6. Ensemble Multiple Forecasting Models

Real-world time series rarely behave consistently. Combining ARIMA, exponential smoothing, and neural networks often yields more stable predictions.

Ensembles capture both linear and nonlinear dynamics, handle abrupt regime shifts better, and improve resilience during unexpected trend changes.
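A minimal way to combine models is a weighted average of their forecasts. The sketch below supports either equal weights or weights proportional to the inverse of each model's validation error. The model names and numbers are hypothetical. Inverse-error weighting is one common heuristic among several; stacking and taking the median are others.

```python
def inverse_error_weights(val_errors):
    """Weight each model by 1 / validation error, normalised to sum to 1."""
    inv = {name: 1.0 / max(err, 1e-12) for name, err in val_errors.items()}
    total = sum(inv.values())
    return {name: w / total for name, w in inv.items()}

def ensemble_forecast(forecasts, weights=None):
    """Combine per-model forecast lists step by step; equal weights if none given."""
    names = list(forecasts)
    if weights is None:
        weights = {name: 1.0 / len(names) for name in names}
    horizon = len(forecasts[names[0]])
    return [sum(weights[name] * forecasts[name][h] for name in names) for h in range(horizon)]

# Hypothetical forecasts from two models, with validation MAEs of 1.0 and 3.0
f = {"arima": [10.0, 12.0], "holt_winters": [14.0, 16.0]}
combined = ensemble_forecast(f, inverse_error_weights({"arima": 1.0, "holt_winters": 3.0}))
```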

7. Continuously Retrain and Monitor Model Drift

Time series patterns frequently evolve due to behavioral shifts, new competitors, policy changes, or external shocks.

Implement automated retraining loops and drift detection mechanisms.

Monitoring forecast error in production ensures timely adjustments and prevents long-term degradation.
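One lightweight form of drift detection compares the rolling error of live forecasts with the error measured during validation. The class below is a sketch with assumed settings: a 30-step window and a threshold of 1.5 times the baseline error. A real deployment would tune both and feed the flag into its retraining job. The class name is hypothetical.

```python
from collections import deque

class DriftMonitor:
    """Track rolling MAE of live forecasts; flag drift when it exceeds a multiple of the baseline."""

    def __init__(self, baseline_mae, window=30, threshold=1.5):
        self.baseline_mae = baseline_mae      # e.g. MAE from walk-forward validation
        self.threshold = threshold
        self.errors = deque(maxlen=window)    # only the most recent errors are kept

    def update(self, actual, forecast):
        self.errors.append(abs(actual - forecast))
        return self.drifting()

    def rolling_mae(self):
        return sum(self.errors) / len(self.errors) if self.errors else 0.0

    def drifting(self):
        # require a full window so a few early errors do not trigger retraining
        full = len(self.errors) == self.errors.maxlen
        return full and self.rolling_mae() > self.threshold * self.baseline_mae
```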

8. Optimize Forecast Horizon Based on Use Case

Short-term forecasts usually demand high precision, while long-term forecasts emphasize trend direction.

Aligning the horizon with business objectives helps select appropriate modeling techniques and loss functions.

Avoid forcing a single model to address both short- and long-range forecasting tasks simultaneously.
