Reinforcement Learning (RL) is a learning paradigm where an agent interacts with an environment, learns from feedback, and makes sequential decisions to maximize long-term rewards.

Unlike supervised learning, which requires labeled examples, RL hinges on exploration, trial-and-error, delayed feedback, and dynamic policy updates.

This makes it highly suitable for robotics, game-playing systems, autonomous navigation, and adaptive control applications.

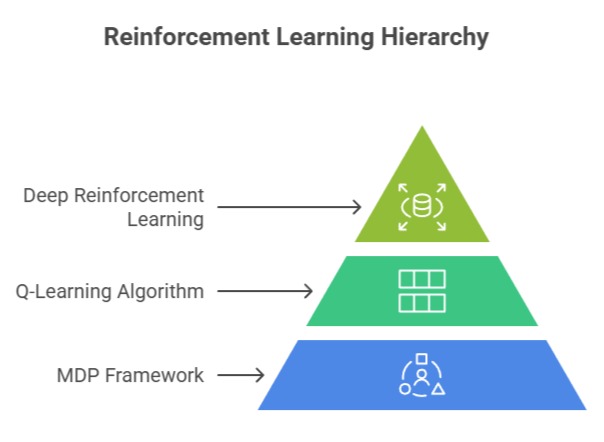

Markov Decision Processes (MDPs) provide the mathematical backbone for RL by defining states, actions, transition probabilities, and rewards.

Q-Learning, a value-based technique, teaches agents how to act optimally by estimating the expected return of actions without needing a model of the environment.

Deep Reinforcement Learning extends this by using neural networks to approximate high-dimensional value functions or policies, enabling RL to handle complex tasks like playing Atari games, controlling drones, or optimizing resource allocation in real-time systems.

Together, these components form the foundation of modern reinforcement learning pipelines, enabling machines to continuously improve through interaction, adaptation, and strategic decision-making.

Markov Decision Processes (MDPs)

Markov Decision Processes (MDPs) provide the mathematical foundation for modeling sequential decision-making in uncertain environments.

They define how agents interact with an environment and optimize actions to achieve long-term rewards.

1. State–Action–Reward Framework

MDPs formalize decision-making under uncertainty using a structured representation of states, actions, and rewards.

Each state describes the current situation of the agent, and each action influences the environment’s transition to the next state.

The model assumes the Markov property: the next state depends only on the current state and action, not on the entire history, which keeps the computation tractable.

This structure allows RL algorithms to compute optimal strategies using value functions or policies.

Such frameworks are essential in scenarios like elevator scheduling, where each decision (moving up or down) influences future states and total system efficiency.

For example, a robot navigating a warehouse needs an MDP to evaluate paths while balancing obstacles, battery usage, and travel time.
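
The state, action, and reward structure described above can be written down as a small lookup table. The sketch below is a hypothetical two-state warehouse MDP; the state names, actions, probabilities, and rewards are invented for illustration and do not come from a real system.

```python
import random

# Hypothetical two-state warehouse MDP (all names and numbers are illustrative).
# transitions[state][action] -> list of (probability, next_state, reward)
transitions = {
    "aisle": {
        "forward": [(0.9, "dock", 10.0), (0.1, "aisle", -1.0)],
        "wait": [(1.0, "aisle", -0.5)],
    },
    "dock": {
        "forward": [(1.0, "dock", 0.0)],
        "wait": [(1.0, "dock", 0.0)],
    },
}


def step(state, action, rng=random):
    """Sample (next_state, reward) using only the current state and action."""
    outcomes = transitions[state][action]
    weights = [p for p, _, _ in outcomes]
    _, next_state, reward = rng.choices(outcomes, weights=weights, k=1)[0]
    return next_state, reward
```

Because `step` reads nothing except the current state and action, it respects the Markov property by construction.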

2. Long-Term Reward Optimization

MDPs focus on maximizing cumulative utility rather than immediate gains, making them suitable for tasks requiring strategic foresight.

Discounting multiplies a reward received t steps in the future by γ^t, where the discount factor γ lies between 0 and 1, so future benefits still count while earlier rewards carry more weight.

This balance helps agents avoid greedy decisions that undermine long-term success.
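
A minimal sketch of the discounted return, assuming a discount factor of 0.9 and two made-up reward sequences, shows why a larger delayed reward can outweigh a small immediate one:

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of gamma**t * r_t over a reward sequence, computed back to front."""
    total = 0.0
    for r in reversed(rewards):
        total = r + gamma * total
    return total


greedy = [1.0, 0.0, 0.0, 0.0]   # small reward immediately (illustrative)
patient = [0.0, 0.0, 0.0, 5.0]  # larger reward three steps later (illustrative)
```

Here `discounted_return(greedy)` is 1.0, while `discounted_return(patient)` is about 3.645 (5 × 0.9³), so the patient sequence is preferred despite the delay.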

Value iteration and policy iteration algorithms leverage this structure to compute optimal policies systematically.

In supply chain management, for instance, an MDP helps determine the best restocking strategy by weighing operational costs against potential shortages.

The framework’s mathematical rigor makes it a reliable foundation for reinforcement learning models.
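
Value iteration can be sketched in a few lines for a finite MDP. The restocking model below is a toy assumption (two stock levels, two actions, invented costs), not data from a real supply chain; it only illustrates the mechanics.

```python
def value_iteration(transitions, gamma=0.9, tol=1e-8):
    """Return (values, greedy policy) for a finite MDP.

    transitions[s][a] is a list of (probability, next_state, reward).
    """
    values = {s: 0.0 for s in transitions}
    while True:
        delta = 0.0
        for s, actions in transitions.items():
            # Bellman optimality backup: best expected one-step lookahead
            best = max(
                sum(p * (r + gamma * values[s2]) for p, s2, r in outcomes)
                for outcomes in actions.values()
            )
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:
            break
    policy = {
        s: max(
            actions,
            key=lambda a: sum(p * (r + gamma * values[s2]) for p, s2, r in actions[a]),
        )
        for s, actions in transitions.items()
    }
    return values, policy


# Toy restocking MDP (hypothetical numbers): restocking costs 2, a shortage costs 5.
restock_mdp = {
    "low": {
        "restock": [(1.0, "stocked", -2.0)],
        "hold": [(1.0, "low", -5.0)],
    },
    "stocked": {
        "restock": [(1.0, "stocked", -2.0)],
        "hold": [(0.5, "stocked", 1.0), (0.5, "low", 1.0)],
    },
}

values, policy = value_iteration(restock_mdp)
```

Under these assumed numbers the resulting policy restocks when stock is low and holds when it is stocked, which is the kind of cost-versus-shortage trade-off the text describes.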

Q-Learning

Q-Learning is a fundamental reinforcement learning algorithm that enables agents to learn optimal actions through interaction with an environment.

It builds decision-making knowledge directly from experience without requiring a predefined model.

1. Model-Free Value Estimation

Q-Learning helps an agent learn optimal behaviors without needing explicit environmental knowledge, making it extremely practical in unpredictable settings.

The algorithm maintains a Q-table that stores the expected return of taking specific actions in various states.

Through repeated exploration, the table is updated using temporal-difference learning: each update nudges a Q-value toward the observed reward plus the discounted value of the best action available in the next state.

This method is highly effective for discrete state–action spaces, such as grid navigation or basic industrial automation.

For example, a simple cleaning robot uses Q-Learning to learn where to move next to minimize energy and maximize surface coverage, even without a predefined map.
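
The update described above can be sketched as tabular Q-learning on a tiny environment. The five-cell corridor, the reward of 1.0 at the goal, and the hyperparameter values are all assumptions chosen to keep the example small.

```python
import random
from collections import defaultdict

N_STATES = 5        # corridor cells 0..4; cell 4 is the goal (illustrative)
ACTIONS = (-1, +1)  # move left or move right


def env_step(state, action):
    """Hypothetical deterministic corridor: reward 1.0 on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = defaultdict(float)  # Q-table keyed by (state, action), initialised to 0
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore
            else:
                # exploit, breaking ties at random so early episodes still move
                best = max(q[(state, a)] for a in ACTIONS)
                action = rng.choice([a for a in ACTIONS if q[(state, a)] == best])
            next_state, reward, done = env_step(state, action)
            # TD target: reward plus discounted value of the best next action
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q
```

Calling `train()` returns a table in which moving right has the higher Q-value in every non-goal cell; the agent never consults a model of the corridor, only the transitions it experiences.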

2. Balancing Exploration and Exploitation

Q-Learning relies on strategies like epsilon-greedy selection to ensure the agent explores new possibilities while still leveraging known optimal actions.

Managing this balance is critical because insufficient exploration restricts learning, while excessive exploration slows progress.

Over time, the exploration rate decays, allowing the agent to solidify a stable, high-performing policy.

This dynamic trade-off is crucial in applications like financial trading bots, where exploring new strategies helps discover profitable patterns while exploiting known ones maintains stable returns.

The method’s adaptability makes it a staple in many RL-based control systems.
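
A minimal sketch of epsilon-greedy selection with a decaying exploration rate follows. The exponential schedule and its default values (`start`, `end`, `decay`) are assumptions; other schedules, such as linear decay, are equally common.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick a random action index with probability epsilon, else the best one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda i: q_values[i])


def decayed_epsilon(step, start=1.0, end=0.05, decay=0.995):
    """Exponential decay from `start` toward a floor of `end` (assumed schedule)."""
    return max(end, start * decay ** step)
```

Keeping a small floor such as `end=0.05` means the agent never stops exploring entirely, which can help when the environment changes after training has started.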

Deep Reinforcement Learning (DRL)

Deep Reinforcement Learning (DRL) combines reinforcement learning with deep neural networks to solve complex decision-making problems.

It enables agents to learn directly from high-dimensional inputs and develop sophisticated policies through experience.

1. Neural Network Function Approximation

Deep Reinforcement Learning enhances traditional RL methods by using deep neural networks to approximate value functions or policies, allowing the system to process high-dimensional sensory inputs.

Instead of manually defining features, the network automatically extracts meaningful patterns from images, audio, or complex signals.

This allows DRL techniques like Deep Q-Networks (DQNs) to excel in tasks where the input space is too large for tabular methods.

For example, a DQN can learn to play Atari games directly from raw pixel data, identifying objects, movements, and game rules through interaction.

This ability to handle rich inputs elevates RL to tackle real-world challenges such as autonomous navigation or drone control.
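
The core idea of function approximation is that learned parameters replace the Q-table. The sketch below uses a linear model in plain Python as a stand-in for a deep network, which is a deliberate simplification: a real DQN would use a neural network and a deep learning library, but the update has the same shape (move the prediction toward a TD target by adjusting weights).

```python
def q_value(weights, features):
    """Linear estimate of Q for one state-action pair, given its feature vector."""
    return sum(w * x for w, x in zip(weights, features))


def td_update(weights, features, target, lr=0.1):
    """One semi-gradient step that moves q_value(weights, features) toward target."""
    error = target - q_value(weights, features)
    # For a linear model the gradient with respect to each weight is its feature
    return [w + lr * error * x for w, x in zip(weights, features)]
```

Because similar inputs share weights, an update for one state also shifts the estimates for related states, which is what lets approximation scale where a table cannot.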

2. Stable Learning With Advanced Mechanisms

DRL incorporates strategies like experience replay and target networks to maintain learning stability and prevent divergence.

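Experience replay stores past interactions in a buffer and samples them at random, which enables batch learning and decorrelates consecutive observations. A target network is a periodically updated copy of the main network that is used to compute the learning targets, so the targets do not shift with every weight update.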

These mechanisms significantly improve reliability, especially in volatile environments where feedback changes rapidly.

DRL powers breakthroughs such as AlphaGo, robotic manipulation systems, and real-time resource optimization in cloud computing environments.

Its capacity to integrate perception and decision-making into a unified framework makes it indispensable for modern AI.
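
Both stabilizing mechanisms can be sketched without a deep learning library. In the example below, the `ReplayBuffer` class and the `sync_target` helper are hypothetical names, and network parameters are represented as a plain dictionary of numbers purely for illustration.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of (state, action, reward, next_state, done) tuples."""

    def __init__(self, capacity, seed=None):
        self.memory = deque(maxlen=capacity)  # oldest transitions drop out first
        self.rng = random.Random(seed)

    def push(self, transition):
        self.memory.append(transition)

    def sample(self, batch_size):
        # Uniform sampling breaks up the correlation between consecutive steps
        return self.rng.sample(list(self.memory), batch_size)

    def __len__(self):
        return len(self.memory)


def sync_target(online_params, target_params):
    """Copy the online parameters into the target copy (called every N steps)."""
    target_params.clear()
    target_params.update(online_params)
```

Between calls to `sync_target`, the target parameters stay frozen while the online parameters keep changing, which is what keeps the learning targets steady.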

Real-World Case Studies in Reinforcement Learning

Reinforcement learning techniques are increasingly applied to solve complex, real-world decision-making and control problems.

These case studies illustrate how different RL methods translate theoretical concepts into practical, high-impact solutions across industries.

Case Study 1: Traffic Signal Optimization Using Markov Decision Processes (MDPs)

A large metropolitan city deployed an MDP-based decision system to regulate traffic signals across major intersections.

Each junction was represented as a state, with actions corresponding to changing signal durations or prioritizing particular lanes.

The system used real-time congestion data and probabilistic transition dynamics to predict upcoming traffic flows.

By optimizing cumulative reward (here, minimizing total waiting time), the MDP-based policy reduced long queues during peak hours.

Over weeks of adaptation, the policy learned to balance flow across multiple connected intersections, proving more effective than rule-based timing loops.

The result was smoother commute times, fuel savings, and a measurable decrease in bottlenecks across the network. This demonstrated how mathematically structured planning can transform urban mobility.

Case Study 2: Autonomous Warehouse Robots Using Q-Learning

A major e-commerce company deployed autonomous robots for shelf retrieval and delivery, relying on Q-Learning to navigate complex warehouse layouts.

The environment was modeled as a grid where each location represented a state, and movement directions formed the action set. Initially, robots explored pathways randomly, updating Q-values based on time efficiency and collision avoidance rewards.

Over time, they learned optimal routes that minimized travel distance and avoided narrow aisles where congestion occurred.

The Q-Learning framework adapted quickly when shelves were rearranged, without requiring a fully rebuilt navigation system.

This reduced manual labor, accelerated order processing, and maintained operational fluidity during high-demand seasons.

The model-free nature of Q-Learning proved ideal for dynamic logistics environments.

Case Study 3: Deep Reinforcement Learning for Video Game AI (Atari & 3D Games)

A gaming company integrated Deep Reinforcement Learning to build intelligent agents capable of mastering complex environments such as racing and strategy-based games.

Using Deep Q-Networks (DQNs), the system processed raw pixel input from gameplay, extracting visual features and learning high-level strategies through repeated simulation.

Experience replay buffers and target networks stabilized learning, allowing the agent to surpass human performance in tasks like lane control, enemy avoidance, and resource management.

The agent adapted its behavior based on evolving game scenarios, learning to anticipate obstacles and exploit game mechanics.

This not only improved NPC behavior realism but also assisted developers in stress-testing difficult game levels.

The success validated DRL as a powerful tool for interactive entertainment design.
