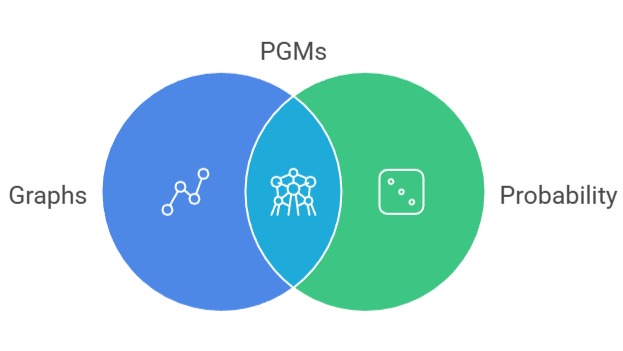

Probabilistic Graphical Models (PGMs) offer a structured way to represent complex probability distributions using graphs, allowing dependencies among variables to be modeled more transparently.

They simplify reasoning under uncertainty by decomposing large joint distributions into smaller, more manageable components.

PGMs combine graph theory with probability theory to capture relationships that may not be explicitly observable but influence outcomes indirectly.

These models excel in scenarios where multiple factors interact, such as medical diagnosis, speech recognition, and risk analysis. By encoding conditional independence, PGMs reduce computational complexity during inference.

They are widely used to build explainable machine learning systems where transparency is valued as much as accuracy.

Their adaptable nature makes them suitable for dynamic environments where knowledge evolves over time.

Probabilistic Graphical Models (PGMs)

Probabilistic graphical models represent complex variable dependencies using graphs, enabling efficient reasoning and inference under uncertainty.

1. Representation of Complex Dependencies

PGMs use nodes to represent variables and edges to represent relationships, allowing intricate patterns of influence to be visualized intuitively.

The graph structure helps identify which variables directly interact and which relationships can be ignored without losing essential information.

This selective modeling reduces dimensionality, making probability computations more tractable.

Many real-world systems exhibit layered or interconnected causes, and PGMs capture these without forcing restrictive assumptions.

Because of this expressiveness, they are commonly applied to environments involving uncertainty, such as robotics and forecasting.

The approach enables analysts to investigate how altering one variable influences the rest of the system.

This form of structured reasoning aids in understanding both causal and associative behaviors.

2. Conditional Independence Simplification

One of the most powerful concepts in PGMs is the use of conditional independence to reduce the computational burden of probability estimation.

Instead of assessing an entire joint distribution, the model focuses on smaller partitions that interact conditionally.

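These independence rules shrink the number of values that must be stored and computed: a full joint table over n binary variables needs 2^n - 1 entries, whereas a factorized model needs only one small conditional table per variable, sized by that variable's number of parents.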

This reduction ensures faster inference and more scalable model deployment.

By eliminating unnecessary dependencies, PGMs prevent noise or irrelevant correlations from affecting predictions.

This selective focus provides clearer interpretability and stability. Many probabilistic algorithms rely heavily on these independence assumptions to achieve efficient reasoning.
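To make the saving concrete, the sketch below counts free parameters for a full joint table versus a factorized model. The five-node network and its variable names are hypothetical, chosen only for illustration, and every variable is assumed to be binary.

```python
def full_joint_parameters(num_vars: int) -> int:
    """Free parameters in a full joint table over binary variables."""
    return 2 ** num_vars - 1


def factored_parameters(parents: dict[str, list[str]]) -> int:
    """Free parameters when each binary node depends only on its parents.

    A binary node with k binary parents needs one probability per
    parent configuration, i.e. 2**k numbers.
    """
    return sum(2 ** len(node_parents) for node_parents in parents.values())


# Hypothetical network: each key maps a node to its list of parents.
parents = {
    "Burglary": [],
    "Earthquake": [],
    "Alarm": ["Burglary", "Earthquake"],
    "JohnCalls": ["Alarm"],
    "MaryCalls": ["Alarm"],
}

print(full_joint_parameters(len(parents)))  # 31
print(factored_parameters(parents))         # 10
```

The gap widens quickly: the full table doubles with every added variable, while the factorized count grows only with the number of nodes and the size of each parent set.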

3. Inference and Probabilistic Reasoning

PGMs support both exact and approximate inference, enabling practitioners to compute probabilities, make predictions, or identify the most likely explanation for observed data.

Exact methods like variable elimination work well for smaller or sparsely connected networks, while approximate techniques such as Monte Carlo sampling handle larger, densely connected structures where exact computation becomes intractable.

Inference allows systems to generate insights even when some values are missing or uncertain, making PGMs suitable for incomplete datasets.

They also help in determining the likelihood of hidden variables that influence observed outcomes.

This predictive power is particularly useful for domains like fault detection and decision support systems.

By enabling dynamic reasoning, PGMs adapt to new evidence and update beliefs efficiently.
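As a minimal sketch of exact inference, the code below answers a query by enumeration: it sums the joint probability over the unobserved variable and normalizes. The rain/sprinkler/wet-grass network and all probabilities are assumptions made up for illustration. Enumeration is the simplest exact method and only scales to small networks.

```python
from itertools import product

# Hypothetical conditional probability tables (all numbers are assumptions).
P_RAIN = 0.2                                       # P(rain)
P_SPRINKLER_GIVEN_RAIN = {True: 0.01, False: 0.4}  # P(sprinkler | rain)
P_WET_GIVEN = {                                    # P(wet | sprinkler, rain)
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.8,
    (False, False): 0.0,
}


def bernoulli(p_true: float, value: bool) -> float:
    """Probability of `value` for a binary variable with P(True) = p_true."""
    return p_true if value else 1.0 - p_true


def joint(rain: bool, sprinkler: bool, wet: bool) -> float:
    """Joint probability as a product of the three local tables."""
    return (
        bernoulli(P_RAIN, rain)
        * bernoulli(P_SPRINKLER_GIVEN_RAIN[rain], sprinkler)
        * bernoulli(P_WET_GIVEN[(sprinkler, rain)], wet)
    )


def posterior_rain_given_wet(wet: bool = True) -> float:
    """P(rain | wet), summing out the unobserved sprinkler variable."""
    scores = {True: 0.0, False: 0.0}
    for rain, sprinkler in product([True, False], repeat=2):
        scores[rain] += joint(rain, sprinkler, wet)
    return scores[True] / (scores[True] + scores[False])


print(round(posterior_rain_given_wet(True), 3))  # 0.358
```

Observing wet grass raises the belief in rain from the prior of 0.2 to about 0.36, which is the kind of belief update the section describes.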

4. Learning Model Structure from Data

PGMs can learn both structure and parameters from data, enabling models to uncover hidden relationships without manual intervention.

Structure learning identifies which variables should be connected, revealing patterns that may not be immediately obvious.

This capability is valuable when working with large datasets in domains such as genomics or finance, where relationships evolve or are initially unknown.

Parameter learning estimates the strength of these relationships, refining probabilistic interactions.

Together, these techniques allow PGMs to serve as data-driven knowledge discovery tools.

The learned graphs often provide domain experts with interpretable insights that classical models fail to offer. This combination of learning and inference makes PGMs highly adaptive.
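Parameter learning can be illustrated with simple counting. The sketch below assumes the structure is already fixed (a single edge from a hypothetical `smoker` variable to a hypothetical `cough` variable) and estimates the conditional table by maximum likelihood from a tiny made-up dataset. Structure learning is a harder search problem and is not shown here.

```python
from collections import Counter


def estimate_cpt(records, child, parent):
    """Maximum-likelihood estimate of P(child=True | parent=value).

    `records` is a list of dicts with boolean values. Parent values that
    never occur in the data are simply absent from the result.
    """
    totals = Counter()
    positives = Counter()
    for row in records:
        totals[row[parent]] += 1
        if row[child]:
            positives[row[parent]] += 1
    return {value: positives[value] / totals[value] for value in totals}


# Made-up observations, for illustration only.
records = [
    {"smoker": True, "cough": True},
    {"smoker": True, "cough": True},
    {"smoker": True, "cough": False},
    {"smoker": False, "cough": False},
    {"smoker": False, "cough": True},
    {"smoker": False, "cough": False},
    {"smoker": False, "cough": False},
]

cpt = estimate_cpt(records, child="cough", parent="smoker")
print(cpt)  # relative frequency of cough among smokers and non-smokers
```

With so few rows the estimates are noisy. In practice a smoothing prior (for example, adding pseudo-counts) is often used to avoid zero probabilities.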

Example: Disease Diagnosis System

In a medical diagnosis scenario, PGMs can connect symptoms, patient history, environmental exposure, and hidden conditions in a probabilistic framework.

If a patient reports a set of symptoms, the model computes the likelihood of various diseases by analyzing the connected dependencies.

Conditional independence helps ignore irrelevant factors, focusing only on meaningful relationships.

As new symptoms or test results arrive, the system updates probabilities in real time, improving diagnostic accuracy.

This dynamic reasoning assists doctors in identifying possible conditions more efficiently.

Such models are used in decision-support platforms that require transparent and interpretable logic. PGMs thus provide a robust foundation for risk assessment in healthcare.
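The incremental updating described above can be sketched with a deliberately simple model: one disease node whose findings are assumed conditionally independent given the disease. The disease names, findings, and probabilities below are invented for illustration and are not clinical values.

```python
# Hypothetical prior over conditions and P(finding present | condition).
PRIOR = {"flu": 0.10, "cold": 0.30, "healthy": 0.60}
LIKELIHOOD = {
    "fever": {"flu": 0.90, "cold": 0.20, "healthy": 0.01},
    "cough": {"flu": 0.80, "cold": 0.70, "healthy": 0.05},
}


def update(belief, finding, present):
    """Return the posterior over conditions after one new finding.

    Applies Bayes' rule: multiply each belief by the likelihood of the
    finding under that condition, then renormalize.
    """
    unnormalized = {}
    for condition, prob in belief.items():
        p_finding = LIKELIHOOD[finding][condition]
        unnormalized[condition] = prob * (p_finding if present else 1.0 - p_finding)
    total = sum(unnormalized.values())
    return {condition: value / total for condition, value in unnormalized.items()}


belief = update(PRIOR, "fever", present=True)   # first finding arrives
belief = update(belief, "cough", present=True)  # a later finding refines it
print(max(belief, key=belief.get))  # flu
```

Because the findings are assumed independent given the condition, updating one finding at a time gives the same result as processing all of them at once.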

Bayesian Networks

Bayesian networks model probabilistic relationships between variables using directed acyclic graphs to support reasoning and decision-making under uncertainty.

1. Directed Acyclic Graph Structure

Bayesian Networks (BNs) represent variables using a directed acyclic graph (DAG), where each arrow marks a direct probabilistic dependence of one node on another. These arrows are often, though not necessarily, read as causal influences.

The absence of cycles ensures that dependencies follow a logical progression, enabling structured reasoning from causes to effects.

This directional representation makes BNs ideal for modeling sequences of events, risk paths, and hierarchical systems.

The DAG also clarifies which variables act as predictors and which outcomes they influence.

These directional relationships enable clearer interpretability and support causal thinking.

As a result, Bayesian Networks are widely applied in fields where cause-effect understanding is essential.

Their structure enables models that are both explainable and mathematically rigorous.
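A minimal sketch of the acyclicity requirement: the function below takes a parent map and returns the nodes in a parents-first order, or reports a cycle. It relies on `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later). The node names are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter


def causal_order(parents: dict[str, list[str]]) -> list[str]:
    """Return nodes ordered so every parent precedes its children.

    `parents` maps each node to the nodes with an arrow into it.
    Raises ValueError if the graph contains a directed cycle.
    """
    try:
        return list(TopologicalSorter(parents).static_order())
    except CycleError as err:
        # The detected cycle is the second element of the exception args.
        raise ValueError(f"not a DAG, cycle found: {err.args[1]}") from err


# Hypothetical network for illustration.
network = {
    "Burglary": [],
    "Earthquake": [],
    "Alarm": ["Burglary", "Earthquake"],
    "Call": ["Alarm"],
}
print(causal_order(network))
```

A valid ordering exists exactly when the graph has no directed cycle, which is why the same check doubles as a DAG validator.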

2. Joint Probability Factorization

Bayesian Networks simplify complex joint distributions by factorizing them into a product of conditional probabilities.

This decomposition drastically reduces the computational complexity associated with high-dimensional data.

Each node is conditioned only on its parent nodes rather than the entire variable set, enabling more efficient learning and inference.

This factorization also enhances clarity by revealing how each factor contributes to the overall probability distribution.

Practitioners can easily identify which relationships have the strongest influence.

This structured breakdown supports more scalable and interpretable model deployment.

Factorization remains one of the core advantages of Bayesian Networks in large-scale predictive systems.
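The factorization can be written out as follows. The notation Pa(X_i) for the parent set of node X_i is a common convention assumed here, and the four-variable example network is hypothetical.

```latex
% General form: each node is conditioned only on its parents.
P(X_1, \dots, X_n) = \prod_{i=1}^{n} P\bigl(X_i \mid \mathrm{Pa}(X_i)\bigr)

% Example: Burglary -> Alarm <- Earthquake, Alarm -> Call
P(B, E, A, C) = P(B)\, P(E)\, P(A \mid B, E)\, P(C \mid A)
```

Each term on the right is a small local table, so the size of the model depends on the largest parent set rather than on the total number of variables.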

3. Handling Missing and Noisy Information

Bayesian Networks excel in real-world settings where data is incomplete, uncertain, or noisy.

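Because BNs integrate probabilistic reasoning with structured dependencies, they handle missing information naturally: unobserved variables are summed out (marginalized), and the remaining evidence still informs the query through other paths in the graph.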

This resilience is especially beneficial in sectors such as cybersecurity, medical testing, or sensor-based monitoring.

The model intelligently distributes probabilities even when certain measurements are unavailable.

Moderate noise in recorded values tends to degrade predictions gradually rather than abruptly, as the network relies on aggregated evidence from related variables.

This flexibility ensures reliable decision-making under imperfect conditions. BNs therefore become valuable tools in mission-critical systems that require dependable output.
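The sketch below shows one way missing readings can be handled, assuming a hypothetical fault node with two sensor children that are conditionally independent given the fault state. All probabilities are invented for illustration. A missing reading is marked `None` and marginalized out, which in this structure amounts to skipping its factor.

```python
# Hypothetical model: Fault -> sensor_a, Fault -> sensor_b.
P_FAULT = 0.05
P_ALERT = {  # P(sensor alerts | fault state)
    "sensor_a": {True: 0.95, False: 0.10},
    "sensor_b": {True: 0.85, False: 0.05},
}


def posterior_fault(readings):
    """P(fault | readings), where readings maps sensor -> True, False, or None.

    None marks a missing reading. Summing a sensor's factor over both of
    its outcomes gives 1, so a missing sensor contributes nothing.
    """
    score = {True: P_FAULT, False: 1.0 - P_FAULT}
    for sensor, value in readings.items():
        if value is None:
            continue  # marginalized out
        for fault in (True, False):
            p_alert = P_ALERT[sensor][fault]
            score[fault] *= p_alert if value else 1.0 - p_alert
    return score[True] / (score[True] + score[False])


print(posterior_fault({"sensor_a": True, "sensor_b": None}))  # one reading missing
print(posterior_fault({"sensor_a": True, "sensor_b": True}))  # both available
```

With no readings at all the function returns the prior, and each available reading moves the estimate further, so the model degrades gracefully as data goes missing.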

4. Causal Reasoning and Decision Support

One of the strongest advantages of Bayesian Networks is their ability to model causal influence, making them useful for decision-making and scenario analysis.

Interventions can be simulated by fixing a node to a chosen value and removing the influence of its parents, allowing practitioners to explore how the change propagates to downstream variables.

This supports policy planning, safety assessments, and process optimization across industries.

Causal relationships help differentiate correlation from actual influence, improving reliability of decisions.

Organizations use BNs to compare outcomes under multiple hypothetical situations, enhancing strategic planning.

By grounding decisions in probabilistic evidence, BNs reduce uncertainty and provide actionable recommendations.

Their causal clarity makes them indispensable in controlled decision environments.
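The difference between observing a value and intervening on it can be sketched with a three-node network in which a hypothetical confounder Z influences both an action X and an outcome Y. The numbers are assumptions, and the result is only meaningful if the arrows really reflect the causal structure.

```python
# Hypothetical network: Z -> X, Z -> Y, X -> Y (all variables binary).
P_Z = 0.5                             # P(Z=True)
P_X_GIVEN_Z = {True: 0.8, False: 0.2}  # P(X=True | Z)
P_Y_GIVEN_XZ = {                       # P(Y=True | X, Z), keyed by (x, z)
    (True, True): 0.9,
    (True, False): 0.5,
    (False, True): 0.6,
    (False, False): 0.2,
}


def bernoulli(p_true, value):
    """Probability of `value` for a binary variable with P(True) = p_true."""
    return p_true if value else 1.0 - p_true


def observe(x):
    """P(Y=True | X=x): conditioning, so X also carries information about Z."""
    numerator = denominator = 0.0
    for z in (True, False):
        weight = bernoulli(P_Z, z) * bernoulli(P_X_GIVEN_Z[z], x)
        numerator += weight * P_Y_GIVEN_XZ[(x, z)]
        denominator += weight
    return numerator / denominator


def intervene(x):
    """P(Y=True | do(X=x)): X is set externally, so the Z -> X edge is cut."""
    return sum(bernoulli(P_Z, z) * P_Y_GIVEN_XZ[(x, z)] for z in (True, False))


print(f"{observe(True):.2f}")    # 0.82
print(f"{intervene(True):.2f}")  # 0.70
```

The observational figure is higher because seeing X=True makes Z=True more likely, and Z independently raises Y. The interventional figure removes that confounded path and isolates the effect of X itself.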

Example: Credit Risk Evaluation

In credit scoring, a Bayesian Network can link applicant information such as income, repayment history, employment stability, and economic trends to estimate the probability of default.

Each factor contributes to the final prediction based on its conditional influence. If new information emerges, such as recent payment delays, the model updates the probability distribution dynamically.

This provides financial institutions with flexible, adaptive risk assessments.

The interpretability allows analysts to justify decisions, which is crucial in regulated industries.

Bayesian Networks also help identify hidden risk patterns that traditional models may overlook. Because each dependency is explicit, analysts can inspect the model for biased pathways, although the structure alone does not guarantee fair decisions.
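A toy version of the credit example is sketched below. The structure (job stability and an economic downturn as parents of default, with a recent payment delay as a child of default) and every probability are assumptions for illustration, not values from any real scoring model.

```python
# Hypothetical network: StableJob -> Default <- Downturn, Default -> RecentDelay.
P_DOWNTURN = 0.2
P_DEFAULT = {  # P(default | stable_job, downturn)
    (True, True): 0.10,
    (True, False): 0.03,
    (False, True): 0.35,
    (False, False): 0.15,
}
P_DELAY_GIVEN_DEFAULT = {True: 0.7, False: 0.1}  # P(recent delay | default)


def bernoulli(p_true, value):
    """Probability of `value` for a binary variable with P(True) = p_true."""
    return p_true if value else 1.0 - p_true


def default_probability(stable_job, recent_delay=None):
    """P(default | stable_job, recent_delay), summing out the downturn node.

    Pass recent_delay=None when payment behaviour has not been observed.
    """
    score = {True: 0.0, False: 0.0}
    for downturn in (True, False):
        for default in (True, False):
            weight = bernoulli(P_DOWNTURN, downturn)
            weight *= bernoulli(P_DEFAULT[(stable_job, downturn)], default)
            if recent_delay is not None:
                weight *= bernoulli(P_DELAY_GIVEN_DEFAULT[default], recent_delay)
            score[default] += weight
    return score[True] / (score[True] + score[False])


before = default_probability(stable_job=True)
after = default_probability(stable_job=True, recent_delay=True)
print(f"{before:.3f} -> {after:.3f}")  # estimate rises once a delay is observed
```

Each table can be read and challenged on its own, which is the interpretability benefit described above for regulated settings.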