As modern organizations generate unprecedented volumes of data from sensors, transactions, digital platforms, and enterprise systems, the need for scalable and efficient machine learning development has intensified.

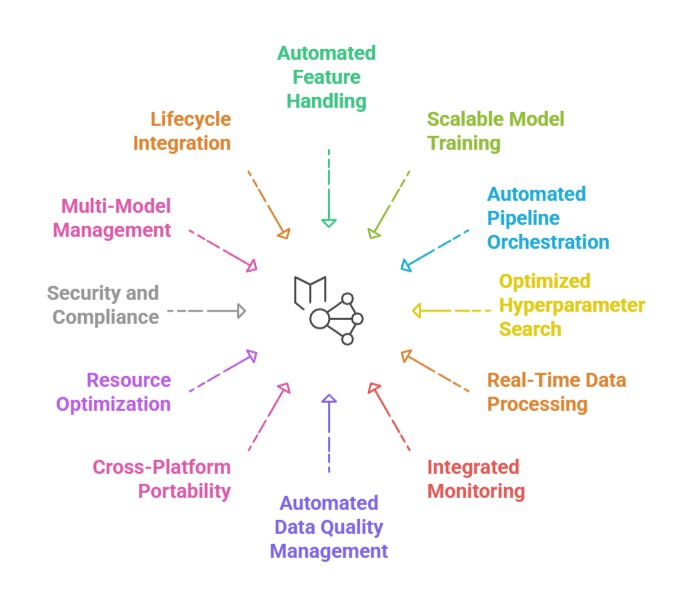

AutoML for large-scale environments addresses this demand by enabling automated model creation, feature handling, and end-to-end pipeline management over massive, fast-growing datasets.

Instead of manually tuning algorithms or engineering step-by-step workflows, AutoML orchestrates resource allocation, distributed processing, parallel experimentation, and adaptive optimization, ensuring that ML systems remain robust even when data expands into terabytes or petabytes.

These automated mechanisms reduce engineering bottlenecks, accelerate experimentation cycles, and ensure that data operations remain consistent across diverse workflows.

Today, large-scale AutoML is widely adopted in domains such as fraud analytics, real-time personalization, industrial monitoring, and scientific computing where large datasets require rapid and repeatable modeling processes.

By integrating automation with distributed computing frameworks like Apache Spark, Kubernetes, or Ray, AutoML pipelines can seamlessly scale with business needs and maintain reliable performance.

Importance of AutoML for Large-scale Data and ML Pipelines

1. Automated Feature Handling for Massive Datasets

AutoML systems for large-scale pipelines automatically handle feature extraction, missing value strategies, transformations, and correlation detection over enormous datasets without manual intervention.

These systems scan distributed data chunks, identify signal-rich attributes, and apply optimization strategies that avoid redundant computations.

For instance, Google’s AutoML Tables uses automated feature crosses and target encoding at big-data scale to accelerate enterprise modeling.

AutoML also manages drift-aware updates, recalibrating features when data patterns shift.

By reducing manual feature engineering time, organizations can deploy models faster and maintain consistent accuracy even as datasets evolve.

This automation proves essential in industries like e-commerce, where millions of customer interactions continuously update the dataset.

2. Scalable Model Training Across Distributed Compute

Large-scale AutoML automatically allocates compute resources and distributes training tasks across clusters, improving training speed and handling large datasets that exceed single-machine capacity.

Distributed execution engines coordinate hyperparameter exploration, model evaluation, and resource balancing without developer intervention.

For example, H2O Driverless AI uses GPU acceleration and multi-node training to produce high-performance models within minutes for telecommunications churn prediction involving millions of records.

This distributed strategy ensures reliability and removes the strain of manual configuration.

As a result, workloads become both faster and more cost-efficient, enabling real-time experimentation at organizational scale.

3. Automated Pipeline Orchestration and Workflow Consistency

AutoML platforms generate complete ML pipelines—including data ingestion, preprocessing, model training, validation, and deployment—while maintaining uniform standards across production environments.

These pipelines integrate CI/CD practices, ensuring automated version tracking and reproducibility.

For example, Azure AutoML orchestrates pipelines that connect with Data Factory and ML Ops components to create consistent production-ready flows.

The automation also prevents human error by enforcing standardized preprocessing and evaluation protocols.

This helps enterprises avoid fragmented processes and ensures that ML lifecycle steps are synchronized across teams and environments.

4. Optimized Hyperparameter and Architecture Search at Scale

Hyperparameter optimization becomes increasingly difficult with large datasets, but AutoML platforms use efficient techniques like Bayesian optimization, population-based training, or Multi-Fidelity search to accelerate convergence.

These methods allow systems to prune poor-performing configurations early, reducing computation costs.

For example, Amazon SageMaker Autopilot identifies optimal learning rates, tree depths, or neural architecture variations while evaluating models across distributed trials.

This scalable search process is crucial for domains such as real-time ad ranking where model responsiveness directly impacts revenue.

The automation ensures accuracy improvements without overwhelming computational overhead.

5. Real-Time Data Processing for Streaming ML Pipelines

In environments where data arrives continuously such as IoT networks, finance, or supply chains AutoML supports streaming pipelines that update models in near real-time.

Automated drift detectors identify shifts in incoming data and trigger re-training or recalibration workflows.

For example, Uber’s Michelangelo platform leverages automated refresh pipelines for surge-pricing predictions, ensuring models remain responsive to live activity.

The automation ensures continuous alignment between evolving data patterns and deployed models.

This ability is essential for mission-critical systems that depend on immediate inference quality.

6. Integrated Monitoring and Automated Re-training

AutoML frameworks include built-in monitoring tools that track model performance, data consistency, and pipeline health.

When metrics indicate degradation, AutoML triggers retraining events, updates the model registry, and redeploys improved versions seamlessly.

For instance, LinkedIn employs automated retraining workflows for job-recommendation engines, allowing models to adapt to new hiring trends without downtime.

Automated oversight reduces operational burden and minimizes the risk of outdated predictions.

Such closed-loop automation ensures that ML systems remain performant and aligned with business requirements.

7. Automated Data Quality Management for Large Pipelines

AutoML systems built for enterprise-scale environments incorporate advanced data validation routines that automatically scan massive datasets for anomalies, schema mismatches, duplication bursts, and inconsistent formats.

These automated checks prevent defective data from reaching downstream models, reducing the chances of skewed outputs.

For example, TFX (TensorFlow Extended) integrates AutoML with data validation components that automatically flag abnormal spikes in e-commerce order logs before feeding them into recommendation models.

This continuous quality monitoring helps teams maintain reliability without manually inspecting millions of entries.

By automating data integrity checks, organizations ensure stable, repeatable training cycles even when data volume or structure changes rapidly.

8. Cross-Platform Pipeline Portability and Deployment Automation

Modern AutoML frameworks simplify deployment by generating portable pipelines that can run across multi-cloud, on-premise clusters, or edge devices without rewriting code.

These systems automatically package preprocessing logic, model artifacts, and environment specifications into deployable units.

For example, Kubeflow AutoML exports containerized workflows that can be deployed consistently on Kubernetes clusters for fraud-detection workloads across multiple branches of a financial institution.

This portability eliminates compatibility issues and shortens rollout timelines.

Automated environment configuration also minimizes dependency errors and ensures predictable performance in diverse computing settings.

9. Resource Optimization and Cost-Aware Training Policies

Large-scale AutoML platforms incorporate intelligent budgeting controls that automatically balance model accuracy with infrastructure expenses.

They analyze workload patterns, determine when to scale resources up or down, and prioritize efficient algorithms to reduce computation waste.

For instance, DataRobot’s cost-aware modeling engine considers cloud billing metrics and selects leaner architectures for large telecom datasets where cost control is a priority.

These systems also terminate underperforming experiments early, conserving GPU or cluster usage.

The result is a more financially sustainable ML environment, especially for organizations training multiple high-volume models simultaneously.

10. Security, Access Control, and Compliance Automation

Enterprise-scale pipelines require strong governance, and AutoML platforms incorporate automated role-based access, audit trails, encryption policies, and compliance workflows.

These controls ensure that sensitive datasets—such as medical records, financial logs, or identity information are processed responsibly.

For example, Amazon SageMaker pipelines enforce automated permission checks before allowing training jobs to access protected S3 buckets for insurance-risk modeling.

Automated compliance ensures alignment with regulations like GDPR or HIPAA without relying solely on manual oversight.

This protects organizations from unauthorized access and reduces operational risk in scaling ML workflows.

11. Multi-Model Management and Automated Variant Testing

Large AutoML ecosystems can generate hundreds of model variants during experimentation, and automated model management systems organize them, rank their performance, and select the best candidate for deployment.

These mechanisms automatically run A/B or shadow tests to compare real-time performance across different model versions.

For example, PayPal uses automated variant testing to evaluate several fraud-detection models simultaneously before choosing the most stable one for production traffic.

By automating comparison, rollback, and selection, teams avoid the complexity of manually evaluating model versions, especially when dealing with high-velocity data streams.

12. Lifecycle Integration with Business Processes and Analytics Systems

AutoML pipelines connect seamlessly with business intelligence tools, data warehouses, operational dashboards, and transactional systems.

This integration ensures that trained models influence decision-making without delay.

For example, a retail chain can connect AutoML-driven forecasting pipelines directly to inventory management software, enabling automated restocking decisions based on real-time demand signals.

This alignment between ML ecosystems and business operations reduces manual intervention and improves response speed.

It transforms ML from an isolated analytic tool into a core driver of organizational strategy.

Class Sessions

Sales Campaign

We have a sales campaign on our promoted courses and products. You can purchase 1 products at a discounted price up to 15% discount.