Automated Machine Learning (AutoML) has become a transformative approach in modern machine learning pipelines by reducing the effort, time, and expertise required to build effective predictive models.

Instead of manually testing algorithms, adjusting settings, and evaluating performance, AutoML systems streamline this workflow through intelligent search strategies, automated validation, and adaptive optimization.

This democratizes machine learning, enabling both experts and beginners to focus on strategy and interpretation rather than exhaustive technical experimentation.

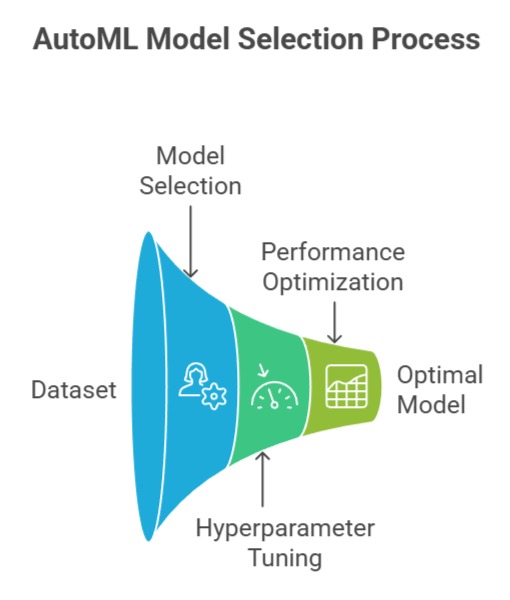

Model selection and hyperparameter tuning are two core components of AutoML that significantly impact the quality and reliability of machine learning solutions.

Model selection identifies the most suitable algorithm based on dataset characteristics, performance constraints, and computational limitations.

Hyperparameter tuning automation, on the other hand, refines the chosen model by systematically exploring parameter configurations to achieve optimal performance.

With advances in Bayesian optimization, genetic search, meta-learning, and neural architecture search (NAS), AutoML platforms can now produce strong models efficiently and at scale for academic, industrial, and real-time applications.

Model Selection (AutoML)

Model Selection in AutoML automates the process of identifying the most suitable algorithm for a given dataset and task.

It removes guesswork by systematically evaluating, comparing, and ranking models based on performance and reliability.

1. Automated algorithm comparison:

AutoML systematically evaluates several machine learning algorithms, such as Random Forests, Gradient Boosting, SVMs, and Neural Networks, by benchmarking them on validation metrics.

It performs this comparison without manual intervention, ensuring a neutral and thorough search that avoids human biases, especially in high-dimensional or noisy datasets.

For example, tools like H2O AutoML automatically rank models based on performance and stability.
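The comparison loop itself is simple, and a minimal sketch may make it concrete. The code below is not how H2O AutoML or any other tool is implemented; the toy dataset, the three small models, and every name in it are assumptions made for illustration. It only shows the pattern: fit each candidate on the same training data, score it on the same validation data, and sort.

```python
import random

# Toy 1-D binary dataset: the label is 1 when x > 0.5, and each label is
# flipped with probability 0.1 (seeded so the run is repeatable).
rng = random.Random(0)
data = []
for _ in range(200):
    x = rng.random()
    y = int(x > 0.5)
    if rng.random() < 0.1:
        y = 1 - y
    data.append((x, y))
train, valid = data[:150], data[150:]

def fit_majority(rows):
    """Baseline: always predict the most common training label."""
    ones = sum(y for _, y in rows)
    label = int(ones * 2 >= len(rows))
    return lambda x: label

def fit_stump(rows):
    """Decision stump: pick the threshold with the best training accuracy."""
    best_t, best_acc = 0.0, -1.0
    for t, _ in rows:
        acc = sum(int(x > t) == y for x, y in rows) / len(rows)
        if acc > best_acc:
            best_t, best_acc = t, acc
    return lambda x: int(x > best_t)

def fit_1nn(rows):
    """1-nearest-neighbour: copy the label of the closest training point."""
    return lambda x: min(rows, key=lambda r: abs(r[0] - x))[1]

def accuracy(model, rows):
    return sum(model(x) == y for x, y in rows) / len(rows)

# Every candidate sees the same split, so the scores are directly comparable.
candidates = {"majority": fit_majority, "stump": fit_stump, "1nn": fit_1nn}
leaderboard = sorted(
    ((name, accuracy(fit(train), valid)) for name, fit in candidates.items()),
    key=lambda item: item[1],
    reverse=True,
)
for name, acc in leaderboard:
    print(f"{name:10s} validation accuracy = {acc:.3f}")
```

Because the loop never looks at which model "should" win, the ranking depends only on the measured scores, which is the neutrality the text describes.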

2. Meta-learning-driven choices:

Advanced AutoML systems use historical data from past learning tasks to predict which models may work best for a new dataset.

This reduces the exploration time while maintaining strong accuracy.

Techniques like warm-starting model selection are especially useful in domains like finance or healthcare where dataset structures are often similar across projects.
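One simple way to picture warm-starting is a nearest-neighbour lookup over dataset meta-features. The sketch below assumes a hypothetical record of past tasks; the meta-features, the stored winners, and the function name `warm_start_order` are all invented for illustration and do not describe any particular AutoML system.

```python
import math

# Hypothetical record of past tasks: meta-features -> model family that won.
# Meta-features: (log10 of row count, log10 of feature count, minority-class fraction).
past_tasks = [
    {"meta": (3.0, 1.0, 0.50), "best_model": "logistic_regression"},
    {"meta": (5.0, 1.5, 0.45), "best_model": "gradient_boosting"},
    {"meta": (5.2, 1.7, 0.02), "best_model": "gradient_boosting"},
    {"meta": (4.0, 3.5, 0.30), "best_model": "linear_svm"},
    {"meta": (3.5, 1.2, 0.40), "best_model": "random_forest"},
]

def warm_start_order(new_meta, tasks, k=3):
    """Order model families by rank-weighted votes from the k most similar past tasks."""
    nearest = sorted(tasks, key=lambda t: math.dist(t["meta"], new_meta))[:k]
    votes = {}
    for rank, task in enumerate(nearest):
        # Closer tasks get a larger vote weight: 1, 1/2, 1/3, ...
        votes[task["best_model"]] = votes.get(task["best_model"], 0.0) + 1.0 / (rank + 1)
    return sorted(votes, key=votes.get, reverse=True)

new_dataset_meta = (5.1, 1.6, 0.05)
suggested = warm_start_order(new_dataset_meta, past_tasks)
print(suggested)  # ['gradient_boosting', 'random_forest']
```

The search then starts with the suggested families instead of a uniform sweep, which is where the saving in exploration time comes from.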

3. Adaptive evaluation strategies:

AutoML platforms often adjust model evaluation frequency, validation splits, and early-stopping rules dynamically based on model behavior.

This prevents wasting computation on underperforming algorithms, improving efficiency in large-scale model sweeps.

For example, Auto-sklearn 2.0 can use successive halving, a budget-allocation scheme that gives every candidate a small budget first and drops poor candidates early.
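Successive halving is one common scheme for this kind of early pruning, and it is short enough to sketch. The evaluation function below is a hypothetical stand-in for "train for `budget` epochs and return a validation score"; the candidate list and all names are assumptions, not the code of any specific library.

```python
import math
import random

def successive_halving(configs, evaluate, min_budget=1, eta=2):
    """Each round keeps the best 1/eta of the candidates and multiplies the budget by eta."""
    survivors = list(configs)
    budget = min_budget
    history = []  # (budget, candidates evaluated, candidates kept) per round
    while len(survivors) > 1:
        scored = sorted(survivors, key=lambda c: evaluate(c, budget), reverse=True)
        keep = max(1, len(scored) // eta)
        history.append((budget, len(survivors), keep))
        survivors = scored[:keep]
        budget *= eta
    return survivors[0], history

def toy_evaluate(config, budget):
    """Hypothetical score: approaches the config's hidden quality as the budget grows."""
    return config["quality"] * (1 - math.exp(-budget / 4))

rng = random.Random(42)
candidates = [{"name": f"model_{i}", "quality": rng.random()} for i in range(8)]
winner, rounds = successive_halving(candidates, toy_evaluate)
print(winner["name"], rounds)
```

With eight candidates and `eta=2`, the rounds are `(1, 8, 4)`, `(2, 4, 2)`, and `(4, 2, 1)`: most of the budget goes to the few candidates that survive. In this toy setup the score is noise-free, so the best candidate always wins; with real training curves a slow starter can be dropped too early, which is the usual trade-off of this approach.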

4. Automatic feature–model compatibility checking:

Some algorithms require specific data structures or scaling approaches. AutoML inspects dataset properties such as sparsity, feature types, or distribution patterns and filters out incompatible models accordingly.

This prevents errors and ensures the pipeline remains stable, particularly in diverse datasets like image embeddings or textual vectors.
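A compatibility check can be as plain as profiling the data and comparing the profile against a table of model requirements. The sketch below assumes a hypothetical requirements table with generic model names; real libraries document their own constraints, and many AutoML systems add preprocessing steps (imputation, encoding) rather than dropping a model outright.

```python
def profile_dataset(rows):
    """Collect simple properties of a dataset given as a list of dicts."""
    values = [v for row in rows for v in row.values()]
    numeric = [v for v in values if isinstance(v, (int, float))]
    return {
        "has_missing": any(v is None for v in values),
        "has_categorical": any(isinstance(v, str) for v in values),
        # Share of numeric cells that are exactly zero.
        "sparsity": (sum(1 for v in numeric if v == 0) / len(numeric)) if numeric else 0.0,
    }

# Hypothetical requirements table, for illustration only.
MODEL_REQUIREMENTS = {
    "distance_based": {"handles_missing": False, "handles_categorical": False},
    "linear_margin": {"handles_missing": False, "handles_categorical": False},
    "tree_boosting": {"handles_missing": True, "handles_categorical": True},
}

def compatible_models(profile, requirements=MODEL_REQUIREMENTS):
    """Keep only models whose declared capabilities cover the dataset profile."""
    keep = []
    for name, req in requirements.items():
        if profile["has_missing"] and not req["handles_missing"]:
            continue
        if profile["has_categorical"] and not req["handles_categorical"]:
            continue
        keep.append(name)
    return keep

rows = [
    {"age": 34, "plan": "basic", "spend": 0.0},
    {"age": None, "plan": "pro", "spend": 120.5},
    {"age": 51, "plan": "basic", "spend": 0.0},
]
profile = profile_dataset(rows)
print(profile, compatible_models(profile))
```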

5. Model performance tracking and ranking:

Instead of manually reading confusion matrices, AutoML provides streamlined leaderboards that allow users to focus on the best configurations.

This ranking can combine multiple metrics, such as accuracy, F1-score, AUC, and inference latency, so the selection reflects more than a single score.

For instance, Google Cloud AutoML ranks models visually for transparency.
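A multi-metric leaderboard can be sketched as a filter followed by a sort. The metric values below are made-up numbers for illustration, and the ordering rule (latency budget first, then AUC, F1, latency) is one assumed policy among many, not the rule used by any named platform.

```python
from dataclasses import dataclass

@dataclass
class Result:
    name: str
    auc: float
    f1: float
    latency_ms: float

def rank(results, max_latency_ms=50.0):
    """Drop models that miss the latency budget, then sort by AUC, F1, and latency."""
    eligible = [r for r in results if r.latency_ms <= max_latency_ms]
    return sorted(eligible, key=lambda r: (-r.auc, -r.f1, r.latency_ms))

# Illustrative numbers only.
results = [
    Result("gradient_boosting", auc=0.91, f1=0.78, latency_ms=12.0),
    Result("random_forest", auc=0.89, f1=0.77, latency_ms=20.0),
    Result("deep_net", auc=0.93, f1=0.80, latency_ms=85.0),
    Result("logistic_regression", auc=0.84, f1=0.70, latency_ms=1.5),
]
leaderboard = rank(results)
for position, r in enumerate(leaderboard, start=1):
    print(position, r.name, r.auc, r.f1, r.latency_ms)
```

Here `deep_net` has the highest AUC but is excluded because it exceeds the 50 ms budget, which shows why a single accuracy-style metric is not always enough to pick a production model.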

6. Cross-validation automation:

Traditional model selection often demands repeated cross-validation to ensure stability.

AutoML automates this entire process using parallelized evaluation, enabling rapid exploration across numerous folds.

This is critical in fields such as fraud detection where class imbalance can skew simple train–test splits.
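For imbalanced data, the folds are usually stratified so that each one keeps roughly the overall class ratio. The following is a minimal sketch of that idea in plain Python; the function name `stratified_folds` and the 5% positive rate are assumptions chosen for the example, and production code would normally rely on a library splitter instead.

```python
import random
from collections import defaultdict

def stratified_folds(labels, k=5, seed=0):
    """Assign each sample index to one of k folds, spreading every class evenly."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal indices round-robin so each fold gets a near-equal share of this class.
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    return folds

# 100 samples with a 5% positive class, as in a fraud-style problem.
labels = [1] * 5 + [0] * 95
folds = stratified_folds(labels, k=5)
positives_per_fold = [sum(labels[i] for i in fold) for fold in folds]
print(positives_per_fold)  # [1, 1, 1, 1, 1]
```

A plain random split of the same data could easily leave a fold with no positive samples at all, which makes metrics such as recall or AUC undefined or misleading for that fold.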

Example: In a churn prediction project, AutoML evaluates dozens of algorithms and selects a Gradient Boosting model with the best AUC, eliminating the need for manual experimentation.

Hyperparameter Tuning Automation

Hyperparameter Tuning Automation uses AutoML techniques to automatically discover optimal training configurations without manual experimentation.

It accelerates model optimization while ensuring strong performance and efficient resource utilization.

1. Search space optimization:

AutoML intelligently defines a hyperparameter search space based on the chosen model’s structure, avoiding unrealistic or irrelevant configurations.

This prevents wasted computation and makes tuning more targeted. For example, learning rates or tree depths are automatically bounded based on dataset scale and model complexity.
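A bounded search space can be written down as data and then sampled. The sketch below assumes a hypothetical space for a gradient-boosted tree model and a simple heuristic for capping tree depth by row count; both the ranges and the heuristic are illustrative assumptions, not defaults taken from any library.

```python
import math
import random

# Hypothetical search space for a gradient-boosted tree model.
SEARCH_SPACE = {
    "learning_rate": {"type": "log_uniform", "low": 1e-3, "high": 0.3},
    "max_depth": {"type": "int", "low": 2, "high": 10},
    "subsample": {"type": "uniform", "low": 0.5, "high": 1.0},
}

def bound_depth(space, n_rows):
    """Assumed heuristic: cap depth near log2(n_rows) - 2 so leaves are not nearly empty."""
    cap = max(2, int(math.log2(max(n_rows, 4))) - 2)
    bounded = dict(space)
    bounded["max_depth"] = {**space["max_depth"], "high": min(space["max_depth"]["high"], cap)}
    return bounded

def sample(space, rng):
    """Draw one configuration; learning rates are sampled uniformly in log space."""
    config = {}
    for name, spec in space.items():
        if spec["type"] == "log_uniform":
            config[name] = math.exp(rng.uniform(math.log(spec["low"]), math.log(spec["high"])))
        elif spec["type"] == "int":
            config[name] = rng.randint(spec["low"], spec["high"])
        else:
            config[name] = rng.uniform(spec["low"], spec["high"])
    return config

rng = random.Random(0)
space = bound_depth(SEARCH_SPACE, n_rows=500)
configs = [sample(space, rng) for _ in range(5)]
print(space["max_depth"], configs[0])
```

Sampling the learning rate on a log scale spends as many trials between 0.001 and 0.01 as between 0.03 and 0.3, which usually matches how sensitive training is to that parameter.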

2. Bayesian optimization for efficient tuning:

Many AutoML systems rely on Bayesian optimization to iteratively refine hyperparameters based on previous trial outcomes.

Unlike grid or random search, which ignore the results of earlier trials, it uses those results to choose the next configuration, so it typically reaches good configurations in fewer trials.

Tools like Optuna use probabilistic modeling to guide the search intelligently.
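To show what "guided by previous trials" can mean, here is a heavily simplified, TPE-style sketch in plain Python. It is not Optuna's implementation: the fixed kernel bandwidth, the candidate count, the one-dimensional objective, and all names are assumptions. The idea is to model where good trials and bad trials have landed so far and to try next the point that looks most like the good ones.

```python
import math
import random

def objective(x):
    """Hypothetical validation loss for one hyperparameter; minimum at x = 2."""
    return (x - 2.0) ** 2

def kde(points, bandwidth):
    """Return a Gaussian kernel density estimate built from the given points."""
    def density(x):
        total = sum(math.exp(-0.5 * ((x - p) / bandwidth) ** 2) for p in points)
        return total / (len(points) * bandwidth * math.sqrt(2 * math.pi))
    return density

def tpe_style_search(objective, low, high, n_startup=8, n_trials=30, gamma=0.25, seed=0):
    rng = random.Random(seed)
    bandwidth = (high - low) / 10
    # Start with a few random trials so there is something to model.
    trials = [(x, objective(x)) for x in (rng.uniform(low, high) for _ in range(n_startup))]
    while len(trials) < n_trials:
        ranked = sorted(trials, key=lambda t: t[1])
        n_good = max(1, int(gamma * len(ranked)))
        good = [x for x, _ in ranked[:n_good]]
        bad = [x for x, _ in ranked[n_good:]]
        good_density, bad_density = kde(good, bandwidth), kde(bad, bandwidth)
        # Draw candidates near good points; keep the one with the largest density ratio.
        candidates = [
            min(high, max(low, rng.gauss(rng.choice(good), bandwidth))) for _ in range(24)
        ]
        x_next = max(candidates, key=lambda x: good_density(x) / (bad_density(x) + 1e-12))
        trials.append((x_next, objective(x_next)))
    return trials

trials = tpe_style_search(objective, low=-10.0, high=10.0)
best_x, best_loss = min(trials, key=lambda t: t[1])
print(f"best x = {best_x:.3f}, loss = {best_loss:.4f}")
```

Each new trial depends on all earlier ones, which is the difference from random search, where the 30th sample is drawn exactly like the first.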

3. Parallel and distributed tuning:

AutoML platforms can conduct multiple hyperparameter trials simultaneously across CPUs or GPUs, drastically reducing total tuning time.

This feature is especially crucial for deep learning models where each training run is computationally heavy.

For instance, Ray Tune supports highly scalable distributed tuning.
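On a single machine, the same idea can be sketched with the standard library. The example below uses a thread pool only to keep the sketch portable; `run_trial` is a hypothetical stand-in for a training run, and real CPU-bound or GPU-bound training would normally use separate processes or a distributed scheduler.

```python
from concurrent.futures import ThreadPoolExecutor

def run_trial(config):
    """Hypothetical stand-in for one training run; returns (config, validation loss)."""
    lr, depth = config["learning_rate"], config["max_depth"]
    loss = (lr - 0.1) ** 2 + 0.01 * abs(depth - 6)
    return config, loss

# Twelve independent configurations: trials do not depend on each other,
# so they can be evaluated in any order and at the same time.
grid = [
    {"learning_rate": lr, "max_depth": depth}
    for lr in (0.01, 0.05, 0.1, 0.3)
    for depth in (3, 6, 9)
]

with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(run_trial, grid))

best_config, best_loss = min(results, key=lambda item: item[1])
print(best_config, best_loss)
```

Independent trials parallelize trivially. Sequential methods such as Bayesian optimization need extra care, because each new suggestion ideally waits for earlier results.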

4. Automated early stopping:

When a particular hyperparameter configuration shows poor progress early in training, AutoML can terminate the run to save time, a process known as trial pruning.

This accelerates optimization, particularly in large neural networks with long training cycles.
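One widely used pruning rule compares a running trial against the median of earlier trials at the same training step. The sketch below assumes hypothetical per-epoch validation losses and a two-step warm-up; tuning libraries offer median-based pruners in this spirit, but the code here is an illustration, not any library's implementation.

```python
import statistics

def should_prune(step, value, completed_curves, warmup_steps=2):
    """Median rule: prune if the loss at `step` is worse than the median of earlier trials."""
    if step < warmup_steps:
        return False  # give every trial a few steps before judging it
    reference = [curve[step] for curve in completed_curves if len(curve) > step]
    if not reference:
        return False
    return value > statistics.median(reference)

# Hypothetical per-epoch validation losses for three finished trials.
finished = [
    [0.90, 0.70, 0.55, 0.45, 0.40],
    [0.95, 0.80, 0.70, 0.62, 0.58],
    [0.85, 0.60, 0.48, 0.41, 0.37],
]
new_trial = [0.99, 0.93, 0.88, 0.85, 0.83]  # learns slowly

stopped_at = None
for step, loss in enumerate(new_trial):
    if should_prune(step, loss, finished):
        stopped_at = step
        break
print(stopped_at)  # 2
```

The slow trial is stopped at step 2, the first step after the warm-up, because its loss of 0.88 is above the median of 0.55 at that step. The remaining epochs are never run.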

5. Dynamic adjustment of search strategies:

AutoML may begin with broad exploration and shift to fine-grained tuning as promising regions of the hyperparameter space are discovered.

This combination of exploration and exploitation increases the likelihood of finding optimal configurations without unnecessary trials.
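A coarse-to-fine random search is perhaps the simplest instance of this shift from exploration to exploitation. The sketch below assumes a hypothetical one-dimensional loss and a fixed shrink factor; actual AutoML systems use more elaborate schedules.

```python
import random

def loss(lr):
    """Hypothetical validation loss with its minimum at lr = 0.03."""
    return (lr - 0.03) ** 2

def coarse_to_fine(loss, low, high, rounds=4, samples_per_round=10, shrink=0.5, seed=0):
    rng = random.Random(seed)
    best_x, best_loss = None, float("inf")
    history = []  # (low, high, best loss so far) for each round
    for _ in range(rounds):
        # Explore: sample uniformly inside the current interval.
        for _ in range(samples_per_round):
            x = rng.uniform(low, high)
            value = loss(x)
            if value < best_loss:
                best_x, best_loss = x, value
        history.append((low, high, best_loss))
        # Exploit: shrink the interval around the best point found so far.
        half_width = (high - low) * shrink / 2
        low, high = max(low, best_x - half_width), min(high, best_x + half_width)
    return best_x, best_loss, history

best_lr, best_loss, history = coarse_to_fine(loss, low=0.0, high=1.0)
for low, high, value in history:
    print(f"interval [{low:.3f}, {high:.3f}]  best loss so far = {value:.6f}")
```

The risk of shrinking too aggressively is that the interval closes in on a local optimum, so the shrink factor controls the balance between the two phases.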

6. Handling multi-objective tuning:

Many real-world applications require balancing competing goals such as accuracy, inference speed, and resource usage.

AutoML supports multi-objective optimization by evaluating hyperparameters across several metrics, enabling balanced solutions for production environments.
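When objectives conflict, there is usually no single best trial but a set of non-dominated ones, the Pareto front. The sketch below computes that set for accuracy (higher is better) and latency (lower is better); the trial values are made up for illustration.

```python
def dominates(a, b):
    """a dominates b if it is no worse on both objectives and strictly better on one."""
    no_worse = a["accuracy"] >= b["accuracy"] and a["latency_ms"] <= b["latency_ms"]
    better = a["accuracy"] > b["accuracy"] or a["latency_ms"] < b["latency_ms"]
    return no_worse and better

def pareto_front(trials):
    """Keep every trial that no other trial dominates."""
    return [t for t in trials if not any(dominates(other, t) for other in trials)]

# Illustrative results only.
trials = [
    {"name": "A", "accuracy": 0.92, "latency_ms": 40.0},
    {"name": "B", "accuracy": 0.90, "latency_ms": 15.0},
    {"name": "C", "accuracy": 0.89, "latency_ms": 22.0},  # dominated by B
    {"name": "D", "accuracy": 0.85, "latency_ms": 5.0},
    {"name": "E", "accuracy": 0.92, "latency_ms": 55.0},  # dominated by A
]
front = [t["name"] for t in pareto_front(trials)]
print(front)  # ['A', 'B', 'D']
```

Choosing among A, B, and D is then a product decision, for example "the most accurate model under 20 ms", rather than something the optimizer can settle on its own.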

Example: When training a text classification model, AutoML optimizes learning rate, dropout rate, and batch size using Bayesian search and early stopping, achieving 7% higher accuracy than manual tuning.
