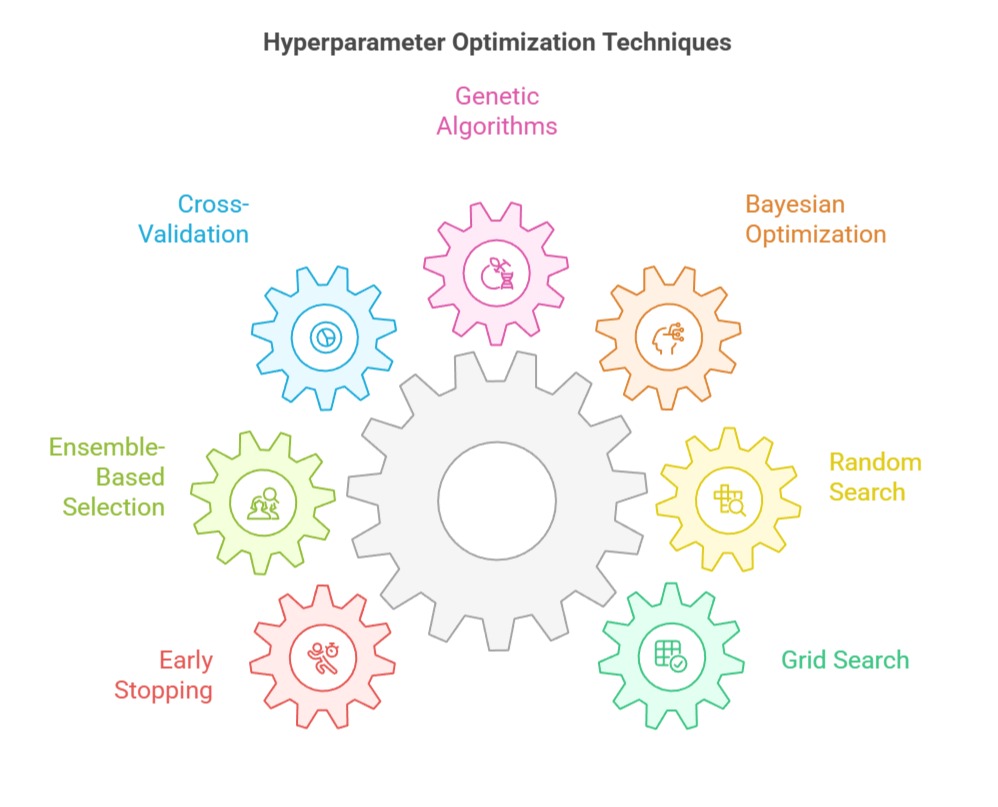

Hyperparameter tuning and model selection strategies focus on identifying the configuration of hyperparameters, and the model family, that achieves the best predictive performance and generalization.

These techniques systematically compare multiple models and parameter settings using validation frameworks to ensure robust, reliable, and efficient machine learning solutions.

Grid Search Optimization

Grid search optimization systematically evaluates all possible combinations of predefined hyperparameters to identify the configuration that yields the best model performance.

Key Points

1. Grid Search performs a systematic sweep over predefined hyperparameter values to evaluate model behavior across all possible combinations.

2. It is highly interpretable because every tested configuration is explicit, making it easier to understand which parameter influences performance.

3. The method is best suited for smaller search spaces where computation cost is manageable and results remain exhaustive.

4. It helps identify interactions between parameters by evaluating every combination instead of random subsets.

5. Grid Search often integrates cross-validation, ensuring performance metrics are robust and not dependent on a single train-test split.

6. The number of combinations grows multiplicatively with each added hyperparameter, so the technique becomes slow in high-dimensional search spaces, but it remains a reliable baseline for controlled experimentation.

7. It is commonly used during early phases of model building to benchmark hyperparameters systematically.

8. It works particularly well when the practitioner has a clear understanding of reasonable ranges for each parameter.

Example: Tuning an SVM with parameters C = {0.1,1,10} and kernel = {linear, rbf}, Grid Search evaluates each combination to find the best configuration.
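The SVM example above can be sketched with scikit-learn's `GridSearchCV`. This is a minimal illustration rather than a reference setup: the Iris dataset, the 5-fold split, and the accuracy metric are assumptions chosen only to make the snippet runnable.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.svm import SVC

# Toy data stands in for a real problem (assumption for illustration).
X, y = load_iris(return_X_y=True)

# The grid from the example: 3 values of C x 2 kernels = 6 combinations.
param_grid = {"C": [0.1, 1, 10], "kernel": ["linear", "rbf"]}

# Each combination is scored with 5-fold cross-validation.
search = GridSearchCV(SVC(), param_grid, cv=5, scoring="accuracy")
search.fit(X, y)

n_candidates = len(search.cv_results_["params"])
print("combinations evaluated:", n_candidates)
print("best params:", search.best_params_)
print("best mean CV accuracy:", round(search.best_score_, 3))
```

Because every combination is listed in `search.cv_results_`, the full table of results can be inspected afterwards, which is what makes grid search easy to interpret.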

Random Search Optimization

Random search optimization explores a random subset of hyperparameter combinations to efficiently discover high-performing models with reduced computational cost.

Key Points

1. Random Search samples combinations randomly from specified ranges rather than testing every possibility.

2. For a fixed budget, it often reaches strong solutions faster than grid search because every trial tries a new value of each hyperparameter instead of revisiting the same few grid values.

3. The technique works especially well when certain hyperparameters have minimal impact, reducing wasted computation.

4. It handles large and continuous ranges smoothly, allowing much wider parameter exploration than grid methods.

5. Performance improves as the number of trials increases, giving flexibility based on available resources.

6. It is commonly used for algorithms with many hyperparameters, such as neural networks or ensemble models.

7. Random Search can uncover high-quality settings that fall between the points of a coarse grid, although it still samples only within the ranges specified.

8. The method is efficient when paired with early-stopping rules that cut off weak configurations early.

Example: Tuning a Random Forest by sampling n_estimators, max_depth, and max_features randomly over wide intervals rather than fixed lists.
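A possible sketch of the Random Forest example uses scikit-learn's `RandomizedSearchCV` with SciPy distributions. The dataset, the interval bounds, and the budget of 8 trials are assumptions picked to keep the run short, not recommended values.

```python
from scipy.stats import randint, uniform
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV

# Toy data stands in for a real problem (assumption for illustration).
X, y = load_breast_cancer(return_X_y=True)

# Distributions instead of fixed lists: each trial draws a fresh value.
param_distributions = {
    "n_estimators": randint(10, 61),    # integers in [10, 60]
    "max_depth": randint(2, 13),        # integers in [2, 12]
    "max_features": uniform(0.1, 0.9),  # floats in [0.1, 1.0]
}

search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions,
    n_iter=8,        # the trial budget; raise it as resources allow
    cv=3,
    random_state=0,  # makes the sampled configurations reproducible
)
search.fit(X, y)

n_trials = len(search.cv_results_["params"])
print("trials run:", n_trials)
print("best params:", search.best_params_)
```

The cost is set by `n_iter` rather than by the size of the search space, so widening an interval does not increase the number of model fits.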

Bayesian Optimization

Bayesian optimization uses probabilistic models to intelligently select hyperparameter values, balancing exploration and exploitation to find optimal configurations with fewer evaluations.

Key Points

1. Bayesian Optimization builds a probabilistic model of the objective function and uses it to determine promising hyperparameter regions.

2. It reduces computational cost by strategically balancing exploration and exploitation using acquisition functions.

3. The method continually updates beliefs about the search space after each evaluation, improving accuracy over time.

4. It is ideal for expensive models—such as deep learning or large gradient-boosting pipelines—where every evaluation matters.

5. Surrogate models such as Gaussian Processes or Tree-structured Parzen Estimators (TPE) capture nonlinear relationships between hyperparameters and performance.

6. The process avoids blind searching and instead recommends promising parameter sets based on the surrogate model's performance estimates.

7. It adapts dynamically, making the search more intelligent as more information is collected.

8. It is implemented in libraries such as Optuna, Hyperopt, and Scikit-Optimize.

Example: Optimizing XGBoost learning_rate and max_depth using Bayesian Optimization to suggest the next best values based on previous results.
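The loop of "fit a surrogate, pick the next point with an acquisition function, evaluate, repeat" can be sketched in a few lines. The snippet below is a simplified illustration, not how Optuna or Hyperopt work internally: it uses a Gaussian Process surrogate with an expected-improvement acquisition function, and the `objective` function is a hypothetical stand-in for the validation score of a model as a function of log10(learning_rate), so no XGBoost training is involved.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_lr):
    """Hypothetical stand-in for a validation score; peaks at log_lr = -1.5."""
    return -(log_lr + 1.5) ** 2


def expected_improvement(candidates, gp, best_score, xi=0.01):
    """Expected improvement (for maximization) at each candidate point."""
    mean, std = gp.predict(candidates, return_std=True)
    std = np.maximum(std, 1e-9)  # avoid division by zero
    z = (mean - best_score - xi) / std
    return (mean - best_score - xi) * norm.cdf(z) + std * norm.pdf(z)


rng = np.random.default_rng(0)
# Candidate values of log10(learning_rate) between 1e-4 and 1.
candidates = np.linspace(-4.0, 0.0, 401).reshape(-1, 1)

# Start from a few random evaluations.
X_obs = rng.uniform(-4.0, 0.0, size=(3, 1))
y_obs = objective(X_obs).ravel()

for _ in range(12):
    # Update the surrogate with everything observed so far.
    gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), normalize_y=True, alpha=1e-6)
    gp.fit(X_obs, y_obs)
    # Evaluate next where expected improvement is highest.
    ei = expected_improvement(candidates, gp, y_obs.max())
    x_next = candidates[np.argmax(ei)].reshape(1, 1)
    X_obs = np.vstack([X_obs, x_next])
    y_obs = np.append(y_obs, objective(x_next).ravel())

best_log_lr = float(X_obs[np.argmax(y_obs), 0])
print("best log10(learning_rate):", round(best_log_lr, 2))
```

In practice the objective would train and validate a real model, and a library such as Optuna would usually replace the hand-written loop.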

Genetic Algorithms (Evolutionary Search)

Genetic algorithms apply evolutionary principles such as selection, crossover, and mutation to iteratively evolve and identify optimal or near-optimal hyperparameter configurations.

Key Points

1. Genetic Algorithms mimic natural evolution through selection, mutation, and crossover to improve hyperparameters iteratively.

2. They maintain a population of candidate solutions, gradually evolving them toward more optimal configurations.

3. The approach works well for non-convex, irregular, or discrete search spaces where traditional optimization struggles.

4. Mutation allows exploration of new regions, while crossover combines strong traits from high-performing individuals.

5. GA-based tuning handles complex interactions among parameters naturally without explicit modeling.

6. The method is parallelizable, making it suitable for distributed systems where multiple solutions run concurrently.

7. It is often used in tasks like neural architecture search or optimizing exotic model structures.

8. It works especially well when hyperparameters include categorical or hierarchical options.

Example: Using GA to tune CNN architectures, where parameters like number of filters, kernel sizes, and activation functions evolve over generations.
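The selection, crossover, and mutation steps can be sketched with the standard library alone. Everything in this snippet is illustrative: the search space values are assumptions, and `fitness` is a hypothetical scoring function standing in for the validation accuracy of a trained CNN, since training real networks would not fit in a short example.

```python
import random

# Hypothetical search space for a small CNN (values are assumptions).
SEARCH_SPACE = {
    "num_filters": [16, 32, 64, 128],
    "kernel_size": [3, 5, 7],
    "activation": ["relu", "tanh", "elu"],
}


def fitness(config):
    """Hypothetical stand-in for validation accuracy of a trained CNN."""
    score = {16: 0.1, 32: 0.2, 64: 0.3, 128: 0.25}[config["num_filters"]]
    score += {3: 0.3, 5: 0.2, 7: 0.1}[config["kernel_size"]]
    score += {"relu": 0.3, "tanh": 0.1, "elu": 0.2}[config["activation"]]
    return score


def random_config(rng):
    return {name: rng.choice(values) for name, values in SEARCH_SPACE.items()}


def crossover(parent_a, parent_b, rng):
    """Uniform crossover: each gene comes from one parent at random."""
    return {name: rng.choice([parent_a[name], parent_b[name]]) for name in SEARCH_SPACE}


def mutate(config, rng, rate=0.2):
    """With probability `rate`, resample a gene from the search space."""
    return {
        name: rng.choice(SEARCH_SPACE[name]) if rng.random() < rate else value
        for name, value in config.items()
    }


def evolve(generations=15, population_size=12, seed=0):
    rng = random.Random(seed)
    population = [random_config(rng) for _ in range(population_size)]
    for _ in range(generations):
        # Selection: keep the fitter half unchanged and use it as parents.
        population.sort(key=fitness, reverse=True)
        parents = population[: population_size // 2]
        # Crossover and mutation produce the other half of the next generation.
        children = [
            mutate(crossover(*rng.sample(parents, 2), rng), rng)
            for _ in range(population_size - len(parents))
        ]
        population = parents + children
    return max(population, key=fitness)


best = evolve()
print(best, round(fitness(best), 2))
```

Each individual's fitness is independent of the others, which is why the evaluations within a generation can be distributed across workers.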

Cross-Validation for Model Selection

Cross-validation for model selection evaluates model performance across multiple data splits to ensure robust generalization and reliable comparison between competing models.

Key Points

1. Cross-validation divides data into multiple folds, ensuring each portion gets a turn as a validation segment.

2. It reduces the risk of selecting a model that overfits one particular split by assessing performance across diverse partitions rather than relying on a single split.

3. Models are judged based on aggregated metrics (mean accuracy, RMSE, etc.), giving more stable estimates.

4. CV helps compare different algorithms under identical evaluation conditions, improving fairness in selection.

5. It is essential when working with limited datasets where a separate validation set would waste data.

6. Common variations—like k-fold, stratified, and repeated CV—adapt based on need and dataset characteristics.

7. Cross-validation integrates seamlessly with tuning methods like Grid Search and Bayesian Optimization.

8. It helps reveal models that generalize consistently instead of those performing well by chance.

Example: Using 5-fold CV to compare Logistic Regression, Random Forest, and XGBoost for credit-risk prediction.
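A comparison like the one in the example could look as follows. This is a sketch under assumptions: scikit-learn's `GradientBoostingClassifier` is used as a stand-in for XGBoost to avoid an extra dependency, the breast-cancer dataset replaces credit-risk data, and ROC AUC is an assumed metric.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Toy data stands in for a credit-risk dataset (assumption for illustration).
X, y = load_breast_cancer(return_X_y=True)

# One shared splitter so every model sees exactly the same 5 folds.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

models = {
    "logistic_regression": make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
    "random_forest": RandomForestClassifier(n_estimators=50, random_state=0),
    # Stand-in for XGBoost.
    "gradient_boosting": GradientBoostingClassifier(n_estimators=50, random_state=0),
}

results = {}
for name, model in models.items():
    scores = cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
    results[name] = (scores.mean(), scores.std())
    print(f"{name}: mean AUC = {scores.mean():.3f} (std {scores.std():.3f})")
```

Reporting the standard deviation next to the mean helps distinguish a model that is consistently good from one that scored well on a few favorable folds.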

Ensemble-Based Selection (Stacking & Blending Validation)

Ensemble-based selection uses validation techniques to assess and combine multiple models through stacking or blending, improving overall predictive performance and stability.

Key Points

1. Ensemble selection evaluates multiple tuned models and combines their predictions to form a superior meta-model.

2. It reduces dependence on a single configuration by leveraging diversity across algorithms and hyperparameters.

3. Stacking uses a second-level learner trained on out-of-fold predictions to refine decision boundaries.

4. Blending provides a simpler variant where a holdout set supports meta-model training.

5. This strategy often surpasses individual models, especially when different learners capture unique patterns.

6. It helps mitigate variance from unstable models like decision trees or boosting systems.

7. Ensemble selection is widely used in competitions and real-world pipelines due to its robustness.

8. It works well when models have complementary strengths.

Example: Stacking Logistic Regression + Random Forest + LightGBM using a meta-learner such as XGBoost for fraud-detection tasks.
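Stacking with out-of-fold predictions can be sketched with scikit-learn's `StackingClassifier`. The snippet makes several substitutions that should be read as assumptions: `GradientBoostingClassifier` stands in for LightGBM, a logistic regression replaces XGBoost as the meta-learner, and the breast-cancer dataset replaces fraud data.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import (
    GradientBoostingClassifier,
    RandomForestClassifier,
    StackingClassifier,
)
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Toy data stands in for a fraud dataset (assumption for illustration).
X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

base_learners = [
    ("lr", make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))),
    ("rf", RandomForestClassifier(n_estimators=50, random_state=0)),
    ("gb", GradientBoostingClassifier(n_estimators=50, random_state=0)),  # LightGBM stand-in
]

stack = StackingClassifier(
    estimators=base_learners,
    final_estimator=LogisticRegression(max_iter=1000),  # meta-learner (assumed choice)
    cv=5,  # meta-learner is trained on out-of-fold predictions from 5 folds
    stack_method="predict_proba",
)
stack.fit(X_train, y_train)

test_accuracy = stack.score(X_test, y_test)
print("held-out accuracy:", round(test_accuracy, 3))
```

Training the meta-learner on out-of-fold predictions matters because predictions made on data a base model has already seen would look better than they are.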

Early Stopping for Model Selection

Early stopping for model selection halts training when validation performance stops improving, preventing overfitting and identifying the most effective model state.

Key Points

1. Early stopping monitors model performance on validation data during training to prevent unnecessary iterations.

2. It halts training when additional epochs no longer yield improvements, avoiding overfitting and wasted computation.

3. The method is particularly useful in neural networks and gradient-boosting algorithms.

4. It effectively acts as an automatic model selector by determining the optimal training duration.

5. Early stopping provides an implicit regularization effect, helping the model maintain generalization capability.

6. It is simple to implement and integrates seamlessly with most modern ML frameworks.

7. The technique saves time by eliminating needlessly long training cycles.

8. It is commonly used alongside hyperparameter tuning tools that repeatedly train models.

Example: Training a LightGBM classifier with early stopping at 120 rounds when validation AUC stops improving.
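The stopping rule itself is simple enough to write out by hand. The sketch below shows patience-based early stopping in plain Python; the function name, the patience of 3 rounds, and the list of validation AUC values are all hypothetical, and a real run would obtain each score from a training loop or a framework callback instead.

```python
def train_with_early_stopping(validation_scores, patience=3):
    """Return (best_round, best_score, last_round) under patience-based stopping.

    `validation_scores` yields one validation metric per training round
    (higher is better) and stands in for a real training loop.
    """
    best_score = float("-inf")
    best_round = -1
    last_round = -1
    for last_round, score in enumerate(validation_scores):
        if score > best_score:
            # New best: remember this round as the model state to keep.
            best_score, best_round = score, last_round
        elif last_round - best_round >= patience:
            break  # no improvement for `patience` consecutive rounds
    return best_round, best_score, last_round


# Hypothetical validation AUC per round: improves, plateaus, then degrades.
auc_per_round = [0.70, 0.78, 0.83, 0.86, 0.87, 0.869, 0.868, 0.866, 0.86, 0.85]

best_round, best_auc, stopped_at = train_with_early_stopping(auc_per_round, patience=3)
print(f"best round: {best_round}, best AUC: {best_auc}, stopped at round: {stopped_at}")
```

The model kept is the one from the best round, not the last round trained, which is why frameworks typically record the best iteration alongside the stopping point.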

Case Study 1: Hyperparameter Tuning for Fraud Detection in Digital Banking

Banks dealing with high-volume online transactions rely heavily on machine learning to identify fraudulent patterns in real time.

A leading fintech company used Bayesian Optimization to fine-tune XGBoost’s parameters such as learning_rate, min_child_weight, and max_depth.

Fraud data is heavily imbalanced, and traditional grid search struggled with the large search space. Bayesian Optimization helped narrow down high-potential configurations efficiently by modeling relationships between parameters and validation performance.

The optimized model improved recall significantly, ensuring more fraudulent events were caught without excessive false alarms.

Cross-validation further validated that the tuned model performed consistently across different monthly transaction segments.

This systematic tuning process resulted in faster detection times, fewer manual reviews, and improved customer trust.

Case Study 2: Model Selection for Demand Forecasting in Retail Supply Chains

A global retail chain used machine learning to forecast product demand across thousands of stores, each with unique buying patterns.

They compared models such as Random Forest, LightGBM, and Prophet, using 5-fold time-series cross-validation to prevent leakage.

Random Search was applied to explore wide ranges of tree depths, sampling ratios, and lag-based features.

The comparison showed that LightGBM captured seasonal fluctuations best while maintaining computational efficiency.

The chosen model reduced stockouts and overstock scenarios by accurately predicting weekly demand variations.

The selection process also accounted for forecasting stability across different product categories, making the deployment scalable.

Overall, optimized tuning helped the retailer lower operational costs and increase product availability at the right locations.
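The time-series cross-validation mentioned in this case study prevents leakage by always validating on data that comes after the training window. A minimal sketch with scikit-learn's `TimeSeriesSplit` is shown below; the 24 weekly observations are a hypothetical placeholder for real demand data.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# Hypothetical 24 weeks of chronologically ordered demand data.
weeks = np.arange(24)

splitter = TimeSeriesSplit(n_splits=5)

folds = []
for train_idx, valid_idx in splitter.split(weeks):
    folds.append((train_idx, valid_idx))
    print(
        f"train weeks 0-{train_idx[-1]}, "
        f"validate weeks {valid_idx[0]}-{valid_idx[-1]}"
    )
```

Unlike shuffled k-fold, every fold trains only on the past, so lag-based features never see values from the validation period.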