Dimensionality reduction is a crucial technique in advanced data science, designed to simplify high-dimensional datasets while preserving essential structure, patterns, and variability.

Modern applications generate massive feature spaces, from genomics and social media analytics to sensor logs and transactional records. Working directly with so many variables can lead to computational inefficiency, noisy representations, and poor model generalization.

Dimensionality reduction methods aim to overcome these challenges by mapping data into a more compact space, reducing redundancy, filtering noise, and highlighting meaningful relationships among features.

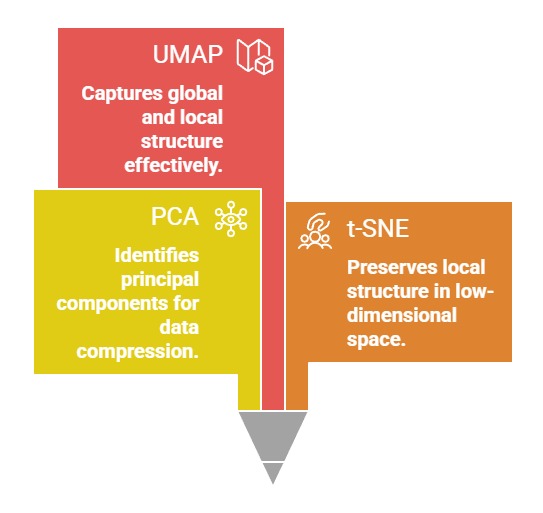

PCA, t-SNE, and UMAP represent three widely adopted approaches, each optimized for different analytical goals.

PCA focuses on linear transformations and variance preservation, making it ideal for structured numerical datasets with strong correlations.

t-SNE specializes in exploring local neighborhoods and visualizing complex nonlinear manifolds, making it popular for high-dimensional visualization tasks such as embeddings or biological expression data.

UMAP offers a computationally efficient alternative to t-SNE, grounded in manifold theory, that maintains local structure while retaining more of the global structure and scales to real-world applications.

Principal Component Analysis (PCA)

Principal Component Analysis (PCA) is a dimensionality reduction technique that transforms high-dimensional data into a smaller set of uncorrelated components while preserving most of the original variance.

It is widely used to simplify datasets, reduce noise, and improve the efficiency and performance of machine learning models.

1. Captures Maximum Variance Through Orthogonal Components

PCA finds the directions in the dataset with the highest variability and projects the data onto these axes, reducing dimensionality while losing as little variance as possible.

It ensures these components are orthogonal, eliminating redundancy between correlated variables.

This enables models to focus on the most meaningful structural patterns present in the data.

By representing the data in a variance-maximized space, PCA reduces noise and enhances clarity.

This makes it ideal for high-dimensional datasets where interpretability and efficiency are key.
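As a minimal sketch of the idea above, the following NumPy snippet computes principal components with a singular value decomposition. The function name `pca_svd` and the synthetic data are assumptions made for illustration only; in practice a library implementation would usually be used instead.

```python
import numpy as np


def pca_svd(X, n_components):
    """Project X (n_samples, n_features) onto its top principal components."""
    # PCA is defined on mean-centered data.
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are orthonormal directions, ordered by decreasing variance.
    U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]
    # Variance captured along each direction, then the share that is kept.
    explained_variance = S**2 / (X.shape[0] - 1)
    ratio = explained_variance[:n_components] / explained_variance.sum()
    scores = X_centered @ components.T
    return scores, components, ratio


rng = np.random.default_rng(0)
# Synthetic data (an assumption): 5 features driven by 2 latent factors plus small noise.
latent = rng.normal(size=(500, 2))
mixing = rng.normal(size=(2, 5))
X = latent @ mixing + 0.05 * rng.normal(size=(500, 5))

scores, components, ratio = pca_svd(X, n_components=2)
print(scores.shape)                             # reduced data: 500 rows, 2 columns
print(np.round(components @ components.T, 6))   # near-identity matrix: components are orthonormal
print(ratio.sum())                              # share of total variance kept by 2 components
```

Because the synthetic features are built from only two underlying factors, two components should capture nearly all of the variance here; real datasets rarely compress this cleanly.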

2. Reduces Correlated Feature Redundancy

When multiple variables convey overlapping information—as seen in financial, industrial, and sensor datasets—PCA merges them into fewer composite components.

This reduces multicollinearity and simplifies model training.

Removing redundant attributes helps avoid unstable coefficients in regression-based models and makes predictions more stable.

Such consolidation is valuable for datasets where correlated signals distort learning. PCA ensures relationships are preserved but expressed more compactly.
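The consolidation of correlated variables can be illustrated with a small NumPy sketch. The three "sensor" columns below are hypothetical, generated so that they all follow one shared signal; the sketch assumes PCA is computed from the eigendecomposition of the covariance matrix.

```python
import numpy as np

rng = np.random.default_rng(1)
# Hypothetical readings: three sensors that mostly track the same underlying signal.
signal = rng.normal(size=1000)
X = np.column_stack([
    signal + 0.1 * rng.normal(size=1000),
    2.0 * signal + 0.1 * rng.normal(size=1000),
    -signal + 0.1 * rng.normal(size=1000),
])

X_centered = X - X.mean(axis=0)
cov = np.cov(X_centered, rowvar=False)

# eigh returns eigenvalues in ascending order, so reorder them to descending.
eigenvalues, eigenvectors = np.linalg.eigh(cov)
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

scores = X_centered @ eigenvectors

corr_before = np.corrcoef(X, rowvar=False)   # strong off-diagonal correlations
cov_after = np.cov(scores, rowvar=False)     # off-diagonal entries vanish
share_first = eigenvalues[0] / eigenvalues.sum()

print(np.round(corr_before, 3))
print(np.round(cov_after, 6))
print(share_first)
```

In this setup the original columns are strongly correlated, while the component scores are uncorrelated and the first component alone carries most of the variance.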

3. Improves Computational Efficiency in Large Datasets

High-dimensional datasets slow down training and may strain memory.

PCA reduces dimensionality, resulting in smaller matrices that speed up downstream tasks like clustering, classification, or visualization.

This efficiency is crucial when dealing with millions of samples or real-time streaming environments.

The computational savings often translate into faster experimentation and lower infrastructure costs. PCA becomes a foundational preprocessing step in scalable pipelines.

4. Enhances Visualization and Pattern Discovery

By projecting high-dimensional data onto the first two or three principal components, PCA reveals broad trends, clusters, and outliers that are hard to see in the raw features.

Analysts can detect hidden structures, anomalies, or relationships between entities.

This improves exploratory data analysis and supports hypothesis development.

It is particularly useful in domains where interpretability matters, such as healthcare diagnostics or customer behavior segmentation.

5. Minimizes Noise by Filtering Weak Components

Components capturing minimal variance often correspond to noise or irrelevant fluctuations.

Keeping only the leading components discards these low-variance directions, resulting in cleaner and more stable datasets.

Removing noise in this way can improve the accuracy and consistency of subsequent models.

It can also reduce the risk of overfitting, although it does not guarantee against it.

This makes PCA especially valuable in domains like manufacturing quality control or sensor analytics where noise is prevalent.
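A rough sketch of this filtering effect, assuming a synthetic low-rank signal with added Gaussian noise: the data is reconstructed from only the top `k` components and compared against the clean signal. The data and the choice `k = 2` are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(2)
# Synthetic example: a rank-2 "clean" signal in 20 dimensions, plus noise.
clean = rng.normal(size=(300, 2)) @ rng.normal(size=(2, 20))
noisy = clean + 0.5 * rng.normal(size=clean.shape)

mean = noisy.mean(axis=0)
U, S, Vt = np.linalg.svd(noisy - mean, full_matrices=False)

# Keep only the k strongest components and map back to the original space.
k = 2
denoised = (U[:, :k] * S[:k]) @ Vt[:k] + mean

err_noisy = np.mean((noisy - clean) ** 2)
err_denoised = np.mean((denoised - clean) ** 2)
print(err_noisy, err_denoised)
```

The reconstruction error against the clean signal drops because most of the noise lies in the discarded low-variance directions. On real data the right number of components is not known in advance and is usually chosen from the explained-variance curve.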

6. Works as a Preprocessing Step for Many ML Algorithms

Models such as clustering algorithms, SVMs, and regression models benefit from PCA preprocessing, especially when dealing with high-dimensional inputs.

PCA simplifies the input space, which often helps algorithms converge faster and more reliably.

It can also help distance-based methods, since distances tend to become less informative as the number of dimensions grows. This synergy can improve overall pipeline performance.
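One way to wire PCA into a modeling workflow is a scikit-learn pipeline, sketched below. The digits dataset, the 95% variance threshold, and the SVM classifier are assumptions chosen for illustration, not recommendations from the text above.

```python
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# 8x8 digit images flattened to 64 pixel features.
X, y = load_digits(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# A float n_components keeps the fewest components that explain
# at least that fraction of the variance.
model = make_pipeline(StandardScaler(), PCA(n_components=0.95), SVC())
model.fit(X_train, y_train)

n_kept = model.named_steps["pca"].n_components_
accuracy = model.score(X_test, y_test)
print(n_kept, accuracy)
```

Fitting the scaler and PCA inside the pipeline means both are learned from the training split only, which avoids leaking information from the test data.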

t-SNE (t-Distributed Stochastic Neighbor Embedding)

t-Distributed Stochastic Neighbor Embedding (t-SNE) is a nonlinear dimensionality reduction technique designed primarily for visualizing high-dimensional data in two or three dimensions.

It is widely used to explore complex patterns, clusters, and structures that are difficult to observe in the original feature space.

1. Reveals Local Neighborhood Structure Effectively

t-SNE excels at preserving local relationships, ensuring points close in high-dimensional space remain close in low-dimensional projections.

This focus uncovers fine-grained clusters or hidden subgroups.

It is especially beneficial in complex domains where subtle local patterns are more important than global structure. Visualizations become intuitive, helping researchers understand data topology deeply.

2. Ideal for Visualizing Complex Nonlinear Manifolds

Unlike linear methods, t-SNE handles curved or nonlinear structures gracefully.

It maps high-dimensional manifolds into meaningful 2D/3D plots. This helps uncover shapes and patterns that linear models flatten out or distort.

Tools like t-SNE have become central in fields such as genome profiling, document embeddings, and neural representation analysis.

3. Provides Strong Cluster Separation in Visual Plots

t-SNE tends to pull distinct groups apart in the plot, making them visually identifiable. This makes it a favorite among analysts who need to differentiate types of images, documents, or biological cells.

Clear cluster delineation supports interpretation and diagnostic analysis, although the apparent sizes of clusters and the distances between them in a t-SNE plot are not reliable measures of the original geometry. With that caveat, it is useful as a quick check of dataset separability before model training.

4. Handles Very High-Dimensional Data Gracefully

t-SNE is capable of processing thousands of dimensions and condensing them into intuitive low-dimensional structures.

It handles language embeddings, gene sequences, and deep neural activations that traditionally defy interpretation.

This capability makes the technique extremely important in NLP and deep learning workflows.

5. Highly Sensitive to Hyperparameters (Perplexity, Learning Rate)

t-SNE’s performance relies heavily on tuning parameters such as perplexity, learning rate, and iteration count. Incorrect values may distort clusters or hide meaningful structures.

This sensitivity requires experimentation and domain understanding. However, proper tuning produces exceptional visual clarity, making it worth the additional effort.
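The effect of perplexity can be explored with a short sketch using scikit-learn's `TSNE`. The dataset, the subset size, and the two perplexity values are assumptions picked to keep the example fast; they are not tuned settings.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.manifold import TSNE

X, _ = load_digits(return_X_y=True)
X = X[:300]  # small subset so the sketch runs quickly

embeddings = {}
for perplexity in (5, 30):
    tsne = TSNE(
        n_components=2,
        perplexity=perplexity,   # roughly, the effective number of neighbors per point
        learning_rate=200.0,
        init="pca",
        random_state=0,          # fixed seed, since t-SNE is stochastic
    )
    embeddings[perplexity] = tsne.fit_transform(X)

for perplexity, emb in embeddings.items():
    print(perplexity, emb.shape)
```

Plotting the two embeddings side by side usually shows noticeably different layouts, which is why several settings are normally compared before drawing conclusions from a single plot.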

6. Best Suited for Exploration, Not Production Pipelines

Because standard t-SNE does not learn a reusable mapping, new data cannot be embedded without rerunning the algorithm, so it is rarely used in production.

It is designed primarily for visual analysis, not feature extraction for modeling.

This limitation means its strength lies in research and inspection rather than operational ML pipelines.

UMAP (Uniform Manifold Approximation and Projection)

UMAP (Uniform Manifold Approximation and Projection) is a powerful dimensionality reduction technique that transforms high-dimensional data into a low-dimensional space while preserving meaningful structure.

It balances local neighborhood relationships with global data layout, enabling insightful visualization and robust feature generation for machine learning.

1. Preserves Both Local and Global Data Structure

UMAP maintains neighborhood relationships like t-SNE but also retains more of the broader data layout, giving a more cohesive view of high-dimensional spaces.

This dual preservation provides a balanced representation, helping analysts capture both micro-patterns and overall structure.

As a result, cluster shapes and transitions between groups appear more natural and continuous.

2. Significantly Faster and More Scalable Than t-SNE

UMAP is typically much faster than t-SNE and can process very large datasets, up to millions of samples, without excessive computation.

It leverages efficient graph-based algorithms and approximate nearest-neighbor searches, making it suitable for enterprise-level data workflows.

Its speed advantage allows real-time or near-real-time visualization for large datasets.

3. Allows Reusable Transformations for New Data

Unlike t-SNE, UMAP can store its transformation model and apply it to unseen data, making it practical for production pipelines.

This capability supports building stable embedding systems used in recommendation engines, anomaly detection, or document clustering.

Its consistency elevates it from a visualization tool to an operational feature generator.

4. Highly Flexible Through Custom Parameters

Users can adjust parameters like n_neighbors and min_dist to control clustering density, smoothness, and separation.

This tunability helps shape the embedding for specific use cases—either tightly packing clusters or showing broader transitions.

Such flexibility allows fine-grained control over the resulting geometry.

5. Supports Multiple Data Modalities and Distance Metrics

UMAP accommodates various metrics—cosine, manhattan, euclidean, and more—making it effective for text, images, categorical patterns, and graph-like data.

This adaptability expands its use beyond numerical datasets.

It also handles sparse input efficiently, making it suitable for high-dimensional NLP representations.

6. Enhances Downstream ML Performance Through Compact Embeddings

UMAP-generated embeddings often improve the performance of clustering, classification, or retrieval models by emphasizing genuine structure and suppressing noise.

These embeddings tend to keep similar points close together, which can lead to better decision boundaries.

This makes UMAP valuable in domains requiring precise similarity understanding, such as search engines or customer segmentation systems.
