Support Vector Machines (SVM) represent a powerful class of supervised learning algorithms designed to construct the most discriminative boundary between classes.

Unlike many traditional linear classifiers, SVMs explicitly maximize the margin, the distance between the decision boundary and the nearest training points, which tends to improve generalization even when the training data is noisy or the classes overlap.

Kernel methods further expand SVM’s capabilities by allowing the model to perform complex transformations without explicitly mapping data into high-dimensional spaces.

This combination lets SVMs model the nonlinear patterns common in applications such as image recognition, genomic analysis, sentiment classification, and anomaly detection.

Together, SVMs and kernel-based techniques provide a mathematically well-founded and versatile framework that remains relevant in contemporary machine learning pipelines, particularly for small and medium-sized datasets.

Support Vector Machines (SVM)

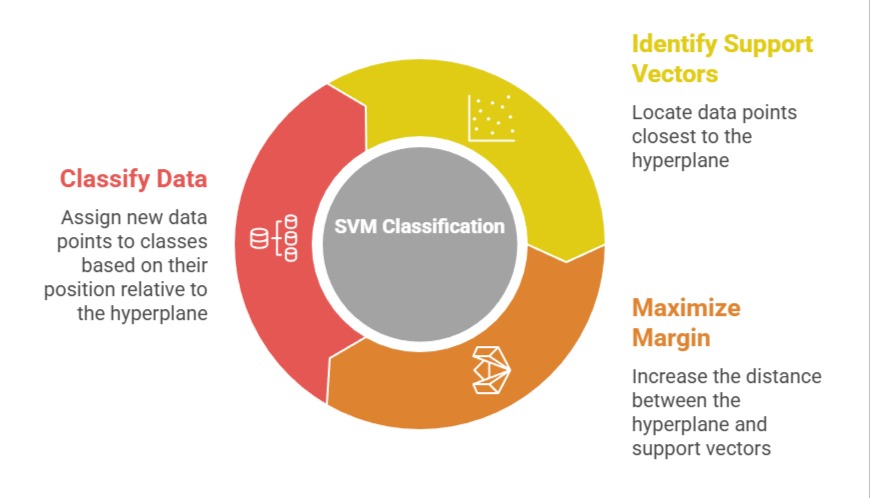

SVM is a margin-based classification and regression algorithm. For classification, it finds the hyperplane that separates the classes while maximizing the distance to the nearest data points, which are known as support vectors.

Importance of Support Vector Machines

Support Vector Machines (SVMs) are powerful supervised learning algorithms known for their strong theoretical foundation and reliable performance across diverse problem settings.

Their importance lies in their ability to generalize well, handle high-dimensional data, and produce robust decision boundaries.

1. Margin Maximization

SVM focuses on identifying the boundary that creates the widest separation between classes, improving robustness against data variability.

This emphasis on distance rather than empirical error helps reduce overfitting and ensures stable predictions on unseen samples.

The margin acts as a safety zone so even if data shifts slightly, classification remains reliable.

This property is particularly useful when working with structured or high-dimensional datasets.

For example, in email spam classification, SVM ensures spam and non-spam emails stay clearly distinguished by maximizing the separation margin.
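As a rough illustration of what "widest separation" means, the sketch below computes the geometric margin of two candidate hyperplanes on a small made-up dataset. The points, weights, and function names are assumptions chosen for illustration; nothing here trains a model.

```python
import math

def decision_value(w, b, x):
    """Signed score w.x + b; its sign gives the predicted class."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def geometric_margin(w, b, points, labels):
    """Smallest signed distance from any labeled point to the hyperplane."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(y * decision_value(w, b, x) / norm
               for x, y in zip(points, labels))

# Hypothetical 2-D toy data: two positives, two negatives.
points = [(2.0, 2.0), (3.0, 3.0), (0.0, 0.0), (-1.0, -1.0)]
labels = [1, 1, -1, -1]

# Both hyperplanes classify every point correctly, but with different margins.
wide = geometric_margin((0.5, 0.5), -1.0, points, labels)
narrow = geometric_margin((1.0, 0.0), -0.5, points, labels)

print(f"diagonal boundary margin: {wide:.3f}")
print(f"vertical boundary margin: {narrow:.3f}")
```

Both boundaries separate the data, yet the diagonal one leaves almost three times as much room around the closest points, which is the boundary a margin-maximizing method would prefer.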

2. Support Vectors as Critical Decision Points

Only a small subset of the dataset—support vectors—determines the final boundary, meaning SVM does not rely on all points equally.

Training points that lie on the correct side and far from the boundary can be added, removed, or duplicated without changing the solution, which keeps the model stable when large datasets contain redundant or duplicated records.

These support vectors influence the model’s orientation and stability, ensuring outputs remain consistent.

In handwriting recognition, for instance, the decision boundary is shaped by the relatively few character samples that lie closest to another class, not by the many unambiguous ones.
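The following sketch shows this dependence on a hand-solved toy problem. The decision function is written as a weighted sum over training points, and the weights (`alphas`) and `bias` are values worked out by hand for this specific dataset, not the output of a solver. Points with zero weight can be dropped without changing any prediction.

```python
def linear_kernel(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

# Hypothetical toy training set. For this data the max-margin solution is
# w = (0.5, 0.5), b = -1, which corresponds to the weights below.
train_x = [(2.0, 2.0), (3.0, 3.0), (0.0, 0.0), (-1.0, -1.0)]
train_y = [1, 1, -1, -1]
alphas = [0.25, 0.0, 0.25, 0.0]   # nonzero only for the two innermost points
bias = -1.0

def decision(x, xs=train_x, ys=train_y, a=alphas, b=bias):
    """f(x) = sum_i alpha_i * y_i * K(x_i, x) + b"""
    return sum(ai * yi * linear_kernel(xi, x)
               for ai, yi, xi in zip(a, ys, xs)) + b

# Keep only the support vectors (points with nonzero weight).
support = [(xi, yi, ai) for xi, yi, ai in zip(train_x, train_y, alphas) if ai > 0]
sv_x, sv_y, sv_a = zip(*support)

query = (1.5, 0.0)
full = decision(query)
reduced = decision(query, sv_x, sv_y, sv_a)
print(full, reduced)  # identical scores with and without the other points
```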

3. Effective in High-Dimensional Spaces

SVM handles scenarios where the number of features is significantly larger than the number of observations.

In such settings the margin-based formulation tends to hold up well, which makes it suitable for genomics, document classification, and text mining.

Because the solution can be expressed through inner products between samples, the cost of training depends more on the number of samples than on the number of features.

For example, SVM excels in classifying texts using TF-IDF vectors where feature counts can exceed tens of thousands.
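One way to see why very wide feature vectors stay manageable: a kernel SVM only needs pairwise inner products between samples, and sparse vectors make those cheap. The sketch below builds such a matrix of inner products (a Gram matrix) for three made-up documents. The words and weights are hypothetical stand-ins for TF-IDF values.

```python
def sparse_dot(a, b):
    """Inner product of two sparse vectors stored as {feature: weight} dicts."""
    if len(a) > len(b):
        a, b = b, a  # iterate over the shorter vector
    return sum(weight * b[term] for term, weight in a.items() if term in b)

# Hypothetical TF-IDF-like vectors; a real vocabulary could have tens of
# thousands of entries, but each document only stores its nonzero terms.
docs = [
    {"free": 0.8, "offer": 0.6},
    {"free": 0.6, "winner": 0.8},
    {"meeting": 0.6, "agenda": 0.8},
]

# The Gram matrix is n_samples x n_samples, regardless of vocabulary size.
gram = [[sparse_dot(a, b) for b in docs] for a in docs]

for row in gram:
    print([round(value, 2) for value in row])
```

The matrix has one row and column per document, so its size is driven by the number of samples, not by the number of features.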

4. Versatile for Classification and Regression (SVR)

Beyond classification, SVM can be extended to regression tasks (Support Vector Regression, SVR). SVR uses an ε-insensitive loss: predictions that fall within ε of the target incur no penalty, while larger deviations are penalized in proportion to how far they exceed ε.

This makes it useful for forecasting problems where precision around a specific tolerance is required.

For instance, SVR is widely applied in energy demand prediction where slight variations are tolerable but large deviations must be restricted.
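A minimal sketch of the ε-insensitive idea, using made-up demand figures: deviations inside the tolerance cost nothing, and anything beyond it is penalized by the excess. The numbers and the function name are illustrative assumptions.

```python
def epsilon_insensitive_loss(y_true, y_pred, epsilon):
    """Zero inside the tolerance band, linear in the excess outside it."""
    return max(0.0, abs(y_true - y_pred) - epsilon)

actual_demand = 100.0   # hypothetical observed value
epsilon = 5.0           # tolerated deviation

losses = {forecast: epsilon_insensitive_loss(actual_demand, forecast, epsilon)
          for forecast in (97.0, 104.0, 112.0)}

for forecast, loss in losses.items():
    print(f"forecast={forecast:6.1f}  loss={loss:.1f}")
```

Forecasts of 97 and 104 fall within the band and incur no loss, while 112 misses by 12 and is charged only for the 7 units beyond the tolerance.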

5. Strong Performance with Limited Samples

SVM does not require massive datasets to achieve high accuracy, making it valuable in domains where data collection is expensive or limited.

Its margins allow it to generalize based on fewer examples without compromising reliability.

Researchers often use SVM for medical imaging classifications where sample availability is constrained.

For example, tumor detection models often rely on SVM due to the small size of curated datasets.

6. Built-In Regularization Mechanism

SVM incorporates the C-parameter, which balances misclassification tolerance with margin width.

This built-in tradeoff makes it easy to tune models for either conservative or flexible decision boundaries.

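In highly noisy settings, lowering C widens the margin and tolerates more misclassified training points, while raising C fits the training data more tightly.

In credit risk prediction, for example, the choice of C determines whether the model bends its boundary to fit borderline applicants in the training data or keeps a wider, more conservative boundary.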
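To make the tradeoff concrete, the sketch below evaluates the standard soft-margin objective, 0.5·||w||² + C·(sum of hinge losses), for two hand-picked candidate boundaries on a made-up 1-D dataset. It does not run an optimizer; the data and both candidates are assumptions chosen so that the preferred boundary flips as C changes.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def hinge_loss(y, score):
    """Penalty for a point that is inside the margin or misclassified."""
    return max(0.0, 1.0 - y * score)

def soft_margin_objective(w, b, points, labels, C):
    regularizer = 0.5 * dot(w, w)                 # small when the margin is wide
    total_slack = sum(hinge_loss(y, dot(w, x) + b)
                      for x, y in zip(points, labels))
    return regularizer + C * total_slack

# Hypothetical 1-D data: the positive at -1.5 sits right next to the negatives.
points = [(2.0,), (3.0,), (-1.5,), (-2.0,), (-3.0,)]
labels = [1, 1, 1, -1, -1]

wide = ((0.5,), 0.0)    # wide margin, misclassifies the borderline point
tight = ((4.0,), 7.0)   # narrow margin, fits every training point

objectives = {
    C: (soft_margin_objective(*wide, points, labels, C),
        soft_margin_objective(*tight, points, labels, C))
    for C in (0.1, 100.0)
}

for C, (wide_cost, tight_cost) in objectives.items():
    better = "wide" if wide_cost < tight_cost else "tight"
    print(f"C={C:6.1f}  wide={wide_cost:8.3f}  tight={tight_cost:8.3f}  -> {better}")
```

With a small C the wide boundary has the lower objective even though it misclassifies one point; with a large C the tight boundary wins.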

7. Works Well for Non-linearly Separable Data with Kernels

When data forms curved or intertwined patterns, linear boundaries fail. However, pairing SVM with kernel functions enables the algorithm to detect intricate relationships.

These nonlinear transformations occur implicitly, making SVM both efficient and expressive.

For example, an SVM with a degree-2 polynomial kernel can separate classes arranged in concentric circles, and an RBF kernel can handle spiral-shaped classes, neither of which a linear boundary can separate.
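The circular case can be sketched without any SVM machinery. Two concentric rings cannot be split by a straight line in the original coordinates, but a single degree-2 feature (the squared radius) makes a simple threshold sufficient. The ring radii, point counts, and threshold are illustrative assumptions.

```python
import math

def ring(radius, n_points):
    """Evenly spaced points on a circle centered at the origin."""
    return [(radius * math.cos(2 * math.pi * k / n_points),
             radius * math.sin(2 * math.pi * k / n_points))
            for k in range(n_points)]

inner = ring(1.0, 12)   # class -1
outer = ring(3.0, 12)   # class +1

def squared_radius(p):
    """A degree-2 feature: x1^2 + x2^2."""
    return p[0] ** 2 + p[1] ** 2

THRESHOLD = 4.0  # any value between 1^2 and 3^2 works for this toy data

def predict(p):
    return 1 if squared_radius(p) > THRESHOLD else -1

correct = sum(predict(p) == -1 for p in inner) + sum(predict(p) == 1 for p in outer)
print(f"{correct} of {len(inner) + len(outer)} points classified correctly")
```

A kernel SVM reaches a comparable result without constructing the squared features by hand.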

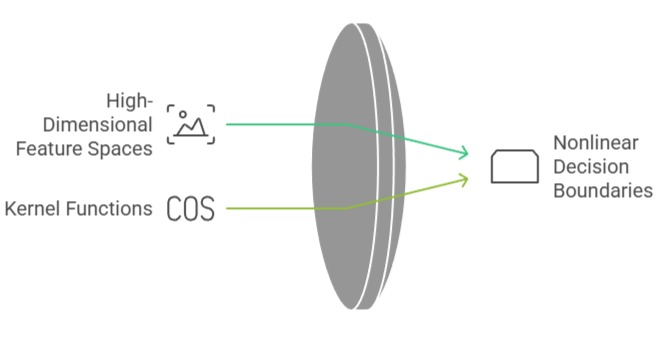

Kernel Methods

Kernel methods enable SVM to operate in high-dimensional feature spaces without explicitly computing mappings.

They measure similarity between data points through kernel functions, enabling nonlinear decision boundaries.

Importance of Kernel Methods

Kernel methods extend the power of machine learning algorithms by enabling them to model complex, nonlinear relationships efficiently.

Their importance lies in providing flexible, expressive transformations that make otherwise inseparable data patterns learnable.

1. Implicit Feature Transformation (Kernel Trick)

The kernel trick allows complex features to be analyzed without constructing them explicitly, saving memory and computation time.

This makes it feasible to create sophisticated classifiers even when original features appear linearly inseparable.

The idea is to rely on similarity scores that represent hidden relationships.

For example, an RBF kernel can separate classes whose regions are circular or irregular in shape.
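The kernel trick can be checked numerically on a small case. For 2-D inputs, the degree-2 polynomial kernel (x·z)² gives the same value as an ordinary dot product in a 3-D feature space built from squares and cross terms. The sketch below compares the two routes; the input vectors are arbitrary.

```python
import math

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def poly2_kernel(x, z):
    """Implicit route: one dot product in the original 2-D space, then square."""
    return dot(x, z) ** 2

def explicit_map(x):
    """Explicit route: map (x1, x2) to (x1^2, sqrt(2)*x1*x2, x2^2)."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)

x, z = (1.0, 2.0), (3.0, -1.0)

implicit = poly2_kernel(x, z)
explicit = dot(explicit_map(x), explicit_map(z))

print(implicit, explicit)  # equal up to floating-point rounding
```

For higher degrees and dimensions the explicit feature space grows quickly, while the kernel evaluation stays a single dot product followed by a power.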

2. Handles Highly Nonlinear Data Efficiently

Kernel methods transform tangled data structures into spaces where separation becomes straightforward.

This capability is crucial for domains like image segmentation, biometric identification, or speech classification.

Kernels capture fine-grained patterns that are otherwise missed in raw feature space.

In facial emotion recognition, RBF kernels detect subtle texture variations and separate emotional categories effectively.

3. Multiple Kernel Choices for Different Data Patterns

Common kernels include Linear, Polynomial, RBF, and Sigmoid, each capturing different types of relationships.

This flexibility allows practitioners to align transformation behavior with domain characteristics.

Selecting a kernel that matches the data can substantially improve model accuracy. For instance, polynomial kernels often work well in manufacturing fault detection when a fault depends on interactions (products) among several measured variables.
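For reference, the four kernels named above can be written in a few lines each. This is a plain-Python sketch of their usual textbook forms. The parameter names (`gamma`, `coef0`, `degree`) and default values are assumptions that follow common convention, not something the text specifies.

```python
import math

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def linear_kernel(x, z):
    return dot(x, z)

def polynomial_kernel(x, z, degree=3, gamma=1.0, coef0=1.0):
    return (gamma * dot(x, z) + coef0) ** degree

def rbf_kernel(x, z, gamma=1.0):
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * squared_distance)

def sigmoid_kernel(x, z, gamma=1.0, coef0=0.0):
    return math.tanh(gamma * dot(x, z) + coef0)

x, z = (1.0, 2.0), (3.0, 4.0)
print("linear    :", linear_kernel(x, z))
print("polynomial:", polynomial_kernel(x, z, degree=2))
print("rbf       :", rbf_kernel(x, z, gamma=0.1))
print("sigmoid   :", sigmoid_kernel(x, z, gamma=0.01))
```

Each function returns a similarity score for a pair of points; what differs is which kinds of closeness or interaction the score rewards.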

4. Ability to Model Infinite-Dimensional Spaces

Some kernels, like RBF, correspond to infinite-dimensional feature mappings.

This representational capacity lets SVM fit extremely complex class structures, although avoiding overfitting still depends on tuning gamma and C.

Infinite mapping is done implicitly, keeping computation feasible.

For example, classes arranged in concentric rings can be separated easily with an RBF kernel SVM.
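The infinite-dimensional claim can be made tangible in one dimension. There, exp(-γ(x-z)²) factors into exp(-γx²)·exp(-γz²)·exp(2γxz), and the last factor expands into an infinite power series, giving one feature per series term. The sketch below truncates that series and shows the truncated dot product approaching the exact kernel value. The inputs and term counts are arbitrary choices.

```python
import math

def rbf_1d(x, z, gamma):
    """Exact RBF kernel for scalar inputs."""
    return math.exp(-gamma * (x - z) ** 2)

def truncated_features(x, gamma, n_terms):
    """First n_terms coordinates of the (infinite) RBF feature map in 1-D."""
    scale = math.exp(-gamma * x * x)
    return [scale * math.sqrt((2 * gamma) ** n / math.factorial(n)) * x ** n
            for n in range(n_terms)]

def approx_rbf(x, z, gamma, n_terms):
    fx = truncated_features(x, gamma, n_terms)
    fz = truncated_features(z, gamma, n_terms)
    return sum(a * b for a, b in zip(fx, fz))

x, z, gamma = 0.5, 1.2, 1.0
exact = rbf_1d(x, z, gamma)
errors = [abs(approx_rbf(x, z, gamma, n) - exact) for n in (2, 5, 20)]

for n, err in zip((2, 5, 20), errors):
    print(f"{n:2d} features -> absolute error {err:.2e}")
```

The kernel function itself never builds these features; it returns the limiting value directly.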

5. Adaptive Decision Boundaries

Kernel SVM can bend, twist, and adapt boundaries according to underlying data formations.

This adaptability enables precise modeling of irregular or noisy datasets.

Industries such as finance use kernel SVM to detect fraud patterns with hidden nonlinear relationships.

For example, subtle spending behaviors can be identified using curvature-based boundaries from kernel transformations.

6. Robust Against Outliers When Tuned Properly

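Kernel SVM models include parameters such as gamma (for RBF), which controls how far the influence of a single training point reaches: a large gamma makes each point's influence very local, while a small gamma produces smoother boundaries.

Proper tuning of gamma together with C prevents the model from being overly influenced by outliers.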

This makes kernel methods reliable for anomaly detection. For example, telecom networks use kernel SVM to isolate abnormal traffic patterns.
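The effect of gamma is easy to see by evaluating the RBF kernel between one reference point and a near and a far neighbor. The points and gamma values below are illustrative assumptions.

```python
import math

def rbf_kernel(x, z, gamma):
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * squared_distance)

anchor = (0.0, 0.0)
near = (0.5, 0.0)
far = (3.0, 0.0)

similarity = {gamma: (rbf_kernel(anchor, near, gamma), rbf_kernel(anchor, far, gamma))
              for gamma in (0.1, 1.0, 10.0)}

for gamma, (near_sim, far_sim) in similarity.items():
    print(f"gamma={gamma:5.1f}  near={near_sim:.3f}  far={far_sim:.3e}")
```

With a small gamma even the distant point still counts as fairly similar, so every training point influences a broad region. With a large gamma the similarity collapses almost to zero beyond a short distance, so an isolated outlier can carve out its own small region unless gamma is kept in check.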

7. Supports Complex Similarity Measures for Custom Applications

Beyond standard kernels, domain experts can design custom kernel functions tailored to specific structures like graphs, strings, or sequences.

This expands SVM to areas such as bioinformatics or text mining.

For example, string kernels can compare DNA sequences directly, which makes genetic classification possible without hand-crafted numeric features.
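As one simple member of the string-kernel family, the sketch below implements a k-mer spectrum kernel: two sequences are scored by how many length-k substrings they share, weighted by how often each occurs. The sequences and the choice k=3 are made up for illustration, and this is not presented as the kernel used in any particular study.

```python
from collections import Counter

def kmer_counts(sequence, k):
    """Count every contiguous substring of length k."""
    return Counter(sequence[i:i + k] for i in range(len(sequence) - k + 1))

def spectrum_kernel(a, b, k=3):
    """Dot product of the two k-mer count vectors."""
    counts_a, counts_b = kmer_counts(a, k), kmer_counts(b, k)
    return sum(count * counts_b[kmer]
               for kmer, count in counts_a.items() if kmer in counts_b)

seq_a = "ACGTACGT"
seq_b = "ACGTTTTT"
seq_c = "GGGGGGGG"

print(spectrum_kernel(seq_a, seq_b))  # shares ACG and CGT
print(spectrum_kernel(seq_a, seq_c))  # no 3-mers in common
```

Because the result is an inner product of count vectors, it can be used wherever an SVM expects a kernel value, with no need to encode the sequences as fixed-length numeric features first.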

Case Study 1: Handwritten Digit Recognition (MNIST Dataset)

Industry: Computer Vision

Method Used: SVM with RBF Kernel

The MNIST dataset, containing 70,000 grayscale images of handwritten digits (60,000 for training and 10,000 for testing), has been a classic benchmark for evaluating classification algorithms.

Early machine learning systems struggled to distinguish subtle variations in human handwriting such as slanted strokes, incomplete curves, or imperfect edges.

SVM combined with the RBF kernel became one of the first highly accurate models to solve this challenge.

Why SVM Works Here

1. The RBF kernel captures nonlinear boundaries between digit classes in the 784-dimensional pixel space (28×28 images).

2. High-dimensional space representation helps differentiate digits like "3" and "8" despite overlapping shapes.

3. SVM’s margin-based learning avoids overfitting on noisy handwriting patterns.

Outcome: SVM achieved over 98% accuracy, outperforming many basic neural networks of the time. It also required far fewer parameters, making it computationally lighter for early systems.

Case Study 2: Bioinformatics – Cancer Gene Expression Classification

Industry: Healthcare & Genomics

Method Used: SVM with Linear & Polynomial Kernels

Gene expression datasets often contain thousands of features (genes) but very few patient samples.

Traditional classifiers struggle when features vastly outnumber samples. SVM became a preferred method for cancer subtype identification from microarray data.

Why SVM Works Here

1. SVM naturally handles high-dimensional data where feature count >> number of samples.

2. Margin maximization prevents model instability despite the small dataset size.

3. Kernels help uncover nonlinear relations between gene interactions.

Outcome: SVM models successfully classified cancer subtypes such as leukemia and lymphoma, enabling more targeted diagnosis. The technique demonstrated >95% accuracy in many published studies.
