Convolutional Neural Networks (CNNs) have revolutionized computer vision by enabling models to automatically learn hierarchical feature representations from images.

Over the years, various CNN architectures have emerged, each introducing unique design innovations to tackle challenges like vanishing gradients, computational complexity, and feature extraction efficiency.

Among the most influential models are AlexNet, VGG, ResNet, and Inception, which have set benchmarks in image classification, object detection, and feature learning. These architectures differ in depth, convolutional filter designs, and strategies for improving network performance.

Understanding their structure, advantages, and limitations is crucial for designing efficient deep learning systems.

Each of these networks has contributed significantly to the evolution of CNNs, introducing concepts such as deep feature hierarchies, residual learning, and multi-scale feature extraction, which remain foundational in modern computer vision tasks.

AlexNet

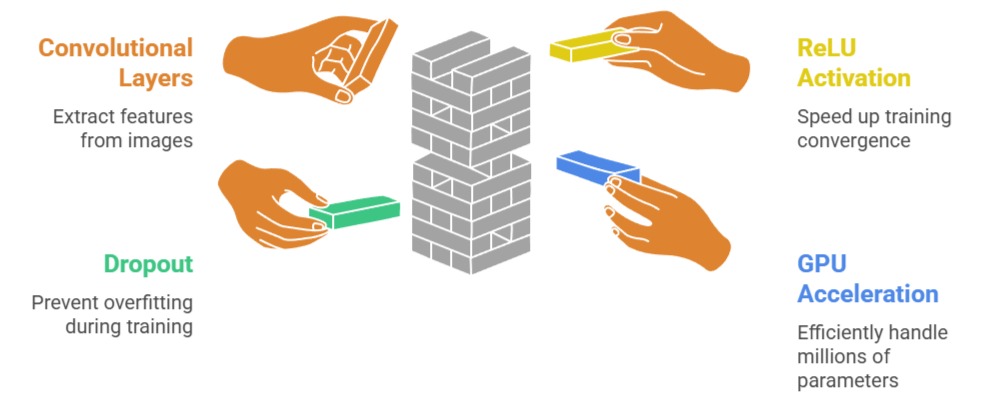

AlexNet, developed by Alex Krizhevsky in 2012, marked a turning point in the field of deep learning and computer vision.

AlexNet, developed by Alex Krizhevsky in 2012, marked a turning point in the field of deep learning and computer vision.

It was the first convolutional neural network to achieve remarkable success on the large-scale ImageNet dataset, winning the ILSVRC competition by a significant margin.

The architecture consists of five convolutional layers followed by three fully connected layers, utilizing ReLU activation to speed up convergence and dropout to prevent overfitting.

AlexNet also popularized the use of GPU acceleration for training deep networks, making it feasible to handle millions of parameters efficiently.

Its design incorporated data augmentation techniques such as image flipping, cropping, and color jittering to improve generalization on unseen data.

Advantages

1. Breakthrough in Deep Learning

AlexNet was the first CNN to achieve groundbreaking results on the ImageNet dataset, demonstrating that deep neural networks could outperform traditional computer vision methods.

Its success popularized deep learning in academia and industry.

By proving that convolutional layers combined with GPUs could scale to millions of parameters and handle real-world images, AlexNet set a benchmark for all future CNN architectures.

Its impact went beyond performance, inspiring researchers to explore deeper networks and complex image recognition problems.

2. Faster Training with ReLU Activation

AlexNet replaced traditional sigmoid and tanh activations with ReLU (Rectified Linear Units), which significantly sped up training convergence.

ReLU reduces the likelihood of vanishing gradients in deep networks and allows models to learn more complex features efficiently.

By accelerating gradient flow, the network could be trained faster without compromising performance.

This change influenced almost all subsequent CNN architectures, establishing ReLU as the default activation function for deep networks.

3. Data Augmentation

AlexNet used extensive data augmentation techniques, including random cropping, flipping, and color jittering, to artificially increase dataset size and diversity.

This helped the network generalize better to unseen data, reducing overfitting on the training set.

By exposing the network to multiple variations of the same image, AlexNet became more robust to transformations and noise.

Data augmentation remains a standard practice in training modern deep networks, highlighting AlexNet’s influence on training strategies.

4. Dropout for Regularization

Dropout was introduced in the fully connected layers of AlexNet to prevent overfitting.

By randomly deactivating neurons during training, the network avoids co-adaptation of features and forces neurons to learn more robust representations.

Dropout improves generalization and enhances model performance on validation and test sets.

Its effectiveness in AlexNet motivated widespread adoption in deep learning models across various domains, including image recognition and natural language processing.

5. GPU Utilization

AlexNet’s training leveraged GPUs, which dramatically reduced computation time and enabled the handling of large-scale image datasets. Before AlexNet, deep networks were mostly trained on CPUs, making them prohibitively slow for practical use.

GPU acceleration allowed parallel computation of convolutions and matrix operations, making deep learning scalable and feasible.

This approach paved the way for the GPU-based deep learning revolution we see today.

6. Ease of Understanding and Implementation

Despite its depth and parameter size, AlexNet’s architecture is straightforward, consisting of 5 convolutional layers followed by 3 fully connected layers.

Its simplicity allows beginners and researchers to understand and implement the network easily, making it a popular educational model.

The clear structure also facilitates experimentation with modifications, such as changing filter sizes or adding normalization layers.

7. Foundation for Modern CNNs

AlexNet laid the groundwork for more complex CNN architectures like VGG, ResNet, and Inception.

Concepts introduced in AlexNet, such as ReLU activation, dropout, and GPU training, became fundamental practices in deep learning.

By providing a successful and reproducible framework, it enabled further innovation in designing deeper and more efficient networks for various computer vision tasks.

Disadvantages

1. Large Number of Parameters

AlexNet contains over 60 million parameters, making it extremely memory-intensive.

Training and storing such a network requires significant hardware resources, including high-end GPUs with large VRAM.

This limits its usability in low-resource environments and increases both training cost and time.

Deploying the model on mobile devices or edge applications is challenging without additional optimizations like pruning or quantization.

2. Limited Depth

Compared to modern architectures like ResNet or Inception, AlexNet is relatively shallow.

Its five convolutional layers are insufficient to capture highly abstract or hierarchical features in complex datasets.

This limitation restricts its performance on more challenging tasks that require deep feature representations, such as fine-grained image recognition or scene understanding.

3. Overfitting Risk

Despite dropout and data augmentation, AlexNet can overfit smaller datasets due to the high number of parameters.

Without large-scale datasets like ImageNet, the network’s performance can degrade significantly.

Overfitting also requires careful tuning of learning rates, batch sizes, and regularization strategies, which complicates the training process.

4. Large Convolution Filters in Early Layers

AlexNet uses 11x11 and 5x5 convolutional filters in its initial layers.

While effective for large images, these filters may fail to capture fine-grained, localized features, limiting feature extraction quality.

Modern architectures prefer smaller filters (e.g., 3x3) to achieve deeper networks and better representation learning without increasing parameters unnecessarily.

5. Not Optimized for Very Deep Networks

AlexNet does not employ mechanisms like skip connections or batch normalization, which are essential for training very deep networks.

As a result, scaling AlexNet to hundreds of layers is infeasible due to vanishing gradient and optimization challenges.

This makes it less adaptable for extremely deep learning tasks.

6. High Computational Demand

Training AlexNet from scratch is computationally expensive. Large datasets, high-resolution images, and multiple epochs contribute to long training times.

Researchers need access to multiple GPUs or distributed computing clusters to train the model efficiently, limiting accessibility for smaller labs or individual practitioners.

7. Deployment Challenges

Due to its size and complexity, AlexNet is not suitable for deployment in resource-constrained environments.

Optimization strategies such as model compression or pruning are required, which can be complex and may impact accuracy.

This limits its practicality for real-time applications on mobile or embedded devices.

VGG (Visual Geometry Group Network)

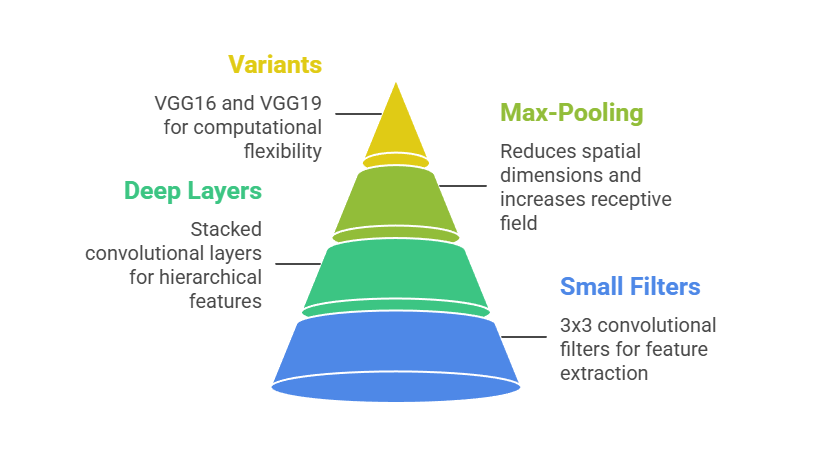

VGG, developed by the Visual Geometry Group at Oxford in 2014, focused on demonstrating that increasing the depth of convolutional networks could significantly improve performance.

Its main contribution was the systematic use of small 3x3 convolutional filters stacked in deep layers, combined with max-pooling layers, to extract hierarchical features efficiently.

The network comes in multiple variants, including VGG16 and VGG19, indicating the number of weight layers, which allows flexibility for different computational resources.

Unlike complex architectures, VGG maintains a simple and uniform design, making it highly interpretable and easy to implement. Its straightforward approach showed that depth, more than complicated filter designs, is critical to achieving high accuracy.

VGG’s pretrained models are widely used in transfer learning because the learned feature representations generalize well to other datasets.

Overall, VGG provides a strong foundation for understanding how depth and small convolutional filters affect feature extraction and model performance in deep learning.

Advantages

1. Uniform and Modular Architecture

VGG’s consistent use of 3x3 convolutional filters across all layers makes the network simple to understand and implement.

The uniform design allows researchers and practitioners to easily replicate or extend the architecture without worrying about varying filter sizes or complex modules.

This clarity supports experimental modifications and integration into larger pipelines, making it an excellent choice for both learning and research applications.

Its modular design also helps in visualizing feature hierarchies at different layers, which is critical for understanding how deep networks process information.

2. Deep Feature Representation

By stacking many convolutional layers, VGG can capture hierarchical and abstract features of input images.

Early layers focus on edges and textures, while deeper layers extract complex patterns and semantic information.

This depth improves classification accuracy, particularly for challenging datasets with fine-grained details.

The network’s ability to learn such rich representations makes it highly effective for tasks beyond classification, such as object detection and segmentation, where understanding both local and global features is crucial.

3. Strong Transfer Learning Capability

VGG’s pretrained models are widely used in transfer learning due to their rich feature representations.

Researchers can use these pretrained weights as feature extractors for new tasks, reducing training time and improving performance on smaller datasets.

This flexibility has made VGG a cornerstone for computer vision applications, including facial recognition, medical image analysis, and satellite imagery.

Its ability to generalize well to different domains demonstrates the robustness of the learned features.

4. Reproducible and Reliable

The simplicity of VGG ensures that results are consistent across different implementations and datasets.

This reproducibility is critical in research, where comparing models requires reliable benchmarks.

VGG’s architecture allows practitioners to predict performance trends based on network depth, enabling systematic experimentation.

Its reliability has made it a standard reference model in many academic papers and tutorials, providing a strong foundation for educational and research purposes.

5. Flexibility in Depth

VGG comes in multiple variants, including VGG16 and VGG19, allowing researchers to adjust the network depth according to the complexity of the task or available computational resources.

Increasing depth generally improves accuracy but requires more memory and computation.

This flexibility enables practical experimentation to find the optimal trade-off between model performance and computational cost.

6. Educational Value:

VGG is often used in teaching deep learning concepts due to its simplicity and effectiveness.

Students can clearly see how stacking small filters increases depth and how deeper layers improve feature extraction.

The architecture also helps illustrate concepts like max-pooling, fully connected layers, and ReLU activation in a structured way.

Its widespread adoption in tutorials and courses has made it an essential learning tool.

7. Integration-Friendly

VGG’s straightforward design makes it suitable for integration as a backbone in more complex architectures, including object detection models like Faster R-CNN or segmentation networks.

Its layers can be easily extracted for feature maps, making it highly versatile for advanced applications that require pretrained feature extractors.

This adaptability has ensured its continued relevance even as newer architectures have emerged.

Disadvantages

1. High Memory and Computational Requirements

VGG has a large number of parameters, resulting in high memory consumption and slow training.

Deploying it requires substantial GPU resources, limiting its usability in environments with limited hardware, such as mobile devices or embedded systems.

Training from scratch is especially time-consuming for large datasets.

2. Overfitting on Small Datasets

The large number of weights in VGG makes it prone to overfitting if trained on small datasets.

Despite using techniques like dropout, the network still requires careful regularization and data augmentation to perform well on limited data.

This makes it less practical for applications where large-scale annotated data is unavailable.

3. Long Training Time

Due to its depth and high parameter count, VGG requires extensive time to train from scratch.

Even with GPUs, training can take days on large datasets like ImageNet, making experimentation slow.

This time constraint can be a bottleneck for researchers and practitioners who want to iterate quickly.

4. Not Optimized for Resource-Limited Environments

VGG’s size and computation demand make it unsuitable for real-time applications or deployment on low-power devices.

Optimizations such as pruning or quantization are necessary, which may introduce additional complexity and reduce accuracy.

5. No Architectural Innovations for Gradient Flow

Unlike ResNet, VGG does not include skip connections or other mechanisms to facilitate gradient flow.

As networks become deeper, training can become unstable, and vanishing gradient issues may arise, limiting scalability beyond certain depths.

6. Rigid Convolutional Structure

The uniform 3x3 convolutional layers, while simple, limit flexibility in capturing multi-scale features.

Networks like Inception handle multi-scale feature extraction more efficiently by combining different filter sizes in parallel, which VGG cannot inherently do.

7. Large Model Size for Deployment

With hundreds of millions of parameters in deeper variants, VGG consumes significant storage space, making it difficult to deploy in scenarios where memory efficiency is crucial.

This limits its practical usability in edge computing or mobile applications.

ResNet (Residual Networks)

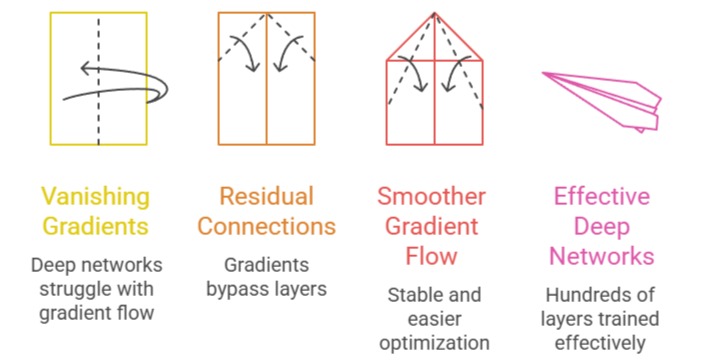

ResNet, introduced by Kaiming He and colleagues in 2015, revolutionized deep learning by addressing the long-standing vanishing gradient problem that limits very deep neural networks.

Its key innovation, residual connections, allows gradients to bypass layers, enabling networks with hundreds of layers to be trained effectively without performance degradation.

By learning residual mappings instead of direct input-output transformations, ResNet ensures smoother gradient flow, making deep networks more stable and easier to optimize.

The architecture comes in multiple variants, including ResNet-50, ResNet-101, and ResNet-152, where the number indicates the depth of the network.

ResNet has been a foundational model for modern computer vision tasks, including object detection, segmentation, and facial recognition, due to its strong feature extraction capabilities.

It also popularized the concept of very deep networks that are both scalable and efficient, paving the way for hybrid architectures like Inception-ResNet.

The network’s combination of depth, modularity, and residual learning makes it a benchmark for training extremely deep convolutional neural networks.

Advantages

1. Residual Connections Facilitate Deep Training

The introduction of skip connections in ResNet allows gradients to bypass certain layers, preventing vanishing gradient problems that often occur in very deep networks.

This innovation enables training of networks with hundreds of layers while maintaining stable convergence.

Residual learning makes optimization easier, ensures that the deeper layers do not degrade performance, and allows the network to learn identity mappings if necessary.

This feature has made ResNet a standard architecture for very deep CNNs.

2. Exceptional Accuracy on Large-Scale Datasets

ResNet consistently achieves state-of-the-art performance on benchmark datasets like ImageNet.

Its ability to maintain performance as depth increases allows it to capture complex hierarchical features, improving classification accuracy on challenging datasets.

The network’s design ensures robust learning of both low-level and high-level features, making it highly effective for complex visual recognition tasks.

3. Faster Convergence Compared to Traditional Deep Networks

Residual connections improve gradient flow, which accelerates convergence during training.

Networks converge faster because gradients propagate efficiently through the layers, reducing the number of epochs required to reach optimal performance.

This results in time and resource savings during training, especially for very deep networks that would otherwise take significantly longer to optimize.

4. Versatile Feature Extraction for Transfer Learning

ResNet provides highly effective hierarchical feature representations, making it a preferred choice for transfer learning.

Its pretrained models can be used as feature extractors for a wide range of tasks, including object detection, segmentation, and even medical image analysis.

The learned features generalize well across domains, allowing faster development of new models with high performance on smaller datasets.

5. Scalable Deep Architecture

With variants like ResNet-50, ResNet-101, and ResNet-152, the architecture can be scaled according to computational resources and task complexity.

This scalability allows practitioners to balance accuracy and computational cost effectively.

By choosing the appropriate depth, ResNet provides flexibility for research and practical applications, making it suitable for both large-scale and resource-constrained environments.

6. Robustness and Generalization

The combination of residual learning and batch normalization ensures that ResNet generalizes well to unseen data.

Regularization and skip connections reduce overfitting, making it more reliable across different datasets.

This robustness has made ResNet a backbone for many advanced computer vision pipelines, ensuring consistent performance in real-world applications.

7. Foundation for Advanced Architectures

ResNet’s residual block concept has influenced numerous modern architectures, including Inception-ResNet and DenseNet. Its modular design allows easy integration into hybrid networks, extending its applicability beyond pure classification tasks.

By setting a benchmark for training very deep networks efficiently, ResNet continues to be a key reference model for state-of-the-art CNN research.

Disadvantages

1. Complexity for Beginners

The residual block design introduces additional layers and shortcut connections, which can be challenging for newcomers to understand and implement.

Compared to simpler architectures like VGG or AlexNet, the learning curve for ResNet is steeper, requiring a solid understanding of deep learning concepts to modify or optimize effectively.

2. Computationally Intensive for Very Deep Variants

Deep ResNet models, such as ResNet-152, require substantial GPU resources and memory to train.

The combination of depth and large parameter counts makes these networks expensive in terms of computation and storage, limiting their accessibility for smaller research labs or low-resource environments.

3. Not Ideal for Low-Power Devices

Deploying very deep ResNet models on mobile or embedded systems is challenging due to memory and processing requirements.

Without pruning, quantization, or other optimization techniques, running these models in real-time on constrained hardware is difficult.

4. Hyperparameter Sensitivity

Training ResNet effectively requires careful tuning of learning rates, batch sizes, and optimizers. Residual connections help with gradient flow, but without proper hyperparameter selection, training can still be suboptimal.

Beginners may struggle to achieve stable convergence on very deep models without extensive experimentation.

5. Large Model Size

Despite efficiency improvements, ResNet variants contain millions of parameters, which increases storage and memory demands.

Very deep networks like ResNet-152 may require multiple GPUs for training, adding to the operational cost and infrastructure requirements.

6. Overhead of Residual Connections

While skip connections improve training, they add computational overhead during forward and backward passes.

This slightly increases memory usage and computational time compared to traditional sequential architectures.

7. Dependency on Large Training Data

ResNet performs best with large-scale datasets. On smaller datasets, it may not achieve its full potential without fine-tuning, augmentation, or transfer learning.

This dependence can be a limitation in scenarios where annotated data is scarce.

Inception (GoogLeNet)

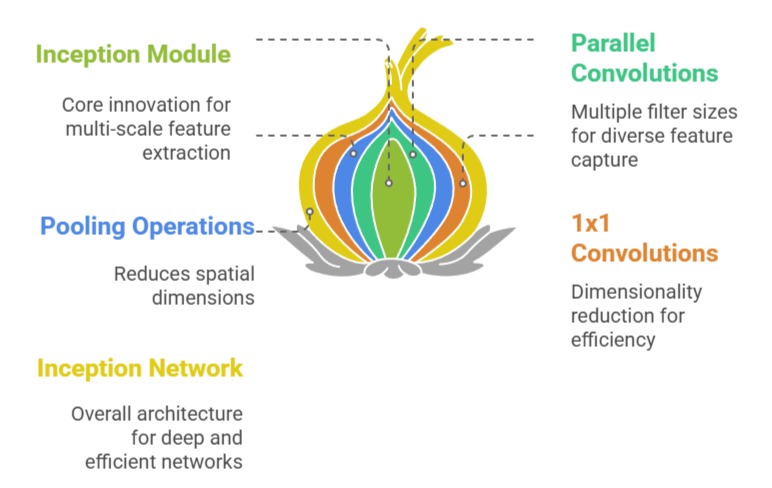

Inception, also known as GoogLeNet, was introduced by Szegedy and colleagues in 2014 to improve computational efficiency while enabling very deep networks.

Its primary innovation is the Inception module, which performs parallel convolutions with multiple filter sizes (1x1, 3x3, 5x5) and pooling operations within the same layer, allowing the network to capture multi-scale features effectively.

The architecture also incorporates 1x1 convolutions to reduce dimensionality, minimizing computational cost without sacrificing depth. Inception networks are significantly deeper than AlexNet or VGG, yet they maintain efficiency through careful design and parameter reduction.

The network won the ImageNet Large Scale Visual Recognition Challenge in 2014 due to its excellent performance and computational optimization.

Additionally, auxiliary classifiers are included during training to combat vanishing gradients, helping the network converge faster.

Overall, Inception represents a balance between depth, width, and efficiency, making it a powerful architecture for a wide range of computer vision tasks.

Advantages

1. Multi-Scale Feature Extraction

The Inception module performs parallel convolutions with different filter sizes, capturing both fine and coarse features simultaneously.

This design enables the network to analyze patterns at multiple scales within the same layer, improving its ability to recognize complex objects and textures.

Multi-scale feature extraction enhances performance on datasets with diverse objects and intricate details, making Inception highly versatile for real-world applications.

2. Parameter Efficiency with 1x1 Convolutions

Inception uses 1x1 convolutions as bottleneck layers to reduce the number of input channels before applying computationally expensive 3x3 and 5x5 convolutions.

This technique decreases the total number of parameters and computational cost, allowing the network to be very deep without requiring excessive hardware resources.

It also prevents overfitting by limiting unnecessary parameters while maintaining the capacity to learn complex feature representations.

3. High Accuracy on Large Datasets

Inception achieves excellent performance on large-scale image datasets like ImageNet. Its multi-scale processing and deep architecture allow it to learn intricate features and semantic patterns efficiently.

The combination of depth and parallel filter operations ensures that the network can extract both global and local features, resulting in superior classification accuracy compared to many earlier architectures.

4. Auxiliary Classifiers for Faster Convergence

Inception includes auxiliary classifiers at intermediate layers to provide additional gradient signals during training.

These classifiers help combat the vanishing gradient problem, accelerate convergence, and improve overall training stability.

By introducing intermediate supervision, the network learns more effectively at earlier stages, leading to better feature representations and improved generalization.

5. Scalable and Flexible Architecture

Inception modules can be stacked to create extremely deep networks without a proportional increase in computational cost.

This modular design allows researchers to build deeper networks, adjust filter sizes, and experiment with architectural variations efficiently.

The scalability makes Inception suitable for both high-performance applications and research experiments exploring novel CNN designs.

6. Transfer Learning Capability

Inception’s pretrained models are highly effective for transfer learning.

The network’s multi-scale feature extraction provides rich representations that generalize well to other tasks such as object detection, segmentation, and medical imaging.

Fine-tuning Inception on smaller datasets often yields state-of-the-art performance due to the robustness and versatility of the learned features.

7. Balanced Depth and Efficiency

Inception achieves a balance between network depth, width, and computational efficiency.

By combining multiple filter sizes and bottleneck layers, it maintains high performance while reducing unnecessary parameters.

This balance allows Inception to compete with deeper networks like ResNet in accuracy while being more efficient in terms of computation and memory usage.

Disadvantages

1. Complex Architecture

The parallel branches in each Inception module make the network more difficult to understand, implement, and debug compared to simpler architectures like VGG or AlexNet.

Beginners may find it challenging to modify the network or adapt it to new tasks due to the intricate structure of the modules.

2. Hyperparameter Tuning Complexity

Achieving optimal performance requires careful tuning of filter sizes, number of channels, and module configurations.

Selecting incorrect parameters can lead to suboptimal performance, making experimentation more time-consuming and technically demanding.

3. Training Overhead

While Inception reduces computational cost compared to naive deep networks, the presence of multiple parallel branches increases the complexity of forward and backward passes.

This adds some overhead in memory and computation during training, especially in very deep variants like Inception-v3 or v4.

4. Auxiliary Classifiers Add Complexity

The inclusion of intermediate classifiers improves convergence but introduces additional design and training complexity.

Balancing the loss contributions from auxiliary classifiers with the main classifier requires careful consideration, which can complicate the training process.

5. Deployment Challenges

Due to its multi-branch design, deploying Inception on resource-constrained devices or for real-time applications is more challenging than deploying simpler sequential networks.

Optimizations are often necessary to reduce memory usage and inference time without sacrificing accuracy.

Parameter Sensitivity: Inception’s performance is sensitive to architectural choices, including the arrangement of filters, bottleneck layers, and pooling operations.

Suboptimal design choices can significantly degrade network performance, making careful planning essential during model construction.