Deployment strategies explain how trained deep learning models are moved from development into production systems.

This topic covers methods for serving models reliably, scaling them for real-world use, and updating them safely.

It emphasizes monitoring, versioning, and controlled rollouts to maintain model performance over time.
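A controlled rollout can be as simple as sending a fixed share of requests to the new model version. The sketch below shows one hypothetical way to do that. The function name `pick_model_version`, the version labels `v1` and `v2`, and the hashing scheme are illustrative assumptions, not the API of any particular serving framework.

```python
import hashlib


def pick_model_version(request_id: str, canary_percent: int,
                       stable: str = "v1", canary: str = "v2") -> str:
    """Route roughly `canary_percent`% of request IDs to the canary version.

    Hashing the request ID (instead of drawing a random number) keeps the
    choice stable: the same ID always lands on the same version.
    """
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket in [0, 99]
    return canary if bucket < canary_percent else stable


# Measure the share of 1000 synthetic request IDs that reach the canary.
ids = [f"request-{i}" for i in range(1000)]
share = sum(pick_model_version(i, 10) == "v2" for i in ids) / len(ids)
print(f"canary share: {share:.1%}")
```

Raising `canary_percent` step by step while watching the monitored metrics gives a gradual rollout, and setting it back to 0 acts as a rollback.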

ONNX (Open Neural Network Exchange)

ONNX is an open-source format that standardizes the representation of deep learning models across frameworks.

It allows models trained in one framework, such as PyTorch, TensorFlow, or MXNet, to be exported and executed in another framework or hardware backend without rewriting code.

Importance

1. Cross-Framework Interoperability

Because an exported ONNX graph is built from a shared, versioned operator set, a model trained in PyTorch or TensorFlow can be executed by any runtime that supports the operators it uses.

This eliminates the need to rewrite or retrain models for different platforms, allowing teams to leverage the best tools for training while deploying on the most optimized runtime.

It facilitates collaboration across teams using different frameworks and accelerates the transition from research to production.

2. Hardware and Platform Flexibility

ONNX models can run on multiple hardware backends, including CPUs, GPUs, and specialized accelerators such as NPUs and FPGAs, through runtimes like ONNX Runtime and its hardware-specific execution providers.

This flexibility allows organizations to scale deployments efficiently across cloud servers, edge devices, or enterprise environments without redesigning the model architecture.

The same model file produces consistent results across these setups, although latency and throughput still depend on the backend that executes it.
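With ONNX Runtime, the backend is chosen through an ordered list of execution providers. The helper below is a minimal sketch of picking that list. `choose_providers` is a hypothetical function written for this example, and the `available` lists are hard-coded so the code runs on a machine without any accelerator. The provider names themselves are real ONNX Runtime identifiers.

```python
def choose_providers(preferred, available):
    """Keep the preferred providers that are installed, in priority order.

    The CPU provider is appended as a last resort so a session can always
    be created, even on a machine without an accelerator.
    """
    chosen = [p for p in preferred if p in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


preferred = [
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "CPUExecutionProvider",
]

# Simulated GPU server: CUDA is installed, TensorRT is not.
print(choose_providers(preferred, ["CUDAExecutionProvider", "CPUExecutionProvider"]))

# Simulated CPU-only edge box.
print(choose_providers(preferred, ["CPUExecutionProvider"]))
```

In a real deployment, `available` would come from `onnxruntime.get_available_providers()`, and the returned list would be passed as the `providers` argument of `onnxruntime.InferenceSession`.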

3. Optimized Inference

ONNX Runtime offers performance improvements such as operator fusion, memory optimization, and parallel execution, which reduce latency and improve throughput during inference.

These optimizations are crucial for real-time applications like video analytics, autonomous driving, or high-frequency financial systems, where speed and responsiveness directly impact user experience and safety.
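Whether such optimizations pay off for a given model is an empirical question, so it helps to measure latency and throughput before and after enabling them. The helper below is a small, framework-agnostic sketch. `benchmark` and `fake_inference` are hypothetical names, and the placeholder workload stands in for a real inference call.

```python
import statistics
import time


def benchmark(fn, warmup: int = 5, runs: int = 50) -> dict:
    """Time repeated calls to `fn` and summarize latency and throughput."""
    for _ in range(warmup):  # warm-up calls are excluded from the timings
        fn()
    latencies_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    latencies_ms.sort()
    p95_index = min(runs - 1, int(0.95 * runs))
    mean_ms = statistics.fmean(latencies_ms)
    return {
        "p50_ms": statistics.median(latencies_ms),
        "p95_ms": latencies_ms[p95_index],
        "throughput_per_s": 1000.0 / mean_ms if mean_ms > 0 else float("inf"),
    }


def fake_inference():
    # Placeholder workload standing in for a real model call.
    return sum(i * i for i in range(10_000))


stats = benchmark(fake_inference)
print(stats)
```

Reporting a tail percentile such as p95 alongside the median matters for real-time systems, where occasional slow requests are often the actual problem.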

4. Reduced Development Complexity

By standardizing model representation, ONNX simplifies the deployment workflow.

Engineers can focus on optimizing models for performance and usability rather than dealing with framework-specific deployment issues.

This reduces errors, saves development time, and ensures consistency between training and production models.

5. Enterprise and Cloud Integration

ONNX is widely supported by cloud providers and enterprise AI platforms, making it easier to integrate models into existing pipelines.

Organizations can deploy AI solutions at scale, maintain version control, and manage multiple models efficiently across different teams or departments.

TorchScript

TorchScript is a PyTorch-specific deployment tool that converts dynamic, Python-based models into a static, serialized format.

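Models are converted either by tracing, which records the operations executed on an example input, or by scripting, which compiles the model's Python source and preserves data-dependent control flow. Both produce computation graphs suitable for high-performance inference outside of the Python runtime.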
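The difference between the two conversion paths shows up as soon as a model contains a data-dependent branch. The toy module `Gate` below is an assumption made up for this illustration. `torch.jit.script` and `torch.jit.trace` are the real PyTorch entry points.

```python
import warnings

import torch


class Gate(torch.nn.Module):
    """Toy module whose output depends on a data-dependent branch."""

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        if x.sum() > 0:
            return x * 2
        return torch.zeros_like(x)


model = Gate()
positive = torch.ones(3)
negative = -torch.ones(3)

# Scripting compiles the source, so both branches survive.
scripted = torch.jit.script(model)

# Tracing records only the branch taken for `positive`. PyTorch emits a
# TracerWarning about this, silenced here to keep the output short.
with warnings.catch_warnings():
    warnings.simplefilter("ignore")
    traced = torch.jit.trace(model, positive)

print("eager:   ", model(negative))
print("scripted:", scripted(negative))
print("traced:  ", traced(negative))  # follows the recorded `x * 2` path
```

For the negative input, the scripted module matches eager execution while the traced module silently replays the multiply-by-two path, which is why tracing is best reserved for models without input-dependent control flow.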

Importance

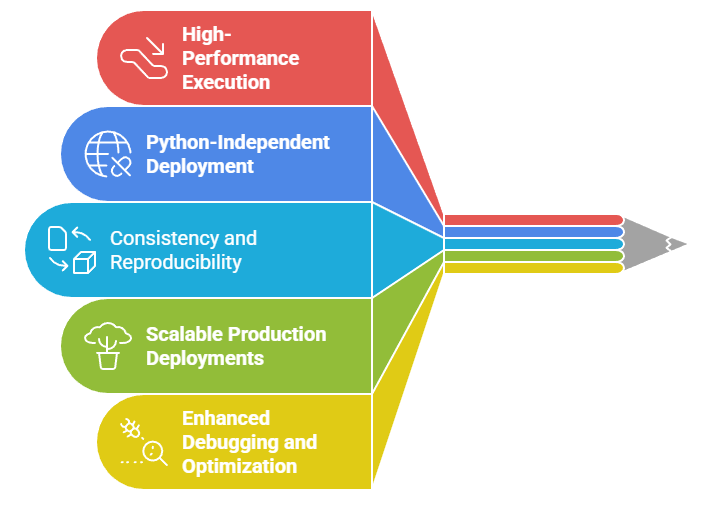

1. High-Performance Execution

TorchScript converts dynamic PyTorch models into a static computation graph, enabling faster inference.

Optimizations like operator fusion and memory-efficient execution reduce runtime overhead, which is critical for real-time applications such as robotics, autonomous systems, and video processing.

2. Python-Independent Deployment

TorchScript allows models to run outside Python, in environments such as C++ applications or embedded systems.

This ensures that models can be deployed in production scenarios where Python may not be available or suitable, expanding deployment options and platform compatibility.
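A minimal sketch of the serialization step that makes this possible is shown below. The `Scale` module is a made-up example, and an in-memory buffer is used in place of a file so the snippet leaves nothing on disk.

```python
import io

import torch


class Scale(torch.nn.Module):
    """Small example module with one learnable parameter."""

    def __init__(self) -> None:
        super().__init__()
        self.weight = torch.nn.Parameter(torch.tensor([2.0, 3.0]))

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x * self.weight


scripted = torch.jit.script(Scale().eval())

# Serialize code and weights together; a file path works as well as a buffer.
buffer = io.BytesIO()
torch.jit.save(scripted, buffer)
buffer.seek(0)

# Loading needs only the archive, not the Scale class definition.
restored = torch.jit.load(buffer)
x = torch.tensor([1.0, 1.0])
print(restored(x))
```

The same archive, written to a file, can be loaded from a C++ program with LibTorch's `torch::jit::load`, with no Python interpreter involved.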

3. Consistency and Reproducibility

By serializing models into a fixed graph, TorchScript ensures consistent behavior between training and production.

This reproducibility reduces the likelihood of runtime errors caused by dynamic code execution, which is essential for mission-critical applications where reliability is paramount.

4. Scalable Production Deployments

TorchScript models can be deployed across multiple servers or devices efficiently.

This makes it suitable for large-scale AI services, enabling high-throughput inference while maintaining low latency and stable performance across diverse hardware configurations.

5. Enhanced Debugging and Optimization

TorchScript allows developers to inspect and optimize model graphs, making it easier to identify bottlenecks, unnecessary computations, or inefficient operations.

This capability improves model efficiency and reduces computational costs in production environments.
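As a small illustration of that inspection, a scripted module exposes both its recovered source and its intermediate representation. The `Block` module here is a hypothetical example. The `code` and `graph` attributes are part of the TorchScript API.

```python
import torch


class Block(torch.nn.Module):
    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return torch.relu(x) + 1.0


scripted = torch.jit.script(Block())

# Python-like source recovered from the compiled module.
print(scripted.code)

# Lower-level intermediate representation, one node per operator.
print(scripted.graph)

# Collect the operator kinds to see what the model actually executes.
op_kinds = [node.kind() for node in scripted.graph.nodes()]
print(op_kinds)
```

Scanning the operator list is a quick way to spot unexpected or redundant operations before looking at a full profile.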

Edge Deployment

Edge deployment involves running deep learning models directly on devices located at the “edge” of a network, such as smartphones, drones, IoT sensors, cameras, or autonomous vehicles.

This approach minimizes latency and reduces dependence on cloud infrastructure.

Importance

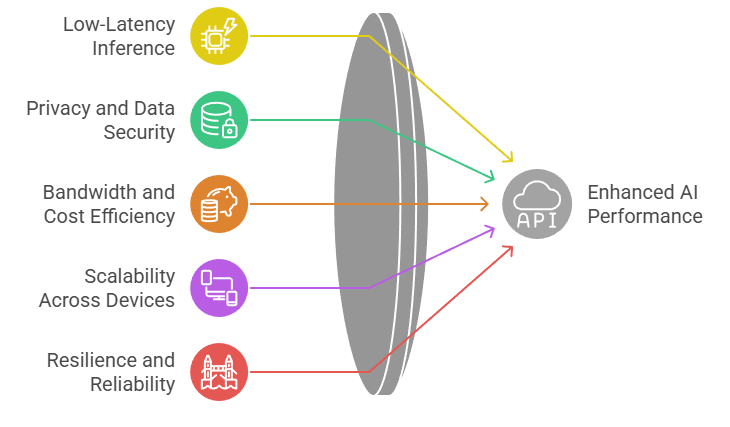

1. Low-Latency Inference

Edge deployment allows models to run directly on local devices such as smartphones, cameras, or IoT sensors.

This eliminates network delays associated with cloud-based inference, enabling real-time decision-making crucial for autonomous driving, industrial monitoring, and mobile AI applications.

2. Privacy and Data Security

Processing data locally reduces the need to transmit sensitive information to cloud servers, which helps preserve user privacy and makes it easier to meet data protection regulations.

This is particularly important in healthcare, finance, and personal devices where data security is critical.

3. Bandwidth and Cost Efficiency

Running models on-device reduces reliance on continuous cloud connectivity, saving bandwidth and reducing operational costs.

It allows AI applications to function effectively even in remote locations or areas with limited internet access.

4. Scalability Across Devices

Edge deployment supports distributed AI, where multiple devices independently perform inference.

Optimizing models for edge devices using techniques like quantization, pruning, and lightweight architectures ensures that AI solutions can scale efficiently across millions of devices.
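Of these techniques, quantization is the easiest to show in a few lines. The sketch below works through asymmetric (affine) 8-bit quantization of a handful of weights in plain Python. The function names and the sample weights are assumptions for illustration, and production toolchains typically add refinements such as per-channel scales and calibration.

```python
def quantize_int8(values):
    """Map floats to int8 codes using one scale and zero point per list."""
    lo, hi = min(values), max(values)
    lo, hi = min(lo, 0.0), max(hi, 0.0)  # include 0.0 so it maps exactly
    scale = (hi - lo) / 255 or 1.0       # 256 levels; avoid a zero scale
    zero_point = round(-128 - lo / scale)
    codes = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantize(codes, scale, zero_point):
    """Recover approximate floats from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


weights = [-0.8, -0.1, 0.0, 0.3, 1.2]  # illustrative values only
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize(codes, scale, zero_point)

print("int8 codes:", codes)
print("scale:", scale, "zero point:", zero_point)
print("max error:", max(abs(w - r) for w, r in zip(weights, restored)))
```

Each weight shrinks from 4 bytes (float32) to 1 byte (int8), at the cost of a rounding error on the order of the scale.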

5. Resilience and Reliability

Edge deployment ensures that AI applications remain functional even when network connectivity is lost or delayed.

This robustness matters most in mission-critical systems, such as autonomous drones, smart factories, and emergency response systems, where uninterrupted model performance is essential.
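One hypothetical way to get this behavior in a hybrid setup is to prefer a larger remote model when the network is up and fall back to the on-device model when it is not. Everything named in the sketch below (`predict_with_fallback`, `offline_remote`, `tiny_local_model`) is an assumption for illustration. A purely on-device deployment would skip the remote call altogether.

```python
from typing import Callable, Sequence, Tuple


def predict_with_fallback(
    features: Sequence[float],
    remote_predict: Callable[[Sequence[float]], float],
    local_predict: Callable[[Sequence[float]], float],
) -> Tuple[float, str]:
    """Try the remote model first and fall back to the on-device model."""
    try:
        return remote_predict(features), "remote"
    except (ConnectionError, TimeoutError):
        # Network is down or too slow: keep serving from the local model.
        return local_predict(features), "local"


# Hypothetical stand-ins for a cloud endpoint and a small on-device model.
def offline_remote(features):
    raise ConnectionError("no network")


def tiny_local_model(features):
    return sum(features) / len(features)


result, source = predict_with_fallback([0.2, 0.4, 0.6], offline_remote, tiny_local_model)
print(result, source)
```

Returning the source alongside the prediction lets monitoring track how often the device is running in degraded mode.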