Convolutional Neural Networks (CNNs) have reshaped the landscape of modern computer vision by enabling machines to interpret and understand visual information with a level of depth and precision that was previously unattainable.

Across industries, these architectures have proven fundamental to tasks such as image recognition, object detection, and image segmentation, each serving a distinct purpose in turning raw pixels into actionable insights.

Image recognition allows systems to classify visual content into meaningful categories, acting as the backbone of facial identification, automated quality inspection, and content moderation.

Object detection extends this capability by not only identifying what is present but precisely locating multiple objects within complex scenes, making it indispensable in autonomous driving, retail analytics, and intelligent surveillance.

Segmentation performs an even more refined analysis by assigning a label to every pixel, producing the dense, structural understanding required for medical diagnosis, satellite mapping, and robotic perception.

The widespread adoption of these applications stems from the ability of CNNs to automatically learn hierarchical feature representations without manual feature engineering, transforming computer vision pipelines into scalable, transferable solutions.

IMAGE RECOGNITION

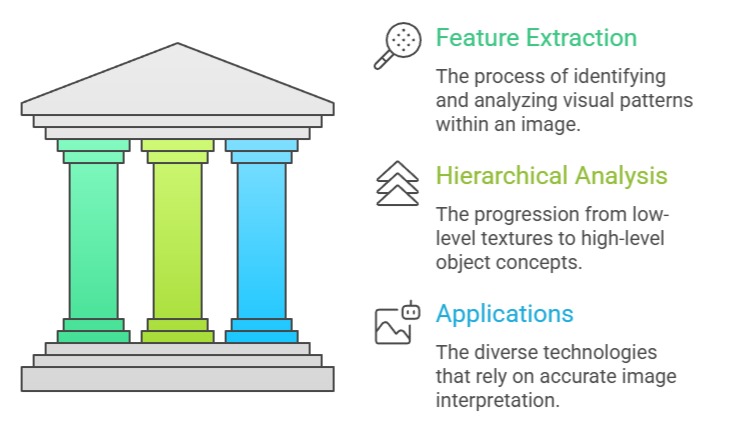

Image recognition refers to the process through which a model analyzes visual input and assigns it to predefined categories based on patterns learned from extensive training data.

It operates by extracting hierarchical features, beginning from low-level textures and progressing to high-level object concepts, enabling the system to interpret complex images with remarkable consistency.

This capability underlies many modern technologies, from biometric authentication to product search engines, where accurate interpretation of visual content is essential.

The strength of image recognition lies in its ability to process immense amounts of visual information far faster than humans, enabling scalable and automated decision-making.

It is widely utilized in domains where classification forms the preliminary step before more advanced visual tasks. As data grows in volume and complexity, CNN-based recognition models continue to evolve, incorporating improved architectures that enhance generalization while reducing computational burden.

Advantages

1. High Classification Accuracy

Modern CNN-based recognition systems achieve extremely high accuracy across a wide range of datasets because they learn visual patterns directly from data rather than relying on handcrafted features.

This enables models to capture subtle distinctions between categories even when images contain noise, shadows, distortions, or environmental variations that might confuse traditional algorithms.

Their ability to generalize across unseen examples makes them suitable for real-world tasks such as medical diagnosis or industrial inspection, where precision is crucial.

As more data becomes available, these models continue to refine their feature representations, further enhancing reliability.

This strong performance makes image recognition foundational for more complex visual tasks that rely on accurate classification.

2. Automated Feature Extraction

CNNs eliminate the need for manual feature engineering, allowing recognition systems to autonomously identify meaningful patterns within data. Instead of designing features such as edges, corners, and gradients manually, the network learns them through hierarchical convolutional layers, improving both the speed of development and the model’s adaptability.

Automated feature extraction ensures that the model continuously refines its internal representation as more training examples are introduced, resulting in stronger generalization capabilities.

This automation also reduces the bias introduced by hand-designed features, as the learned features are derived statistically from the data rather than chosen subjectively. Overall, the process provides scalability for large datasets and minimizes the effort required to adapt models to new tasks.
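To make the idea of a convolutional feature concrete, the following is a minimal sketch of a single 2D convolution applied with a fixed vertical-edge kernel. In a trained CNN the kernel values would be learned from data; the Sobel-like kernel, the toy image, and the function name `conv2d_valid` are assumptions chosen for illustration only.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the response map.

    Like most deep learning libraries, this computes cross-correlation:
    the kernel is applied as written, without flipping.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        output.append(row)
    return output


# Hand-written vertical-edge kernel (Sobel-like); a CNN would learn such weights.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# Toy 4x5 image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]

response = conv2d_valid(image, VERTICAL_EDGE)
```

The response is large where the window straddles the dark-to-bright boundary and zero where the window covers a uniform region, which is the sense in which a kernel acts as a feature detector.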

3. Robustness to Variations in Visual Data

Image recognition models exhibit resilience when confronted with variations in lighting, color, orientation, and scale, largely due to data augmentation, which simulates real-world unpredictability, and to convolution and pooling operations, which provide some built-in tolerance to small shifts in position.

By exposing the network to diverse transformations during training, the system becomes capable of identifying objects even in challenging conditions such as partial obstruction or camera distortion.

This robustness is essential for applications like surveillance or autonomous navigation, where environmental conditions cannot be controlled.

CNNs also learn invariant representations that allow them to focus on essential features rather than superficial changes.

As a result, they maintain stability and accuracy even when deployed in dynamic visual environments.
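The augmentation step described above can be sketched as follows. This is a minimal illustration on a grayscale image stored as nested lists; the function names, the 50% flip probability, and the 0.7 to 1.3 brightness range are assumptions, not values taken from any particular training recipe.

```python
import random


def horizontal_flip(image):
    """Mirror each row of the image left to right."""
    return [row[::-1] for row in image]


def adjust_brightness(image, factor):
    """Scale pixel intensities by `factor`, clamping to the 0..255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]


def augment(image, rng):
    """Apply a random flip and a random brightness change to one image."""
    out = image
    if rng.random() < 0.5:  # assumed flip probability
        out = horizontal_flip(out)
    factor = rng.uniform(0.7, 1.3)  # assumed brightness range
    return adjust_brightness(out, factor)


rng = random.Random(0)  # seeded so the example is repeatable
image = [[10, 200], [30, 250]]
augmented = augment(image, rng)
```

In training, a fresh random variant like `augmented` would typically be generated each time an image is drawn, so the network rarely sees exactly the same pixels twice.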

4. Scalability Across Domains

With transfer learning, image recognition systems can be adapted to new tasks quickly, making them highly scalable across industries such as healthcare, agriculture, retail, and transportation.

Pretrained CNN models serve as strong baselines and require only limited fine-tuning to perform well on specialized datasets, reducing training time and computational cost.

This scalability enables organizations to rapidly deploy recognition models without building architectures from scratch. Furthermore, the adaptability of CNN representations allows them to perform well even when dealing with niche or rare classes.

As industries increasingly digitize their operations, scalable recognition systems become critical for managing large amounts of visual data with minimal development overhead.

5. Fast Inference for Real-Time Applications

Optimized CNN architectures allow recognition tasks to run efficiently on a variety of hardware platforms, including mobile devices and embedded systems.

By reducing the number of parameters and employing acceleration techniques such as quantization or pruning, models achieve low inference latency, enabling real-time classification in safety-critical environments.

This is essential in use cases like autonomous drones, robotics, or quality inspection lines where immediate decisions are mandatory.

Lightweight CNN versions further extend this advantage by providing adequate accuracy while maintaining high throughput on resource-limited hardware.

The combination of speed and accuracy enables real-world deployments at scale.
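As a rough illustration of the quantization mentioned above, the sketch below maps floating-point weights to 8-bit integers using a single scale factor (symmetric, per-tensor quantization). Real toolchains use more elaborate schemes; the function names and the example weights are assumptions.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.003, 1.0]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
```

Each restored weight differs from the original by at most half a quantization step, which is the accuracy cost paid for storing one byte per weight instead of four.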

6. Strong Performance on Large and Complex Datasets

Image recognition thrives when trained on large datasets, leveraging the abundance of examples to learn diverse and nuanced visual cues.

CNNs excel at identifying minute differences between classes, enhancing their ability to manage large-scale problems such as fine-grained product classification or species identification.

As datasets expand, models continue to refine their internal representations for improved precision.

This ability to scale with data makes recognition systems suitable for scenarios where continuous learning and improvement are essential, such as medical research or global monitoring applications.

The more varied the dataset, the stronger the model’s generalization capability becomes.

7. Foundation for Advanced Computer Vision Tasks

Recognition systems serve as the foundational building block for more advanced tasks like object detection and segmentation, which commonly reuse a classification network as their feature-extraction backbone.

The hierarchical features learned during recognition training transfer effectively to downstream visual problems, making recognition models indispensable in comprehensive computer vision pipelines.

Their ability to capture both low-level and high-level features enhances the performance of multi-stage systems.

This foundational role also allows recognition models to accelerate the development of new architectures, as advancements typically begin with improvements in classification frameworks.

Disadvantages

1. Limited Understanding Beyond Classification

Image recognition systems are inherently designed to assign categories, meaning they offer minimal insight into object location, relationships, or contextual interactions.

This limitation reduces their usefulness in applications requiring spatial awareness or multi-object interpretation.

For example, recognizing that a pedestrian exists in an image is insufficient for tasks like autonomous driving, which require precise location and movement estimation.

As a result, recognition alone is often inadequate for tasks demanding structured understanding.

2. High Computational Cost During Training

Training deep recognition models requires substantial computational resources due to the large number of parameters and extensive training iterations.

This creates barriers for organizations with limited access to GPUs or cloud infrastructure.

High computational cost also slows experimentation cycles, making it difficult to iterate rapidly during development.

Although inference can be optimized, the training stage often remains a demanding process.

3. Vulnerability to Dataset Bias

Recognition models inherit biases present in the training data, potentially resulting in inaccurate or unfair predictions in real-world scenarios.

If a dataset is imbalanced, the model may fail to classify minority classes effectively, leading to skewed interpretations.

This issue is significant in sensitive domains such as medical diagnosis or facial recognition, where biased outputs can have serious implications.

Mitigating this requires careful dataset design and extensive evaluation.
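One common way to soften the effect of class imbalance is to weight the loss for each class inversely to its frequency. The sketch below computes such weights with the widely used heuristic `n_samples / (n_classes * class_count)`; the function name and the "healthy"/"tumor" labels are hypothetical.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Return a weight per class that is inversely proportional to its frequency."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Hypothetical imbalanced dataset: 90 majority examples, 10 minority examples.
labels = ["healthy"] * 90 + ["tumor"] * 10
weights = balanced_class_weights(labels)
```

With these weights, an error on the minority class contributes roughly nine times as much to the loss as an error on the majority class. Reweighting addresses imbalance only, not other forms of dataset bias.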

4. Sensitivity to Adversarial Attacks

CNN-based recognition systems can be deceived by adversarial perturbations: small, often imperceptible modifications to the input that cause misclassification.

These vulnerabilities pose security risks in applications like surveillance or authentication.

Attackers can exploit these weaknesses to bypass systems or manipulate outputs.

Addressing adversarial robustness requires advanced defensive strategies, which increase model complexity.
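The mechanism behind such perturbations can be sketched with the fast gradient sign method (FGSM) applied to a tiny logistic-regression classifier, which stands in here for a CNN. The weights, input, and step size `epsilon` are made-up values; on real images the same idea is applied to every pixel with a much smaller step.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def sign(value):
    return (value > 0) - (value < 0)


def fgsm_perturb(weights, bias, x, y_true, epsilon):
    """Take one FGSM step: x + epsilon * sign(dLoss/dx).

    For binary cross-entropy on logistic regression, dLoss/dx = (p - y) * w.
    """
    p = predict(weights, bias, x)
    grad = [(p - y_true) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


# Illustrative model and input (assumed values).
weights, bias = [2.0, -3.0, 1.0], 0.0
x = [0.2, 0.1, 0.1]
x_adv = fgsm_perturb(weights, bias, x, y_true=1, epsilon=0.1)

p_clean = predict(weights, bias, x)    # above 0.5: classified as class 1
p_adv = predict(weights, bias, x_adv)  # below 0.5: prediction flips
```

No feature moves by more than `epsilon`, yet the predicted class changes, because every feature is nudged in the direction that increases the loss the most.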

5. Difficulty in Handling Highly Occluded or Overlapping Objects

Recognition models struggle when key features of an object are partially blocked or overlapping with other objects.

Since recognition does not localize or isolate objects, occlusion can significantly reduce accuracy.

This becomes problematic in dense visual environments such as crowded streets, retail shelves, or industrial scenes, where overlapping objects are common.

6. Limited Interpretability of Deep Models

The internal decision-making process of CNNs is difficult to interpret due to their deep hierarchical structure.

This lack of transparency makes it challenging to verify why a specific classification was made, especially in regulated domains such as healthcare or security.

As a result, trust and accountability become issues in critical applications.

7. Dependence on Large Labeled Datasets

Recognition models require vast quantities of labeled data to achieve high accuracy, which is expensive and time-consuming to curate.

Many industries lack access to large annotated datasets, limiting the development of robust models.

Although weak supervision and synthetic data help mitigate this problem, the challenge remains substantial.

OBJECT DETECTION

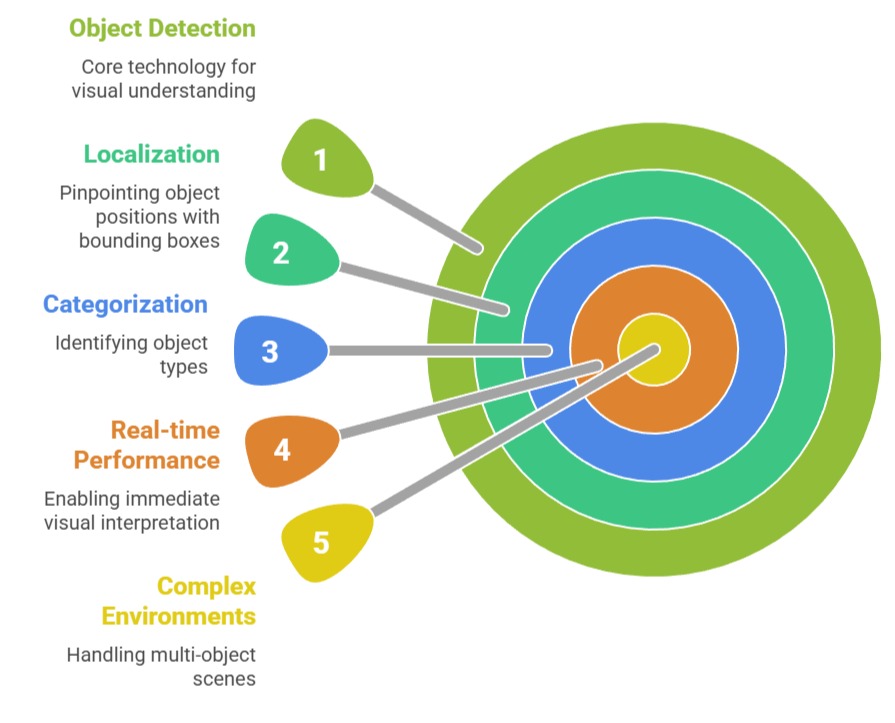

Object detection extends the capabilities of standard image classification by not only identifying what objects appear within an image but also pinpointing their exact spatial locations using bounding boxes.

Unlike classification, which gives a single label to an entire image, object detection performs both localization and categorization, making it indispensable for real-world systems that must interpret complex, multi-object environments.

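Modern architectures such as YOLO, Faster R-CNN, SSD, and DETR trade off accuracy and speed in different ways: single-stage detectors like YOLO and SSD are designed for real-time performance, while the two-stage Faster R-CNN has generally favored accuracy over speed. Together, they enable machines to interact meaningfully with dynamic visual scenes.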

Today, object detection serves as a core engine for autonomous machines, retail automation, robotics, medical analysis, smart surveillance, and environmental monitoring.

Its importance keeps rising as industries seek automation that can understand cluttered, unpredictable environments with high precision.
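The difference between a classifier's output and a detector's output can be sketched as a data structure: each detection carries a label, a confidence score, and a bounding box. The snippet also shows intersection over union (IoU), the standard measure of how well a predicted box overlaps a reference box. The `Detection` class, the corner-coordinate box format, and the example boxes are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str
    score: float
    box: tuple  # (x_min, y_min, x_max, y_max) in pixels (assumed format)


def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


# A classifier would return one label for the whole image;
# a detector returns a list of localized objects.
detections = [
    Detection("pedestrian", 0.91, (10, 10, 50, 90)),
    Detection("car", 0.84, (120, 40, 260, 130)),
]

reference_box = (20, 10, 60, 90)  # hypothetical ground-truth pedestrian box
overlap = iou(detections[0].box, reference_box)
```

An IoU of 1.0 means the boxes coincide and 0.0 means they do not overlap. Evaluation protocols typically count a detection as correct only if its IoU with a ground-truth box exceeds a chosen threshold.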

Advantages

1. Enables Real-Time Visual Understanding

Object detection provides machines with immediate insights into what objects exist in the environment and where they are located, enabling instant decision-making required in scenarios like autonomous driving and industrial robotics.

This real-time capability is driven by architectures optimized for speed, allowing systems to maintain high levels of awareness even under rapidly changing conditions.

Through this fast inference, robots avoid collisions, vehicles track pedestrians, and drones navigate obstacles.

It ensures machines respond efficiently, making the technology essential for mission-critical applications where delayed recognition could result in failures or safety risks.

2. Supports Multi-Object Interpretation

Unlike simple classifiers, object detection can simultaneously identify multiple objects across varying categories in a single frame, offering a richer and more comprehensive visual representation.

This ability enhances automation pipelines where understanding multiple elements—like people, items, and vehicles—is crucial for operational decisions.

In retail analytics, for example, detection helps track multiple products; in surveillance, it monitors multiple individuals.

The multi-object capability brings significant value to environments that cannot be simplified into single labels.

3. Adaptable to Complex and Cluttered Environments

Object detection models can often identify objects even when they appear partially occluded, overlapping, or positioned in challenging orientations.

This makes them reliable in real-world scenes where visuals are rarely clean or perfectly separated.

Their robustness ensures they remain functional in crowded places like malls, streets, or hospitals.

The flexibility to operate under unpredictable conditions greatly increases their value for safety-critical applications and enhances their reliability.

4. Facilitates Automation and Process Optimization

By identifying objects and their positions, object detection streamlines operations in warehouses, manufacturing facilities, and logistical systems.

Robots can locate items for picking, automated systems can check for defective products, and quality inspection becomes faster and more consistent.

The spatial awareness generated by detection drastically cuts down on manual work, improving efficiency while reducing operational errors.

5. Integrates with Tracking for Continuous Monitoring

Object detection can be combined with tracking algorithms to monitor objects over time, allowing a system to follow the same object across multiple frames.

This combination is essential in traffic cameras, sports analysis, and behavioral monitoring systems.

By assigning consistent identities, systems can evaluate movement patterns, detect anomalies, and generate insights that would be impossible with static classification alone.
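A minimal sketch of how detections can be linked across frames is shown below: each new box is greedily matched to the nearest unmatched box from the previous frame, and unmatched boxes start new tracks. Production trackers add motion models and appearance cues; the function names and the `max_distance` value are assumptions.

```python
import math


def centroid(box):
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, (y1 + y2) / 2)


def update_tracks(tracks, boxes, next_id, max_distance=50.0):
    """Greedily match current boxes to previous tracks by centroid distance.

    tracks: dict mapping track id -> box from the previous frame.
    Returns the updated dict and the next unused track id.
    """
    new_tracks = {}
    unmatched = dict(tracks)
    for box in boxes:
        cx, cy = centroid(box)
        best_id, best_dist = None, max_distance
        for track_id, prev_box in unmatched.items():
            px, py = centroid(prev_box)
            dist = math.hypot(cx - px, cy - py)
            if dist <= best_dist:
                best_id, best_dist = track_id, dist
        if best_id is None:
            # No previous track is close enough: start a new identity.
            best_id = next_id
            next_id += 1
        else:
            del unmatched[best_id]
        new_tracks[best_id] = box
    return new_tracks, next_id


frame_1 = [(0, 0, 10, 10), (100, 100, 110, 110)]
frame_2 = [(102, 101, 112, 111), (3, 0, 13, 10)]  # same objects, slightly moved

tracks, next_id = update_tracks({}, frame_1, next_id=0)
tracks, next_id = update_tracks(tracks, frame_2, next_id)
```

After the second frame, each object keeps the id it was given in the first frame even though the detector listed the boxes in a different order.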

6. Useful Across a Wide Range of Domains

Object detection’s flexibility allows it to serve healthcare, retail, transport, agriculture, and environmental conservation.

Its ability to generalize effectively means the same architecture can detect entirely different categories once retrained or fine-tuned.

This multi-domain capability increases its value in industries where automation opportunities vary widely, allowing scalable adoption.

7. Enhances Safety Systems Through Early Object Awareness

Many safety-critical technologies—collision avoidance, patient monitoring, hazard detection—rely on object detection to identify dangers early.

Detecting pedestrians near a vehicle, equipment faults in factories, or intruders in secure zones helps prevent accidents.

By recognizing threats swiftly and accurately, object detection strengthens preventive measures and enables timely response.

Disadvantages

1. Struggles with Small or Distant Objects

Despite advances, object detection models often find it difficult to accurately detect small or far-away objects because the visual details become too limited.

This limitation becomes risky in domains like autonomous driving, where early detection of tiny objects—a distant pedestrian or a road hazard—is critical.

Models may either miss such objects or misclassify them, reducing reliability and requiring additional sensor support.

2. High Computational Requirements

State-of-the-art detection frameworks require powerful GPUs for training and often still demand substantial resources for inference.

This makes deployment difficult on edge devices with limited power.

Companies face higher costs due to hardware demand, and this challenge slows down adoption in low-budget environments.

The energy consumption also raises sustainability concerns in large-scale deployments.

3. Sensitive to Lighting and Environmental Changes

Object detection systems can fail when exposed to different lighting conditions, weather variations, blurriness, or motion distortion.

A model trained in bright daylight may show poor performance at night or under shadowy conditions.

These inconsistencies make systems unreliable unless large, diverse datasets are used and continuous updates are performed.

4. Requires Extensive Labeled Data

Creating bounding-box annotations is time-consuming and costly, especially for large datasets with many objects per image.

The quality of detection heavily depends on the accuracy of these labels. Developing large-scale datasets becomes a barrier for organizations with limited annotation budgets or smaller teams.

5. May Produce False Positives in Dynamic Scenes

In highly dynamic environments, object detectors may incorrectly identify random patterns or shadows as objects, leading to false alarms.

These errors disrupt automated workflows, cause unnecessary actions, and reduce the trustworthiness of deployed systems. Extra filtering mechanisms are often needed to stabilize detection results.
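One simple form such filtering can take is a confidence threshold combined with a persistence check, so that a label is reported only if it has appeared confidently in several recent frames. The sketch below is illustrative; the class name, the thresholds, and the example detections are assumptions.

```python
from collections import deque


class PersistenceFilter:
    """Report a label only if it passed the score threshold in at least
    `min_hits` of the last `window` frames."""

    def __init__(self, score_threshold=0.5, window=3, min_hits=2):
        self.score_threshold = score_threshold
        self.min_hits = min_hits
        self.history = deque(maxlen=window)

    def update(self, detections):
        """detections: list of (label, score) pairs for the current frame."""
        confident = {
            label for label, score in detections if score >= self.score_threshold
        }
        self.history.append(confident)
        stable = set()
        for label in confident:
            hits = sum(label in frame for frame in self.history)
            if hits >= self.min_hits:
                stable.add(label)
        return stable


frames = [
    [("person", 0.90), ("glare", 0.30)],  # low-score "glare" is dropped
    [("person", 0.80), ("dog", 0.70)],    # "dog" appears in this frame only
    [("person", 0.85)],
]

flt = PersistenceFilter()
stable_per_frame = [flt.update(frame) for frame in frames]
```

The one-frame "dog" detection never reaches the output, while "person" is reported from the second frame onward. The cost is a short delay before a genuinely new object is reported.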

6. Difficulties in Generalizing to New Contexts

Models trained on specific contexts might fail when introduced to new environments where object appearances differ slightly.

For example, a detector trained on vehicles in one region may struggle with the vehicle models common in another region.

This lack of generalization increases retraining frequency and maintenance costs.

7. Not Ideal for Detailed Pixel-Level Understanding

While object detection provides bounding boxes, it cannot deliver precise shape outlines or pixel-level boundaries.

This limited granularity reduces effectiveness for applications requiring high-detail spatial information, such as medical imaging or precise robotic manipulation.

Real-World Examples / Case Studies

1. Autonomous Vehicles: Detect pedestrians, other cars, traffic signs, and road debris for safe navigation.

2. Retail Stores: Detect products on shelves for inventory analysis.

3. Airports & Security: Identify unattended baggage and track individuals.

4. Sports Analytics: Detect players and balls for performance metrics.

5. Robotics: Guide robotic arms for picking and sorting tasks.

IMAGE SEGMENTATION

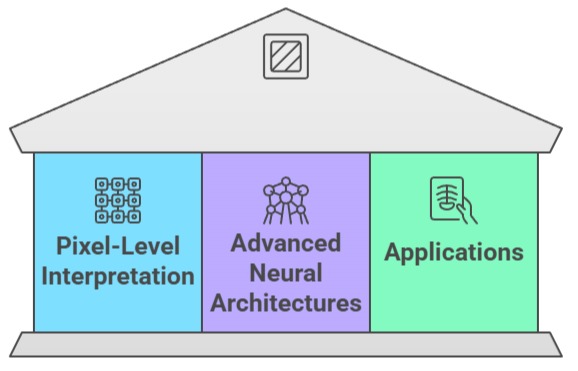

Image segmentation elevates visual understanding by dividing an image into meaningful regions, assigning each pixel to a specific category.

This pixel-level interpretation provides far greater precision than detection or classification, making segmentation indispensable for domains that require fine-grained structural knowledge—such as medical diagnosis, satellite analysis, and high-precision robotics.

Advanced neural architectures like U-Net, SegNet, DeepLab, and Mask R-CNN dominate this landscape, offering detailed spatial recognition and the ability to distinguish subtle boundaries between objects.

Segmentation drives applications requiring accuracy at the microscopic or geographic scale, and its significance continues to grow as industries seek smarter automation and better decision-support systems powered by precise visual insights.

Advantages

1. Provides Pixel-Level Precision

Segmentation delivers category labels for each pixel, allowing systems to understand exact shapes, outlines, and spatial boundaries.

This level of detail is invaluable in high-stakes fields like medical imaging, where the precise contour of a tumor dictates treatment decisions.

Pixel-level accuracy enables models to capture fine visual differences that bounding boxes or classifications cannot, improving trust in automated analysis.
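Per-pixel labeling can be sketched as an argmax over class scores at every pixel. The snippet assumes a network has already produced one score map per class; the function name, the two classes, and the score values are made up for illustration.

```python
def label_pixels(score_maps):
    """Pick the highest-scoring class at every pixel.

    score_maps[c][y][x] is the score of class c at pixel (y, x).
    Returns a 2D map of class indices.
    """
    num_classes = len(score_maps)
    height, width = len(score_maps[0]), len(score_maps[0][0])
    return [
        [
            max(range(num_classes), key=lambda c: score_maps[c][y][x])
            for x in range(width)
        ]
        for y in range(height)
    ]


# Hypothetical scores for a 2x3 image and two classes: 0 = background, 1 = road.
background_scores = [[0.9, 0.2, 0.1], [0.8, 0.6, 0.3]]
road_scores = [[0.1, 0.8, 0.9], [0.2, 0.4, 0.7]]

mask = label_pixels([background_scores, road_scores])
```

The resulting `mask` has the same height and width as the image, which is what distinguishes segmentation output from a single label or a handful of boxes.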

2. Enables Highly Accurate Measurements

Segmentation makes it possible to compute area, volume, and structural dimensions with exceptional accuracy.

In agriculture, this supports crop health measurement; in manufacturing, it enables detection of minute defects.

The fine granularity ensures that measurements reflect real-world proportions rather than approximated shapes, which leads to better decision-making and higher quality control performance.
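The measurement advantage can be illustrated by comparing the area computed from a mask with the area of the enclosing bounding box. The sketch assumes a known physical pixel size; the function names, the L-shaped toy mask, and the 0.5 mm pixel size are illustrative assumptions.

```python
def mask_area(mask, target_class, pixel_size_mm):
    """Physical area covered by `target_class`, in square millimetres."""
    pixels = sum(value == target_class for row in mask for value in row)
    return pixels * pixel_size_mm ** 2


def bounding_box_area(mask, target_class, pixel_size_mm):
    """Area of the tightest axis-aligned box around `target_class` pixels."""
    coords = [
        (y, x)
        for y, row in enumerate(mask)
        for x, value in enumerate(row)
        if value == target_class
    ]
    if not coords:
        return 0.0
    ys = [y for y, _ in coords]
    xs = [x for _, x in coords]
    height = max(ys) - min(ys) + 1
    width = max(xs) - min(xs) + 1
    return height * width * pixel_size_mm ** 2


# Toy mask of an L-shaped region (class 1) on background (class 0).
mask = [
    [1, 0, 0],
    [1, 0, 0],
    [1, 1, 1],
]

area_from_mask = mask_area(mask, target_class=1, pixel_size_mm=0.5)
area_from_box = bounding_box_area(mask, target_class=1, pixel_size_mm=0.5)
```

For this shape the box overestimates the area by a large margin, since it also counts the background pixels inside the corner of the L.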

3. Excellent for Complex Scenes with Overlapping Objects

Unlike detection, whose bounding boxes overlap when objects are tightly packed, instance segmentation can separate such objects by assigning each one its own mask.

This capability is crucial in fields such as microscopy, where cells appear densely clustered.

The ability to differentiate connected structures enhances clarity and supports applications requiring precise extraction of individual elements from crowded scenes.

4. Supports Automation in High-Detail Environments

Segmentation significantly enhances robotic systems that must interact with fine structures or navigate tight spaces.

For instance, surgical robots rely on segmentation to separate anatomical tissues, while industrial robots use it to detect tiny components.

The detailed spatial awareness improves reliability in operations that require exact positioning.

5. Enhances Visual Understanding for Decision Systems

Segmentation provides a much deeper interpretation of scenes than detection by understanding not only what is present, but also how objects interact spatially.

In smart cities, for example, segmentation differentiates roads, sidewalks, buildings, and vegetation to enable detailed mapping.

This structured information enriches decision systems across numerous applications.

6. High Utility Across Scientific and Industrial Domains

Disciplines like geology, meteorology, agriculture, and medical diagnostics rely heavily on segmentation for accurate interpretations.

Satellite imagery uses segmentation for land classification, while pathologists use it to highlight abnormal cells.

The wide applicability makes segmentation one of the most valuable tools in scientific analysis.

7. Improves Data Annotation and Model Training

Segmented images act as powerful labels that can support training for higher-level tasks such as detection or classification.

Pixel-level masks strengthen supervised learning pipelines by providing richer supervision than image-level labels or bounding boxes.

This creates stronger models overall, improving performance across visual AI tasks.

Disadvantages

1. Extremely High Annotation Cost

Pixel-level annotation is significantly more time-intensive than drawing bounding boxes.

Labeling a single medical image can take hours for experts.

The complexity and labor intensity increase dataset development costs, slowing down project execution and limiting access for smaller teams or organizations.

2. Computationally Intensive

Segmentation models demand heavy processing power due to their dense pixel predictions.

Training involves large memory consumption, often requiring multi-GPU setups. Even inference can be slow on edge devices.

This limits real-time deployment and increases cost barriers for widespread adoption.

3. Sensitive to Noise and Visual Artifacts

Segmentation models often struggle with noisy data, artifacts, and ambiguous boundaries, especially in medical or satellite imagery.

Minor distortions can lead to large errors because predictions rely on local textures. This sensitivity reduces reliability unless extremely clean datasets are used.

4. Complex to Deploy in Real-Time Applications

While some optimizations exist, segmentation is inherently slower than detection.

Real-time uses like autonomous driving often prioritize detection over segmentation because of the processing delay. Achieving high accuracy and real-time performance simultaneously remains difficult.

5. Risk of Overfitting on Limited Data

Because segmentation captures fine details, models often memorize training data if the dataset is small or lacks diversity.

This leads to poor performance in unseen scenarios, requiring strong regularization and extensive augmentation, which increase development effort.

6. Hard to Generalize Across Different Visual Domains

Differences in lighting, image resolution, camera sensors, or textures drastically affect segmentation accuracy.

A model trained on one dataset may fail completely in another, forcing retraining and domain-specific fine-tuning. This increases long-term maintenance requirements.

7. Challenging to Interpret Errors

When segmentation fails, the mistakes occur across thousands of pixels, making it harder to analyze root causes.

Errors are often subtle and require domain experts to diagnose. This slows down debugging, delaying deployment in mission-critical systems.
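A common first step in narrowing down segmentation errors is to summarize them per class rather than per pixel. The sketch below computes intersection over union for each class between a predicted mask and a reference mask; the function name and the toy masks are assumptions.

```python
def per_class_iou(pred, truth, num_classes):
    """Intersection over union for each class; None if the class is absent
    from both masks."""
    ious = {}
    for cls in range(num_classes):
        inter = 0
        union = 0
        for pred_row, truth_row in zip(pred, truth):
            for p, t in zip(pred_row, truth_row):
                in_pred, in_truth = p == cls, t == cls
                inter += in_pred and in_truth
                union += in_pred or in_truth
        ious[cls] = inter / union if union else None
    return ious


# Toy 2x3 masks with classes 0 and 1; class 2 never appears.
truth = [[0, 0, 1], [0, 1, 1]]
pred = [[0, 1, 1], [0, 0, 1]]

scores = per_class_iou(pred, truth, num_classes=3)
```

A class with a noticeably lower score than the rest points to where the model is failing, which gives domain experts a narrower set of images and regions to inspect.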

Real-World Examples / Case Studies

1. Medical Diagnostics: Tumor boundary segmentation for radiology.

2. Agriculture: Leaf disease and crop area segmentation.

3. Autonomous Driving: Lane markings, drivable regions, sidewalks.

4. Satellite Imaging: Land cover segmentation for environmental monitoring.

5. Manufacturing: Surface defect segmentation for quality control.