Vectorization is a foundational concept in deep learning that focuses on expressing mathematical operations in terms of vectors and matrices instead of iterative loops.

This shift drastically accelerates computation, allowing modern neural networks to process massive datasets at high speed.

Most deep learning frameworks, such as TensorFlow, PyTorch, and JAX, rely heavily on vectorized operations because they can be executed in parallel on GPUs and TPUs.

By replacing slow, element-wise loops with optimized matrix multiplications and tensor operations, vectorization ensures that computations remain scalable as model sizes and data volumes grow.
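As a minimal sketch of the idea, the same dot product can be written as an explicit loop or as a single vectorized call. NumPy is assumed here for illustration (the frameworks named above expose equivalent operations), and the function names are hypothetical.

```python
import numpy as np

def dot_loop(a, b):
    """Dot product computed one element at a time in a Python loop."""
    total = 0.0
    for x, y in zip(a, b):
        total += x * y
    return total

def dot_vectorized(a, b):
    """Same computation expressed as one vectorized library call."""
    return float(np.dot(a, b))

a = np.arange(1.0, 5.0)   # [1., 2., 3., 4.]
b = np.full(4, 2.0)       # [2., 2., 2., 2.]

loop_result = dot_loop(a, b)
vec_result = dot_vectorized(a, b)
print(loop_result, vec_result)
```

Both versions compute the same value; the vectorized one hands the whole array to compiled code in a single call.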

Efficient computation complements vectorization by ensuring that hardware capabilities (parallel cores, SIMD instructions, memory bandwidth, and caching) are fully utilized.

Whether training large transformer models or deploying lightweight models on edge devices, vectorization forms the backbone of performance optimization.

It ensures that neural networks can handle complex tasks without overwhelming processing resources, making it indispensable for today’s advanced AI architectures.

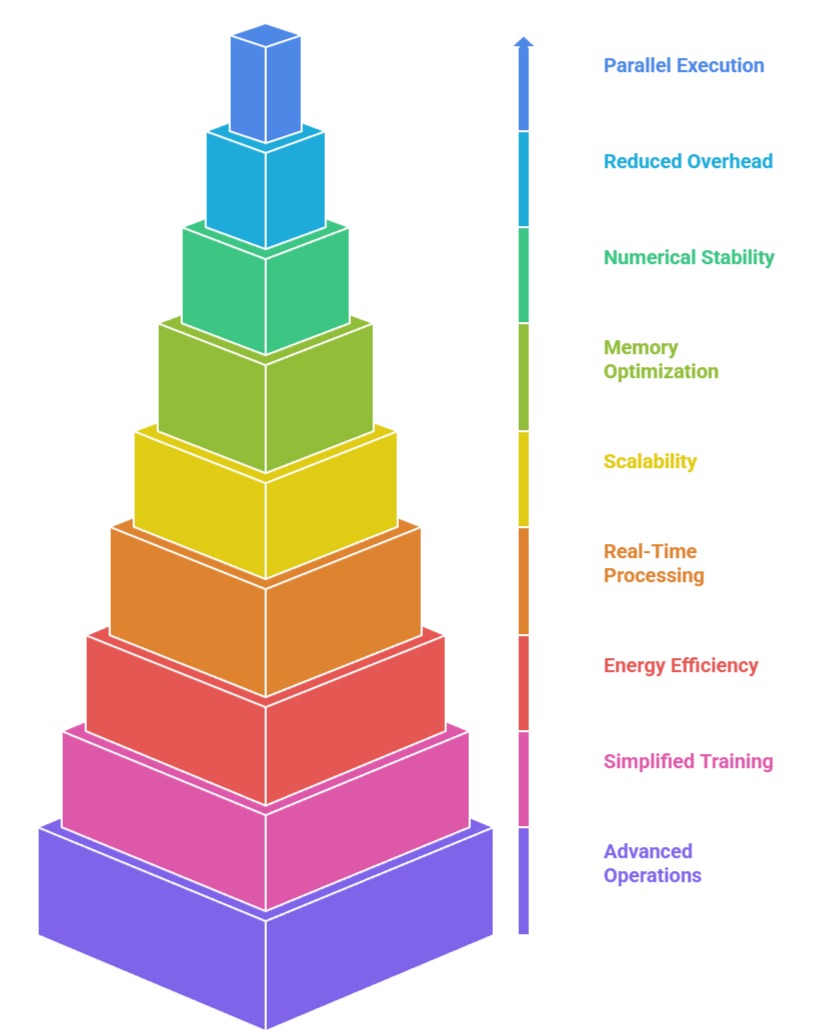

Importance of Vectorization

1. Enables Massive Parallel Execution

Vectorization allows operations like addition, multiplication, and matrix transformations to be performed on entire arrays simultaneously rather than element by element.

This parallelism directly taps into GPU architectures, where thousands of cores execute identical instructions concurrently.

As a result, operations that take milliseconds in an interpreted loop can often be completed in microseconds, although the actual speedup depends on array size, data type, and hardware.

This efficiency becomes critical during training when millions of parameters must be updated repeatedly. Without vectorization, training modern deep networks would be too slow and computationally expensive to be practical.
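The parameter update mentioned above can be sketched as follows. This is an illustrative plain gradient-descent step written with NumPy (an assumption; the function names, learning rate, and random data are hypothetical), comparing a per-parameter loop with a single array expression.

```python
import numpy as np

def sgd_step_loop(params, grads, lr):
    """Update each parameter individually in a Python loop."""
    updated = params.copy()
    for i in range(params.size):
        updated[i] = params[i] - lr * grads[i]
    return updated

def sgd_step_vectorized(params, grads, lr):
    """Update every parameter with one array expression."""
    return params - lr * grads

rng = np.random.default_rng(0)
params = rng.normal(size=10_000)
grads = rng.normal(size=10_000)

new_loop = sgd_step_loop(params, grads, lr=0.01)
new_vec = sgd_step_vectorized(params, grads, lr=0.01)
```

The two functions produce the same result; the vectorized form is the one that maps onto parallel hardware.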

2. Reduces Overhead Caused by Explicit Loops

Traditional programming relies on iterative loops that incur significant overhead for each cycle, including memory access and instruction dispatch.

In deep learning, where operations are frequently repeated across large batches of data, these loops become a major performance bottleneck.

Vectorization removes most of this overhead by restructuring computations into bulk operations that are dispatched once and handled internally by optimized libraries such as BLAS, cuBLAS, and cuDNN.

This not only simplifies code but also ensures faster execution by minimizing high-level control flow operations.
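To make the contrast concrete, here is a hedged sketch of a fully connected layer applied to a batch, first with nested loops and then as one matrix multiplication. NumPy is assumed, and the names `dense_loop` and `dense_vectorized` are illustrative rather than taken from any framework.

```python
import numpy as np

def dense_loop(X, W, b):
    """Compute y = W x + b for every sample using nested Python loops."""
    n_samples, n_in = X.shape
    n_out = W.shape[0]
    Y = np.zeros((n_samples, n_out))
    for n in range(n_samples):
        for j in range(n_out):
            acc = b[j]
            for k in range(n_in):
                acc += W[j, k] * X[n, k]
            Y[n, j] = acc
    return Y

def dense_vectorized(X, W, b):
    """Same layer as a single matrix multiplication plus a broadcast add."""
    return X @ W.T + b

rng = np.random.default_rng(0)
X = rng.normal(size=(32, 16))   # batch of 32 samples, 16 features each
W = rng.normal(size=(8, 16))    # 8 output units
b = rng.normal(size=8)

Y_loop = dense_loop(X, W, b)
Y_vec = dense_vectorized(X, W, b)
```

The three nested loops collapse into one line, and the matrix product is typically executed by a BLAS routine rather than by the interpreter.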

3. Improves Numerical Stability and Consistency

Vectorized operations call well-tested library routines, some of which include numerical safeguards such as pairwise summation, which can reduce accumulated rounding error compared with a naive running sum.

Because every element passes through the same low-level kernel, results are generally consistent across iterations, although extremely small gradients or very large tensor values can still underflow or overflow at limited precision.

The predictable numerical behavior of vectorized computations is crucial for stable training, especially in architectures where subtle numerical deviations can lead to divergence or erratic gradient updates.
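One place where this can be observed is summation in single precision. The sketch below assumes NumPy, whose `sum` typically uses pairwise summation on contiguous floating-point arrays; that is a property of this particular library routine rather than of vectorization in general. It compares the rounding error of a naive running sum against the library call.

```python
import numpy as np

values = np.full(200_000, 0.1, dtype=np.float32)

# Reference computed in float64 from the value actually stored in float32.
reference = float(values[0]) * values.size

# Naive running sum kept in float32 throughout.
acc = np.float32(0.0)
for v in values:
    acc = acc + v

loop_error = abs(float(acc) - reference)
vec_error = abs(float(np.sum(values)) - reference)
print(loop_error, vec_error)
```

In this setup the running sum drifts noticeably further from the reference than the library call does, because each addition to a large accumulator rounds away part of the small addend.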

4. Optimizes Memory Access Patterns

Efficient computation relies on how data is arranged and accessed in memory.

Vectorization encourages the use of contiguous memory blocks, enabling faster loading and storing of data.

This reduces cache misses and improves memory throughput, two factors that significantly influence deep learning performance.

When computation flows sequentially through well-structured tensors, hardware can prefetch data more effectively, reducing delays caused by random or scattered memory access.
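Memory layout can be inspected directly. The following sketch assumes NumPy and shows that a transposed view shares the original buffer but is no longer contiguous in row-major order, while `np.ascontiguousarray` produces a contiguous copy.

```python
import numpy as np

a = np.arange(12, dtype=np.float32).reshape(3, 4)  # row-major (C order)
t = a.T                                            # view: same buffer, strides swapped
t_copy = np.ascontiguousarray(t)                   # new buffer laid out row by row

a_contiguous = a.flags["C_CONTIGUOUS"]
t_contiguous = t.flags["C_CONTIGUOUS"]
copy_contiguous = t_copy.flags["C_CONTIGUOUS"]

# Strides are the byte steps needed to move one element along each axis.
print(a.strides, t.strides, t_copy.strides)
```

Reading `t` row by row jumps through memory in steps of 16 bytes, whereas the contiguous copy is read in consecutive 4-byte steps, which is the access pattern caches and prefetchers handle best.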

5. Enhances Scalability for Large Models

Modern neural architectures such as Transformers, CNNs, and Graph Neural Networks involve large tensors with millions or billions of elements.

Vectorization ensures that these large structures can be manipulated without per-element loop overhead dominating compute time.

As the model scales, vectorized operations scale proportionally, enabling researchers and engineers to experiment with deeper layers, larger embeddings, and wider networks without rewriting algorithms or worrying about extreme slowdowns.

6. Enables Real-Time Processing for Critical Applications

Vectorization significantly reduces latency by performing large operations simultaneously, which is vital for systems requiring instant responses—such as autonomous driving, fraud detection, robotics, and voice assistants.

Instead of processing one input at a time, vectorization handles entire batches in a single optimized execution.

This bulk processing shortens inference cycles, ensuring rapid decision-making even when handling high-volume data streams.

As a result, models can meet demanding real-time constraints without sacrificing accuracy or throughput.

7. Enhances Energy Efficiency and Cost Savings

Vectorized operations reduce redundant instruction dispatch and memory traffic, which can lead to lower electricity consumption and reduced heat generation in GPUs and TPUs.

By performing thousands of floating-point operations in one unified step, the system avoids repeated instruction calls, helping organizations reduce cloud computing bills and infrastructure demands.

This advantage becomes increasingly valuable in large-scale training settings, where energy and compute costs can escalate quickly.

Ultimately, vectorization supports sustainable AI practices by improving resource efficiency.

8. Simplifies Large-Batch Training and Parallelism

Training deep neural networks with large batch sizes is only feasible because vectorized operations allow seamless processing of huge data blocks at once.

Larger batches reduce the variance of gradient estimates and enable highly parallel distributed training across multiple GPUs or nodes.

The uniform structure of vectorized data reduces synchronization delays and communication overhead between devices.

This makes large-scale optimization more predictable and efficient, particularly for enormous models like GPT, BERT, or Vision Transformers.
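The reason a batch can be split across devices is that, for a mean loss and equal-size shards, the average of the per-shard gradients equals the full-batch gradient. The sketch below simulates this on one machine with NumPy; the four "devices" are just array slices, and the linear model and `mse_grad` helper are illustrative assumptions.

```python
import numpy as np

def mse_grad(w, X, y):
    """Gradient of 0.5 * mean((X w - y)^2) with respect to w."""
    residual = X @ w - y
    return X.T @ residual / X.shape[0]

rng = np.random.default_rng(0)
X = rng.normal(size=(8, 3))
y = rng.normal(size=8)
w = rng.normal(size=3)

# Gradient computed on the whole batch at once.
full_grad = mse_grad(w, X, y)

# Simulate 4 devices, each holding an equal-size shard of the batch.
shard_grads = [
    mse_grad(w, X_shard, y_shard)
    for X_shard, y_shard in zip(np.split(X, 4), np.split(y, 4))
]
avg_grad = np.mean(shard_grads, axis=0)
```

In a real distributed setup the averaging step is performed by a collective communication operation; here it is a plain `np.mean`.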

9. Supports Advanced Mathematical Operations Needed in Modern AI

Operations such as convolution, attention mechanisms, multi-head projections, and normalization layers rely heavily on tensor-level computations.

Vectorization ensures these mathematically complex tasks are expressed compactly and executed swiftly.

Without efficient tensor operations, cutting-edge architectures like Transformers or diffusion models would be computationally infeasible.

Thus, vectorization forms the foundation that enables innovation in deep learning research and model evolution.
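As an illustration of how compactly such operations can be expressed, here is a minimal sketch of scaled dot-product attention written with batched matrix products in NumPy. It omits masking, multiple heads, and learned projections, and the tensor sizes are arbitrary choices for the example.

```python
import numpy as np

def softmax(x, axis=-1):
    """Softmax with the maximum subtracted first to avoid overflow."""
    shifted = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / np.sum(e, axis=axis, keepdims=True)

def attention(Q, K, V):
    """Scaled dot-product attention over a whole batch at once."""
    d_k = Q.shape[-1]
    # (batch, q_len, d_k) @ (batch, d_k, k_len) -> (batch, q_len, k_len)
    scores = Q @ np.swapaxes(K, -1, -2) / np.sqrt(d_k)
    weights = softmax(scores, axis=-1)
    # (batch, q_len, k_len) @ (batch, k_len, d_v) -> (batch, q_len, d_v)
    return weights @ V, weights

rng = np.random.default_rng(0)
Q = rng.normal(size=(2, 4, 8))    # batch=2, 4 queries, d_k=8
K = rng.normal(size=(2, 5, 8))    # 5 keys
V = rng.normal(size=(2, 5, 16))   # 5 values, d_v=16

out, weights = attention(Q, K, V)
```

Every query attends to every key in two matrix products and one softmax, with no explicit loop over batch items or sequence positions.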

Challenges of Vectorization

Vectorization is a core technique behind high-performance deep learning, but it is not without practical challenges.

Understanding these limitations helps practitioners make informed design choices and balance speed, memory, and maintainability.

1. Complex Implementation for Irregular Data Structures

While vectorization works extremely well for structured data, tasks involving ragged sequences, conditional logic, or non-uniform tensors introduce complications.

Rewriting such operations in a vectorized form often requires advanced transformations, padding strategies, or restructuring algorithms.

This complexity may lead to unintuitive solutions or inefficient memory usage, making development harder and more error-prone.
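Conditional logic is a simple case of this restructuring. The sketch below, assuming NumPy and using a leaky ReLU as a hypothetical example, replaces a per-element `if` with `np.where`. Note that `np.where` evaluates both branches for every element, which is one source of the extra work mentioned above.

```python
import numpy as np

def leaky_relu_loop(x, slope=0.01):
    """Per-element branch inside a Python loop."""
    out = np.empty_like(x)
    for i, v in enumerate(x):
        if v > 0:
            out[i] = v
        else:
            out[i] = slope * v
    return out

def leaky_relu_vectorized(x, slope=0.01):
    """Same branch expressed as an element-wise select."""
    return np.where(x > 0, x, slope * x)

x = np.array([-2.0, -0.5, 0.0, 1.5])
y_loop = leaky_relu_loop(x)
y_vec = leaky_relu_vectorized(x)
```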

2. Memory Limitations on Large-Scale Models

Vectorized operations require holding large tensors in memory, which becomes problematic as models and batch sizes grow.

GPUs, despite their computational power, are constrained by limited VRAM. When tensors exceed memory capacity, training either slows dramatically because of recomputation or swapping to host memory, or fails outright with out-of-memory errors.

Efficient memory management becomes essential, and developers must balance model size with available hardware.
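A quick back-of-the-envelope estimate helps with this balance. The sketch below computes the size of a single dense tensor from its shape and element width; the shape used is a hypothetical activation tensor chosen for illustration, and real training also needs memory for weights, gradients, optimizer state, and other activations.

```python
import math

def tensor_bytes(shape, bytes_per_element):
    """Memory needed to store one dense tensor of the given shape."""
    return math.prod(shape) * bytes_per_element

# Hypothetical activation tensor: batch 64, sequence length 2048, hidden size 4096.
shape = (64, 2048, 4096)

size_fp16 = tensor_bytes(shape, 2)   # 2 bytes per FP16 element
size_fp32 = tensor_bytes(shape, 4)   # 4 bytes per FP32 element

gib_fp16 = size_fp16 / 2**30
gib_fp32 = size_fp32 / 2**30
print(f"FP16: {gib_fp16} GiB, FP32: {gib_fp32} GiB")
```

Halving the batch size or the element width halves this figure, which is why both are common levers when a model does not fit.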

3. Debugging Becomes More Difficult

Vectorized operations hide low-level computation steps inside large bulk operations.

While this improves speed, it makes debugging more challenging because errors often originate deep inside compiled kernels.

When issues such as shape mismatches, exploding values, or silent precision errors arise, it becomes harder to isolate the individual failing elements than in loop-based code, where failures occur at predictable steps.
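Lightweight checks around bulk operations can recover some of that visibility. The helpers below are a hypothetical sketch using NumPy, not part of any framework: one reports where non-finite values sit in a tensor, the other validates shapes before a matrix product so the error message names the offending dimensions.

```python
import numpy as np

def find_non_finite(tensor):
    """Return the indices of NaN or Inf entries, one row per bad element."""
    return np.argwhere(~np.isfinite(tensor))

def checked_matmul(a, b):
    """Matrix product that fails early with an explicit shape message."""
    if a.shape[-1] != b.shape[0]:
        raise ValueError(f"shape mismatch: {a.shape} @ {b.shape}")
    return a @ b

x = np.array([[1.0, np.nan],
              [np.inf, 4.0]])
bad_indices = find_non_finite(x)
print(bad_indices.tolist())
```

Checks like these cost extra passes over the data, so they are usually enabled only while debugging.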

4. Requires Specialized Hardware and Libraries

To fully benefit from vectorization, developers rely on GPUs, TPUs, and optimized linear algebra libraries.

Without this hardware, vectorized operations still work and usually still outperform interpreted loops on a CPU, but they may not deliver the speedups that large models require.

This reliance creates barriers for small organizations or students who lack high-performance computing resources.

Additionally, compatibility issues across frameworks can arise when kernels behave differently on various devices.

5. Increased Complexity in Algorithm Design

Designing algorithms that are both fully vectorized and mathematically correct requires careful thinking.

Many naïve implementations cannot be directly converted into matrix operations without altering the underlying logic.

Engineers must find creative ways to preserve functionality while restructuring computations into tensor-based forms, an effort that often requires deep mathematical understanding and familiarity with linear algebra.
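Pairwise squared distances are a typical example of such a restructuring. The double loop below is the direct definition; the vectorized version relies on the identity ||a - b||^2 = ||a||^2 + ||b||^2 - 2 a·b. This is a sketch assuming NumPy, with illustrative function names.

```python
import numpy as np

def pairwise_sq_dists_loop(A, B):
    """Squared Euclidean distance between every row of A and every row of B."""
    out = np.zeros((A.shape[0], B.shape[0]))
    for i in range(A.shape[0]):
        for j in range(B.shape[0]):
            diff = A[i] - B[j]
            out[i, j] = np.dot(diff, diff)
    return out

def pairwise_sq_dists_vectorized(A, B):
    """Same result from ||a||^2 + ||b||^2 - 2 a.b, using one matrix product."""
    a_sq = np.sum(A * A, axis=1)[:, None]   # column of squared norms, shape (n, 1)
    b_sq = np.sum(B * B, axis=1)[None, :]   # row of squared norms, shape (1, m)
    d = a_sq + b_sq - 2.0 * (A @ B.T)
    return np.maximum(d, 0.0)               # clip tiny negatives caused by rounding

rng = np.random.default_rng(0)
A = rng.normal(size=(6, 3))
B = rng.normal(size=(4, 3))

D_loop = pairwise_sq_dists_loop(A, B)
D_vec = pairwise_sq_dists_vectorized(A, B)
```

The final clipping step is an instance of the point above: the algebraic rewrite is mathematically equivalent, but rounding can produce small negative values that the direct loop never would.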

6. Difficulties in Handling Dynamic Sequence Lengths and Conditional Paths

Many real-world datasets involve variable-length sequences, irregular structures, or conditional decisions within the network.

Vectorizing such workflows requires padding, masking, or restructuring logic into global operations, which increases computational waste and memory usage.

These techniques can sometimes degrade performance, especially when a large portion of the batch contains padded or unnecessary elements.

Achieving full vectorization without overcomplicating the code becomes a delicate and technically demanding balance.
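Padding and masking can be sketched in a few lines. The example below assumes NumPy and three made-up sequences; it pads them to a common length, builds a boolean mask, computes a per-sequence mean that ignores padding, and reports how much of the padded tensor is wasted.

```python
import numpy as np

sequences = [[1.0, 2.0, 3.0], [4.0], [5.0, 6.0]]   # ragged input
lengths = np.array([len(s) for s in sequences])
max_len = int(lengths.max())

# Pad every sequence with zeros up to the longest length.
padded = np.zeros((len(sequences), max_len))
for i, seq in enumerate(sequences):
    padded[i, :len(seq)] = seq

# mask[i, t] is True where position t holds real data for sequence i.
mask = np.arange(max_len)[None, :] < lengths[:, None]

# Mean over real positions only.
masked_mean = (padded * mask).sum(axis=1) / lengths

# Share of the padded tensor that is padding rather than data.
padding_fraction = 1.0 - mask.sum() / mask.size
print(masked_mean, padding_fraction)
```

Here a third of the padded tensor is padding, which is computed on and stored even though it contributes nothing to the result.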

7. Precision Issues When Using Mixed-Precision or Quantization

Efficient computation often involves using mixed-precision training (FP16/FP8) or quantized operations to speed up throughput.

However, vectorized kernels in low precision may introduce rounding errors or numerical instability that propagate through large matrices.

These subtle inaccuracies can accumulate, causing training divergence or degraded inference quality.

Applying loss scaling correctly, or falling back to higher precision for sensitive operations, requires extra steps and adds complexity to otherwise straightforward vectorized pipelines.
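The underflow problem and the idea behind loss scaling can be shown with a single number. This sketch uses NumPy's `float16` type as a stand-in for FP16 hardware arithmetic; the gradient value and the scale factor of 1024 are arbitrary choices for illustration, and real frameworks typically adjust the scale dynamically.

```python
import numpy as np

true_grad = 1e-8     # a very small gradient value
scale = 1024.0       # illustrative loss-scale factor

# Stored directly in FP16, the value is below the smallest representable
# magnitude and rounds to zero.
unscaled_fp16 = np.float16(true_grad)

# Scaling first keeps the value inside the FP16 range.
scaled_fp16 = np.float16(true_grad * scale)

# Unscale in FP32 before the value is used for the weight update.
recovered = np.float32(scaled_fp16) / np.float32(scale)
print(unscaled_fp16, scaled_fp16, recovered)
```

The recovered value is close to the original but not identical, since the scaled number is still rounded to FP16 precision.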

8. Hardware-Specific Behavior Causes Inconsistencies

Different GPUs, TPUs, and CPU architectures implement vector instructions differently.

This can result in varying performance characteristics, small numerical deviations, or unexpected bottlenecks when moving models across devices.

Developers may need to rewrite kernels, refactor models, or adjust settings to optimize performance on each platform.

This dependency on specialized hardware knowledge becomes a challenge for teams aiming for fully portable and reproducible deep learning workflows.
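One root cause of these small deviations is that floating-point addition is not associative, so a kernel that sums in a different order can return a slightly different result. The sketch below shows the effect in plain Python and the usual remedy of comparing with a tolerance; the tolerance value is an arbitrary example.

```python
import math

# The same three numbers, added in two different orders.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)

exactly_equal = left_to_right == right_to_left
close_enough = math.isclose(left_to_right, right_to_left, rel_tol=1e-9)
print(exactly_equal, close_enough)
```

Tests that compare outputs across devices are therefore usually written with tolerances rather than exact equality.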

9. Increasing Difficulty in Profiling and Identifying Bottlenecks

When operations are vectorized, performance bottlenecks often shift from Python code to underlying kernels.

Python-level debuggers and profilers become less effective because the slowdowns are buried inside compiled kernels written in C++, CUDA, or hardware-specific libraries.

Developers must rely on advanced profiling tools that require deep expertise to interpret.

Without proper analysis, optimization efforts may fail to identify the true source of inefficiency or excessive memory usage.
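Even before reaching for a dedicated profiler, a simple timing harness gives a first measurement. The sketch below uses Python's standard `timeit` module with NumPy on the CPU; the two functions are illustrative. On a GPU, kernels usually run asynchronously, so wall-clock timing needs an explicit synchronization step that is not shown here.

```python
import timeit
import numpy as np

x = np.random.default_rng(0).normal(size=100_000)

def sum_squares_loop():
    """Sum of squares with an explicit Python loop."""
    total = 0.0
    for v in x:
        total += v * v
    return total

def sum_squares_vectorized():
    """Sum of squares as a single dot product."""
    return float(np.dot(x, x))

# Take the best of several runs to reduce noise from other processes.
loop_time = min(timeit.repeat(sum_squares_loop, number=1, repeat=3))
vec_time = min(timeit.repeat(sum_squares_vectorized, number=1, repeat=3))
print(f"loop: {loop_time:.6f} s, vectorized: {vec_time:.6f} s")
```

Measured times vary by machine, so the numbers are only meaningful when compared on the same hardware.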

10. Significant Learning Curve for Beginners

Mastering vectorized programming requires understanding linear algebra, tensor operations, memory layouts, and optimization principles.

Beginners who are used to writing simple loops may find it challenging to think in terms of matrix transformations and bulk computations.

This steep learning curve can slow adoption and lead to poorly optimized code if the developer is unfamiliar with best practices or high-performance computing concepts.