These operations work together to transform raw images into multi-level feature maps. Early layers capture simple features, while deeper layers detect complex structures like objects or scenes.

Understanding the principles, advantages, limitations, and practical applications of convolution, pooling, and padding is essential for designing accurate, robust, and computationally efficient CNNs.

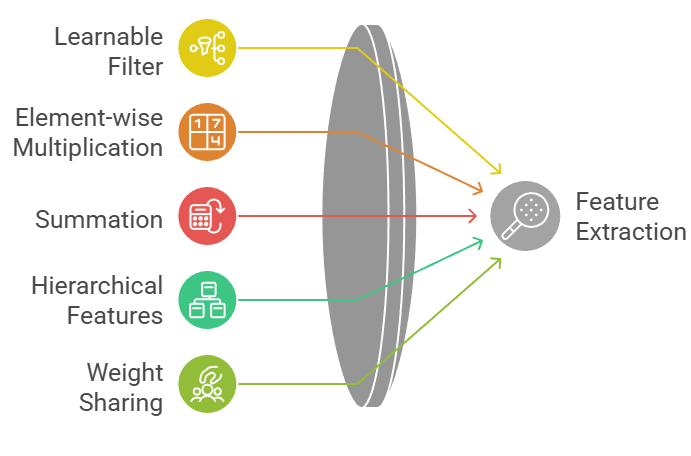

Convolution

Convolution involves applying a learnable filter (kernel) across the input feature map or image to extract relevant features.

Each kernel slides over the image, performing element-wise multiplications and summing results to form a feature map.

Multiple convolution layers capture hierarchical features, from edges and textures in early layers to complex structures in deeper layers.

Convolution reduces parameter count through weight sharing, enabling efficient learning for high-dimensional data.

Advantages

1. Efficient Feature Extraction

Convolutional layers use weight sharing, meaning the same filter is applied across the entire input image.

This drastically reduces the number of parameters compared to fully connected layers, enabling deep networks to be trained efficiently.

Each filter learns to detect a specific feature, such as an edge or a texture, and the combination of multiple filters produces rich hierarchical feature representations.

This allows CNNs to extract meaningful patterns automatically from raw data, which is essential for complex tasks like object detection and scene understanding.

By efficiently encoding spatial correlations, convolution minimizes redundancy and improves computational performance, making deep networks feasible on large images.

2. Hierarchical Feature Learning

Convolutional layers are stacked to progressively learn more complex features. Initial layers capture low-level structures like edges, corners, and textures, while deeper layers represent high-level patterns such as object parts or entire objects.

This hierarchical approach mimics human visual processing and allows the network to recognize objects under different scales, rotations, or orientations.

By learning features in layers, CNNs can generalize across multiple inputs and adapt to diverse applications without manual feature engineering, which is a significant advantage in real-world computer vision tasks.

3. Translation Invariance

The sliding window mechanism of convolution ensures that features can be detected regardless of their spatial location in the input.

This property provides translation invariance, meaning that even if an object moves within the image, the network can still recognize it.

Translation invariance is crucial for real-world scenarios where objects may not be perfectly aligned or centered.

It also improves model robustness, reduces sensitivity to minor positional shifts, and contributes to better generalization across different inputs without requiring additional data augmentation.

4. Parameter Efficiency

Convolutional layers require fewer parameters due to shared weights, which makes them more memory- and computation-efficient than fully connected layers.

This efficiency allows the creation of deeper networks without overfitting on limited datasets.

Because fewer parameters are being learned, the model can focus on extracting meaningful spatial patterns rather than memorizing irrelevant noise.

This property is especially beneficial for training high-resolution images or large datasets on limited hardware, making convolution the backbone of modern deep learning architectures.

5. Multi-Channel Feature Learning

Convolution can process multi-channel inputs such as RGB images by learning filters that combine information across channels.

This enables the network to capture complex color, texture, and intensity relationships simultaneously.

Multi-channel learning enhances feature diversity, allowing the model to recognize patterns that are defined by interactions between channels, such as color gradients or textures that involve multiple colors.

This capability is essential for realistic image processing tasks where features are rarely restricted to a single channel.

6. Flexibility in Feature Detection

By adjusting kernel size, stride, and the number of filters, convolutional layers can detect features at different scales and resolutions.

Small kernels capture fine details, while larger kernels capture broader structures.

Stride variations control the step size of the filter, balancing feature coverage and computational cost.

This flexibility allows the network to handle diverse visual patterns efficiently and adapt to various image sizes or resolutions.

It provides a powerful mechanism to build robust, multi-scale feature representations in deep CNNs.

Disadvantages

1. Limited Receptive Field in Shallow Networks

Shallow convolutional layers only observe small regions of the input image, which can prevent the network from capturing global context.

This limitation can reduce performance on tasks requiring understanding of large objects or long-range dependencies.

To overcome this, networks must be made deeper or use larger kernels, both of which increase computational cost.

Without sufficient receptive field coverage, the network may fail to detect patterns that span multiple regions, limiting its effectiveness in complex visual tasks.

2. Computational Cost in Deep Networks

Although convolution is efficient per layer, stacking multiple layers in a deep network increases computation and memory requirements significantly.

High-resolution images and numerous filters per layer exacerbate this problem.

Training and inference may require specialized hardware like GPUs or TPUs, and memory limitations can restrict batch size, slowing down convergence.

Therefore, while convolution reduces parameters, deep architectures can still be computationally intensive.

3. Sensitivity to Hyperparameters

Convolutional layers depend heavily on kernel size, stride, number of filters, and padding. Poor selection of these hyperparameters can result in underfitting, overfitting, or the loss of important features.

Careful experimentation and tuning are necessary to optimize performance, which can be time-consuming.

Misconfigured layers may compromise the network’s ability to learn meaningful representations, particularly for complex datasets.

4. Edge Information Loss Without Padding

Without proper padding, convolution filters cannot access border pixels, leading to potential loss of information near edges.

This may reduce the accuracy of feature maps, particularly for tasks like object detection where edge features are critical.

Padding can mitigate this issue, but improper configuration may still affect output dimensions and filter performance.

5. Difficulty Capturing Global Context

Convolution focuses on local regions, making it challenging to capture relationships across distant regions in the image.

Large kernels or deeper networks are needed to cover global context, increasing computation.

This can be problematic for tasks that require holistic understanding, such as scene classification or multi-object interactions, where local features alone are insufficient.

6. Potential Overfitting on Small Datasets

Deep convolutional networks have high capacity and can easily overfit if the dataset is small or noisy.

They may memorize irrelevant patterns rather than learning meaningful features.

Regularization techniques like dropout or transfer learning are often necessary to prevent overfitting and ensure that the model generalizes well to unseen data.

Example

3x3 convolution filter applied to a 224x224 RGB image in PyTorch:

pythonimport torch.nn as nn

conv_layer = nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1, padding=1)

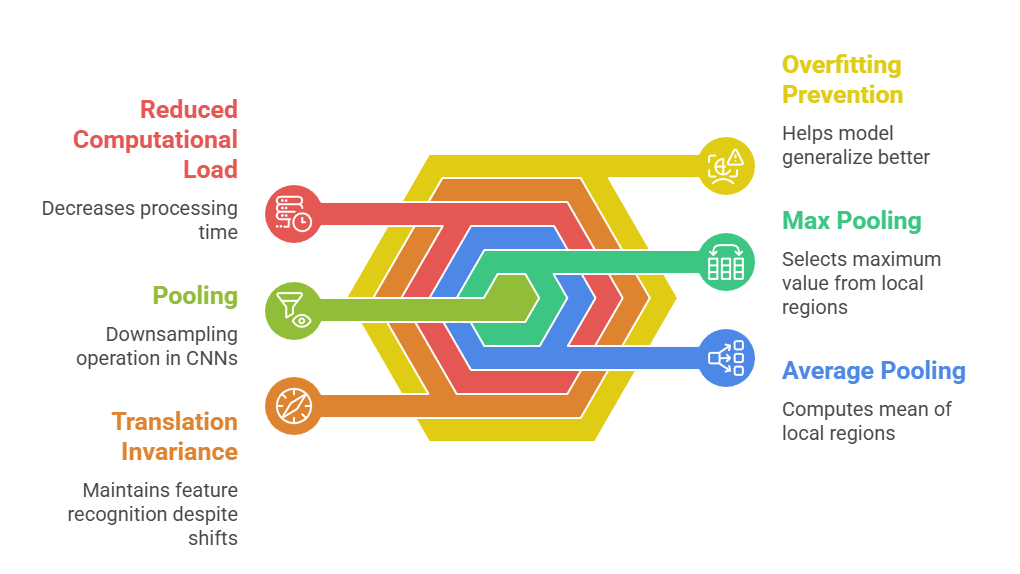

Pooling

Pooling is a downsampling operation used in CNNs to reduce the spatial dimensions of feature maps while preserving important information.

Pooling is a downsampling operation used in CNNs to reduce the spatial dimensions of feature maps while preserving important information.

It summarizes local regions by applying operations such as max pooling (selecting the maximum value) or average pooling (computing the mean).

Pooling reduces computational load, introduces translation invariance, and helps prevent overfitting.

It is typically applied after convolution layers to compress feature maps and emphasize the most significant features.

Pooling also supports hierarchical feature learning by simplifying the representations passed to deeper layers. Its design choices—pooling type, window size, and stride—directly impact network performance and generalization.

Despite being simple, pooling is essential for efficient and effective CNN architectures.

Advantages of Pooling

1. Dimensionality Reduction

Pooling reduces the spatial size of feature maps, which decreases the number of parameters and computational operations required in subsequent layers.

This dimensionality reduction makes networks more memory- and computation-efficient, allowing them to scale to high-resolution inputs.

Despite reducing data, pooling retains the most relevant information, enabling the network to focus on critical features while discarding redundant details.

By compressing feature maps, pooling also accelerates training and inference, which is crucial for practical applications in real-time image processing or large datasets.

2. Translation Invariance

Pooling ensures that small shifts or translations in the input do not significantly affect the output of the network.

By summarizing features within local regions, the model can recognize objects even if their position changes slightly.

This invariance is particularly useful in real-world images, where objects may not be perfectly centered or aligned.

Translation invariance enhances the robustness of CNNs, improves generalization across diverse input conditions, and reduces the need for extensive data augmentation.

3. Regularization Effect

Pooling acts as a form of implicit regularization by simplifying the feature maps. Reducing spatial complexity limits the network’s ability to memorize noise or irrelevant patterns, which helps prevent overfitting.

It encourages the model to focus on broader, more generalizable features rather than overly specific local patterns.

This property is particularly beneficial when training deep networks on limited datasets, as it enhances generalization without adding explicit regularization terms like dropout.

4. Supports Hierarchical Feature Learning

Pooling helps construct hierarchical representations by compressing low-level features and emphasizing essential patterns.

Early layers’ pooling focuses on edges or textures, while deeper layers’ pooling abstracts higher-level features, such as object shapes or structures.

This hierarchy allows CNNs to learn multi-scale representations efficiently.

By reducing redundant information and emphasizing important features, pooling simplifies subsequent layers’ tasks and improves the network’s ability to detect complex patterns.

5. Computational Efficiency

Pooling reduces the spatial size of feature maps, which directly decreases the number of operations required in later layers.

Smaller feature maps mean fewer multiplications, less memory usage, and faster processing, especially in deep networks.

This efficiency is crucial for practical applications like real-time video analysis or mobile deployment, where computational resources are limited.

Pooling thus allows deeper architectures without proportional increases in computation.

6. Flexibility in Downsampling

Pooling parameters such as window size, stride, and pooling type can be adjusted to control the level of downsampling.

Max pooling emphasizes prominent features, while average pooling smooths feature maps.

By selecting the appropriate configuration, pooling can balance the retention of important information with dimensionality reduction. This flexibility allows network designers to tailor pooling operations to the requirements of different tasks and datasets, enhancing model performance.

Disadvantages of Pooling

1. Loss of Fine Details

Pooling reduces spatial resolution, which can discard important information such as edges or small features.

In tasks like image segmentation or object localization, this loss may lead to decreased accuracy.

Excessive pooling can oversimplify feature maps, removing subtle patterns that are critical for detecting fine-grained differences.

Therefore, pooling must be carefully balanced with the need to retain sufficient spatial information for the task.

2. Fixed Operation Limitations

Traditional pooling operations are static and do not adapt to varying input sizes or object scales.

Max or average pooling cannot learn to emphasize more relevant features dynamically.

This rigidity can reduce performance when inputs vary significantly, requiring alternative methods like adaptive pooling or strided convolutions to provide more flexible downsampling.

3. Potential Information Bottleneck

Aggressive pooling over large windows can compress feature maps too much, creating a bottleneck where important information is lost.

This can impair the network’s ability to capture detailed or complex patterns, limiting representational power.

Careful design choices are required to avoid over-compression while still benefiting from reduced computational load.

4. No Learning Capability

Pooling does not involve learnable parameters, meaning it cannot adapt to specific patterns in the data.

It treats all local regions equally, which may ignore subtle but important features.

The inability to learn feature weighting limits pooling’s flexibility compared to convolution or attention mechanisms.

5. Over-Aggregation Risk

Pooling merges information from local regions, which can sometimes combine unrelated features.

This over-aggregation may blur important distinctions in the input and reduce feature discriminability.

In tasks requiring precise spatial relationships, such as facial recognition or segmentation, over-aggregation can negatively affect performance.

6. Sensitivity to Pooling Configuration

Incorrect choices of pooling size, stride, or type can lead to uneven feature coverage or loss of crucial information.

For Example, too large a window may excessively reduce resolution, while too small may provide insufficient compression.

Selecting pooling parameters requires experimentation and careful consideration of the dataset and task to optimize model performance.

Example

Max pooling 2x2 applied to a feature map:

pythonpool_layer = nn.MaxPool2d(kernel_size=2, stride=2)

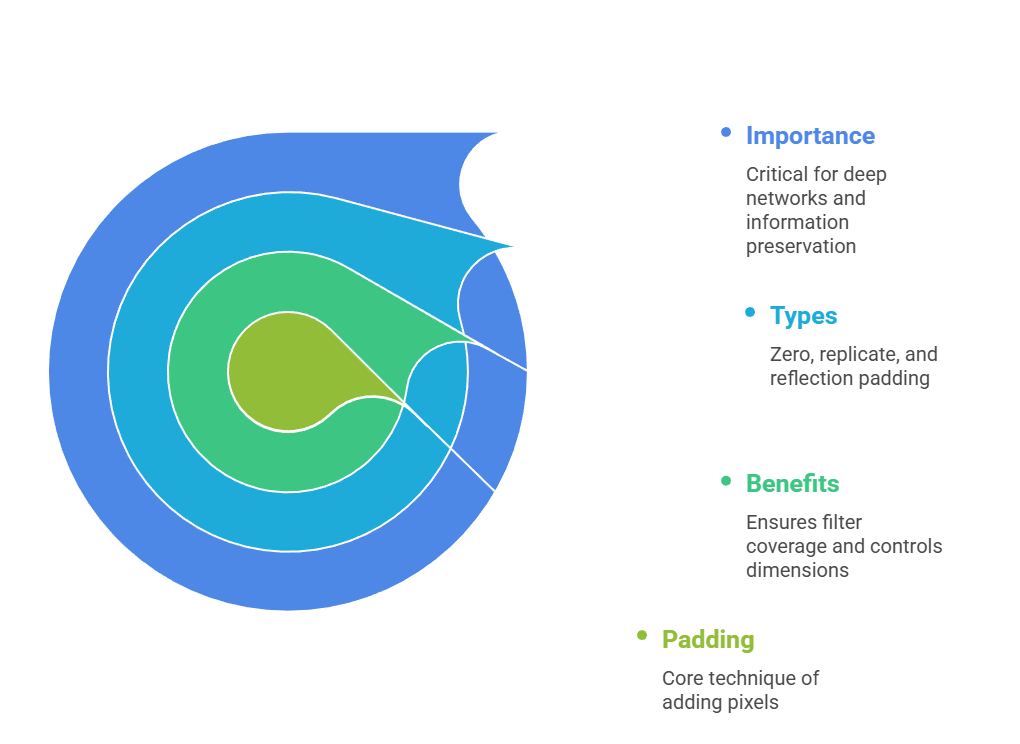

Padding

Padding involves adding extra pixels around the input feature map before convolution.

This ensures that convolution filters can cover edge pixels and controls output dimensions.

Common padding types include zero-padding, replicate-padding, and reflection-padding.

Padding is critical for deep networks to preserve spatial dimensions, prevent information loss at borders, and support stacking of multiple convolution layers.

Advantages of Padding

1. Preserves Spatial Dimensions

Padding ensures that the output feature map maintains the same width and height as the input, which is crucial for stacking multiple layers without excessive shrinkage.

2. Captures Edge Information

Without padding, convolution ignores border pixels. Padding allows filters to detect features at the edges, improving feature representation quality.

3. Supports Deeper Networks

By maintaining feature map dimensions, padding allows multiple convolution layers to be stacked without reducing spatial size excessively, enabling hierarchical feature learning.

4. Flexibility in Kernel and Stride Selection

Padding allows the use of larger kernels and varying strides without reducing output dimensions drastically. This provides flexibility for architectural design.

5. Improves Receptive Field Coverage

Padding ensures that convolution filters can interact with all regions of the input, including borders, which enhances the network’s ability to capture context.

6. Prevents Early Information Loss

Proper padding prevents early layers from discarding critical edge or corner features, which is essential for tasks like object detection and segmentation.

Disadvantages of Padding

1. Introduction of Artificial Pixels

Zero-padding adds artificial values that do not represent real information. Excessive padding may slightly distort learned features or introduce artifacts.

2. Slight Computational Overhead

Padding increases the number of operations per convolution, which may affect computation in very deep networks with large inputs.

3. Requires Careful Alignment

Improper padding combined with stride or kernel size can cause dimension mismatches or uneven coverage, leading to errors.

4. Potential Overfitting at Borders

Repeated padding in deep layers may emphasize edge artifacts, causing the model to overfit to artificial patterns at borders.

5. May Increase Memory Usage

Adding padding slightly enlarges feature maps, increasing memory requirements during training, particularly for high-resolution inputs.

6. Does Not Learn Features

Padding is a static operation and does not contribute to learning. The network relies entirely on convolution filters to interpret the added pixels.

Example

Zero-padding applied in PyTorch convolution:

pythonconv_layer = nn.Conv2d(in_channels=3, out_channels=64, kernel_size=3, stride=1, padding=1)