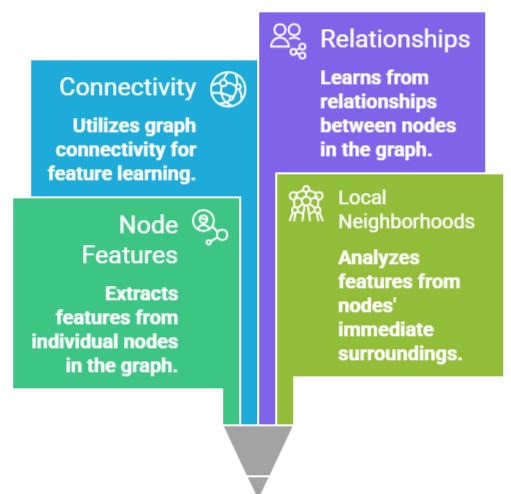

Graph Neural Networks (GNNs) are a specialized class of deep learning models designed to operate on graph-structured data, where nodes represent entities and edges represent relationships.

Standard GNNs rely on message passing to aggregate neighborhood information, but different applications require variations in aggregation strategies, weighting, and sampling.

Among the most widely used variants are Graph Convolutional Networks (GCN), Graph Attention Networks (GAT), and GraphSAGE.

Each of these architectures has been developed to address specific challenges such as scalability, heterogeneous graphs, inductive learning, and capturing complex dependencies.

Graph Convolutional Network (GCN)

A Graph Convolutional Network (GCN) is a type of neural network designed to operate directly on graph-structured data by extending the concept of convolution from Euclidean domains (like images) to graphs.

In a GCN, each node updates its representation by aggregating feature information from its immediate neighbors using a normalized weighting scheme, effectively combining node attributes with local graph topology.

This allows the network to capture patterns in node connectivity while maintaining computational efficiency.

GCNs are widely used for tasks such as node classification, link prediction, and graph classification where relational information is critical.

By propagating information through neighboring nodes, GCNs leverage both the features and the structure of the graph to produce meaningful embeddings.
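The normalized neighbor aggregation described above can be sketched in a few lines. This is a minimal illustration, assuming the commonly used symmetric normalization with self-loops, ReLU(D^-1/2 (A + I) D^-1/2 X W), and using plain Python lists to stay dependency-free. The function name `gcn_layer`, the toy path graph, and the identity weight matrix are all hypothetical choices made for the example.

```python
import math

def gcn_layer(adj, feats, weight):
    """One GCN-style propagation step on a dense adjacency matrix."""
    n = len(adj)
    # Add self-loops so each node keeps part of its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
             for i in range(n)]
    # Node degrees in the self-looped graph.
    deg = [sum(row) for row in a_hat]
    # Symmetric normalization: entry (i, j) is scaled by 1 / sqrt(d_i * d_j).
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]
    in_dim, out_dim = len(weight), len(weight[0])
    # Aggregate neighbor features with the normalized weights.
    agg = [[sum(norm[i][k] * feats[k][f] for k in range(n))
            for f in range(in_dim)] for i in range(n)]
    # Linear transform followed by ReLU.
    return [[max(0.0, sum(agg[i][f] * weight[f][o] for f in range(in_dim)))
             for o in range(out_dim)] for i in range(n)]

# Toy path graph 0 - 1 - 2 with two features per node (illustrative values).
adj = [[0, 1, 0],
       [1, 0, 1],
       [0, 1, 0]]
feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weight = [[1.0, 0.0], [0.0, 1.0]]  # identity, so only the aggregation is visible

hidden = gcn_layer(adj, feats, weight)
```

In practice the weight matrix is learned and the adjacency is stored in sparse form; the dense loops here are only meant to make each step of the update visible.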

Advantages

1. Efficient Local Aggregation

GCNs aggregate information from a node’s immediate neighbors using a degree-normalized weighted average.

By normalizing neighbor contributions, they capture local connectivity patterns efficiently.

This allows the model to learn meaningful node representations while keeping computational cost manageable, making GCNs suitable for medium-sized graphs where local interactions are highly informative.

2. Simplicity and Ease of Implementation

The architecture of GCNs is straightforward and requires relatively few hyperparameters.

Its simplicity makes it easy to implement, train, and tune, which is particularly useful for practitioners new to graph-based deep learning.

Despite the simplicity, GCNs achieve strong performance across a variety of tasks, including node classification and link prediction.

3. Semi-Supervised Learning Capability

GCNs can propagate label information from a few labeled nodes to unlabeled nodes through graph structure.

This ability is particularly valuable in domains where labeled data is scarce, such as citation networks or protein interaction graphs, as it enables effective learning without requiring large annotated datasets.

4. Integration of Node Features and Graph Structure

GCNs combine structural information with node features to produce embeddings that reflect both topology and attributes.

This dual capability allows the model to capture richer relationships between nodes and to perform better in tasks where both connectivity and features matter, such as social network analysis or recommendation tasks.
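The semi-supervised setup from point 3 is often implemented by computing the loss on the labeled nodes only, while the forward pass still runs over the whole graph. The sketch below assumes a standard cross-entropy loss and a boolean mask; `masked_cross_entropy`, the logits, and the mask are hypothetical names and values used for illustration.

```python
import math

def masked_cross_entropy(logits, labels, labeled_mask):
    """Average cross-entropy over labeled nodes; unlabeled nodes are skipped."""
    total, count = 0.0, 0
    for row, label, is_labeled in zip(logits, labels, labeled_mask):
        if not is_labeled:
            continue  # unlabeled nodes contribute no loss term
        # Numerically stable log-sum-exp of the class scores.
        m = max(row)
        log_sum = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum - row[label]
        count += 1
    return total / count if count else 0.0

# Three nodes, two classes; only node 0 has a label (illustrative values).
logits = [[0.0, 0.0], [5.0, -5.0], [1.0, 2.0]]
labels = [0, None, None]
labeled_mask = [True, False, False]

loss = masked_cross_entropy(logits, labels, labeled_mask)
```

Unlabeled nodes still influence training, because their features flow into the labeled nodes' predictions through neighbor aggregation.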

Disadvantages

1. Over-Smoothing of Node Representations

When multiple GCN layers are stacked, node embeddings can become overly similar, losing their discriminative power.

This over-smoothing problem reduces the model’s effectiveness in distinguishing between nodes, especially in deep architectures.

2. Limited Expressiveness in Heterogeneous Graphs

GCNs weight each neighbor by a fixed, degree-based normalization rather than a learned importance score, which may be insufficient when certain neighbors are more relevant than others.

This fixed weighting can reduce performance in heterogeneous or noisy graphs where some relationships carry more significance than others.

3. Scalability Challenges for Large Graphs

In their original formulation, GCNs are trained full-batch over the entire graph, which becomes memory-intensive and slow for large graphs with millions of nodes and edges.

This limits their practical application to small or moderately sized networks unless sampling or other approximations are used.

4. Sensitivity to Noisy or Incomplete Graphs

Since GCNs rely heavily on graph connectivity, missing edges or noisy connections can propagate misleading information, potentially reducing the reliability of the learned representations and downstream predictions.
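The over-smoothing effect from point 1 can be seen in a toy experiment. The sketch below is a simplification: it assumes plain mean aggregation with self-loops and no learned weights or nonlinearity, so it isolates the smoothing behavior rather than reproducing a trained GCN. The helper names and the four-node path graph are hypothetical.

```python
def mean_propagate(adj_list, values):
    """Replace each node's value with the mean over itself and its neighbors."""
    return [
        (values[node] + sum(values[nb] for nb in neighbors)) / (1 + len(neighbors))
        for node, neighbors in enumerate(adj_list)
    ]

def spread(values):
    """Gap between the largest and smallest node value."""
    return max(values) - min(values)

# Path graph 0 - 1 - 2 - 3; only node 0 starts with a non-zero feature.
adj_list = [[1], [0, 2], [1, 3], [2]]
values = [1.0, 0.0, 0.0, 0.0]

spreads = []
for _ in range(20):  # 20 propagation steps stand in for 20 stacked layers
    values = mean_propagate(adj_list, values)
    spreads.append(spread(values))
```

The recorded spread shrinks with every step, meaning the nodes become harder to tell apart from their values alone.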

Graph Attention Network (GAT)

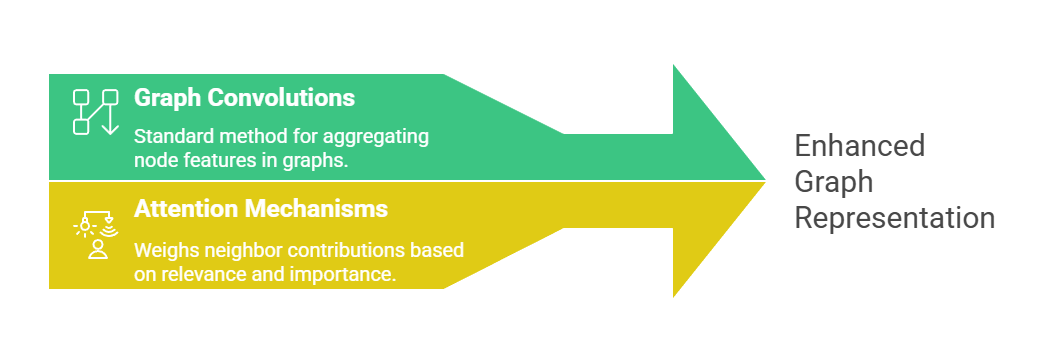

A Graph Attention Network (GAT) is an advanced GNN variant that enhances standard graph convolutions by incorporating attention mechanisms to weigh the contributions of neighboring nodes differently.

Instead of treating all neighbors equally, GATs learn attention coefficients that allow the model to focus more on relevant or informative nodes while down-weighting less important ones.

This adaptive weighting improves learning on heterogeneous or noisy graphs and allows the network to capture subtle patterns in relational data.

GATs are particularly useful in scenarios where certain edges or nodes carry more significance, such as knowledge graphs, social networks, and multi-relational datasets.
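A rough sketch of how attention coefficients can be computed for one node is shown below. It assumes a single attention head, node features that have already passed through the shared linear transform, and the common scoring form LeakyReLU(a · [h_i || h_j]) followed by a softmax over the neighborhood. The names `attention_weights`, `gat_aggregate`, `attn_vec`, and all numeric values are hypothetical.

```python
import math

def leaky_relu(x, slope=0.2):
    # The 0.2 slope is an assumed value for this sketch.
    return x if x > 0 else slope * x

def attention_weights(h, neighbors, node, attn_vec):
    """Softmax-normalized attention of `node` over the given neighbors."""
    scores = []
    for nb in neighbors:
        concat = h[node] + h[nb]  # list concatenation: [h_i || h_j]
        scores.append(leaky_relu(sum(a * v for a, v in zip(attn_vec, concat))))
    # Numerically stable softmax over the neighborhood.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def gat_aggregate(h, neighbors, node, attn_vec):
    """Attention-weighted sum of neighbor features for one node."""
    alphas = attention_weights(h, neighbors, node, attn_vec)
    dim = len(h[node])
    return [sum(alpha * h[nb][d] for alpha, nb in zip(alphas, neighbors))
            for d in range(dim)]

# Node 0 attends over itself and nodes 1 and 2 (illustrative values).
h = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
neighbors_of_0 = [0, 1, 2]
attn_vec = [0.0, 0.0, 1.0, 1.0]  # hypothetical learned attention vector

alphas = attention_weights(h, neighbors_of_0, 0, attn_vec)
new_h0 = gat_aggregate(h, neighbors_of_0, 0, attn_vec)
```

With this particular `attn_vec`, node 2 receives the largest coefficient because its features score highest, which is the kind of non-uniform weighting that a fixed normalization cannot express.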

Advantages

1. Adaptive Neighbor Weighting Through Attention

GATs assign different attention scores to each neighbor, allowing the model to focus on more important nodes during feature aggregation.

This improves representation quality, particularly in graphs where certain relationships are more informative than others, such as social or knowledge graphs with heterogeneous connections.

2. Flexibility Without Preprocessing

Unlike GCNs, which rely on a normalization matrix pre-computed from the whole graph, GATs compute their weights from the features of each connected node pair, making them more flexible and easier to apply to various graph structures.

This reduces preprocessing time and allows the model to handle graphs that are irregular or dynamically changing.

3. Improved Expressiveness

By learning attention coefficients, GATs can capture subtle patterns in node interactions, enhancing model expressiveness.

This is especially useful for tasks where connections vary in importance, such as predicting interactions in multi-relational knowledge graphs.

4. Applicability to Multi-Relational or Heterogeneous Graphs

Attention mechanisms allow GATs to assign different importance to different relationships, which, particularly when combined with edge-type-aware extensions, makes them well-suited for knowledge graphs, social networks, and traffic networks with complex connectivity.

Disadvantages

1. High Computational Complexity

Calculating attention weights for all neighbors introduces computational overhead, particularly in large graphs.

This can result in slower training and inference times compared to simpler aggregation methods.

2. Risk of Overfitting

The flexibility of attention mechanisms can lead to overfitting if the model focuses too heavily on specific neighbors in small or sparse datasets, reducing generalization performance.

3. Partial Interpretability Only

Although attention weights offer some insight into neighbor importance, the overall reasoning process remains difficult to fully interpret, particularly when several GAT layers are stacked.

4. Sensitivity to Hyperparameters

Performance is highly dependent on attention head count, dropout rates, and learning rates. Improper tuning can significantly degrade results, requiring careful experimentation.

Graph Sample and Aggregate (GraphSAGE)

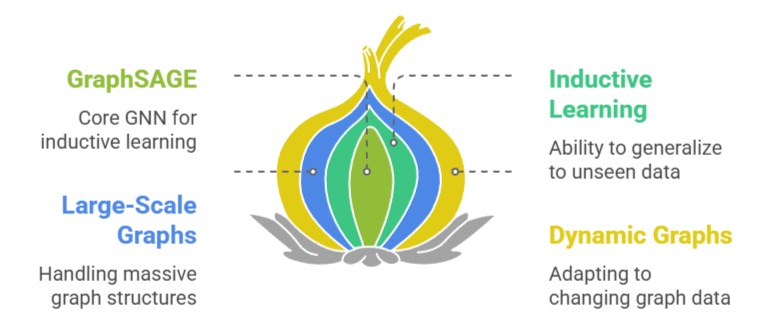

GraphSAGE is a variant of GNNs designed for inductive learning on large-scale or dynamic graphs.

Unlike GCNs, which typically operate in a transductive setting (embedding only seen nodes), GraphSAGE generates embeddings for unseen nodes by sampling a node’s local neighborhood and aggregating features using functions such as mean, LSTM, or pooling.

This enables the model to handle evolving graphs where new nodes constantly appear. GraphSAGE is highly scalable, supports mini-batch training, and is effective for tasks such as recommendation systems, social network analysis, and biological network predictions.

Its sampling-based approach allows it to process massive graphs without the memory limitations of full-batch methods.
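The sample-and-aggregate idea can be sketched as follows. This is a simplified illustration, assuming uniform neighbor sampling and a mean aggregator, and omitting the learned weight matrix and nonlinearity that would normally follow the concatenation. The function names, the toy graph, and the feature values are hypothetical.

```python
import random

def sample_neighbors(adj_list, node, num_samples, rng):
    """Uniformly sample up to `num_samples` neighbors of `node`."""
    neighbors = adj_list[node]
    if len(neighbors) <= num_samples:
        return list(neighbors)
    return rng.sample(neighbors, num_samples)

def sage_mean_embedding(adj_list, feats, node, num_samples, rng):
    """Concatenate a node's own features with the mean of sampled neighbors."""
    sampled = sample_neighbors(adj_list, node, num_samples, rng)
    dim = len(feats[node])
    if sampled:
        neigh_mean = [sum(feats[nb][d] for nb in sampled) / len(sampled)
                      for d in range(dim)]
    else:
        neigh_mean = [0.0] * dim  # isolated node: no neighborhood signal
    return feats[node] + neigh_mean  # list concatenation

# Small star graph stored as adjacency lists (illustrative values).
adj_list = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}
feats = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [2.0, 0.0], 3: [0.0, 3.0]}

# A new node appears after "training"; it only needs features and edges.
adj_list[4] = [1, 2]
feats[4] = [0.5, 0.5]

rng = random.Random(0)
emb_new = sage_mean_embedding(adj_list, feats, 4, num_samples=2, rng=rng)
sampled_for_hub = sample_neighbors(adj_list, 0, 2, rng)
```

Because the embedding is a function of features and sampled neighbors rather than a per-node lookup, the same procedure applies unchanged to node 4, which was not part of the original graph.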

Advantages

1. Inductive Learning for Unseen Nodes

GraphSAGE generates embeddings for previously unseen nodes by sampling and aggregating neighborhood features.

This inductive capability is particularly useful for dynamic graphs, such as social networks or recommendation systems, where new nodes constantly appear.

2. Scalable to Large Graphs

GraphSAGE uses neighborhood sampling and mini-batch training to handle very large graphs efficiently.

This reduces memory usage and allows training on graphs with millions of nodes and edges, overcoming one of the main limitations of GCNs.

3. Flexible Aggregation Functions

GraphSAGE supports multiple aggregation strategies, such as mean, LSTM-based, and pooling aggregators, allowing embeddings to be tailored to different tasks or graph structures.

This flexibility improves performance across diverse domains.

4. Efficient and Parallelizable Training

By sampling neighborhoods rather than using full-batch computation, GraphSAGE reduces computational overhead and allows for parallelized training, making it practical for real-world applications.
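To make the choice of aggregator from point 3 concrete, the sketch below compares a mean aggregator with a simplified pooling aggregator. It assumes element-wise max pooling applied directly to the neighbor features; pooling aggregators typically pass each neighbor through a small learned network first, which is omitted here. The function names and inputs are hypothetical.

```python
def mean_aggregate(neighbor_feats):
    """Element-wise mean over the sampled neighbors' feature vectors."""
    count = len(neighbor_feats)
    return [sum(column) / count for column in zip(*neighbor_feats)]

def max_pool_aggregate(neighbor_feats):
    """Element-wise max over the sampled neighbors' feature vectors."""
    return [max(column) for column in zip(*neighbor_feats)]

# Two sampled neighbors with two features each (illustrative values).
neighbor_feats = [[1.0, 4.0], [3.0, 0.0]]

mean_out = mean_aggregate(neighbor_feats)
max_out = max_pool_aggregate(neighbor_feats)
```

The mean smooths over the neighborhood, while max pooling keeps the strongest signal per feature, so the two can produce quite different embeddings from the same neighbors.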

Disadvantages

1. Sampling Bias

Neighborhood sampling may omit important nodes, which can introduce bias into the learned embeddings.

This can lead to lower quality representations and degraded performance in downstream tasks.

2. Limited Global Context Capture

GraphSAGE primarily focuses on local neighborhoods, which may restrict its ability to model long-range dependencies. Stacking more layers widens the receptive field, but doing so can in turn lead to over-smoothing.

3. Hyperparameter Sensitivity

The choice of sampling size, aggregation type, and layer depth significantly impacts model performance, requiring careful tuning to achieve optimal results.

4. Complexity in Heterogeneous Graphs

Handling graphs with multiple node or edge types may require customized aggregation mechanisms, complicating implementation and potentially reducing efficiency.