Sequence and attention models have become the cornerstone of modern artificial intelligence applications, enabling machines to understand, process, and generate sequential data efficiently.

Their ability to model dependencies in time-series or token-based sequences has revolutionized fields such as natural language processing (NLP), machine translation, and speech recognition.

In NLP, these models allow machines to interpret context, semantics, and syntactic structures, enabling intelligent text analysis, summarization, and conversational AI.

Machine translation benefits from sequence-to-sequence frameworks and attention mechanisms, which map sentences from a source language to a target language while capturing context, idiomatic expressions, and syntactic nuances.

Meanwhile, speech recognition systems leverage recurrent and attention-based architectures to convert spoken language into text, facilitating applications such as virtual assistants, real-time transcription, and accessibility tools for differently-abled users.

The rise of Transformer-based architectures, including BERT and GPT, has further accelerated advancements in these domains by enabling highly parallelized training, long-range dependency modeling, and pretraining on vast unlabeled datasets.

These innovations have substantially improved the accuracy, scalability, and adaptability of AI systems across multiple languages, accents, and domains.
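One concrete difference between BERT and GPT is how self-attention is masked. BERT-style encoders let every token attend to every other token (bidirectional attention), while GPT-style decoders apply a causal mask so each token attends only to itself and earlier tokens. The minimal pure-Python sketch below assumes the raw query-key scores have already been computed; `attention_weights` is a hypothetical helper for illustration, not a library API.

```python
import math


def softmax(scores):
    """Numerically stable softmax; entries equal to -inf get probability 0."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(scores, causal):
    """Turn a square matrix of raw query-key scores into attention weights.

    causal=False: every position attends to every position (BERT-style).
    causal=True:  position i attends only to positions 0..i (GPT-style);
                  "future" positions are set to -inf before the softmax.
    """
    n = len(scores)
    weights = []
    for i in range(n):
        row = [
            scores[i][j] if (not causal or j <= i) else float("-inf")
            for j in range(n)
        ]
        weights.append(softmax(row))
    return weights


if __name__ == "__main__":
    # With equal scores, the causal mask makes early tokens see less context.
    equal = [[0.0] * 3 for _ in range(3)]
    print(attention_weights(equal, causal=True))
    print(attention_weights(equal, causal=False))
```

Because the masked matrix can be computed for all positions at once, a causal model can still be trained in parallel on whole sequences, which is part of the speedup over step-by-step recurrent models.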

Natural Language Processing (NLP)

NLP encompasses the use of deep learning models to analyze, understand, and generate human language.

Sequence and attention models allow NLP systems to process sentences or documents while maintaining contextual understanding, enabling tasks such as sentiment analysis, summarization, question answering, and text classification.

By capturing long-range dependencies and semantic nuances, these models provide a framework for machines to interact with language in ways that are contextually meaningful, efficient, and scalable across multiple languages.

Advantages

1. Contextual Understanding

Sequence and attention models provide machines with the ability to capture the meaning of words in context rather than in isolation.

This contextual awareness allows for nuanced interpretation of polysemous words and idiomatic expressions.

For example, in sentiment analysis, a context-aware model can often tell a literal statement from a negated or sarcastic one (such as "not bad"), leading to higher accuracy in understanding intent across social media posts, reviews, and documents.

2. Automation of Language-Dependent Tasks

NLP enables automation in areas like customer support, document summarization, and data extraction.

Tasks that previously required human effort, such as responding to emails or analyzing survey data, can now be performed at scale with reduced error rates, increasing efficiency while allowing staff to focus on higher-level decision-making.

3. Multilingual Capabilities

Modern NLP models, especially Transformer-based architectures, can be trained across multiple languages or adapted to low-resource languages using transfer learning.

This capability enables global applications, bridging communication barriers and making AI systems applicable across diverse linguistic contexts.

4. Scalability

Once pretrained, NLP models can be fine-tuned on specific tasks or domains without retraining from scratch.

This modular approach enables organizations to deploy solutions rapidly, scaling language understanding across multiple tasks such as chatbots, recommendation systems, and compliance monitoring.

5. Enhanced Information Retrieval

Attention-based NLP systems can identify relevant sections within large texts, improving search engines, question-answering systems, and knowledge extraction pipelines.

This capability reduces time spent on manual reading and facilitates informed decision-making in research and business intelligence.

6. Support for Conversational AI

Sequence models underpin chatbots and virtual assistants, enabling natural dialogue and context retention over multiple conversational turns.

This improves user experience and enables human-like interaction in applications ranging from customer service to healthcare advisory systems.
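A simple way to retain context over several turns is to feed the model the most recent turns that fit within its input budget. The sketch below assumes tokens can be approximated by whitespace-separated words (real systems count subword tokens with the model's own tokenizer), and `build_context` is a hypothetical helper, not part of any specific chatbot framework.

```python
def build_context(turns, max_tokens):
    """Keep the most recent dialogue turns that fit within max_tokens.

    ASSUMPTION: token count is approximated by whitespace-separated words.
    """
    kept = []
    used = 0
    for turn in reversed(turns):      # walk from newest to oldest
        cost = len(turn.split())
        if used + cost > max_tokens:
            break                     # older turns no longer fit
        kept.append(turn)
        used += cost
    return list(reversed(kept))       # restore chronological order


if __name__ == "__main__":
    history = [
        "User: I need a flight to Paris",
        "Bot: Which date?",
        "User: Next Friday please",
    ]
    # With a tight budget, the oldest turn is dropped.
    print(build_context(history, max_tokens=8))
```

Dropping the oldest turns first is only one design choice; it keeps the immediate exchange intact but can lose facts stated early in the conversation (here, the destination), which is why some systems summarize older turns instead of discarding them.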

7. Facilitates Knowledge Discovery

Advanced NLP models can summarize large corpora, extract entities, and detect relationships, aiding insight discovery and trend analysis.

By processing vast amounts of text efficiently, these models help organizations make faster, data-driven decisions with improved accuracy.

Disadvantages

1. Requires Large, High-Quality Datasets

Accurate NLP models depend heavily on large-scale, clean, and well-annotated datasets.

Poor-quality data can lead to misinterpretation, biased predictions, and reduced generalization, making data preparation a crucial bottleneck.

2. Computationally Intensive

Training and deploying state-of-the-art NLP models, especially Transformers, require significant computational resources and memory.

This limits accessibility for smaller organizations and increases operational costs.
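A back-of-the-envelope calculation shows where the cost comes from. The sketch below counts only the memory for the weights and for one layer's attention score matrices; the 1-billion-parameter model and 12 attention heads are hypothetical figures, and gradients, optimizer state, and other activations (which add substantially more during training) are deliberately ignored.

```python
def weight_memory_gb(num_params, bytes_per_param=4):
    """Memory to hold the model weights alone, in GB (1 GB = 1e9 bytes).

    bytes_per_param: 4 for float32, 2 for float16/bfloat16, 1 for int8.
    Gradients, optimizer state and activations are NOT included.
    """
    return num_params * bytes_per_param / 1e9


def attention_scores_mb(seq_len, num_heads, bytes_per_value=4):
    """Size of one layer's attention score matrices, in MB (1 MB = 1e6 bytes).

    Each head stores a seq_len x seq_len matrix, so this grows
    quadratically with sequence length.
    """
    return seq_len * seq_len * num_heads * bytes_per_value / 1e6


if __name__ == "__main__":
    # Hypothetical 1-billion-parameter model.
    print(weight_memory_gb(1_000_000_000))       # float32 weights
    print(weight_memory_gb(1_000_000_000, 2))    # float16 weights
    # Doubling the sequence length quadruples the score-matrix memory.
    print(attention_scores_mb(512, 12), attention_scores_mb(1024, 12))
```

For the hypothetical model, the weights alone take 4 GB in float32 and 2 GB in float16, and the quadratic term explains why long inputs are especially expensive for attention-based models.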

3. Susceptible to Bias

Models trained on biased datasets can propagate societal biases, resulting in discriminatory outcomes in sentiment analysis, hiring tools, or content moderation.

Careful dataset curation and fairness evaluation are essential to mitigate these risks.

4. Language and Domain Dependency

Pretrained models may underperform in specialized domains or low-resource languages without fine-tuning.

Adaptation requires additional data and effort to ensure accurate predictions.

5. Limited Understanding of Pragmatics

Even advanced models may struggle with sarcasm, humor, or highly context-dependent nuances that require cultural or situational understanding, limiting their effectiveness in certain applications.

6. High Inference Latency

Complex NLP models with deep attention layers can exhibit slow response times, which may be unsuitable for real-time applications without optimization strategies like distillation or quantization.
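Quantization reduces memory and often speeds up inference by storing weights as low-precision integers. The sketch below shows symmetric, per-tensor int8 quantization on a plain list of floats; it is a simplified illustration under that assumption, not the scheme of any particular framework, which typically add per-channel scales, calibration, and integer kernels.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: w ~= scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # round() returns an int; clamp guards against tiny floating-point overshoot.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 codes back to approximate float weights."""
    return [scale * v for v in q]


if __name__ == "__main__":
    w = [0.5, -1.27, 0.0, 1.0, 0.33]
    q, scale = quantize_int8(w)
    print(q, scale)
    print(dequantize(q, scale))   # close to w, off by at most scale / 2
```

Each weight now needs 1 byte instead of 4, at the cost of a rounding error of at most half a quantization step per weight; whether that error is acceptable has to be checked on the actual task.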

7. Interpretability Challenges

Attention weights provide some insight but do not fully explain model reasoning.

Understanding why predictions are made remains complex, limiting trust and accountability in high-stakes applications.

Examples

1. Chatbots and Virtual Assistants: Google Assistant, Alexa.

2. Sentiment Analysis: Social media or product review analysis.

3. Text Summarization: Automatic news or research paper summaries.

4. Named Entity Recognition: Identifying entities in documents.

5. Question Answering Systems: Extractive QA models trained on datasets such as SQuAD.

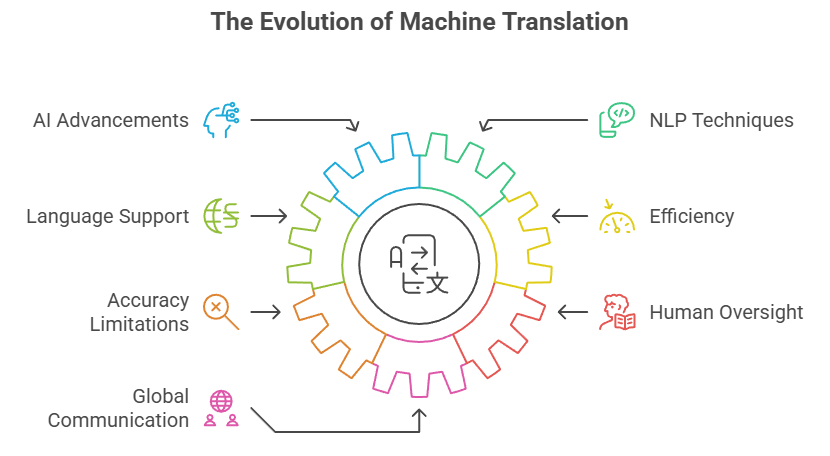

Machine Translation

Machine translation (MT) uses sequence models and attention mechanisms to automatically convert text from one language to another.

Attention enables the model to focus on relevant source words while generating target sentences, capturing syntactic and semantic nuances, word order variations, and idiomatic expressions.

Modern Transformers have outperformed traditional RNN-based MT models, providing accurate, fluent translations suitable for global communication.
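The idea of "focusing on relevant source words" can be made concrete with scaled dot-product attention for a single decoding step: the decoder's query vector is compared with one key vector per source word, the scores are normalized with a softmax, and the resulting weights mix the source value vectors into a context vector. The 2-dimensional vectors and the French source words below are hypothetical toy values; real models learn these vectors and use many attention heads.

```python
import math


def softmax(xs):
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def cross_attention(query, keys, values):
    """Scaled dot-product attention for one decoder step.

    query:  vector for the target word currently being generated
    keys:   one vector per source word (from the encoder)
    values: one vector per source word (from the encoder)
    Returns (context_vector, attention_weights).
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    context = [
        sum(w * v[i] for w, v in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return context, weights


if __name__ == "__main__":
    source = ["le", "chat", "noir"]
    keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]   # toy encoder outputs
    query = [0.0, 3.0]                              # toy decoder state for "cat"
    context, weights = cross_attention(query, keys, keys)
    for word, w in zip(source, weights):
        print(f"{word}: {w:.3f}")                   # "chat" gets the most weight
```

The weights sum to 1, so the context vector is a weighted average of the source representations, dominated here by the source word whose key best matches the query.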

Advantages

1. Cross-Language Communication

MT enables instant translation across languages, facilitating global collaboration in business, research, and online communication.

It lowers language barriers, allowing information to be accessed and shared more widely.

2. High Accuracy with Contextual Understanding

Attention mechanisms help translations take context into account, reducing errors caused by polysemy or ambiguous phrases.

This leads to more coherent and meaningful translations than word-by-word approaches.

3. Scalable to Multiple Languages

Transformer-based MT systems can handle dozens of languages with shared representations, making multilingual deployment more efficient and cost-effective.

4. Reduces Human Effort

Manual translation is slow and resource-intensive.

Automated MT systems can translate large volumes of text rapidly, saving time and operational costs in international organizations.

5. Continuous Improvement with Self-Supervised Learning

Pretraining on large monolingual corpora, alongside the available bilingual data, allows MT models to improve with additional unlabeled text.

This enhances performance even without extensive parallel datasets.
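Self-supervised pretraining works because the training labels come from the text itself. One widely used objective hides some tokens and asks the model to recover them; the sketch below shows only how such (input, target) pairs can be generated from unlabeled text. It illustrates the general idea, not how any particular MT system is pretrained: the `<mask>` symbol, the 15% rate, and `make_masked_example` are assumptions, and real recipes (for example BERT's, which sometimes keeps or randomly replaces the selected token instead of masking it) are more involved.

```python
import random

MASK = "<mask>"  # hypothetical mask symbol; real tokenizers define their own


def make_masked_example(tokens, mask_prob=0.15, seed=None):
    """Create a (corrupted input, targets) pair from unlabeled text.

    Each token is replaced by MASK with probability mask_prob. targets holds
    the original token at masked positions and None elsewhere, so the text
    itself supplies the labels the model is trained to predict.
    """
    rng = random.Random(seed)
    inputs, targets = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            inputs.append(MASK)
            targets.append(tok)
        else:
            inputs.append(tok)
            targets.append(None)
    return inputs, targets


if __name__ == "__main__":
    sentence = "the cat sat on the mat".split()
    inputs, targets = make_masked_example(sentence, mask_prob=0.3, seed=42)
    print(inputs)
    print(targets)
```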

6. Adaptable to Domain-Specific Translation

Fine-tuning MT models for technical, legal, or medical domains improves accuracy for specialized texts, enabling practical deployment in industry-specific scenarios.

7. Facilitates Real-Time Applications

Modern MT systems support real-time translation for live meetings, subtitles, and messaging platforms, enhancing accessibility and global engagement.

Disadvantages

1. Quality Depends on Data Availability

MT performance suffers for low-resource languages or specialized terminology due to limited training corpora.

Accurate translation requires comprehensive multilingual datasets.

2. Struggles with Idiomatic and Cultural Expressions

MT systems often fail to correctly interpret idioms, metaphors, or cultural references, leading to unnatural or misleading translations.

3. High Computational Requirements

Training large-scale MT models is resource-intensive, requiring GPUs/TPUs and large memory capacity, which may limit deployment in small-scale settings.

4. Error Propagation in Long Sequences

Incorrect predictions early in a sequence can affect subsequent outputs, causing compounding errors, especially in long sentences or paragraphs.
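The mechanism behind this is that autoregressive decoders feed each predicted token back in as input for the next step, so an early choice constrains everything after it. The toy sketch below uses a hypothetical table of next-token probabilities that depends only on the previous token (a real decoder conditions on the whole prefix and the source sentence). Greedy decoding commits to "A" because it looks best locally, and the rest of the output is stuck with that choice, yielding a less probable sentence than the one starting with "B".

```python
# Hypothetical toy model: P(next token | previous token).
NEXT = {
    "<s>": {"A": 0.6, "B": 0.4},
    "A": {"x": 0.5, "y": 0.5},
    "B": {"z": 0.9, "w": 0.1},
    "x": {"</s>": 1.0},
    "y": {"</s>": 1.0},
    "z": {"</s>": 1.0},
    "w": {"</s>": 1.0},
}


def greedy_decode(model, start="<s>", end="</s>"):
    """Pick the most likely token at each step and feed it back as input."""
    seq, prob, tok = [], 1.0, start
    while True:
        tok, p = max(model[tok].items(), key=lambda kv: kv[1])
        if tok == end:
            return seq, prob * p
        seq.append(tok)
        prob *= p


def best_sequence(model, start="<s>", end="</s>"):
    """Score every path exhaustively (only feasible for tiny toy models)."""
    best = ([], 0.0)

    def walk(tok, seq, prob):
        nonlocal best
        for nxt, p in model[tok].items():
            if nxt == end:
                if prob * p > best[1]:
                    best = (seq, prob * p)
            else:
                walk(nxt, seq + [nxt], prob * p)

    walk(start, [], 1.0)
    return best


if __name__ == "__main__":
    print(greedy_decode(NEXT))   # locally best first step, worse overall
    print(best_sequence(NEXT))   # globally most probable sequence
```

Beam search, which keeps several partial hypotheses alive at each step, is a common way to reduce (though not eliminate) this kind of early-commitment error.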

5. Limited Context Window

Even with Transformers, extremely long documents may exceed the model’s attention window, leading to context loss and decreased translation quality.
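A common workaround is to split a long document into overlapping chunks that each fit the model's window, translate the chunks separately, and merge the results. The sketch below covers only the splitting step on an already-tokenized document; `chunk_tokens`, the window size, and the overlap are hypothetical choices, and merging the overlapping translations is not shown.

```python
def chunk_tokens(tokens, window, overlap):
    """Split a token list into chunks of at most `window` tokens.

    Consecutive chunks share `overlap` tokens, so each chunk carries a
    little context from the previous one.
    """
    if not 0 <= overlap < window:
        raise ValueError("overlap must be in [0, window)")
    step = window - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + window])
        if start + window >= len(tokens):
            break                      # the last chunk reached the end
    return chunks


if __name__ == "__main__":
    doc = list(range(10))              # stand-in for 10 token IDs
    print(chunk_tokens(doc, window=4, overlap=1))
```

Overlap softens the context loss at chunk boundaries but does not remove it: information more than one window away is still invisible to each chunk.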

6. Requires Post-Editing for Critical Texts

Machine translations often need human review for sensitive or high-stakes content to ensure correctness, increasing workflow complexity.

7. Susceptible to Biases in Pretraining Data

Models may inherit biases present in multilingual datasets, affecting fairness and accuracy in certain contexts.

Examples

1. Google Translate: Text and speech translation.

2. Live Subtitles: Real-time translation for meetings or streaming.

3. Multilingual Chatbots: Customer support across languages.

4. International Content Localization: Websites, apps, and marketing materials.

5. Academic Research Translation: Scientific papers across languages.

Speech Recognition

Speech recognition converts spoken language into text, enabling machines to understand and process audio input.

Sequence models like RNNs, LSTMs, and Transformers capture temporal dependencies in speech, while attention mechanisms allow the model to focus on relevant segments of audio for accurate transcription.

Applications range from virtual assistants to automated transcription, accessibility tools, and voice-controlled systems, making speech recognition a critical interface between humans and AI systems.
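Before a sequence model can process speech, the raw waveform is typically cut into short overlapping frames, each of which becomes one time step (usually after conversion to spectral features). The sketch below assumes a 16 kHz sample rate with 25 ms windows and a 10 ms hop, which are common choices; `frame_signal` is a hypothetical helper and feature extraction is not shown.

```python
def frame_signal(samples, sample_rate, win_ms=25.0, hop_ms=10.0):
    """Split a 1-D audio signal into overlapping frames.

    Each frame becomes one time step of the sequence the model sees
    (after feature extraction such as a spectrogram, not shown here).
    Trailing samples that do not fill a whole frame are dropped.
    """
    win = int(sample_rate * win_ms / 1000)   # samples per frame
    hop = int(sample_rate * hop_ms / 1000)   # samples between frame starts
    frames = []
    for start in range(0, len(samples) - win + 1, hop):
        frames.append(samples[start:start + win])
    return frames


if __name__ == "__main__":
    one_second = [0.0] * 16000               # 1 s of silence at 16 kHz
    frames = frame_signal(one_second, 16000)
    print(len(frames), len(frames[0]))
```

At these settings each frame holds 400 samples and one second of audio becomes a sequence of 98 frames, which shows why speech sequences are much longer than the text they transcribe.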

Advantages

1. Enables Hands-Free Interaction

Speech recognition allows users to interact with devices using natural language, facilitating hands-free control in smart homes, vehicles, and mobile devices, enhancing convenience and accessibility.

2. Supports Accessibility

Voice-to-text systems provide critical assistance to individuals with disabilities, such as hearing impairments or motor limitations, enabling them to communicate and access information efficiently.

3. High Accuracy with Contextual Models

Modern attention-based models consider temporal and semantic context, improving transcription accuracy in noisy environments, multi-speaker scenarios, and across varying accents.

4. Automates Manual Transcription Tasks

Speech recognition reduces the time and cost associated with converting audio recordings into text, benefiting industries like journalism, legal, and healthcare documentation.

5. Scalable Across Languages

Pretrained speech models can be fine-tuned for multiple languages, accents, and dialects, facilitating global deployment and communication.

6. Integrates with NLP for Voice Applications

Speech recognition serves as the first step in voice-based NLP pipelines, enabling sentiment analysis, intent detection, and conversational AI applications.

7. Supports Real-Time Applications

Attention-based models allow streaming transcription and live command execution, enabling real-time interactions with virtual assistants, call centers, and educational tools.

Disadvantages

1. Sensitive to Noise and Accents

Background noise, dialects, and pronunciation variations can degrade accuracy, requiring robust preprocessing and data augmentation.
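One form of data augmentation is mixing noise into clean training audio at a controlled signal-to-noise ratio (SNR), so the model sees the same utterance under different noise levels. The sketch below assumes white Gaussian noise for simplicity (recorded background noise is often used in practice), and `add_noise_at_snr` is a hypothetical helper. It scales the noise so that 10 * log10(signal power / noise power) equals the requested SNR in decibels.

```python
import math
import random


def add_noise_at_snr(signal, snr_db, seed=None):
    """Mix Gaussian noise into `signal` at the requested SNR (in dB).

    Returns (noisy_signal, scaled_noise).
    """
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, 1.0) for _ in signal]
    sig_power = sum(s * s for s in signal) / len(signal)
    noise_power = sum(n * n for n in noise) / len(noise)
    # Target noise power such that 10 * log10(sig_power / target) == snr_db.
    target_noise_power = sig_power / (10 ** (snr_db / 10))
    scale = math.sqrt(target_noise_power / noise_power)
    scaled_noise = [scale * n for n in noise]
    noisy = [s + n for s, n in zip(signal, scaled_noise)]
    return noisy, scaled_noise


if __name__ == "__main__":
    # 0.1 s of a 440 Hz tone at 16 kHz, degraded to 10 dB SNR.
    tone = [math.sin(2 * math.pi * 440 * t / 16000) for t in range(1600)]
    noisy, _ = add_noise_at_snr(tone, snr_db=10.0, seed=0)
    print(noisy[:5])
```

Lower SNR values produce harder training examples; sampling the SNR from a range during training is one way to expose the model to varied conditions.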

2. Computational Resource Requirements

Real-time transcription and high-quality recognition demand GPUs or specialized hardware, increasing cost and limiting edge deployment.

3. Data-Hungry Models

Training accurate speech recognition models requires large, annotated audio datasets, which may not be available for all languages or domains.

4. Privacy Concerns

Voice data collection may raise privacy issues, particularly in healthcare, customer service, or personal assistant applications, necessitating careful data handling.

5. Limited Understanding Beyond Transcription

Even when speech is transcribed accurately, models may misinterpret tone, sarcasm, or intent without additional contextual NLP processing.

6. Domain-Specific Adaptation Required

Technical vocabulary or specialized accents may require additional fine-tuning to achieve high accuracy, increasing implementation complexity.

7. Susceptible to Overfitting on Training Voices

Models trained on limited speaker profiles may underperform when exposed to new speakers or recording conditions, reducing generalization.

Examples

1. Virtual Assistants: Siri, Alexa, Google Assistant.

2. Automated Transcription Services: Legal or medical transcription.

3. Voice-Controlled Interfaces: Smart home devices, mobile applications.

4. Language Learning Tools: Pronunciation evaluation and feedback.

5. Real-Time Subtitles: Accessibility for live events or broadcasts.
