Variational Autoencoders (VAEs) are a class of probabilistic generative models that combine principles of deep learning with Bayesian inference.

Unlike traditional autoencoders, which deterministically compress input data into a latent representation, VAEs map inputs into a probabilistic latent space, allowing them to generate new data samples by sampling from this latent distribution.

This approach introduces smoothness and continuity in the latent space, enabling meaningful interpolation, reconstruction, and generation of novel examples.

VAEs consist of an encoder, which learns to approximate the posterior distribution of latent variables, and a decoder, which reconstructs the data from sampled latent vectors.

Training involves maximizing the evidence lower bound (ELBO), balancing reconstruction fidelity with the regularization of the latent space through a Kullback-Leibler (KL) divergence term.
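For reference, the ELBO for a single data point x is commonly written as shown below. The notation is the conventional one and is assumed here rather than taken from the text above: q_φ(z | x) is the encoder's approximate posterior, p_θ(x | z) is the decoder's likelihood, and p(z) is the prior over latent variables. The first term rewards reconstruction fidelity and the second is the KL regularizer.

```latex
\mathcal{L}(\theta, \phi; x)
  = \mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big]
  - D_{\mathrm{KL}}\big(q_\phi(z \mid x) \,\|\, p(z)\big)
  \le \log p_\theta(x)
```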

Latent space representations learned by VAEs capture the underlying structure and variations in the data, making them highly suitable for applications such as image synthesis, anomaly detection, feature extraction, and semi-supervised learning.

By enforcing a continuous latent space, VAEs enable smooth transitions between different data points, which can be leveraged in creative AI tasks, interpolation of images, or exploration of generative factors.

Moreover, VAEs can encourage disentanglement of underlying factors in complex datasets, which offers a degree of interpretability and controllability over generated outputs.

Probabilistic Representation Learning with Variational Autoencoders

Variational Autoencoders learn latent representations as probability distributions rather than fixed encodings, enabling them to capture uncertainty and variability inherent in real-world data.

This probabilistic framework supports robust generation, smooth interpolation, and effective generalization across diverse tasks.

1. Probabilistic Latent Representations

VAEs map input data into a probabilistic latent space instead of a fixed point, enabling the model to capture the inherent uncertainty and variability in data.

This probabilistic formulation allows the model to generate diverse outputs by sampling from the latent space.

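The encoder outputs the parameters of a distribution over latent vectors, typically a diagonal Gaussian defined by a mean and a variance per dimension. Sampling from this distribution during training encourages nearby latent vectors to decode to similar outputs, which smooths transitions between points and facilitates interpolation between data samples.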

For instance, in image generation, this allows controlled changes in attributes like facial expressions or object orientation.

Such a probabilistic structure also enhances robustness, enabling better generalization when handling previously unseen data, as the model can naturally represent multiple possibilities rather than deterministic mappings.
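Sampling from a Gaussian latent distribution is usually implemented with the reparameterization trick, z = mu + sigma * eps with eps drawn from a standard normal. The following is a minimal sketch in plain Python. The names `sample_latent`, `mu`, and `log_var` are illustrative, and the encoder output values are made up rather than produced by a trained model.

```python
import math
import random

def sample_latent(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, 1), independently per dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

# Hypothetical encoder output for one input: a 2-D diagonal Gaussian.
mu = [0.5, -1.0]
log_var = [-2.0, -2.0]  # sigma = exp(-1), about 0.37, in each dimension

rng = random.Random(0)
samples = [sample_latent(mu, log_var, rng) for _ in range(5000)]

# The empirical mean of many samples should sit close to mu.
mean = [sum(s[i] for s in samples) / len(samples) for i in range(2)]
print(mean)
```

Parameterizing the variance through its logarithm is a common design choice because it keeps sigma positive without constraining the encoder's output.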

2. Structured Latent Space for Generative Tasks

The continuous latent space learned by VAEs encodes meaningful relationships between features of the data, making it easier to manipulate or explore underlying generative factors.

For example, moving along specific latent dimensions in an image dataset can change color, shape, or style attributes smoothly.

This structured space is invaluable for creative applications, data augmentation, and feature disentanglement.

It allows practitioners to control the output characteristics explicitly, producing variations systematically rather than randomly.

The latent structure also supports interpretability, as individual dimensions sometimes correspond to semantically relevant factors in the data, enhancing understanding and utility of the learned representations.
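Moving along a single latent dimension is often called a latent traversal. The sketch below illustrates the idea with a hypothetical `toy_decoder` that stands in for a trained decoder. It is perfectly disentangled by construction, which a real model generally is not, and the attribute names are invented for illustration.

```python
def toy_decoder(z):
    """Hypothetical stand-in for a trained decoder: maps a 2-D latent
    vector to two output attributes."""
    brightness = 0.5 + 0.2 * z[0]
    rotation_deg = 30.0 * z[1]
    return brightness, rotation_deg

def traverse(z_base, dim, values):
    """Decode copies of z_base in which only coordinate `dim` is varied."""
    outputs = []
    for v in values:
        z = list(z_base)  # copy so the base vector is left untouched
        z[dim] = v
        outputs.append(toy_decoder(z))
    return outputs

z_base = [0.0, 0.0]
sweep = traverse(z_base, dim=1, values=[-2.0, -1.0, 0.0, 1.0, 2.0])
for brightness, rotation_deg in sweep:
    print(brightness, rotation_deg)
```

In this toy setting only the rotation changes across the sweep while brightness stays fixed, which is the behavior one hopes to see when a latent dimension has captured a single generative factor.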

3. Effective for Semi-Supervised Learning

VAEs excel in scenarios where labeled data is scarce because they can learn rich, unsupervised latent representations from large amounts of unlabeled data.

These representations capture the essential structure of the input space, which can then be leveraged in downstream supervised tasks such as classification, clustering, or regression.

For example, in medical imaging, VAEs can extract latent features from unlabeled scans, which are then used to train a classifier with limited annotations.

This approach reduces reliance on expensive labeled datasets and enables improved performance in real-world applications where data labeling is costly or time-consuming.
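One simple way to reuse latent features with few labels can be sketched as follows. The function `encoder_mean` is a hypothetical stand-in for the mean output of a VAE encoder already trained on unlabeled data, and the nearest-centroid classifier is just one lightweight choice made for this illustration. The data points are invented.

```python
def encoder_mean(x):
    """Hypothetical stand-in for a trained VAE encoder's mean output:
    a fixed linear projection from 3-D inputs to a 2-D latent space."""
    return [x[0] - x[2], x[1] + 0.5 * x[2]]

def centroid(vectors):
    """Coordinate-wise average of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def fit_centroids(labeled):
    """labeled: list of (x, label). Returns {label: centroid of latent means}."""
    by_label = {}
    for x, y in labeled:
        by_label.setdefault(y, []).append(encoder_mean(x))
    return {y: centroid(zs) for y, zs in by_label.items()}

def predict(centroids, x):
    """Assign x to the label whose latent centroid is closest."""
    z = encoder_mean(x)

    def sq_dist(c):
        return sum((a - b) ** 2 for a, b in zip(z, c))

    return min(centroids, key=lambda y: sq_dist(centroids[y]))

# Only four labeled examples are available in this toy setting.
labeled = [
    ([2.0, 0.0, 0.0], "a"), ([2.2, 0.1, 0.1], "a"),
    ([0.0, 2.0, 0.0], "b"), ([0.1, 2.1, 0.2], "b"),
]
centroids = fit_centroids(labeled)
print(predict(centroids, [1.9, 0.2, 0.0]))
print(predict(centroids, [0.2, 1.8, 0.1]))
```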

4. Smooth Data Interpolation

A defining feature of VAEs is their ability to interpolate smoothly between different data points in the latent space.

By sampling along paths connecting two latent vectors, VAEs can generate transitional outputs that gradually shift from one data instance to another.

For example, morphing between two facial images produces realistic intermediate images that maintain coherence and preserve key features.

This property is particularly useful in creative AI, design exploration, or generating synthetic data for training purposes.

Smooth interpolation also demonstrates the model’s understanding of the underlying manifold structure of the dataset, reflecting a high-quality latent space.
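The path between two latent vectors is often taken to be a straight line. The sketch below uses linear interpolation, with illustrative latent vectors `z_a` and `z_b` that in practice would come from encoding two inputs. Each vector on the path would then be passed to the decoder to obtain a transitional output.

```python
def lerp(z_start, z_end, t):
    """Linear interpolation: t = 0 gives z_start, t = 1 gives z_end."""
    return [(1.0 - t) * a + t * b for a, b in zip(z_start, z_end)]

def interpolation_path(z_start, z_end, steps):
    """Return `steps` evenly spaced latent vectors from z_start to z_end, inclusive."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [lerp(z_start, z_end, i / (steps - 1)) for i in range(steps)]

# Illustrative latent codes; real ones would be encoder outputs.
z_a = [0.0, 1.0, -1.0]
z_b = [2.0, -1.0, 1.0]

path = interpolation_path(z_a, z_b, steps=5)
for z in path:
    print(z)
```

Linear interpolation is the simplest option. Spherical interpolation is sometimes preferred for high-dimensional Gaussian latent spaces, but it is not shown here.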

5. Regularization and Generalization

VAEs employ the KL divergence term to regularize latent representations, encouraging the encoder's approximate posterior to match a predefined prior distribution, usually a standard Gaussian.

This regularization helps limit overfitting, promotes latent space continuity, and allows the decoder to produce novel yet plausible samples. As a result, VAEs tend to generalize well to unseen data, making them suitable for both generative and predictive tasks.

Proper regularization also ensures that sampling from the latent space produces coherent outputs, enhancing reliability for downstream applications.

The balance between reconstruction loss and KL divergence is critical to achieving this generalization without compromising output quality.
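When the encoder outputs a diagonal Gaussian and the prior is a standard normal, the KL term has a well-known closed form: 0.5 * sum(mu^2 + sigma^2 - log(sigma^2) - 1). The sketch below computes it in plain Python. The function name and the log-variance parameterization are assumptions made for illustration.

```python
import math

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0
                     for m, lv in zip(mu, log_var))

# The penalty is zero when the posterior already equals the prior...
print(kl_to_standard_normal([0.0, 0.0], [0.0, 0.0]))
# ...and grows as the mean moves away from zero or the variance away from one.
print(kl_to_standard_normal([1.0, 0.0], [0.0, 0.0]))
print(kl_to_standard_normal([0.0, 0.0], [-2.0, -2.0]))
```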

6. Applications in Anomaly Detection

VAEs are well-suited for anomaly detection because they learn a probabilistic model of normal data patterns.

Outliers or unusual instances are identified based on poor reconstruction or deviation from expected latent distributions.

For example, in industrial monitoring, VAEs can detect abnormal sensor readings, while in medical imaging, unusual patterns in MRI or X-ray scans can be flagged as potential anomalies.

This capability leverages both reconstruction error and latent space structure, providing a flexible tool for detecting rare or unexpected occurrences in complex datasets.
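A reconstruction-based anomaly check can be sketched as follows. The threshold rule (mean plus three standard deviations of the errors on normal data) is one common heuristic assumed here, not a prescribed method. The error values and the reconstruction `x_hat` are invented, where a real pipeline would obtain them from a trained VAE.

```python
import statistics

def reconstruction_error(x, x_hat):
    """Mean squared error between an input and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)

def fit_threshold(normal_errors, k=3.0):
    """Threshold = mean + k * standard deviation of errors on normal data."""
    return statistics.mean(normal_errors) + k * statistics.pstdev(normal_errors)

def is_anomaly(error, threshold):
    """Flag an input whose reconstruction error exceeds the threshold."""
    return error > threshold

# Hypothetical reconstruction errors measured on held-out normal data.
normal_errors = [0.010, 0.012, 0.009, 0.011, 0.013, 0.010, 0.008, 0.012]
threshold = fit_threshold(normal_errors)

# A sensor reading that the (hypothetical) model reconstructs poorly.
x = [1.0, 0.9, 5.0, 1.1]
x_hat = [1.0, 1.0, 1.0, 1.0]
score = reconstruction_error(x, x_hat)
print(score, threshold, is_anomaly(score, threshold))
```

The same structure extends to scores based on the latent distribution, for example by adding the KL term for each input to its reconstruction error.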

7. Complementary to Other Generative Models

VAEs can be combined with GANs, normalizing flows, or attention mechanisms to overcome inherent limitations like blurry outputs.

Hybrid models integrate the probabilistic framework of VAEs with the adversarial quality of GANs, producing high-fidelity samples while preserving the interpretability and continuity of latent space.

Such combinations are increasingly used in image super-resolution, text-to-image generation, and video synthesis.

By leveraging complementary strengths, hybrid models offer both structured latent representation and high-quality outputs, expanding VAEs’ applicability across diverse generative tasks.

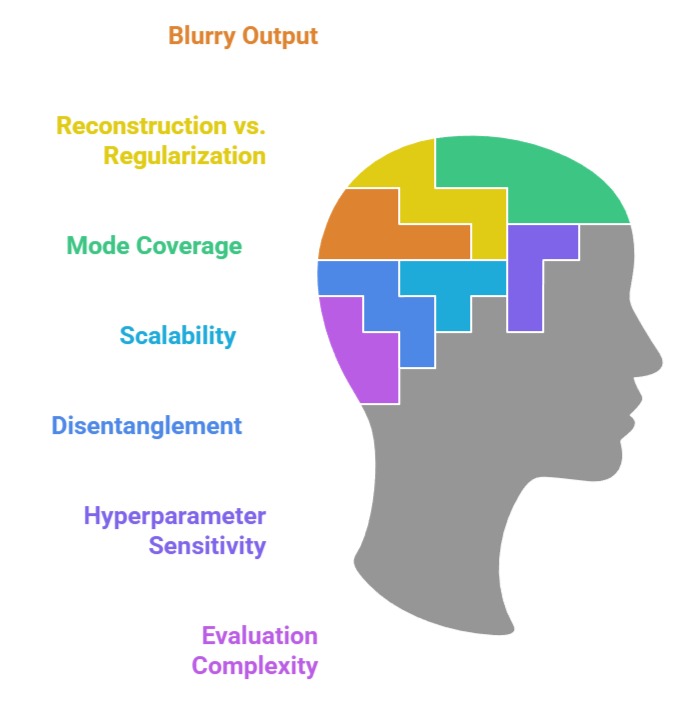

Challenges in Variational Autoencoders

1. Blurry Output Generation

A common limitation of VAEs is that outputs, particularly in image generation, can appear blurry or less detailed compared to GANs.

This occurs because pixel-wise reconstruction losses, such as mean squared error, reward the model for producing an average over the plausible reconstructions, which reduces sharpness.

While VAEs excel in learning smooth latent spaces, the resulting images may lack high-frequency details and fine textures.
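The averaging effect can be illustrated with a single pixel. In the toy setup below, which is an assumption made purely for illustration, the pixel is equally likely to be fully dark or fully bright. A brute-force search then finds the one prediction that minimizes the expected squared error.

```python
def expected_squared_error(prediction, targets):
    """Average squared error of one prediction against equally likely targets."""
    return sum((prediction - t) ** 2 for t in targets) / len(targets)

# Two equally plausible values for one pixel, e.g. an edge that could fall
# on either side of it: fully dark (0.0) or fully bright (1.0).
targets = [0.0, 1.0]

# Try every prediction from 0.00 to 1.00 in steps of 0.01.
candidates = [i / 100 for i in range(101)]
best = min(candidates, key=lambda p: expected_squared_error(p, targets))
print(best)
```

The minimizer is the mid-gray average of the two targets rather than either sharp value, which is the single-pixel version of a blurred edge.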

Addressing this often requires hybrid techniques such as combining VAEs with adversarial losses, hierarchical architectures, or post-processing methods to enhance visual fidelity while preserving the probabilistic advantages of VAEs.

2. Balancing Reconstruction and Latent Regularization

The VAE training objective consists of a reconstruction term and a KL divergence term.

Achieving the right balance is challenging: overemphasis on reconstruction can cause overfitting and an unstructured latent space, while overemphasis on the KL divergence can degrade reconstruction quality, produce unrealistic outputs, and in the extreme lead to posterior collapse, where the decoder ignores the latent code.

Proper tuning is critical for generating meaningful latent representations while maintaining high-quality reconstructions.

Techniques such as beta-VAE introduce a weighting factor for KL divergence to control the trade-off between disentanglement and reconstruction fidelity.
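The weighted objective can be sketched as a loss to minimize, where `beta` scales the KL term and `beta = 1` recovers the standard VAE loss. The function name and the numeric values below are illustrative only. In practice both terms would be computed per batch from the model's outputs.

```python
def beta_vae_loss(reconstruction_loss, kl_divergence, beta=1.0):
    """Weighted objective to minimize; beta = 1 recovers the standard VAE loss."""
    if beta < 0:
        raise ValueError("beta must be non-negative")
    return reconstruction_loss + beta * kl_divergence

# Hypothetical per-batch values, for illustration only.
recon, kl = 42.0, 3.5
for beta in (0.5, 1.0, 4.0):
    print(beta, beta_vae_loss(recon, kl, beta))
```

Values of `beta` above 1 put more pressure on the latent code to match the prior, which tends to favor disentanglement at the cost of reconstruction fidelity.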

3. Mode Coverage Limitations

Although VAEs encourage smooth and continuous latent spaces, they may struggle to represent multimodal or highly complex data distributions fully. Rare patterns or subtle variations may be underrepresented, limiting generative diversity.

In domains like medical imaging or high-resolution video, this can result in outputs that fail to capture all essential variations.

Mitigation strategies include hierarchical VAEs, expressive priors, or combining VAEs with GANs to improve coverage and fidelity.

4. Scalability to High-Dimensional Data

Training VAEs on high-resolution images, long sequences, or multidimensional datasets is computationally intensive.

Memory and processing constraints can hinder the deployment of large-scale VAEs, particularly in research or industry settings with limited hardware.

Optimized convolutional architectures, sparse latent representations, or dimensionality reduction techniques are often employed to handle large inputs without compromising generative performance.

5. Disentanglement and Interpretability Challenges

While VAEs promote structured latent spaces, unsupervised models may produce entangled latent factors where multiple data attributes are mixed across dimensions.

This reduces interpretability and controllability, making it harder to manipulate specific features in generated samples.

Techniques like beta-VAE or FactorVAE aim to improve disentanglement, but achieving fully interpretable latent factors remains challenging, particularly in complex or high-dimensional datasets.

6. Sensitivity to Hyperparameters

VAE performance is highly dependent on hyperparameter choices, including latent dimension size, learning rate, network depth, and batch size.

Small changes to these settings can result in unstable training, suboptimal reconstructions, or ineffective latent spaces.

Extensive experimentation and domain-specific tuning are often necessary to optimize performance, which can be resource-intensive and time-consuming.

7. Evaluation Complexity

Assessing the quality of VAE outputs and the structure of latent space is non-trivial.

Metrics such as the ELBO, reconstruction loss, or Fréchet Inception Distance (FID) provide partial insights but do not fully capture realism, diversity, or disentanglement.

For generative and downstream tasks, human evaluation or task-specific performance metrics are often required, adding complexity to the deployment and validation of VAEs in real-world applications.