Generative Adversarial Networks (GANs), introduced by Ian Goodfellow and colleagues in 2014, are one of the most influential approaches in generative modeling.

GANs consist of two neural networks, the generator and the discriminator, trained in competition: the generator creates synthetic data samples, and the discriminator judges whether each sample is real or fake.

This adversarial setup enables the generator to improve continuously, producing increasingly realistic outputs, whether images, audio, or text.

GANs have transformed fields such as image synthesis, super-resolution, data augmentation, and creative AI applications like art and style transfer.

The ability of GANs to model complex, high-dimensional data distributions makes them well suited to applications that need realistic samples but do not need an explicit density estimate.

Unlike traditional generative models, GANs do not rely on likelihood maximization but instead learn implicitly through the adversarial game. This leads to highly detailed outputs but also introduces significant training challenges.
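For reference, the adversarial game is usually written as the minimax objective from the original GAN formulation. The symbols are not defined elsewhere in this article and are introduced here only for illustration: G is the generator, D the discriminator, p_data the real data distribution, and p_z the noise prior.

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
```

The discriminator raises V by assigning high probability to real samples and low probability to generated ones; the generator lowers V by making D(G(z)) large. No likelihood of the data under the generator appears anywhere in the objective, which is what "learning implicitly" refers to.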

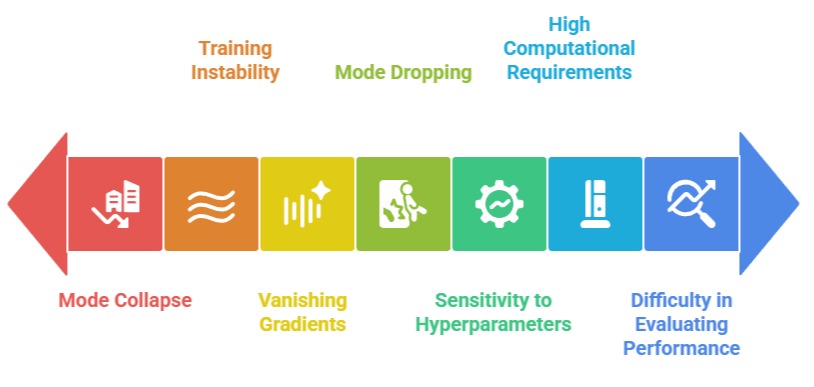

GANs are notoriously difficult to train due to issues such as mode collapse, vanishing gradients, and training instability, which arise from the delicate balance required between the generator and discriminator.

Furthermore, GANs demand careful hyperparameter tuning, network architecture design, and monitoring to achieve stable convergence.

Despite these difficulties, advances such as Wasserstein GANs (WGANs), spectral normalization, and progressive growing have improved stability and performance, enabling GANs to produce photorealistic images, high-fidelity audio, and convincing synthetic data across multiple domains.

GAN Training Challenges

1. Mode Collapse

Mode collapse occurs when the generator produces limited variations of output samples, failing to capture the full diversity of the target distribution.

This happens because the generator finds a small set of outputs that successfully fool the discriminator repeatedly.

While the generator appears to perform well in fooling the discriminator, the results lack diversity, which is problematic for applications like data augmentation or creative content generation where variety is essential.

Mode collapse requires careful monitoring and architectural strategies such as mini-batch discrimination, unrolled GANs, or diversity-sensitive loss functions to maintain rich and varied outputs.
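One simple way to monitor for collapse on a toy problem is to count how many known modes receive at least one generated sample. The sketch below is an illustration under stated assumptions, not a standard metric: the eight-mode ring target, the `radius` threshold, and the function names are all hypothetical, and the "generated" samples are simulated rather than produced by a trained generator.

```python
import math
import random

def nearest_mode(sample, modes):
    """Index of the mode center closest to a 2-D sample."""
    return min(range(len(modes)), key=lambda i: math.dist(sample, modes[i]))

def mode_coverage(samples, modes, radius=0.5):
    """Fraction of modes with at least one sample within `radius` of the center."""
    hit = set()
    for s in samples:
        i = nearest_mode(s, modes)
        if math.dist(s, modes[i]) <= radius:
            hit.add(i)
    return len(hit) / len(modes)

# Hypothetical target distribution: 8 tight clusters on a circle of radius 2.
modes = [(2 * math.cos(2 * math.pi * k / 8), 2 * math.sin(2 * math.pi * k / 8))
         for k in range(8)]
rng = random.Random(0)

def sample_around(center, n, std=0.05):
    """Simulated generator output: Gaussian noise around one mode center."""
    return [(rng.gauss(center[0], std), rng.gauss(center[1], std)) for _ in range(n)]

# A healthy generator visits every mode; a collapsed one visits only two.
healthy = [p for m in modes for p in sample_around(m, 50)]
collapsed = sample_around(modes[0], 200) + sample_around(modes[1], 200)

print(mode_coverage(healthy, modes))    # 1.0
print(mode_coverage(collapsed, modes))  # 0.25
```

On real data the modes are not known in advance, so a check like this usually relies on a pretrained classifier or a clustering step to assign samples to modes.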

2. Training Instability

GANs are inherently unstable because the generator and discriminator are trained simultaneously in a minimax game.

Small updates in one network can drastically affect the other, leading to oscillations or divergence in loss values. Instability can manifest as non-converging training curves, sudden drops in performance, or unrealistic outputs.

Stabilization techniques, including learning rate adjustments, gradient penalties, and careful initialization, are essential to achieve consistent convergence without compromising output quality.
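The oscillation and divergence described above can be reproduced in a two-parameter toy game that is often used to illustrate the problem. This is a stand-in for intuition only, not a GAN: one player minimizes f(x, y) = x * y over x, the other maximizes it over y, and both take simultaneous gradient steps. The function name and the chosen learning rate are assumptions for the example.

```python
import math

def simultaneous_gda(x, y, lr, steps):
    """Simultaneous gradient descent on x and ascent on y for f(x, y) = x * y.

    Returns the distance from the equilibrium (0, 0) after each step.
    """
    radii = []
    for _ in range(steps):
        grad_x, grad_y = y, x                       # df/dx = y, df/dy = x
        x, y = x - lr * grad_x, y + lr * grad_y     # both players update at once
        radii.append(math.hypot(x, y))
    return radii

radii = simultaneous_gda(1.0, 1.0, lr=0.1, steps=100)
print(f"after 1 step: {radii[0]:.3f}, after 100 steps: {radii[-1]:.3f}")
```

Each update multiplies the distance from the equilibrium by sqrt(1 + lr^2), so the iterates spiral outward instead of converging. A smaller learning rate slows the spiral but does not remove it, which is one reason stabilization usually needs more than learning rate adjustments alone.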

3. Vanishing Gradients

During training, the generator may receive very weak gradients if the discriminator becomes too strong, leaving the generator unable to improve effectively.

This vanishing gradient problem is particularly severe in high-capacity discriminators, causing slow or stalled learning.

Techniques such as using the Wasserstein loss or label smoothing help maintain meaningful gradient flow, enabling the generator to learn even when the discriminator performs well.
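The effect can be seen by looking only at the gradient of the generator loss with respect to the discriminator's raw output for a generated sample. The sketch below compares the original minimax generator loss, log(1 - sigmoid(score)), with a Wasserstein-style generator loss, -score. It deliberately ignores the rest of backpropagation and the Lipschitz constraint that a Wasserstein critic needs; the function names are hypothetical.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def saturating_grad(score):
    """d/d(score) of log(1 - sigmoid(score)), the minimax generator loss."""
    return -sigmoid(score)

def wasserstein_grad(score):
    """d/d(score) of -score, the Wasserstein-style generator loss."""
    return -1.0

# A very negative score means the discriminator confidently rejects the sample.
for score in (0.0, -5.0, -10.0):
    print(score, saturating_grad(score), wasserstein_grad(score))
```

As the discriminator becomes more confident (more negative score), the magnitude of the saturating gradient shrinks toward zero, while the Wasserstein-style gradient keeps the same magnitude. This is only the first link in the chain rule, but it shows where the learning signal is lost.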

4. Sensitivity to Hyperparameters

GAN performance is highly dependent on hyperparameter choices such as learning rates, optimizer type, batch size, and regularization parameters.

Small deviations can cause instability, divergence, or mode collapse.

This sensitivity requires extensive experimentation and domain expertise to fine-tune hyperparameters for stable training, making GAN deployment challenging for practitioners without substantial computational resources.

5. Difficulty in Evaluating Performance

Unlike supervised learning tasks with well-defined metrics, evaluating GAN outputs is non-trivial.

Measures like Inception Score (IS) or Fréchet Inception Distance (FID) provide approximate quality assessment but cannot fully capture realism, diversity, or contextual relevance.

Reliable evaluation often requires human judgment or task-specific criteria, complicating benchmarking and progress tracking.
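To make the idea behind FID concrete, the sketch below computes the Fréchet distance between two Gaussians fitted to one-dimensional samples. It is a simplification for illustration: real FID fits multivariate Gaussians to Inception network features, whereas here the "features" are plain numbers and the function name is an assumption.

```python
import statistics

def frechet_distance_1d(real, fake):
    """Squared Fréchet distance between Gaussians fitted to two 1-D samples.

    In one dimension the FID formula reduces to
    (mean difference)^2 + (standard deviation difference)^2.
    """
    mu_r, mu_f = statistics.fmean(real), statistics.fmean(fake)
    sd_r, sd_f = statistics.pstdev(real), statistics.pstdev(fake)
    return (mu_r - mu_f) ** 2 + (sd_r - sd_f) ** 2

real = [0.0, 1.0, 2.0, 3.0, 4.0]
shifted = [x + 2.0 for x in real]        # right spread, wrong location
collapsed = [2.0] * 5                    # right mean, no diversity

print(frechet_distance_1d(real, real))       # 0.0
print(frechet_distance_1d(real, shifted))
print(frechet_distance_1d(real, collapsed))
```

The collapsed sample matches the real mean exactly yet still scores worse than a perfect match, because the spread term penalizes missing diversity. The score also depends only on the first two moments, which is one reason it cannot fully capture realism or contextual relevance.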

6. High Computational Requirements

GANs, especially with large architectures like StyleGAN or BigGAN, require significant memory and computation for stable training.

Training high-resolution models can demand multi-GPU setups, extended training times, and efficient memory management, limiting accessibility for smaller labs or organizations.

7. Mode Dropping and Bias

Even when partially stable, GANs may ignore less frequent modes in the data distribution, producing biased outputs that overrepresent common patterns.

This is particularly critical in applications like medical imaging or dataset augmentation, where capturing full diversity is essential for downstream performance and fairness.
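A rough way to spot this kind of bias is to compare how often each mode appears in real versus generated data. The sketch below assumes that every sample already carries a mode label, for example from a pretrained classifier; the labels, counts, and function name are hypothetical.

```python
from collections import Counter

def mode_ratio(real_labels, fake_labels):
    """Generated frequency divided by real frequency for each mode.

    A ratio near 1.0 means the mode is represented in proportion;
    a ratio well below 1.0 means the generator under-produces it.
    """
    real, fake = Counter(real_labels), Counter(fake_labels)
    n_real, n_fake = len(real_labels), len(fake_labels)
    return {m: (fake[m] / n_fake) / (real[m] / n_real) for m in real}

# Hypothetical labels: the rare mode is 10% of real data but 2% of generated data.
real_labels = ["common"] * 90 + ["rare"] * 10
fake_labels = ["common"] * 98 + ["rare"] * 2

ratios = mode_ratio(real_labels, fake_labels)
for mode, ratio in ratios.items():
    print(f"{mode}: {ratio:.2f}")
```

Here the rare mode is produced at about one fifth of its real frequency while the common mode is slightly overproduced, which is the pattern described above.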

Real-Life Applications and Case Studies

Generative Adversarial Networks (GANs) have moved beyond theory into impactful real-world use across multiple industries.

These applications and case studies demonstrate how GANs solve practical problems by generating realistic, high-quality synthetic data and content.

1. Image Synthesis and Super-Resolution

GANs are widely used to generate high-resolution, photorealistic images from low-resolution inputs.

For example, Super-Resolution GANs (SRGANs) have been applied in medical imaging to enhance MRI scans, with the aim of supporting more accurate diagnosis.

Similarly, in photography and satellite imagery, GANs reconstruct detailed images from blurred or compressed sources.

This application demonstrates the generator’s ability to learn complex textures and details, while attention to training stability is critical to avoid artifacts or unrealistic outputs.

2. Art, Design, and Creative Content

GANs have revolutionized creative industries by generating artwork, music, and fashion designs.

For instance, tools such as Artbreeder use GANs to create unique digital artwork, allowing designers to experiment with styles and compositions rapidly. (DeepArt, often mentioned alongside it, is based on neural style transfer rather than a GAN.)

In fashion, GANs generate novel clothing designs for trend prediction and virtual try-ons.

These applications highlight GANs’ capability to explore creative spaces and produce innovative outputs that would be time-consuming or impossible to generate manually.

3. Data Augmentation

GANs are increasingly used for augmenting training datasets in domains with limited labeled data.

For example, in medical imaging, GANs generate synthetic X-ray, MRI, or CT images to increase dataset size and diversity.

This improves downstream model performance for classification or segmentation tasks. Similarly, autonomous vehicle systems use GANs to generate rare driving scenarios for safer model training.

While effective, maintaining diversity and preventing mode collapse are critical challenges in data augmentation applications.

4. Video Generation and Animation

GANs are employed in video frame prediction, deepfake generation, and animation.

Deepfake technologies, while controversial, showcase GANs’ ability to create realistic facial animations and lip-sync videos.

In entertainment, GANs generate dynamic visual effects or simulate realistic environments in video games and virtual reality.

These applications emphasize the importance of temporal consistency and multi-dimensional modeling in sequential data generation.

5. Medical and Scientific Research

In medical research, GANs create synthetic medical datasets to protect patient privacy while enabling AI training.

For example, GAN-generated retinal images assist in disease detection without exposing sensitive data.

Similarly, in drug discovery, GANs generate molecular structures and predict chemical properties, accelerating early-stage research.

These cases highlight GANs’ potential for high-impact scientific and healthcare applications but also underline the necessity of maintaining fidelity and minimizing bias in synthetic data.

Challenges in Real-World GAN Applications

Despite their impressive capabilities, deploying GANs in real-world systems presents significant technical, ethical, and operational challenges.

Understanding these limitations is essential for building reliable, responsible, and effective GAN-based solutions.

1. Ensuring Realism and Fidelity

In practical deployment, generated outputs often need to be close enough to real-world data that any remaining differences do not affect the task.

High-resolution image or video generation may produce artifacts or unrealistic features if training is unstable, limiting usefulness in critical domains like medicine or security.

2. Mode Collapse and Limited Diversity

Generators may produce repetitive or highly similar outputs, failing to cover the full data distribution.

This is particularly problematic in applications like data augmentation or creative generation, where diversity is crucial for model performance and novelty.

3. Ethical and Legal Concerns

GAN-generated content, such as deepfakes or synthetic media, raises ethical and legal questions.

Misuse can propagate misinformation, manipulate public perception, or violate intellectual property, necessitating strict governance and detection mechanisms.

4. High Computational and Resource Demands

Training GANs for high-resolution outputs or complex tasks requires powerful GPUs/TPUs, large memory, and prolonged training times.

Resource constraints can prevent smaller organizations from leveraging GAN capabilities effectively.

5. Evaluation and Benchmarking Difficulty

Assessing GAN performance remains challenging, as the generator and discriminator losses do not directly reflect output quality, diversity, or realism.

Reliance on proxies like FID or Inception Score may be insufficient for certain applications, requiring human evaluation or domain-specific metrics.

6. Bias and Representation Issues

GANs can replicate biases present in training data, leading to skewed outputs that misrepresent underrepresented groups or features.

In healthcare or autonomous systems, such bias can compromise fairness, safety, or reliability.

7. Training Instability in Real-World Scenarios

GANs are highly sensitive to hyperparameters, network architecture, and data quality.

Instability can manifest as oscillating losses, mode collapse, or divergence, particularly when scaling to large datasets or deploying in novel environments.