Deep learning frameworks provide the foundational tools that enable researchers, students, and practitioners to build, train, and deploy neural networks efficiently.

Among the numerous frameworks available today, PyTorch and TensorFlow stand out as the most widely adopted due to their flexibility, performance, and extensive ecosystem support.

These platforms offer high-level abstractions for defining neural architectures, automatic differentiation for efficient gradient computation, and access to optimized kernels that leverage GPUs and TPUs.

PyTorch is well-known for its intuitive design, dynamic computation graphs, and Pythonic interface, making it a preferred choice for academic research and rapid experimentation.

TensorFlow, on the other hand, excels in production readiness, scalability, and deployment capabilities across multiple environments such as mobile devices, cloud platforms, and edge hardware.

Each framework brings distinctive strengths, allowing developers to choose the tool that best aligns with their workflow, project size, and deployment requirements.

PyTorch

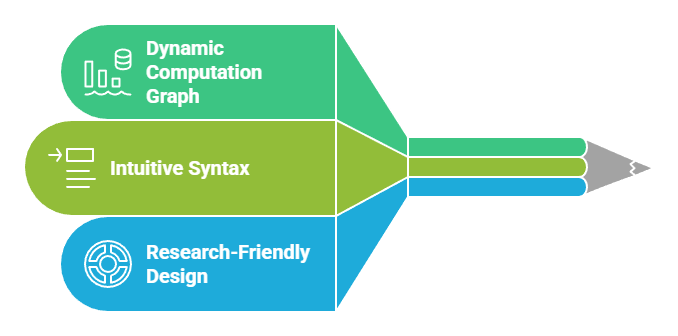

PyTorch is an open-source deep learning framework developed by Meta AI.

It is known for its dynamic computation graph, intuitive syntax, and research-friendly design.

PyTorch has become a cornerstone of academic experimentation due to its flexibility and ease of debugging.

Advantages

1. Dynamic computation graph for high flexibility

PyTorch constructs the computation graph during execution, meaning it adapts instantly to changes in input shapes or logic flows.

This flexibility allows researchers to experiment with unconventional architectures or algorithms without rewriting core components.

It simplifies debugging because errors appear at the exact step where computation fails.

This dynamic behavior is especially useful in NLP, reinforcement learning, and graph-based networks where sequence lengths or structures vary.

2. Pythonic and intuitive programming style

PyTorch mirrors native Python syntax, making model-building feel natural to anyone familiar with the language.

There is minimal abstraction between code and execution, reducing cognitive load and enabling rapid prototyping.

This design lowers barriers for beginners while empowering experts to fine-tune complex architectures without wrestling with framework-specific constraints.

As a result, developers experience faster iteration cycles and smoother experimentation.

3. Strong ecosystem for research and experimentation

The PyTorch ecosystem includes libraries like TorchVision, TorchText, and PyTorch Geometric, which provide ready-to-use datasets, pretrained models, and domain-specialized tools.

This expansive ecosystem accelerates innovation by offering modular components that integrate seamlessly with custom models.

Researchers can focus more on designing ideas and less on boilerplate code, contributing to PyTorch’s popularity in scientific communities.

Disadvantages

1. Historically weaker deployment support

Although PyTorch has improved significantly with TorchServe and ONNX, its deployment pipeline used to lag behind TensorFlow’s production tools.

Large enterprises often considered PyTorch less ideal for mobile and embedded systems due to limited earlier support.

This created friction for teams transitioning research models into production environments. While the gap is narrowing, some legacy systems still favor TensorFlow.

2. Higher memory consumption in some training scenarios

PyTorch’s dynamic graph mechanism, while flexible, can sometimes require more memory than static graph execution.

When training very large models or handling massive batches, this added memory overhead can limit scalability.

Developers must be cautious with tensor retention and gradient storage to avoid out-of-memory issues, especially on single-GPU setups.

3. Lack of official TPU support

PyTorch primarily targets GPU acceleration and does not natively support Google TPUs without external tools.

This restricts users who rely heavily on TPU clusters for large-scale or cost-efficient training.

Workarounds exist but often require using intermediate compilers or switching frameworks entirely, creating additional complexity.

Example of PyTorch Usage

Building a simple neural network using torch.nn.Sequential to classify handwritten digits from the MNIST dataset.

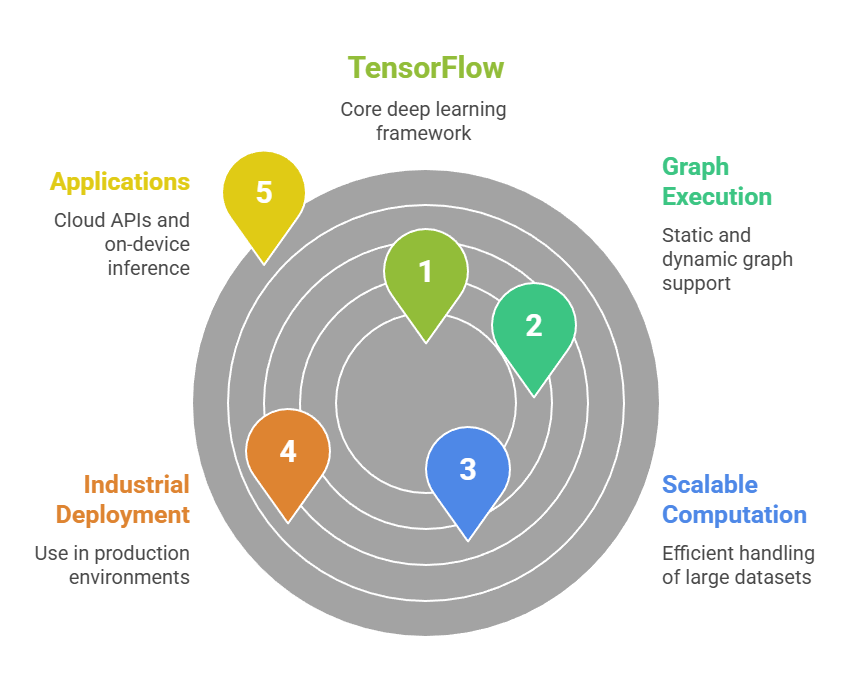

TensorFlow

TensorFlow, developed by Google DeepMind, is a powerful deep learning framework designed for scalable computation and industrial deployment.

It supports both static and dynamic graph execution and is widely used in production environments ranging from cloud API services to on-device inference.

Advantages

1. Strong production and deployment capabilities

TensorFlow excels in deploying models across various platforms, including mobile phones (TensorFlow Lite), browsers (TensorFlow.js), and enterprise environments.

Its production-grade tools allow organizations to create end-to-end pipelines for training, serving, monitoring, and updating models.

This makes TensorFlow ideal for long-term maintenance and scaling of AI systems.

2. Efficient static graph optimization

TensorFlow allows developers to build static graphs using the tf.function decorator, enabling the framework to optimize execution at compile time.

This results in faster computation, reduced memory usage, and more efficient hardware utilization.

Static graphs are particularly beneficial for repetitive large-scale batch operations, where fine-grained control of computational flow enhances training speed.

3. Robust ecosystem with extensive tooling support

TensorFlow's ecosystem includes TensorBoard for visualization, TFX for production workflows, Keras for high-level modeling, and Model Garden for state-of-the-art architectures.

These integrated tools provide an end-to-end experience from experimentation to deployment.

The ecosystem significantly enhances productivity by reducing the need for external libraries.

Disadvantages

1. Steeper learning curve for beginners

TensorFlow's combination of eager execution, static graph modes, and multiple abstractions can be overwhelming for newcomers.

Understanding how operations behave under different execution contexts requires practice and exposure.

This complexity may result in slower early progress compared to more intuitive frameworks like PyTorch.

2. Debugging static graphs can be difficult

When using static graph mode, errors often originate deep within compiled operations, making them harder to trace.

Developers have limited visibility into intermediate values unless additional debugging tools are configured.

This can slow down troubleshooting, especially for custom layers or intricate architectures.

3. Verbosity in low-level operations

While Keras provides a simplified high-level interface, lower-level TensorFlow code can become verbose and less intuitive.

Writing custom training loops, graph transformations, or specialized kernels often involves substantial boilerplate code.

This verbosity makes experimentation slower and less appealing for research-heavy workloads.

Example of TensorFlow Usage

Training a convolutional neural network using tf.keras to perform image classification on CIFAR-10.

PyTorch

Example: A Simple Feedforward Neural Network for Binary Classification

pythonimport torch

import torch.nn as nn

import torch.optim as optim

# Sample input tensor (batch_size=4, features=3)

X = torch.randn(4, 3)

y = torch.tensor([[1.0], [0.0], [1.0], [0.0]])

# Build a simple model

class SimpleModel(nn.Module):

def __init__(self):

super(SimpleModel, self).__init__()

self.layer1 = nn.Linear(3, 8)

self.activation = nn.ReLU()

self.output = nn.Linear(8, 1)

self.sigmoid = nn.Sigmoid()

def forward(self, x):

x = self.activation(self.layer1(x))

x = self.sigmoid(self.output(x))

return x

model = SimpleModel()

# Loss and optimizer

criterion = nn.BCELoss()

optimizer = optim.Adam(model.parameters(), lr=0.01)

# Forward pass

preds = model(X)

# Compute loss

loss = criterion(preds, y)

# Backpropagation

optimizer.zero_grad()

loss.backward()

optimizer.step()

print("Predictions:\n", preds)

print("Loss:", loss.item())

TensorFlow/Keras

Example: Simple Feedforward Neural Network for Binary Classification

pythonimport tensorflow as tf

from tensorflow.keras import layers, models, optimizers, losses

# Sample input (batch_size=4, features=3)

import numpy as np

X = np.random.randn(4, 3)

y = np.array([[1], [0], [1], [0]])

# Build a sequential model

model = models.Sequential([

layers.Dense(8, activation='relu', input_shape=(3,)),

layers.Dense(1, activation='sigmoid')

])

# Compile the model

model.compile(

optimizer=optimizers.Adam(learning_rate=0.01),

loss=losses.BinaryCrossentropy(),

metrics=['accuracy']

)

# Train for one epoch (just demonstration)

model.fit(X, y, epochs=1, verbose=1)

# Predict

preds = model.predict(X)

print("Predictions:\n", preds)