Diffusion models and energy-based models (EBMs) are advanced generative modeling approaches that have recently gained prominence for their ability to capture complex data distributions.

Diffusion models generate data by simulating a gradual denoising process, starting from pure noise and iteratively refining it to produce structured, realistic samples.

This approach, exemplified by Denoising Diffusion Probabilistic Models (DDPMs), has shown remarkable success in image generation, matching or surpassing GANs in fidelity and diversity on several standard benchmarks.

The underlying principle involves learning the reverse process of a predefined forward diffusion, enabling the model to reconstruct high-quality samples from random noise.

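Diffusion models are trained with a simple denoising objective rather than an adversarial game, so training tends to be stable and largely avoids mode collapse. Because sampling is stochastic, they also represent uncertainty naturally, which makes them well suited to high-dimensional data generation.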
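The predefined forward diffusion mentioned above can be sketched in a few lines of NumPy. This is a minimal illustration, assuming the linear variance schedule commonly used with DDPMs; the function names, the schedule endpoints, and the 8x8 array standing in for an image are illustrative choices, not details from this article.

```python
import numpy as np

def make_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal fraction alpha_bar_t for a linear beta schedule (assumed)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def forward_diffuse(x0, t, alpha_bar, rng):
    """Sample x_t from q(x_t | x_0) in closed form; returns (x_t, noise)."""
    noise = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise
    return x_t, noise

rng = np.random.default_rng(0)
alpha_bar = make_alpha_bar()
x0 = rng.uniform(-1.0, 1.0, size=(8, 8))               # stand-in for a tiny image
x_early, _ = forward_diffuse(x0, 10, alpha_bar, rng)    # still close to x0
x_late, _ = forward_diffuse(x0, 999, alpha_bar, rng)    # almost pure noise
```

A denoising network would be trained to predict `noise` from `x_t` and `t`; generation then runs the learned reverse process starting from a sample that looks like `x_late`.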

Energy-based models, on the other hand, define a scalar energy function over input data, assigning low energy to likely configurations and high energy to improbable ones.

Training shapes the energy landscape so that low-energy regions coincide with the data distribution, and samples are then drawn with MCMC methods such as Langevin dynamics. EBMs are flexible, theoretically grounded, and capable of modeling complex, multimodal distributions.
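To make Langevin sampling concrete, here is a minimal sketch that draws samples from a hand-written toy energy. The double-well energy, step size, and chain count are assumptions chosen for illustration; in a real EBM the energy would be a trained neural network and its gradient would come from automatic differentiation.

```python
import numpy as np

def energy(x):
    """Toy double-well energy with minima at x = -1 and x = +1 (illustrative)."""
    return (x**2 - 1.0) ** 2

def grad_energy(x):
    """Analytic gradient of the toy energy."""
    return 4.0 * x * (x**2 - 1.0)

def langevin_sample(n_chains=2000, n_steps=1000, step=0.01, seed=0):
    """Unadjusted Langevin dynamics: gradient descent on the energy plus noise."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(n_chains)          # initialize every chain from noise
    for _ in range(n_steps):
        noise = rng.standard_normal(n_chains)
        x = x - step * grad_energy(x) + np.sqrt(2.0 * step) * noise
    return x

samples = langevin_sample()
frac_right = np.mean(samples > 0)              # share of chains in the right-hand well
```

Both wells end up populated, which is the one-dimensional analogue of covering several data modes. The update needs only the gradient of the energy, never the normalizing constant.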

They can also be integrated with latent-variable models or hybrid generative approaches to enhance expressivity.

Both diffusion models and EBMs provide alternatives to adversarial training, focusing on probabilistic and energy-based formulations rather than min-max games.

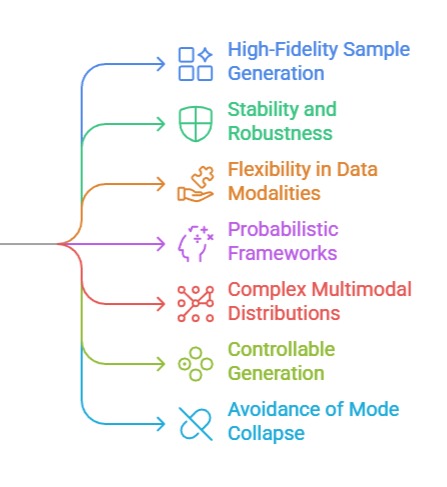

Generative Strengths of Diffusion Models and Energy-Based Models

1. High-Fidelity Sample Generation (Diffusion Models)

Diffusion models excel at producing highly detailed and realistic outputs, particularly in image synthesis and video generation.

By iteratively refining noise, these models can capture fine-grained textures, complex patterns, and semantic coherence.

Unlike GANs, which may suffer from mode collapse, diffusion models inherently explore diverse modes in the data distribution.

This capability makes them suitable for tasks like high-resolution image synthesis, text-to-image generation, and scientific visualization, where accuracy and realism are critical.

Additionally, because the model is trained to denoise inputs at many noise levels, it is robust to small perturbations, and its regression-style objective tends to converge stably during training.

2. Stability and Robustness

Both diffusion models and EBMs exhibit stable training dynamics compared to adversarial approaches.

Diffusion models avoid the minimax optimization of GANs, reducing risks of instability or divergence.

EBMs provide a well-defined energy landscape, guiding the model to plausible configurations.

This inherent stability allows for reliable generation and better exploration of data modes, which is particularly beneficial when working with high-dimensional, multimodal, or noisy datasets.

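Because there is no generator-discriminator balance to maintain, these models are less prone to the failure modes of adversarial training, which simplifies experimentation and deployment, though noise schedules and sampler settings still require tuning.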

3. Flexibility in Data Modalities

Diffusion models and EBMs can be applied across multiple modalities, including images, audio, 3D shapes, and molecular structures.

EBMs in particular are modality-agnostic, as the energy function can be defined over any data space.

This flexibility allows researchers to generate or model diverse types of structured and unstructured data, enabling applications in computer vision, computational biology, physics simulations, and generative design.

4. Probabilistic and Theoretically Grounded Frameworks

Both model classes rely on well-defined probabilistic or energy-based principles, providing interpretability and theoretical grounding.

Diffusion models are trained by optimizing a variational bound on the data likelihood through a learned reverse stochastic process, while EBMs assign each input an energy that corresponds to its unnormalized negative log-probability.

This probabilistic foundation allows for principled uncertainty estimation, sampling, and integration with other generative or discriminative frameworks.

It also supports hybrid approaches that combine VAEs or normalizing flows with diffusion or energy-based formulations.

5. Ability to Model Complex Multimodal Distributions

EBMs excel at capturing highly complex, multimodal distributions that are challenging for other generative models.

By associating an energy value with each configuration, EBMs naturally represent multiple data modes without collapsing into single-point solutions.

Diffusion models similarly explore diverse modes through stochastic sampling, producing outputs that reflect the full diversity of the training dataset.

This capability is essential for tasks like drug discovery, synthetic dataset generation, and content creation.

6. Controllable and Conditional Generation

Diffusion models can incorporate conditioning signals, such as text prompts or class labels, allowing precise control over generated outputs. Conditional EBMs can similarly guide energy landscapes to produce specific configurations.

This makes both approaches suitable for guided synthesis tasks, including text-to-image, style transfer, or domain-specific data generation. Conditional modeling enhances usability and applicability in real-world scenarios requiring targeted outcomes.
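One widely used way to apply a conditioning signal at sampling time is classifier-free guidance, which this article does not name; it is shown here only as an illustration. The sketch assumes a noise-prediction network has already been evaluated twice, once with and once without the condition, and the arrays below are made-up stand-ins for those two outputs.

```python
import numpy as np

def guided_noise(eps_uncond, eps_cond, guidance_scale):
    """Blend unconditional and conditional noise predictions.

    guidance_scale = 0 ignores the condition, 1 uses the conditional
    prediction as-is, and values above 1 push further toward the condition.
    """
    return eps_uncond + guidance_scale * (eps_cond - eps_uncond)

# Hypothetical network outputs for one denoising step (illustrative values).
eps_uncond = np.array([0.10, -0.20, 0.30])
eps_cond = np.array([0.30, -0.10, 0.00])

eps_plain = guided_noise(eps_uncond, eps_cond, guidance_scale=1.0)
eps_strong = guided_noise(eps_uncond, eps_cond, guidance_scale=3.0)
```

The blended prediction replaces the plain network output inside each reverse step. A larger scale typically trades sample diversity for closer adherence to the prompt or label.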

7. Avoidance of Mode Collapse and Training Instability

Unlike GANs, diffusion models and EBMs do not rely on adversarial min-max optimization, which often leads to mode collapse.

The training objectives in these models encourage exploration of the full data distribution, maintaining diversity and stability.

This is particularly advantageous in scientific, medical, or high-resolution generative tasks, where losing modes can result in incomplete or biased outputs.

Challenges of Diffusion and Energy-Based Models

Diffusion and energy-based models achieve remarkable generative quality, but they introduce unique practical and computational challenges.

Understanding these limitations helps practitioners choose appropriate architectures and optimize deployments for real-world constraints.

1. Computational and Memory Intensity

Both diffusion models and EBMs require extensive computational resources due to iterative sampling, large network architectures, or repeated energy evaluations.

Training high-resolution diffusion models can take days on multi-GPU setups, while EBMs often rely on expensive MCMC procedures such as Langevin dynamics for sampling.

These requirements limit accessibility and scalability for organizations without specialized hardware.

2. Slow Sampling in Diffusion Models

The iterative denoising process in diffusion models involves hundreds or thousands of steps, leading to slow generation compared to GANs or VAEs.

Although recent techniques like DDIM or accelerated samplers reduce inference time, achieving real-time generation remains challenging for high-dimensional or high-resolution outputs.
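The idea behind DDIM-style acceleration can be sketched as a deterministic update that visits only a subset of the training timesteps. The code below is a simplified illustration, not a reference implementation: `eps_model` is a hypothetical callable standing in for a trained noise-prediction network, and the linear schedule is an assumption. To keep the example checkable, the demo uses an oracle that returns the true noise.

```python
import numpy as np

def ddim_sample(x_T, eps_model, alpha_bar, num_sample_steps):
    """Deterministic DDIM-style sampling over a subset of timesteps."""
    T = len(alpha_bar)
    # Evenly spaced timesteps from T-1 down to 0.
    timesteps = np.linspace(T - 1, 0, num_sample_steps).round().astype(int)
    x = x_T
    for i, t in enumerate(timesteps):
        eps = eps_model(x, t)
        # Estimate the clean sample implied by the current noise prediction.
        x0_pred = (x - np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(alpha_bar[t])
        # Jump to the next kept timestep (alpha_bar = 1 means fully denoised).
        ab_prev = alpha_bar[timesteps[i + 1]] if i + 1 < len(timesteps) else 1.0
        x = np.sqrt(ab_prev) * x0_pred + np.sqrt(1.0 - ab_prev) * eps
    return x

rng = np.random.default_rng(0)
alpha_bar = np.cumprod(1.0 - np.linspace(1e-4, 0.02, 1000))   # assumed schedule
x0 = rng.uniform(-1.0, 1.0, size=16)
true_eps = rng.standard_normal(16)
x_T = np.sqrt(alpha_bar[-1]) * x0 + np.sqrt(1.0 - alpha_bar[-1]) * true_eps

oracle = lambda x, t: true_eps      # stand-in for a trained network
x_rec = ddim_sample(x_T, oracle, alpha_bar, num_sample_steps=50)
```

Here 50 network evaluations replace 1000. With a real network the predictions are imperfect, so using fewer steps usually costs some sample quality.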

3. Difficulty in Likelihood Estimation (EBMs)

EBMs define unnormalized probability distributions, making exact likelihood computation intractable for high-dimensional data.
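A small numerical example shows why the normalizing constant is the obstacle. In one dimension it can be approximated on a grid, but the number of grid points grows exponentially with dimension. The quadratic energy and grid sizes below are illustrative assumptions.

```python
import numpy as np

def energy(x):
    """Quadratic toy energy: exp(-E) is an unnormalized standard Gaussian."""
    return 0.5 * x**2

# One dimension: a simple Riemann sum approximates Z = integral of exp(-E(x)).
xs = np.linspace(-10.0, 10.0, 2001)
dx = xs[1] - xs[0]
Z_1d = np.sum(np.exp(-energy(xs))) * dx

def grid_points(points_per_dim, dims):
    """Number of energy evaluations a full grid would need in `dims` dimensions."""
    return points_per_dim ** dims

cost_2d = grid_points(2001, 2)      # already about four million evaluations
cost_10d = grid_points(100, 10)     # 10**20 even with a much coarser grid
```

For this energy the exact answer is the square root of 2π, which the one-dimensional sum matches closely. Image-sized inputs have thousands of dimensions, so grid-based normalization is out of reach and training falls back on approximations.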

Approximate methods like contrastive divergence or score matching are used, but they add complexity and may affect convergence or sample quality.

This complicates monitoring training progress, hyperparameter tuning, and model comparison.
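As a concrete instance of score matching, the sketch below applies denoising score matching to a toy problem where the answer is known in closed form. The Gaussian data, the noise level `sigma`, and the one-parameter linear score model are all assumptions made for illustration; practical models use neural networks and gradient-based optimization.

```python
import numpy as np

rng = np.random.default_rng(0)
sigma = 0.5                                         # perturbation noise level (assumed)
x = rng.standard_normal(200_000)                    # toy "data" drawn from N(0, 1)
x_noisy = x + sigma * rng.standard_normal(x.shape)

# Denoising score matching target: the score of the Gaussian perturbation
# kernel q(x_noisy | x), which is available without any normalizing constant.
target = -(x_noisy - x) / sigma**2

# Linear score model s(x) = a * x. Minimizing mean((a * x_noisy - target)**2)
# over a is ordinary least squares with a closed-form solution.
a_fit = np.sum(x_noisy * target) / np.sum(x_noisy**2)

# The noisy data follow N(0, 1 + sigma^2), whose true score is -x / (1 + sigma^2).
a_true = -1.0 / (1.0 + sigma**2)
```

The fitted slope `a_fit` lands close to `a_true`, showing that the objective recovers the score of the perturbed data without ever evaluating a likelihood.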

4. Sensitivity to Hyperparameters and Noise Schedules

Diffusion models require carefully designed noise schedules, step sizes, and network architectures to maintain stability and sample quality.

EBMs also demand precise learning rates, regularization, and sampling strategies.

Improper settings can lead to poor convergence, unrealistic outputs, or overfitting, increasing experimental effort.
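To see how much the noise schedule matters, the following sketch compares two common choices: the linear schedule from the original DDPM work and the cosine schedule proposed by Nichol and Dhariwal. Neither is specified in this article, and the parameter values are the commonly quoted defaults, used here as assumptions.

```python
import numpy as np

def linear_alpha_bar(T=1000, beta_start=1e-4, beta_end=0.02):
    """Remaining signal fraction under a linear beta schedule."""
    betas = np.linspace(beta_start, beta_end, T)
    return np.cumprod(1.0 - betas)

def cosine_alpha_bar(T=1000, s=0.008):
    """Remaining signal fraction under a cosine schedule with offset s."""
    steps = np.arange(T + 1) / T
    f = np.cos((steps + s) / (1.0 + s) * np.pi / 2.0) ** 2
    return f[1:] / f[0]

lin = linear_alpha_bar()
cos = cosine_alpha_bar()

mid = 499                                    # halfway through the forward process
signal_left = {"linear": lin[mid], "cosine": cos[mid]}
```

At the halfway point the linear schedule has already destroyed most of the signal, while the cosine schedule retains roughly half of it. Differences like this change which noise levels the network spends its capacity on, which is one reason schedule choice affects sample quality.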

5. High-Dimensional Sampling Challenges

Sampling in high-dimensional spaces is inherently difficult for both model classes.

EBMs rely on stochastic dynamics that can struggle to explore all modes efficiently, while diffusion models require dense step-wise refinement to maintain detail.

Scaling to ultra-high-resolution images or large structured datasets introduces significant computational bottlenecks.

6. Interpretability and Latent Control

While diffusion and energy-based models produce realistic samples, controlling or interpreting latent factors is less straightforward than in VAEs.

EBMs’ energy landscapes are often opaque, and diffusion models’ iterative processes complicate direct manipulation.

Achieving controllable, interpretable generation may require auxiliary models or hybrid architectures.

7. Integration Complexity with Downstream Tasks

Applying diffusion models or EBMs in end-to-end pipelines can be challenging due to their computational cost, iterative nature, or difficulty in conditional integration.

Tasks like real-time image editing, text-to-speech synthesis, or interactive content generation may require additional optimization, approximation techniques, or hybridization with faster models.