Machine Learning Operations (MLOps) is the discipline of managing the entire lifecycle of machine learning models, from development and training through deployment and ongoing maintenance. By combining principles of DevOps with specialized processes for ML, MLOps aims to streamline workflows, ensure reproducibility, and maintain model reliability in production environments.

Introduction to MLOps

MLOps addresses the complexities unique to deploying and sustaining machine learning systems, which involve not just code but also data, models, and infrastructure. It fosters collaboration between data scientists, engineers, and IT teams to deliver automated, scalable, and traceable ML workflows.

The goal is to achieve continuous integration and continuous deployment (CI/CD) for models, so that deployed models can be updated as new data arrives and business requirements change.

Versioning in MLOps

Versioning tracks changes and maintains records of datasets, model parameters, code, and configurations. It ensures experiments are reproducible and facilitates rollback to previous stable versions.


Benefits: Enables auditability, collaboration, experiment comparison, and regulatory compliance.
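One way to make a run traceable is to derive a single identifier from the dataset, the parameters, and the code revision together, so that any change to one of them yields a new version. The sketch below is a minimal illustration using only the Python standard library; the function name `fingerprint`, the sample data, and the `code_version` string are hypothetical, and dedicated versioning tools handle this far more thoroughly.

```python
import hashlib
import json


def fingerprint(data: bytes, params: dict, code_version: str) -> str:
    """Return a short, deterministic ID for one experiment snapshot."""
    h = hashlib.sha256()
    h.update(hashlib.sha256(data).digest())  # dataset contents
    # sort_keys makes the hash independent of dict insertion order
    h.update(json.dumps(params, sort_keys=True).encode("utf-8"))
    h.update(code_version.encode("utf-8"))  # e.g. a commit hash
    return h.hexdigest()[:12]


# Hypothetical dataset and parameters, for illustration only
dataset = b"age,income\n34,52000\n41,61000\n"
params = {"learning_rate": 0.01, "max_depth": 4}

run_a = fingerprint(dataset, params, code_version="abc123")
# Same content, different key order: the ID is unchanged
run_b = fingerprint(dataset, {"max_depth": 4, "learning_rate": 0.01}, "abc123")
# One extra data row: the ID changes
run_c = fingerprint(dataset + b"29,48000\n", params, "abc123")

print(run_a == run_b, run_a == run_c)
```

Storing such an ID next to each trained model makes it possible to tell which data, parameters, and code produced it, which is the basis for reproducing an experiment or rolling back.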

MLOps Pipelines

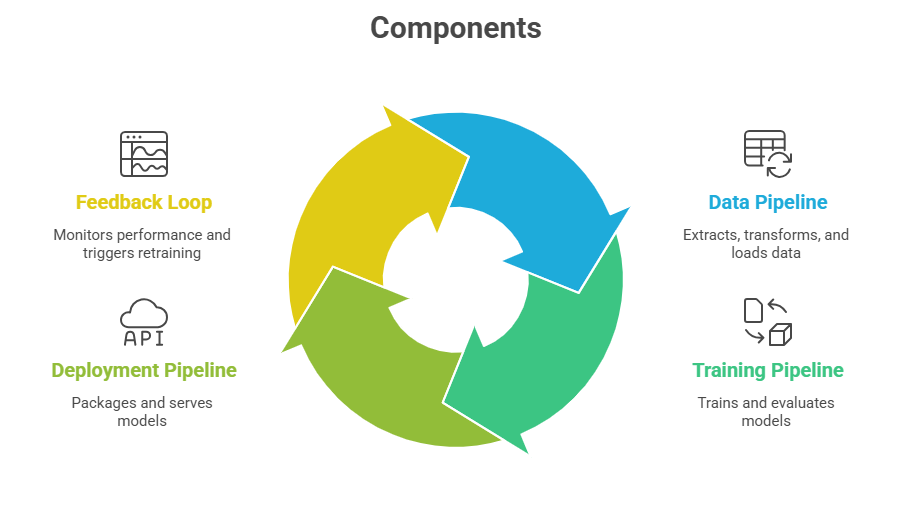

Pipelines automate the workflow of data ingestion, preprocessing, feature engineering, model training, validation, deployment, and retraining.

Tools and Frameworks: Platforms like Kubeflow, MLflow, TensorFlow Extended (TFX), and Apache Airflow simplify pipeline creation, execution, and orchestration.
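To make the idea of a pipeline concrete, the sketch below chains a few stages as plain Python functions that pass a shared context dictionary along. It does not use the API of any of the platforms named above; the stage names, the toy data, and the 0.5 error limit are all assumptions chosen for illustration.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[dict], dict]]


def ingest(ctx: dict) -> dict:
    # Hypothetical in-memory data standing in for a real data source
    ctx["rows"] = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8)]
    return ctx


def preprocess(ctx: dict) -> dict:
    # Drop rows with missing values
    ctx["rows"] = [(x, y) for x, y in ctx["rows"] if x is not None and y is not None]
    return ctx


def train(ctx: dict) -> dict:
    # Least-squares slope through the origin: w = sum(x*y) / sum(x*x)
    num = sum(x * y for x, y in ctx["rows"])
    den = sum(x * x for x, _ in ctx["rows"])
    ctx["weight"] = num / den
    return ctx


def validate(ctx: dict) -> dict:
    # Mean absolute error on the same rows (a real pipeline would use held-out data)
    errors = [abs(ctx["weight"] * x - y) for x, y in ctx["rows"]]
    ctx["mae"] = sum(errors) / len(errors)
    ctx["approved"] = ctx["mae"] < 0.5  # assumed deployment gate
    return ctx


def run_pipeline(stages: List[Stage]) -> dict:
    """Run each stage in order and record which stages completed."""
    ctx: dict = {"log": []}
    for name, fn in stages:
        ctx = fn(ctx)
        ctx["log"].append(name)
    return ctx


result = run_pipeline([
    ("ingest", ingest),
    ("preprocess", preprocess),
    ("train", train),
    ("validate", validate),
])
print(result["log"], round(result["weight"], 2), result["approved"])
```

An orchestration platform adds what this sketch leaves out: scheduling, retries, caching of intermediate artifacts, and running stages on separate machines.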

Monitoring in MLOps

Track model performance metrics (accuracy, precision, recall), data and concept drift, latency, throughput, and resource utilization during inference.
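As a small illustration of the performance metrics listed above, the following sketch computes accuracy, precision, and recall for a binary classifier from logged labels and predictions. The function name and the sample labels are hypothetical; in practice these values would come from production logs joined with ground truth once it becomes available.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Guard against division by zero when there are no positive predictions or labels
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Hypothetical labels and predictions for eight requests
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```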

Drift Detection: Identify shifts in the distribution of input data or model predictions, which often signal that model quality is degrading.
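One common way to quantify a shift in a numeric feature is the Population Stability Index (PSI), which compares how values fall into bins in a reference sample versus a recent sample. The sketch below is a minimal standard-library version, not the method of any particular monitoring tool; the bin count, the epsilon used for empty bins, and the sample data are assumptions.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a reference and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Small floor avoids log(0) for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: training-time reference vs. a shifted production sample
reference = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

psi_same = psi(reference, reference)
psi_shifted = psi(reference, shifted)
print(psi_same, psi_shifted > psi_same)
```

A PSI near zero means the two samples are distributed alike, and larger values indicate a larger shift. The value at which to raise an alert is a judgment call that depends on the feature and the application.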

Alerts and Automation: Set thresholds and automated workflows that retrain, roll back, or redeploy models when performance drops.
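The threshold logic described here can be sketched as a small decision function that maps monitored values to an action. The function name, the action labels, and the default thresholds (`max_drop`, `drift_limit`) are hypothetical; real limits should be tuned per model.

```python
def decide_action(current_accuracy, baseline_accuracy, drift_score,
                  *, max_drop=0.05, drift_limit=0.2):
    """Map monitoring signals to one of: 'rollback', 'retrain', 'none'."""
    drop = baseline_accuracy - current_accuracy
    if drop > 2 * max_drop:
        # Severe degradation: revert to the last stable version first
        return "rollback"
    if drop > max_drop or drift_score > drift_limit:
        # Moderate degradation or noticeable drift: schedule retraining
        return "retrain"
    return "none"


print(decide_action(0.90, 0.91, 0.05))  # small drop, low drift
print(decide_action(0.84, 0.91, 0.05))  # moderate accuracy drop
print(decide_action(0.75, 0.91, 0.05))  # severe accuracy drop
```

In a deployed system the returned action would typically trigger a pipeline run or a notification rather than being printed.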

Observability: Logging, tracing, and visualization dashboards provide actionable insights to maintain model health.
