Deep learning, a subfield of machine learning, employs neural networks with multiple layers to model complex patterns and extract high-level features from data. These deep architectures have transformed areas like image recognition, speech processing, and natural language understanding by automating feature extraction and capturing intricate relationships.

Introduction to Deep Learning Architectures

Deep learning architectures consist of layers of interconnected neurons, where each successive layer learns increasingly abstract representations of input data.

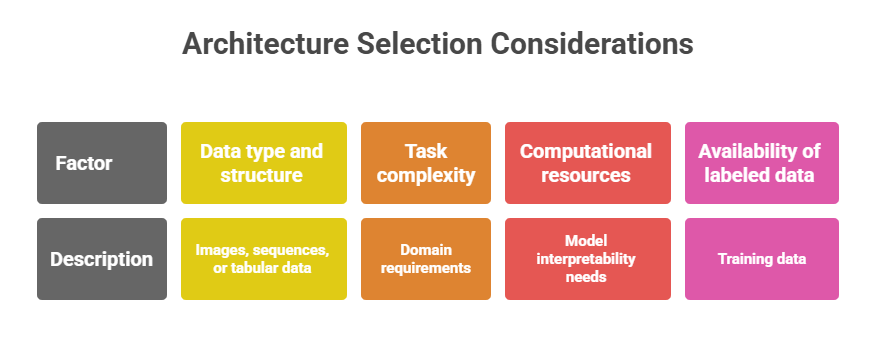

The depth (number of layers) and structure of these networks determine their ability to model complex nonlinear functions. Several architectures exist, each designed for specific types of data and tasks, ranging from prediction on fixed-size inputs to sequence modeling.

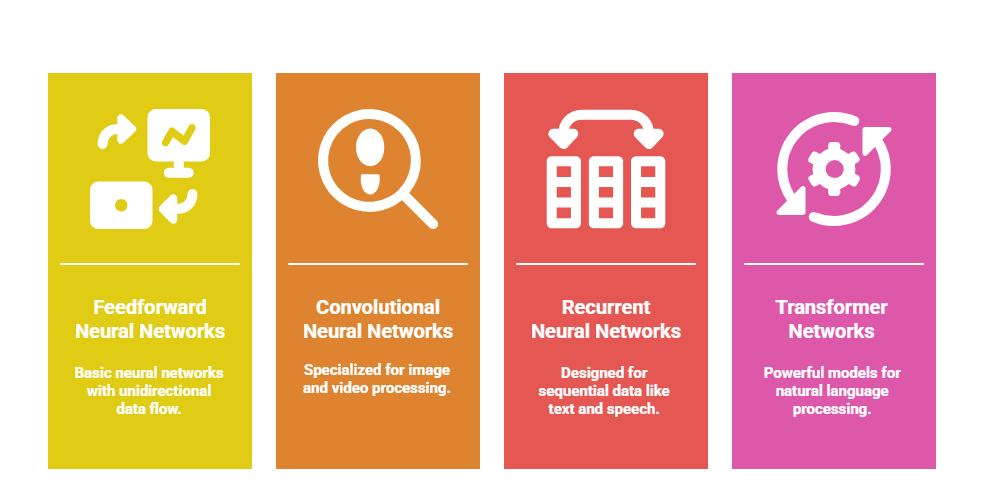

Common Deep Learning Architectures

1. Feedforward Neural Networks (FNNs)

Feedforward neural networks are the simplest form of deep neural network. They consist of fully connected layers in which information flows in one direction, from input to output, with no loops. They suit static data such as tabular records and are often used as baseline models.
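As a rough illustration of the forward pass through fully connected layers, here is a minimal sketch in plain Python. The layer sizes, the ReLU activation, and the helper names (`dense`, `init_layer`, `forward`) are assumptions chosen for the example, and the weights are random rather than trained.

```python
import random

def relu(values):
    """Element-wise ReLU: negative activations become zero."""
    return [max(0.0, v) for v in values]

def dense(x, weights, biases):
    """Fully connected layer: one weight row and one bias per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]

def init_layer(n_in, n_out, rng):
    """Random weights and zero biases (illustrative, not a tuned initializer)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def forward(x, layers):
    """Pass x through each layer in order; ReLU on all but the last layer."""
    for index, (weights, biases) in enumerate(layers):
        x = dense(x, weights, biases)
        if index < len(layers) - 1:
            x = relu(x)
    return x

rng = random.Random(0)
# Hypothetical network: 4 input features -> 8 -> 8 -> 3 outputs.
layers = [init_layer(4, 8, rng), init_layer(8, 8, rng), init_layer(8, 3, rng)]
logits = forward([0.2, -1.0, 0.5, 3.0], layers)
print(len(logits))  # 3
```

Data only moves forward through `layers`; nothing computed for one input is carried over to the next.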

2. Convolutional Neural Networks (CNNs)

CNNs are designed primarily for image and other spatial data. They use convolutional layers to extract spatial features automatically. Key components include convolutional layers, pooling layers (which reduce the spatial dimensions of feature maps), and fully connected layers.

Applications: Image classification, object detection, and medical imaging analysis.
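To make the convolution and pooling components concrete, the following sketch applies one small kernel to a toy image and then max-pools the result. It assumes a single channel, no padding, and stride 1 for the convolution; the edge-detecting kernel is hand-picked for illustration, whereas a real CNN would learn its kernels during training.

```python
def conv2d(image, kernel):
    """Valid (no padding), stride-1 convolution as used in CNN layers.

    Like most deep learning libraries, this slides the kernel without
    flipping it (strictly speaking, cross-correlation).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]

def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling; leftover rows and columns are dropped."""
    rows = range(0, len(feature_map) - size + 1, size)
    cols = range(0, len(feature_map[0]) - size + 1, size)
    return [[max(feature_map[i + di][j + dj]
                 for di in range(size) for dj in range(size))
             for j in cols]
            for i in rows]

# Toy 5x5 image with a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hand-picked kernel that responds to a left-to-right intensity increase.
kernel = [[-1, 1]]

feature_map = conv2d(image, kernel)
pooled = max_pool2d(feature_map)
print(feature_map[0])  # [0, 1, 0, 0]
print(pooled)          # [[1, 0], [1, 0]]
```

The feature map is nonzero only where the edge sits, and pooling keeps that response while shrinking the map from 5x4 to 2x2.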

3. Recurrent Neural Networks (RNNs)

RNNs are tailored for sequential data. They feature loops that allow information to persist across time steps, which lets them model temporal dependencies and sequence order. Variants such as Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks mitigate the vanishing gradient problem.

Applications: Speech recognition, language modeling, time series forecasting.
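The recurrence behind a basic (non-gated) RNN can be sketched as h_t = tanh(W_xh x_t + W_hh h_(t-1) + b). The code below is a minimal version of that update; the weight values and the names `rnn_step` and `run_rnn` are made up for the example, and LSTM or GRU cells would add gating on top of this.

```python
import math

def rnn_step(x, h_prev, w_xh, w_hh, b):
    """One time step: combine the current input with the previous hidden state."""
    return [math.tanh(sum(w * xi for w, xi in zip(w_xh[k], x))
                      + sum(w * hi for w, hi in zip(w_hh[k], h_prev))
                      + b[k])
            for k in range(len(b))]

def run_rnn(sequence, w_xh, w_hh, b):
    """Apply the same weights at every step, carrying the hidden state forward."""
    h = [0.0] * len(b)
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_xh, w_hh, b)
        states.append(h)
    return states

# Illustrative weights: 1 input feature, 2 hidden units.
w_xh = [[1.0], [0.5]]
w_hh = [[0.5, 0.0], [0.0, 0.5]]
b = [0.0, 0.0]

# Only the first time step carries a signal; later inputs are zero.
states = run_rnn([[1.0], [0.0], [0.0]], w_xh, w_hh, b)
for t, h in enumerate(states):
    print(t, [round(v, 3) for v in h])
```

The hidden state stays nonzero at steps 1 and 2 even though the inputs there are zero, which is the persistence across time steps described above. It also shrinks at every step, a small-scale hint of why long-range dependencies are hard for plain RNNs.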

4. Transformer Networks

Transformers use self-attention mechanisms to weigh the importance of input elements dynamically. They avoid recurrent computation, enabling parallel processing of sequences. They have become the state of the art in natural language processing and have been extended to vision tasks.

Models like BERT and GPT are transformer-based architectures.

Applications: Machine translation, text generation, image captioning.
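Self-attention can be illustrated with scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V. The sketch below is a simplified single-head version that uses the token vectors directly as queries, keys, and values; real transformers first apply learned projection matrices, which are omitted here as a simplifying assumption.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention; returns outputs and attention weights."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        output = [sum(w * v[j] for w, v in zip(weights, values))
                  for j in range(len(values[0]))]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights

# Three toy token embeddings (made-up numbers).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each query is handled independently of the others, so all positions can be computed in parallel, in contrast to the step-by-step loop of an RNN.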

Specialized Architectures and Hybrid Models

The architectures below address challenges that standard networks struggle with. They introduce new structures and learning mechanisms to improve performance on those tasks.

1. Autoencoders

Autoencoders are neural architectures designed for unsupervised learning by compressing data into efficient representations and reconstructing it. They consist of an encoder that reduces dimensionality and a decoder that rebuilds the original input. These models are widely used for tasks such as dimensionality reduction, denoising, and identifying anomalies in data.
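The encode, decode, and compare cycle can be sketched with a tiny linear autoencoder that compresses 2-D points to a single number. The weights here are set by hand to stand in for what training might learn on data lying near the line x1 = x2; they are an assumption for illustration, not a trained model.

```python
import math

# Hand-set direction standing in for learned weights (assumption):
# the "normal" data is imagined to lie close to the line x1 = x2.
DIRECTION = [1 / math.sqrt(2), 1 / math.sqrt(2)]

def encode(x):
    """Encoder: compress a 2-D point to a 1-D code."""
    return sum(d * xi for d, xi in zip(DIRECTION, x))

def decode(z):
    """Decoder: rebuild a 2-D point from the 1-D code."""
    return [z * d for d in DIRECTION]

def reconstruction_error(x):
    """Squared distance between the input and its reconstruction."""
    x_hat = decode(encode(x))
    return sum((a - b) ** 2 for a, b in zip(x, x_hat))

typical = [2.0, 2.0]    # follows the pattern the code can represent
unusual = [2.0, -2.0]   # does not follow it

print(reconstruction_error(typical))
print(reconstruction_error(unusual))
```

Inputs that fit the compressed representation are reconstructed almost exactly, while inputs that do not fit come back with a large error. Thresholding that error is one common way to use an autoencoder for anomaly detection.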

2. Generative Adversarial Networks (GANs)

GANs involve two networks—a generator that produces synthetic data and a discriminator that judges its authenticity—trained together in an adversarial setup. Through this competitive process, GANs learn to generate highly realistic data samples. They are extensively used in image synthesis, data augmentation, and advanced tasks like style transfer.
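The adversarial setup can be summarized by the two losses being optimized. The sketch below computes them from discriminator outputs, assuming the discriminator returns the probability that a sample is real and using the commonly used non-saturating form of the generator loss. The networks themselves are left out, and the probability values are invented for the example.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: push D(real) toward 1 and D(fake) toward 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: push D(fake) toward 1."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Invented discriminator outputs for a batch of two real and two fake samples.
confident_d = {"real": [0.9, 0.8], "fake": [0.1, 0.2]}   # D spots the fakes
fooled_d = {"real": [0.6, 0.5], "fake": [0.7, 0.8]}      # G fools D

for name, probs in (("confident", confident_d), ("fooled", fooled_d)):
    d_loss = discriminator_loss(probs["real"], probs["fake"])
    g_loss = generator_loss(probs["fake"])
    print(name, round(d_loss, 3), round(g_loss, 3))
```

The two losses pull in opposite directions: when the discriminator is confident, its loss is low and the generator's is high, and the reverse holds when the generator fools it. Training alternates updates between the two networks.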

3. Capsule Networks

Capsule Networks introduce capsules, which are groups of neurons that capture both features and their spatial relationships within an image. This design aims to address a limitation of traditional CNNs by preserving hierarchical pose information more effectively. Capsule networks remain an active research area and have shown promising results in some specialized applications.
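One concrete difference from ordinary neurons is that a capsule outputs a vector rather than a single number. In the original capsule network formulation, a "squash" nonlinearity rescales that vector so its length lies between 0 and 1 and can be read as the probability that the feature is present, while its direction encodes pose. The sketch below shows only that function; the routing between capsule layers is omitted, and the input vectors are made up.

```python
import math

def squash(vector):
    """Keep the direction; map the length into the range [0, 1)."""
    norm_sq = sum(v * v for v in vector)
    if norm_sq == 0.0:
        return [0.0 for _ in vector]
    norm = math.sqrt(norm_sq)
    scale = (norm_sq / (1.0 + norm_sq)) / norm
    return [scale * v for v in vector]

def length(vector):
    """Euclidean length of a vector."""
    return math.sqrt(sum(v * v for v in vector))

strong = squash([3.0, 4.0])   # long input: output length close to 1
weak = squash([0.1, 0.0])     # short input: output length close to 0

print(round(length(strong), 4), round(length(weak), 4))
```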
