Neural networks are computational models inspired by the biological structures of the human brain, designed to recognise patterns, learn from data, and solve complex problems.

Playing a central role in deep learning, neural networks have revolutionised fields like image recognition, natural language processing, and predictive analytics.

Neural Networks

At their core, neural networks consist of interconnected nodes called neurons that perform mathematical computations. These neurons are organised into layers through which data flows, transforming raw inputs into meaningful outputs.

The network "learns" by adjusting the strength of connections—called weights—based on the error between predicted and actual results, enabling it to model intricate relationships within data.
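As a rough illustration of adjusting a weight from the prediction error, the sketch below fits a single weight to one example by gradient descent on the squared error. The function name `train_single_weight`, the learning rate, and the example values are assumptions made for this illustration; they do not come from the article.

```python
def train_single_weight(x, y_true, w=0.0, learning_rate=0.1, steps=50):
    """Fit y = w * x to one example by gradient descent on squared error."""
    for _ in range(steps):
        y_pred = w * x                 # prediction with the current weight
        error = y_pred - y_true        # difference between predicted and actual
        # The gradient of 0.5 * error**2 with respect to w is error * x.
        w -= learning_rate * error * x
    return w


# Hypothetical example: the input is 2.0 and the target is 6.0,
# so the weight that removes the error is 3.0.
w = train_single_weight(x=2.0, y_true=6.0)
print(round(w, 3))
```

Each step moves the weight in the direction that shrinks the error, so the printed value settles at 3.0 for this example. Real networks apply the same idea to many weights at once.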

Architecture of Neural Networks

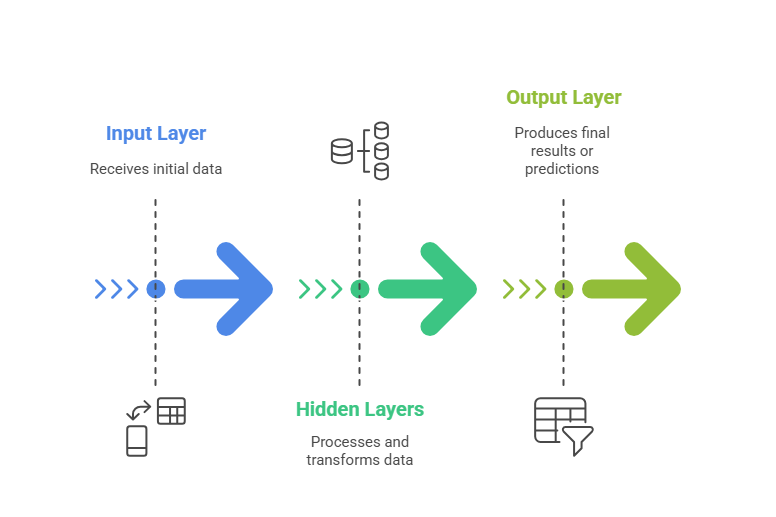

Listed below are the primary layers involved in constructing a neural network. Together, they determine the model’s learning capacity and overall behaviour.

1. Input Layer

The input layer is the entry point of data into the network.

Each neuron in this layer corresponds to one feature or attribute of the input data.

It acts as a conduit, passing input values to subsequent layers without transformation.

2. Hidden Layers

Hidden layers lie between the input and output layers, performing the core computations.

Each layer contains neurons that transform inputs using weighted sums and activation functions.

The number of hidden layers and neurons per layer determines the model’s capacity to learn complex patterns.

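Activation functions introduce non-linearity. Without them, any stack of layers would collapse into a single linear transformation, and the network could not model non-linear relationships such as classes that are not linearly separable.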

Common activation functions include ReLU (Rectified Linear Unit), sigmoid, and tanh.
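The hidden-layer computation described above (a weighted sum followed by an activation function) can be sketched in plain Python. The helper `dense_layer` and all weights, biases, and feature values here are hypothetical, chosen only to make the arithmetic easy to follow.

```python
import math


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z):
    return math.tanh(z)


def dense_layer(inputs, weights, biases, activation):
    """Apply one fully connected layer.

    weights[j] holds the incoming weights of neuron j.
    """
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        # Weighted sum of the inputs plus the neuron's bias.
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(z))
    return outputs


# Three input features feeding a hidden layer with two neurons.
features = [1.0, -2.0, 0.5]
hidden_weights = [[0.2, 0.4, -0.6], [0.5, -0.1, 0.3]]
hidden_biases = [0.1, 0.0]

hidden = dense_layer(features, hidden_weights, hidden_biases, relu)
print(hidden)  # the first neuron's weighted sum is negative, so ReLU clips it to 0.0
```

Swapping `relu` for `sigmoid` or `tanh` in the call changes only the non-linearity applied to each weighted sum; the layer structure stays the same.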

3. Output Layer

The output layer produces the final result or prediction of the network.

Its structure depends on the task, such as a single neuron with sigmoid activation for binary classification or multiple neurons with softmax activation for multi-class classification.

In regression tasks, the output layer may contain one or more neurons providing continuous values.
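The three output-layer choices above can be compared side by side. This is a minimal sketch: the raw scores passed in are made-up values standing in for whatever the last hidden layer would produce.

```python
import math


def sigmoid(z):
    """Squash one raw score into a probability for binary classification."""
    return 1.0 / (1.0 + math.exp(-z))


def softmax(logits):
    """Turn a list of raw scores into probabilities that sum to 1."""
    largest = max(logits)
    # Subtracting the largest score avoids overflow in exp() without
    # changing the resulting probabilities.
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Binary classification: one neuron, sigmoid activation.
binary_probability = sigmoid(0.8)

# Multi-class classification: one neuron per class, softmax activation.
class_probabilities = softmax([2.0, 1.0, 0.1])  # roughly [0.66, 0.24, 0.10]
predicted_class = class_probabilities.index(max(class_probabilities))

# Regression: no squashing, the raw score is the prediction.
regression_value = 3.7

print(binary_probability, class_probabilities, predicted_class, regression_value)
```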

Key Components of a Neural Network

The following highlights the primary components that determine how a neural network operates. Each contributes uniquely to transforming raw inputs into meaningful outputs.

Learning Process

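Neural networks learn in three repeating steps: forward propagation (data passes through the layers to produce an output), loss calculation (the output is compared with the target to measure the error), and backpropagation (the error signal is propagated backwards to work out how each weight should change). Repeating this cycle over many iterations adjusts the weights, reduces the loss, and improves accuracy.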
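To make the cycle concrete, here is a small sketch of forward propagation, loss calculation, and backpropagation for a network with two inputs, two tanh hidden neurons, and one sigmoid output. The task (the logical OR function), the starting weights, the learning rate, and the use of cross-entropy loss with per-example updates are all assumptions chosen to keep the example short; the article does not prescribe them.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, w_hidden, b_hidden, w_out, b_out):
    """Forward propagation: inputs -> tanh hidden layer -> sigmoid output."""
    hidden = [math.tanh(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(w_hidden, b_hidden)]
    prob = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + b_out)
    return hidden, prob


def mean_loss(data, w_hidden, b_hidden, w_out, b_out):
    """Loss calculation: average binary cross-entropy over the data set."""
    total = 0.0
    for x, y in data:
        _, p = forward(x, w_hidden, b_hidden, w_out, b_out)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(data)


def train(data, w_hidden, b_hidden, w_out, b_out, learning_rate=0.5, epochs=500):
    """Backpropagation with one weight update per training example."""
    for _ in range(epochs):
        for x, y in data:
            hidden, p = forward(x, w_hidden, b_hidden, w_out, b_out)
            d_out = p - y  # error signal at the output neuron
            for j, h in enumerate(hidden):
                # Propagate the error back through w_out[j] and the tanh derivative.
                d_hidden = d_out * w_out[j] * (1 - h * h)
                w_out[j] -= learning_rate * d_out * h
                for i, xi in enumerate(x):
                    w_hidden[j][i] -= learning_rate * d_hidden * xi
                b_hidden[j] -= learning_rate * d_hidden
            b_out -= learning_rate * d_out
    return w_hidden, b_hidden, w_out, b_out


# Toy data set: the logical OR of two binary inputs.
data = [([0.0, 0.0], 0), ([0.0, 1.0], 1), ([1.0, 0.0], 1), ([1.0, 1.0], 1)]

# Arbitrary small starting weights (fixed so the run is repeatable).
w_hidden = [[0.5, -0.3], [-0.2, 0.4]]
b_hidden = [0.0, 0.0]
w_out = [0.3, -0.1]
b_out = 0.0

initial_loss = mean_loss(data, w_hidden, b_hidden, w_out, b_out)
w_hidden, b_hidden, w_out, b_out = train(data, w_hidden, b_hidden, w_out, b_out)
final_loss = mean_loss(data, w_hidden, b_hidden, w_out, b_out)
print(initial_loss, final_loss)
```

The loss printed after training should be lower than the loss before it, which is the "iterative cycles" effect described above. Libraries such as PyTorch or TensorFlow automate the gradient calculations that are written out by hand here.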
