Natural Language Processing (NLP) enables machines to understand, interpret, and generate human language, making it vital for applications like translation, sentiment analysis, and conversational AI. Recurrent Neural Networks (RNNs) and their enhanced variant, Long Short-Term Memory networks (LSTMs), have formed the backbone of many NLP models because they process sequential data and carry context forward from one step to the next.

Introduction to Recurrent Neural Networks (RNNs)

RNNs are specialized neural networks designed to handle sequential inputs by maintaining a hidden state that encodes information about previous elements in the sequence.

Unlike feedforward networks, RNNs have recurrent connections (loops) that pass information from one step to the next, making them well suited to language tasks where context matters.

Key Idea: At each time step, the RNN takes the current input and the previous hidden state, processes them using shared weights, and produces a new hidden state and output.
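The key idea can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: the names `rnn_step`, `run_rnn`, `W_xh`, `W_hh`, and `b_h` are assumptions chosen for this example, the weights are random rather than trained, and the output projection (a further layer applied to each hidden state) is left out to keep the focus on the recurrence.

```python
import math
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def rnn_step(x_t, h_prev, W_xh, W_hh, b_h):
    """One vanilla RNN step: h_t = tanh(W_xh x_t + W_hh h_prev + b_h)."""
    from_input = matvec(W_xh, x_t)
    from_state = matvec(W_hh, h_prev)
    return [math.tanh(a + b + c) for a, b, c in zip(from_input, from_state, b_h)]

def run_rnn(inputs, hidden_size, seed=0):
    """Apply the same (shared) weights at every time step of a sequence."""
    rng = random.Random(seed)
    input_size = len(inputs[0])
    W_xh = [[rng.uniform(-0.5, 0.5) for _ in range(input_size)]
            for _ in range(hidden_size)]
    W_hh = [[rng.uniform(-0.5, 0.5) for _ in range(hidden_size)]
            for _ in range(hidden_size)]
    b_h = [0.0] * hidden_size

    h = [0.0] * hidden_size  # initial hidden state: no context yet
    states = []
    for x_t in inputs:
        h = rnn_step(x_t, h, W_xh, W_hh, b_h)  # new state depends on old state
        states.append(h)
    return states

# Three time steps of 2-dimensional toy inputs (stand-ins for word embeddings).
sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
hidden_states = run_rnn(sequence, hidden_size=4)
print(len(hidden_states), len(hidden_states[0]))
```

Because `W_xh` and `W_hh` are created once and reused inside the loop, the sequence can be any length without adding parameters.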

Advantage: Ability to capture dependencies and patterns over sequences, such as grammar or topic flows in text.

Limitation: Standard RNNs struggle with long-range dependencies due to vanishing or exploding gradients, impairing their ability to remember far-back information.
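A rough way to see why this happens: during backpropagation through time, the gradient that reaches an early step is a product of one factor per intervening step. The sketch below makes the simplifying assumption that every step contributes the same scalar factor (in a real network the factors are matrices that vary from step to step), and `gradient_scale` is a hypothetical helper name.

```python
def gradient_scale(per_step_factor, num_steps):
    """Scale of a gradient after flowing back through num_steps time steps,
    assuming each step multiplies it by the same scalar factor."""
    scale = 1.0
    for _ in range(num_steps):
        scale *= per_step_factor
    return scale

# Factors slightly below or above 1 diverge quickly over 50 steps.
for factor in (0.9, 1.0, 1.1):
    print(factor, gradient_scale(factor, 50))
```

With a factor of 0.9 the gradient shrinks to roughly 0.005 of its original size after 50 steps (vanishing), while a factor of 1.1 grows it to over 100 times its size (exploding).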

Introduction to Long Short-Term Memory Networks (LSTMs)

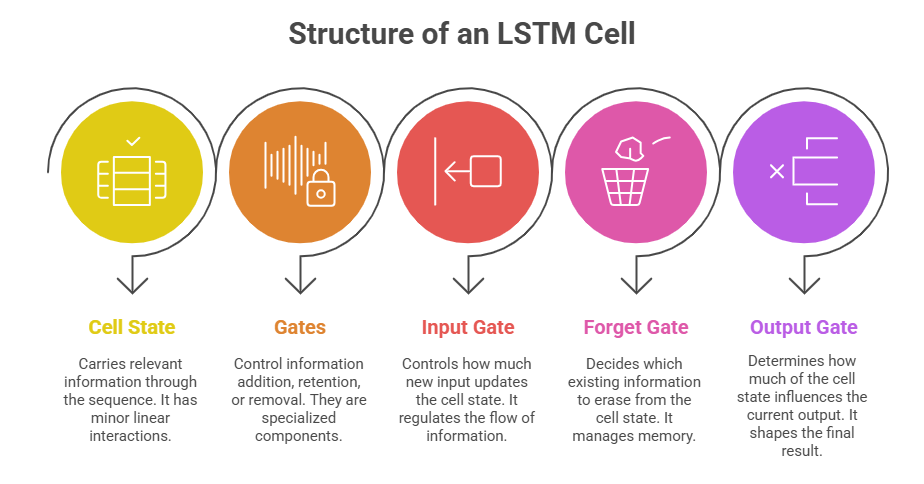

LSTMs are a refinement of RNNs specifically designed to overcome the limitations of traditional RNNs by introducing a memory cell and gating mechanisms that regulate information flow.
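One common formulation of the LSTM uses three gates (forget, input, and output) plus a candidate update to the memory cell. The sketch below follows that standard formulation in plain Python. The function and parameter names (`lstm_step`, `init_params`, `W_f`, `b_f`, and so on) are assumptions for illustration, and the weights are random rather than trained.

```python
import math
import random

def sigmoid(z):
    """Squash a real number into (0, 1); used for the gates."""
    return 1.0 / (1.0 + math.exp(-z))

def affine(W, b, v):
    """Compute W v + b for a matrix given as a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + bias for row, bias in zip(W, b)]

def init_params(input_size, hidden_size, seed=0):
    """Hypothetical helper: small random weights for the four transforms."""
    rng = random.Random(seed)
    width = hidden_size + input_size
    params = {}
    for name in ("f", "i", "o", "g"):
        params["W_" + name] = [[rng.uniform(-0.5, 0.5) for _ in range(width)]
                               for _ in range(hidden_size)]
        params["b_" + name] = [0.0] * hidden_size
    return params

def lstm_step(x_t, h_prev, c_prev, p):
    """One LSTM step; returns the new hidden state and the new cell state."""
    v = h_prev + x_t  # concatenate previous hidden state and current input
    f = [sigmoid(z) for z in affine(p["W_f"], p["b_f"], v)]    # forget gate
    i = [sigmoid(z) for z in affine(p["W_i"], p["b_i"], v)]    # input gate
    o = [sigmoid(z) for z in affine(p["W_o"], p["b_o"], v)]    # output gate
    g = [math.tanh(z) for z in affine(p["W_g"], p["b_g"], v)]  # candidate values
    # Memory cell: keep part of the old content, add part of the candidate.
    c_t = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    # Hidden state: a gated view of the (squashed) memory cell.
    h_t = [ok * math.tanh(ck) for ok, ck in zip(o, c_t)]
    return h_t, c_t

params = init_params(input_size=2, hidden_size=3)
h, c = [0.0] * 3, [0.0] * 3
for x_t in [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]:
    h, c = lstm_step(x_t, h, c, params)
print(h, c)
```

The cell update is additive: when the forget gate is close to 1 and the input gate is close to 0, `c_t` is almost identical to `c_prev`, which is how the cell can carry information across many steps.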

Advantages of LSTMs:

1. Learn long-term dependencies more reliably, because the memory cell's additive update keeps gradients from vanishing as quickly during training.

2. Handle complex sequence patterns better than vanilla RNNs.

3. Widely used in speech recognition, machine translation, text generation, and sentiment analysis.

Working Mechanism Illustration

Imagine predicting the next word in a sentence. An RNN processes each word in order, updating its hidden state to build up context. An LSTM improves on this by updating its memory selectively: its gates decide what to keep, what to overwrite, and what to expose at each step, much like keeping track of a conversation's context over several sentences. This typically improves accuracy in language modeling.
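To make the prediction step concrete, the sketch below turns a hidden state into a probability for each word in a toy vocabulary using a softmax output layer. Everything here is illustrative: the vocabulary, the hidden state values, and the output weights `W_hy` are made-up assumptions, whereas in a trained model the hidden state would come from the RNN or LSTM and the weights would be learned.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def next_word_distribution(hidden_state, W_hy, vocab):
    """Score every vocabulary word against the hidden state, then normalize."""
    scores = [sum(w * h for w, h in zip(row, hidden_state)) for row in W_hy]
    return dict(zip(vocab, softmax(scores)))

vocab = ["cat", "sat", "mat"]                   # toy vocabulary
hidden_state = [0.2, -0.1]                      # made-up context vector
W_hy = [[1.0, 0.0], [0.0, 1.0], [-1.0, 1.0]]    # one row of weights per word

probs = next_word_distribution(hidden_state, W_hy, vocab)
predicted = max(probs, key=probs.get)           # most likely next word
print(predicted, probs)
```

The better the hidden state summarizes the preceding words, the more sharply this distribution can favor the right continuation.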
