As artificial intelligence systems permeate diverse aspects of society, ensuring fairness and minimizing bias in AI models has become a paramount concern. Biases embedded in data, algorithms, or decision-making processes can perpetuate social inequalities, cause unfair treatment, and diminish trust in AI systems.

Addressing fairness and actively mitigating bias throughout the AI lifecycle are essential for developing responsible, ethical, and inclusive AI technologies.

Introduction to Fairness and Bias

Bias in AI refers to systematic errors or prejudices that result in unfair or discriminatory outcomes for individuals or groups, often based on sensitive attributes like race, gender, age, or socioeconomic status.

Fairness means that AI systems make impartial decisions and treat people equitably regardless of such characteristics. In practice, fairness is expressed through several measurable criteria, and these can conflict with one another. Proactively recognizing bias and applying fairness principles promotes social justice, transparency, and accountability in AI.

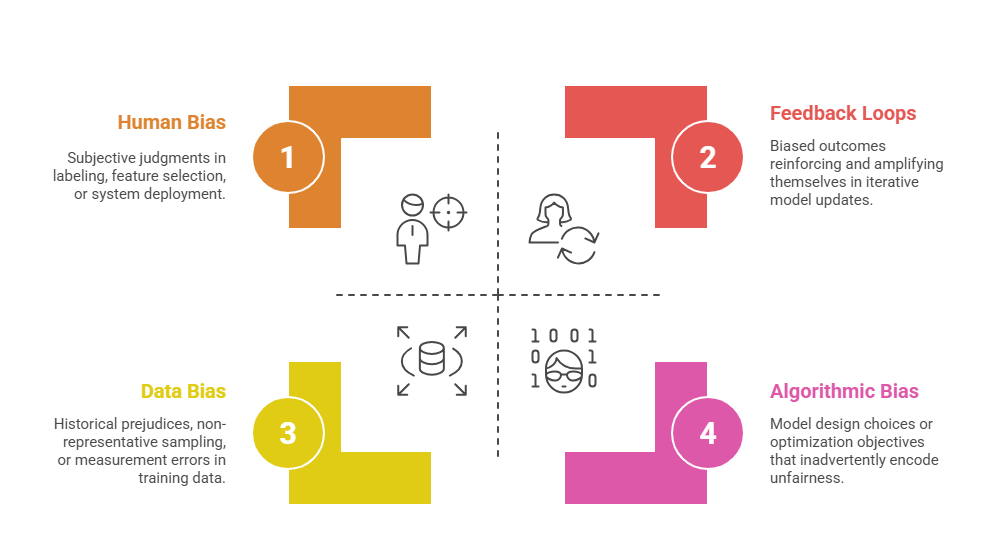

Sources of Bias in AI

Several factors can unintentionally embed prejudice or skewed patterns into AI systems. The figure below summarizes the most common sources of bias in machine learning.

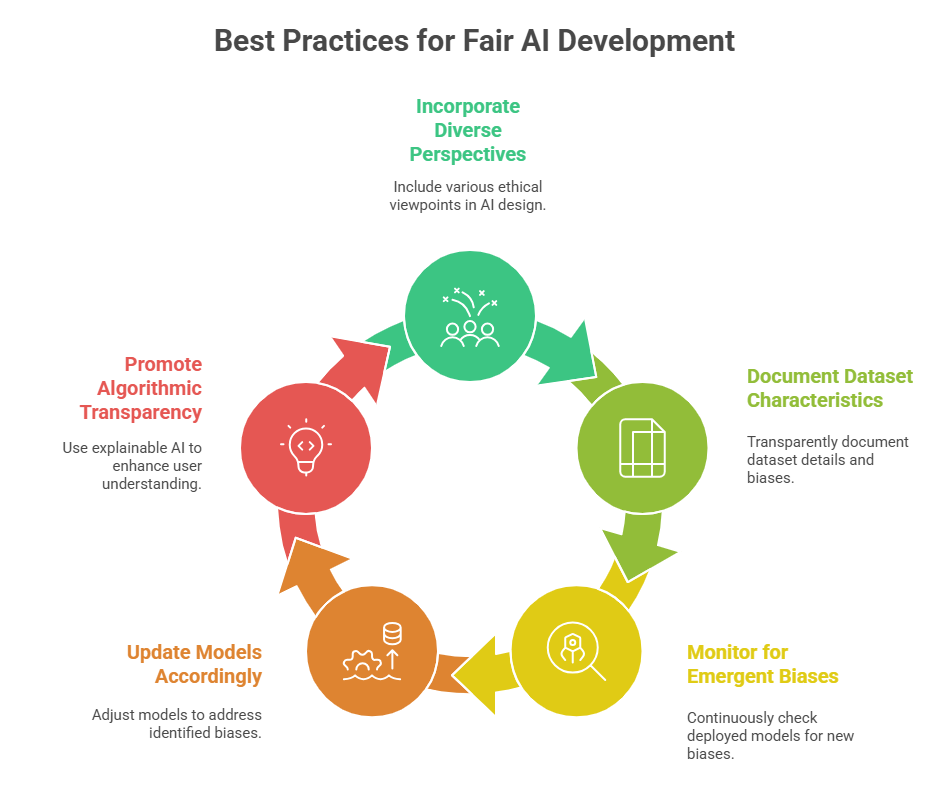

Approaches to Mitigating Bias

Mitigating bias involves applying structured techniques that improve how models treat diverse populations. The following outlines key methods employed before, during, and after model training.

1. Pre-processing Techniques

Pre-processing techniques aim to reduce bias by modifying the training dataset before model development. These methods include reweighting or resampling to balance group representation and removing correlations between sensitive attributes and key features while preserving predictive quality. Additionally, synthetic data generation or augmentation can be used to strengthen minority group presence and enhance fairness.
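
Reweighting can be illustrated with a minimal Python sketch. It assumes the reweighing scheme of Kamiran and Calders, in which each (group, label) combination receives the weight P(group) · P(label) / P(group, label). Under these weights, group membership and outcome become statistically independent in the training data. The function name `reweigh` is hypothetical, and the sketch assumes the sensitive attribute and the label are supplied as plain Python lists.

```python
from collections import Counter

def reweigh(groups, labels):
    """Hypothetical helper: one weight per example so that, after weighting,
    the sensitive group and the label are statistically independent."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    weights = []
    for g, y in zip(groups, labels):
        # Count this (group, label) cell would have if group and label were independent
        expected = group_counts[g] * label_counts[y] / n
        # Up-weight under-represented cells, down-weight over-represented ones
        weights.append(expected / joint_counts[(g, y)])
    return weights

# Group "a" has a 2/3 positive rate and group "b" a 1/3 rate; after weighting both are 1/2.
weights = reweigh(["a", "a", "a", "b", "b", "b"], [1, 1, 0, 1, 0, 0])
print(weights)
```

The resulting weights can be passed to any learner that accepts per-sample weights, such as the `sample_weight` argument of scikit-learn's `LogisticRegression.fit`.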

2. In-processing Techniques

In-processing methods build fairness directly into training by adding constraints or objectives that limit discriminatory patterns. Adversarial debiasing, for example, trains an auxiliary adversary to predict the sensitive attribute from the model's learned representations or outputs, while the main model is trained to make that prediction fail, which suppresses bias signals in what it learns. Regularization-based techniques add a fairness penalty to the training loss, so that unequal treatment of groups increases the cost the optimizer minimizes.
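
The regularization-based approach can be sketched as a logistic regression trained by plain gradient descent. Its loss adds a demographic-parity penalty `lam * gap**2`, where `gap` is the difference in mean predicted score between two groups. The function names, the 0/1 group encoding, and the hyperparameters (`lam`, `lr`, `epochs`) are illustrative assumptions rather than a specific published method. Adversarial debiasing would replace the fixed penalty with a separately trained adversary.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_scores(X, w, b):
    return [sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) for x in X]

def mean_score_gap(X, groups, w, b):
    """Mean predicted score of group 0 minus that of group 1."""
    p = predict_scores(X, w, b)
    g0 = [pi for pi, g in zip(p, groups) if g == 0]
    g1 = [pi for pi, g in zip(p, groups) if g == 1]
    return sum(g0) / len(g0) - sum(g1) / len(g1)

def train_fair_logreg(X, y, groups, lam=1.0, lr=0.1, epochs=500):
    """Hypothetical helper: minimize mean cross-entropy + lam * gap**2."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    members = {0: [i for i, g in enumerate(groups) if g == 0],
               1: [i for i, g in enumerate(groups) if g == 1]}
    for _ in range(epochs):
        p = predict_scores(X, w, b)
        grad_w, grad_b = [0.0] * d, 0.0
        # Gradient of the mean binary cross-entropy loss
        for i in range(n):
            err = (p[i] - y[i]) / n
            for j in range(d):
                grad_w[j] += err * X[i][j]
            grad_b += err
        # Gradient of the fairness penalty lam * gap**2
        gap = (sum(p[i] for i in members[0]) / len(members[0])
               - sum(p[i] for i in members[1]) / len(members[1]))
        for g, sign in ((0, 1.0), (1, -1.0)):
            for i in members[g]:
                # d(gap)/d(logit_i) = sign * p_i * (1 - p_i) / |group g|
                coef = 2 * lam * gap * sign * p[i] * (1 - p[i]) / len(members[g])
                for j in range(d):
                    grad_w[j] += coef * X[i][j]
                grad_b += coef
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b
```

Raising `lam` trades some predictive accuracy for a smaller gap between groups, and setting `lam=0` recovers ordinary logistic regression.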

3. Post-processing Techniques

Post-processing strategies operate on model outputs to align predictions with fairness criteria without modifying the underlying model. These methods can adjust decision thresholds or prediction scores, for example separately for each group, to balance error rates across demographic groups. Calibration techniques can also help reduce disparate impact by ensuring that a given score corresponds to a similar likelihood of the outcome in every group.
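
Threshold adjustment can be illustrated with a simplified, deterministic variant of equal-opportunity post-processing. For each group, it selects the highest score threshold whose true positive rate reaches a shared target, leaving the model's scores untouched. The function names and the `target_tpr` parameter are hypothetical, and the sketch assumes every group contains at least one actual positive.

```python
def true_positive_rate(scores, labels, threshold):
    """Share of actual positives whose score reaches the threshold."""
    positive_scores = [s for s, y in zip(scores, labels) if y == 1]
    return sum(s >= threshold for s in positive_scores) / len(positive_scores)

def equal_opportunity_thresholds(scores, labels, groups, target_tpr):
    """Hypothetical helper: per group, the highest threshold with TPR >= target_tpr."""
    thresholds = {}
    for g in sorted(set(groups)):
        g_scores = [s for s, gg in zip(scores, groups) if gg == g]
        g_labels = [y for y, gg in zip(labels, groups) if gg == g]
        # Scanning from high to low, TPR can only grow, so the first hit is the
        # strictest threshold that still meets the target.
        for t in sorted(set(g_scores), reverse=True):
            if true_positive_rate(g_scores, g_labels, t) >= target_tpr:
                thresholds[g] = t
                break
    return thresholds

def apply_thresholds(scores, groups, thresholds):
    """Turn scores into 0/1 decisions using each example's group threshold."""
    return [int(s >= thresholds[g]) for s, g in zip(scores, groups)]

scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.5, 0.2, 0.1]
labels = [1, 1, 0, 1, 1, 0, 1, 0]
groups = ["a"] * 4 + ["b"] * 4
thresholds = equal_opportunity_thresholds(scores, labels, groups, target_tpr=0.6)
print(thresholds, apply_thresholds(scores, groups, thresholds))
```

Because all groups share one target, their true positive rates become approximately equal. On small samples the achieved rates can still differ, which is one reason published methods may randomize between adjacent thresholds.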

4. Evaluation of Fairness

Fairness evaluation applies metrics such as demographic parity (similar positive-prediction rates across groups), equal opportunity (similar true positive rates), and equalized odds (similar true positive and false positive rates) to assess model behavior across groups. Counterfactual fairness tests examine how a prediction would change if an individual's sensitive attribute were hypothetically altered. Regular audits, supported by explainability tools and fairness dashboards, help maintain transparency and detect emerging biases.
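
The group metrics above can be computed from binary predictions as in the sketch below. It assumes exactly two groups and reports absolute differences, so 0 means parity. It also includes a simple attribute-flip check as a rough proxy for counterfactual testing. Formal counterfactual fairness relies on a causal model, because changing a sensitive attribute can also change other features that depend on it. All function names, the dictionary record format, and the toy `model` are hypothetical.

```python
def rate(values):
    return sum(values) / len(values) if values else 0.0

def group_rates(y_true, y_pred, groups, g):
    """Selection rate, true positive rate and false positive rate for group g."""
    selected = [p for p, gg in zip(y_pred, groups) if gg == g]
    on_positives = [p for t, p, gg in zip(y_true, y_pred, groups) if gg == g and t == 1]
    on_negatives = [p for t, p, gg in zip(y_true, y_pred, groups) if gg == g and t == 0]
    return rate(selected), rate(on_positives), rate(on_negatives)

def fairness_report(y_true, y_pred, groups):
    g1, g2 = sorted(set(groups))  # assumes exactly two groups
    sel1, tpr1, fpr1 = group_rates(y_true, y_pred, groups, g1)
    sel2, tpr2, fpr2 = group_rates(y_true, y_pred, groups, g2)
    return {
        "demographic_parity_diff": abs(sel1 - sel2),
        "equal_opportunity_diff": abs(tpr1 - tpr2),
        "equalized_odds_diff": max(abs(tpr1 - tpr2), abs(fpr1 - fpr2)),
    }

def counterfactual_flip_rate(model, records, attr, values):
    """Share of records whose prediction changes when attr is swapped between two values."""
    a, b = values
    changed = 0
    for record in records:
        flipped = dict(record)
        flipped[attr] = b if record[attr] == a else a
        changed += model(record) != model(flipped)
    return changed / len(records)

report = fairness_report([1, 1, 0, 0, 1, 1, 0, 0], [1, 1, 1, 0, 1, 0, 0, 0], ["a"] * 4 + ["b"] * 4)
print(report)

# Toy model that (unfairly) uses the sensitive attribute directly
model = lambda r: int(r["income"] > 50 or r["gender"] == "m")
records = [{"income": 60, "gender": "f"}, {"income": 30, "gender": "f"}, {"income": 30, "gender": "m"}]
print(counterfactual_flip_rate(model, records, "gender", ("f", "m")))
```

A non-zero flip rate shows that the sensitive attribute directly changes decisions. A zero rate does not rule out bias that enters through correlated features.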
