Activation functions and backpropagation are fundamental concepts in neural networks that enable these models to learn complex patterns and make accurate predictions.

Activation functions introduce non-linearity to compute meaningful transformations of data, while backpropagation is the algorithm that efficiently trains neural networks by updating weights based on prediction errors. Together, these mechanisms empower neural networks to model highly non-linear relationships and improve their performance iteratively.

Introduction to Activation Functions

In a neural network, neurons process information by calculating a weighted sum of inputs plus a bias term and then applying an activation function to produce an output.
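The computation described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a library API: the function name `neuron_output` and the example inputs, weights, and bias are hypothetical values chosen for demonstration.

```python
import math


def sigmoid(z):
    """Logistic activation, used here only as an example choice."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias, activation):
    """Compute activation(sum_i w_i * x_i + b) for a single neuron."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


# Hypothetical example values (not from the text above).
inputs = [0.5, -1.0, 2.0]
weights = [0.4, 0.3, -0.2]
bias = 0.1

# Weighted sum is 0.2 - 0.3 - 0.4 + 0.1 = -0.4, then sigmoid is applied.
out = neuron_output(inputs, weights, bias, sigmoid)
```

Swapping `sigmoid` for another activation changes only the last step; the weighted sum stays the same.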

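Activation functions are mathematical functions that determine a neuron's output from its weighted input, allowing the network to capture complex patterns beyond simple linear transformations.

Without activation functions, a network of any depth would collapse into a single linear transformation, because composing linear layers yields another linear layer. It would be no more expressive than a linear regression model, which severely limits its capability.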
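To make the limitation concrete, the sketch below stacks two layers with no activation in between and checks that a single layer with combined weights gives the same output. The weight values and helper names (`matvec`, `matmul`, `vadd`) are made up for illustration.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def matmul(A, B):
    """Multiply matrix A by matrix B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


def vadd(a, b):
    """Element-wise sum of two vectors."""
    return [ai + bi for ai, bi in zip(a, b)]


# Hypothetical weights: layer 1 maps 2 -> 3 values, layer 2 maps 3 -> 2.
W1 = [[1.0, 2.0], [0.0, -1.0], [3.0, 1.0]]
b1 = [0.5, -0.5, 1.0]
W2 = [[1.0, 0.0, -1.0], [2.0, 1.0, 0.0]]
b2 = [0.0, 1.0]


def two_linear_layers(x):
    """Two layers applied in sequence with no activation function."""
    hidden = vadd(matvec(W1, x), b1)
    return vadd(matvec(W2, hidden), b2)


# The same mapping as one layer: W = W2 W1 and b = W2 b1 + b2.
W = matmul(W2, W1)
b = vadd(matvec(W2, b1), b2)


def single_linear_layer(x):
    return vadd(matvec(W, x), b)
```

For any input `x`, `two_linear_layers(x)` and `single_linear_layer(x)` agree, so the extra layer adds no expressive power until a non-linear activation is placed between the two.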

Common Types of Activation Functions

1. Sigmoid (Logistic) Function:

Characterized by an S-shaped curve.

Maps input values to outputs between 0 and 1.

Widely used in the output layer for binary classification problems, where the output can be read as a probability.

Can suffer from vanishing gradients in deep networks.

Formula: $\sigma(x) = \frac{1}{1 + e^{-x}}$

2. Tanh (Hyperbolic Tangent) Function:

S-shaped like the sigmoid, but outputs range from -1 to 1.

Zero-centered, often leading to faster convergence.

Can also suffer from vanishing gradients.

Formula: $\tanh(x) = \frac{e^{x} - e^{-x}}{e^{x} + e^{-x}}$

3. ReLU (Rectified Linear Unit):

Outputs zero for negative inputs and the input itself for positive inputs.

Computationally efficient, and alleviates vanishing gradients because its gradient is 1 for all positive inputs.

Most popular for hidden layers in deep neural networks.

Formula: $\mathrm{ReLU}(x) = \max(0, x)$

4. Softmax Function:

Converts a vector of values into probabilities summing to 1.

Typically applied in the output layer for multi-class classification.

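Formula: $\mathrm{softmax}(z)_i = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}}$, for an input vector $z$ with $K$ entries.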
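The four activation functions above can be written in plain Python as follows. This is a sketch using only the standard library. Two details are assumptions added here rather than anything stated above: the branch in `sigmoid`, which avoids overflow for large negative inputs, and the subtraction of the maximum in `softmax`, a common numerical-stability trick. The helper `sigmoid_grad` is included to show how small the sigmoid's gradient becomes for large inputs, which is the source of the vanishing gradient problem.

```python
import math


def sigmoid(x):
    """Logistic function, mapping any real x into (0, 1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)  # avoids overflow of exp(-x) for very negative x
    return e / (1.0 + e)


def sigmoid_grad(x):
    """Derivative of the sigmoid: s(x) * (1 - s(x)), at most 0.25."""
    s = sigmoid(x)
    return s * (1.0 - s)


def tanh(x):
    """Hyperbolic tangent, mapping any real x into (-1, 1)."""
    return math.tanh(x)


def relu(x):
    """Zero for negative inputs, the input itself otherwise."""
    return max(0.0, x)


def softmax(values):
    """Turn a list of scores into probabilities that sum to 1."""
    m = max(values)  # shift by the max for numerical stability
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]
```

For example, `sigmoid_grad(0.0)` is 0.25, the largest value the derivative can take, while `sigmoid_grad(10.0)` is below 0.0001. Multiplying many such small factors across layers is what shrinks the gradient in deep sigmoid networks.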

Introduction to Backpropagation

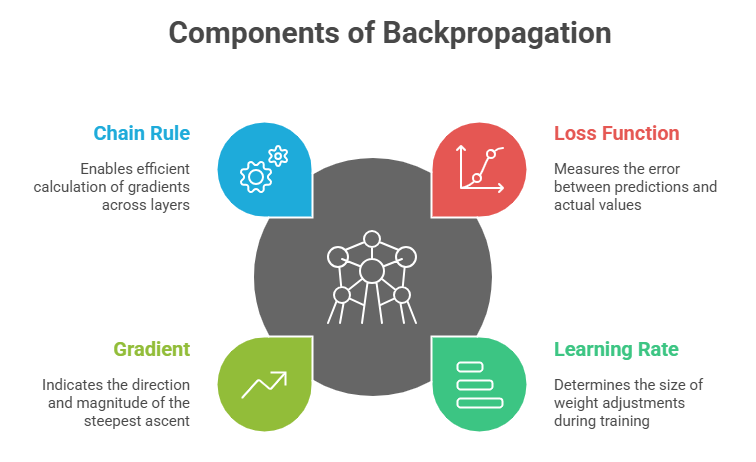

Backpropagation is the core training algorithm used to update a network's weights and biases by propagating the error gradient backwards through the layers.

How Backpropagation Works:

1. Forward Pass: Input data is passed through the network to generate predictions.

2. Loss Calculation: The difference between predictions and actual targets is quantified using a loss function.

3. Backward Pass: The gradient of the loss with respect to each weight is computed using the chain rule of calculus.

4. Weight Update: Weights and biases are adjusted in the direction that minimizes the loss, typically using gradient descent or its variants.

This iterative process repeats across multiple epochs until the network converges to a solution with minimized prediction error.
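The four steps can be sketched on a deliberately tiny network: one input, one tanh hidden unit, and one sigmoid output, trained with a squared-error loss. Everything specific here is an assumption made for illustration, including the architecture, the loss, the toy dataset, the initial parameters, the learning rate, and the function names. Real networks use vectors and matrices, but the chain-rule structure is the same.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(p, x):
    """Step 1, forward pass: return hidden activation and prediction."""
    h = math.tanh(p["w1"] * x + p["b1"])
    y_hat = sigmoid(p["w2"] * h + p["b2"])
    return h, y_hat


def loss_fn(y_hat, y):
    """Step 2, loss calculation: squared error for one example."""
    return 0.5 * (y_hat - y) ** 2


def backward(p, x, y):
    """Step 3, backward pass: chain rule from the loss to each parameter."""
    h, y_hat = forward(p, x)
    # dL/dz2 = dL/dy_hat * dy_hat/dz2, with sigmoid'(z2) = y_hat * (1 - y_hat)
    dz2 = (y_hat - y) * y_hat * (1.0 - y_hat)
    # dL/dz1 = dL/dz2 * dz2/dh * dh/dz1, with tanh'(z1) = 1 - h^2
    dz1 = dz2 * p["w2"] * (1.0 - h * h)
    return {"w1": dz1 * x, "b1": dz1, "w2": dz2 * h, "b2": dz2}


def mean_loss(p, data):
    return sum(loss_fn(forward(p, x)[1], y) for x, y in data) / len(data)


def train(p, data, lr=0.5, epochs=200):
    """Step 4, weight update: plain gradient descent, one example at a time."""
    for _ in range(epochs):
        for x, y in data:
            grads = backward(p, x, y)
            for name in p:
                p[name] -= lr * grads[name]
    return p


# Toy dataset (hypothetical): negative inputs -> 0, positive inputs -> 1.
data = [(-2.0, 0.0), (-1.0, 0.0), (1.0, 1.0), (2.0, 1.0)]
params = {"w1": 0.5, "b1": 0.0, "w2": 0.5, "b2": 0.0}

initial_loss = mean_loss(params, data)
train(params, data)
final_loss = mean_loss(params, data)
```

After training, `final_loss` is lower than `initial_loss`. A useful sanity check for any hand-written backward pass is to compare its gradients against finite-difference estimates of the loss.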

Importance in Neural Networks

Activation functions and backpropagation matter for complementary reasons: the first lets a network represent non-linear relationships, and the second lets it improve through training.

1. Activation functions enable networks to learn and model complex, non-linear relationships critical for tasks like image recognition and language processing.

2. Backpropagation provides an efficient way to compute the gradients for all parameters in a single backward pass, which makes training deep networks practical.