Generative AI represents a category of artificial intelligence designed to create new and original content by learning from existing data. Two state-of-the-art approaches—Diffusion Models and Generative Transformers—have significantly advanced the quality and versatility of generated content such as images, text, and audio.

Generative AI

Generative AI systems learn models whose outputs are realistic and closely resemble the training data, while still being able to produce novel combinations that go beyond the specific examples seen in training.

Unlike traditional discriminative models that classify or predict, generative models capture the underlying data distribution and sample new data points from it. This capability supports content creation in various forms, including natural language, visuals, and synthesized sounds.
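To make the idea of "capturing the data distribution and sampling from it" concrete, here is a minimal sketch. It assumes the simplest possible generative model, a single multivariate Gaussian fitted to toy 2-D data; the function names `fit_gaussian` and `sample_gaussian` are hypothetical and chosen only for illustration. Real generative models learn far richer distributions, but the two phases (fit, then sample) are the same.

```python
import numpy as np


def fit_gaussian(data):
    """Estimate the mean vector and covariance matrix of the training data."""
    mean = data.mean(axis=0)
    cov = np.cov(data, rowvar=False)  # rows are samples, columns are features
    return mean, cov


def sample_gaussian(mean, cov, n, rng):
    """Draw n new points from the fitted distribution."""
    return rng.multivariate_normal(mean, cov, size=n)


rng = np.random.default_rng(0)

# Toy "training data": 5000 two-dimensional points (an assumption for the demo).
train = rng.normal(loc=[2.0, -1.0], scale=[0.5, 1.5], size=(5000, 2))

mean, cov = fit_gaussian(train)                   # learn the distribution
new_points = sample_gaussian(mean, cov, 10, rng)  # generate new data points

print("estimated mean:", mean)
print("generated sample shape:", new_points.shape)
```

A discriminative model trained on the same data would instead learn a mapping from inputs to labels and could not produce new points like `new_points`.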

Diffusion Models: Controlled Noise and Denoising Process

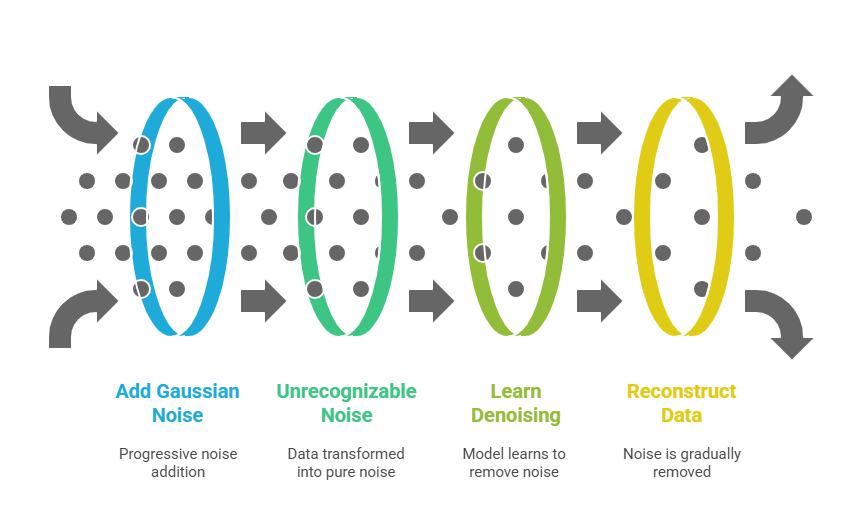

Diffusion models generate data by simulating a process of gradual corruption and recovery:

1. Forward Process (Diffusion): Starting from a real data sample (like an image), the model progressively adds small amounts of Gaussian noise over many steps until the data becomes unrecognizable noise.

2. Reverse Process (Denoising): The model learns to reverse this noisy transformation step-by-step, reconstructing the data by removing noise progressively.

3. Sampling: To generate new content, the model starts from pure noise and applies the learned denoising steps in sequence, producing a new, coherent sample from the learned data distribution.
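The forward (noising) process described above can be sketched in a few lines. This is an illustrative sketch, not the implementation used by any particular model: it assumes a linear noise schedule with 1000 steps and the commonly used closed form `x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps`, and the names `make_schedule` and `forward_noise` are hypothetical. The reverse process is not shown, since it requires a trained neural network; in a common training setup that network is trained to predict the noise `eps` that was added.

```python
import numpy as np


def make_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule (assumed values; other schedules are possible)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alpha_bars = np.cumprod(1.0 - betas)  # fraction of signal left after t steps
    return betas, alpha_bars


def forward_noise(x0, t, alpha_bars, rng):
    """Jump directly from clean data x0 to the noisy sample at step t."""
    eps = rng.standard_normal(x0.shape)  # Gaussian noise
    x_t = np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps
    return x_t, eps


rng = np.random.default_rng(0)
betas, alpha_bars = make_schedule()

# Stand-in for an image: an 8x8 array with values in [-1, 1].
x0 = rng.uniform(-1.0, 1.0, size=(8, 8))

for t in (0, 499, 999):
    x_t, eps = forward_noise(x0, t, alpha_bars, rng)
    # The share of the original signal shrinks toward zero as t grows.
    print(f"step {t}: signal weight = {np.sqrt(alpha_bars[t]):.4f}")
```

At the first step the sample is almost identical to `x0`; by the last step the signal weight is close to zero, so the sample is essentially pure Gaussian noise.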

Diffusion models have garnered popularity for generating high-quality, photorealistic images, exemplified by models like Stable Diffusion and DALL·E 3, which excel in text-to-image generation tasks. They also extend to applications like inpainting, style transfer, and conditional content generation.

Generative Transformers: Attention-Based Sequence Generation

Generative transformers are deep learning models based on self-attention mechanisms, capable of understanding and producing sequential data efficiently:

1. Built on encoder-decoder or decoder-only architectures, transformers process input by dynamically focusing attention on relevant context across the entire sequence.

2. This self-attention mechanism enables transformers to capture complex dependencies and generate coherent, contextually appropriate text, code, or other sequential data.

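3. Models such as GPT (Generative Pre-trained Transformer) exemplify generative transformers: decoder-only models that produce output one token at a time. BERT (Bidirectional Encoder Representations from Transformers), by contrast, is an encoder-only transformer designed for understanding tasks such as classification rather than open-ended generation.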

These models are pre-trained on massive datasets and fine-tuned for specific tasks, enabling versatility in language generation, summarization, translation, question answering, and more.
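The self-attention mechanism at the core of these models can be sketched as follows. This is a minimal single-head version in NumPy under stated assumptions: the projection matrices `w_q`, `w_k`, and `w_v` are random stand-ins for learned weights, and the function name `causal_self_attention` is hypothetical. The causal mask is what decoder-only generative models use so that each position can attend only to itself and earlier positions.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def causal_self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product attention with a causal mask.

    x has shape (seq_len, d_model); each w_* has shape (d_model, d_head).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    d_head = q.shape[-1]
    scores = q @ k.T / np.sqrt(d_head)  # pairwise similarity between positions

    # Mask out future positions (strictly above the diagonal).
    seq_len = x.shape[0]
    future = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)

    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ v, weights


rng = np.random.default_rng(0)
seq_len, d_model, d_head = 5, 16, 8

x = rng.standard_normal((seq_len, d_model))    # stand-in token embeddings
w_q = rng.standard_normal((d_model, d_head))   # random, not learned
w_k = rng.standard_normal((d_model, d_head))
w_v = rng.standard_normal((d_model, d_head))

out, weights = causal_self_attention(x, w_q, w_k, w_v)
print("output shape:", out.shape)
print("attention weights (lower-triangular):")
print(np.round(weights, 2))
```

Each row of `weights` shows how much one position draws on the positions before it. Production models stack many such heads and layers and learn the projection matrices during pre-training.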

Impact and Future Directions

These generative AI technologies underpin many cutting-edge applications, from chatbots and creative assistants to automated content production and scientific research. Research continues to blend these paradigms with other models like GANs and VAEs to enhance generation quality, diversity, and control.
