Bias in machine learning arises when model outputs systematically favor or disadvantage particular individuals or groups due to imbalanced data, flawed assumptions, or improper feature engineering.

As ML systems are increasingly embedded in real-time decision pipelines such as fraud detection, medical prioritization, job recommendations, and academic assessments, fairness becomes a crucial requirement rather than an optional enhancement.

When a model amplifies historical inequalities or misinterprets underrepresented patterns, the consequences can be severe and long-lasting.

Fairness in ML focuses on designing systems that provide equitable treatment across diverse populations regardless of demographic, socio-economic, or behavioral differences.

This includes careful examination of how data is collected, how features are constructed, and how predictions are evaluated across different subgroups.

Ensuring fairness requires continuous monitoring throughout the lifecycle—data preparation, training, validation, deployment, and updating.

Importance

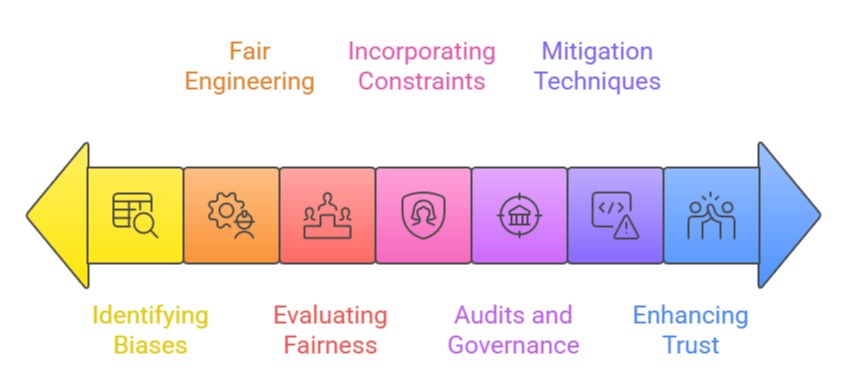

1. Identifying Hidden Biases in Training Data

Bias frequently originates from the data supplied to the model. Historical datasets may reflect existing social inequalities, underrepresentation, or skewed distributions that distort how an algorithm learns patterns.

Detecting these hidden biases requires detailed exploratory analysis, subgroup evaluations, and understanding the context in which the data was collected.

Without this awareness, models may inadvertently mirror discriminatory behaviors—such as lower approval rates for certain demographics—simply because those groups were misrepresented in the data. Early identification ensures that bias is corrected before it is reinforced at scale.

2. Fair Feature Engineering and Representation Learning

Features can introduce unfairness when they correlate with sensitive attributes or encode indirect signals about protected groups.

Even neutral-looking variables, like ZIP codes or spending patterns, can become proxies for income level or ethnicity.

Ethical feature engineering involves assessing whether attributes introduce disproportionate advantages or disadvantages and removing or transforming variables that carry unfair influence.

Techniques such as fairness-aware embeddings or adversarial debiasing help models learn representations that reduce the leakage of sensitive information, resulting in more equitable performance across categories.

3. Evaluating Fairness Across Diverse Subgroups

A model may perform well on average yet behave unfairly for specific subgroups.

This makes fairness evaluation essential during validation and post-deployment monitoring.

Approaches include comparing false-positive rates, error disparities, or prediction thresholds across demographic segments.

Developers should analyze performance metrics disaggregated by group to detect imbalances that would otherwise remain unseen.

Continuous tracking of subgroup-level results ensures that fairness is not confined to initial development phases but remains an ongoing commitment throughout the model lifecycle.

4. Incorporating Fairness Constraints During Model Training

Modern ML workflows allow fairness constraints to be built directly into optimization processes.

These constraints help ensure that accuracy does not come at the cost of discriminatory outcomes.

Methods such as reweighting samples, modifying loss functions, or balancing positive/negative labels during training help reduce structural imbalances.

Such interventions ensure that models treat individuals consistently, regardless of background characteristics.

Integrating fairness at the algorithmic core allows developers to prevent harmful predictions rather than correcting them reactively.

5. Regular Audits and Governance for Ethical Assurance

Fairness is not a one-time check; it demands structured oversight. Ethical governance frameworks support periodic auditing of datasets, models, and prediction pipelines.

These audits identify drift, newly emerging biases, or patterns that may negatively influence stakeholders over time.

Establishing internal review boards, documenting model decisions, and maintaining transparent logs help organizations uphold accountability.

A consistent auditing culture not only enhances fairness but also prepares enterprises to comply with global AI regulations and industry standards.

6. Practical Bias Mitigation Techniques and Tools

Various technical approaches—such as resampling, fairness-aware preprocessing, adversarial debiasing, and balanced thresholding—help mitigate bias in practice.

Tools like IBM AI Fairness 360, Fairlearn, or Google’s What-If Tool assist practitioners in examining bias, generating fairness reports, and experimenting with corrective strategies.

These solutions offer structured methods for comparing fairness metrics and understanding the implications of different mitigation choices. Leveraging such tools empowers teams to adopt fairness methodologies without compromising model performance.

7. Enhancing Public Trust and Organizational Credibility

Fairness plays a major role in building trust among end users, regulators, and clients.

When ML systems demonstrate equal treatment, individuals are more likely to accept algorithmic decisions, especially in contexts that directly impact their opportunities.

Organizations that prioritize fairness reduce reputational risks, avoid legal challenges, and position themselves as responsible AI leaders.

Maintaining fairness across workflows elevates the overall credibility of data-driven solutions and promotes long-term relationships with stakeholders.

Challenges in Ensuring Bias and Fairness in Machine Learning Models

1. Detecting Bias in Complex and High-Dimensional Data

One major challenge is that modern datasets contain thousands of features, making it difficult to identify which variables might be contributing to unfair outcomes.

Bias often hides within interactions between attributes, not just the attributes themselves.

As models grow more complex, uncovering subtle correlations or proxy variables becomes increasingly demanding.

Developers must employ specialized statistical tests and fairness toolkits, yet even these may miss deeper structural inequalities embedded in data.

2. Limited Availability of Representative and Balanced Data

Fairness becomes difficult when certain groups are underrepresented in the dataset.

Models trained on skewed distributions fail to learn accurate patterns for minority segments, resulting in unstable or discriminatory predictions.

Collecting balanced, high-quality data is often expensive, time-consuming, or legally restricted.

This lack of representativeness forces practitioners to rely on imputation, augmentation, or synthetic data, each of which introduces its own risks.

3. Trade-Off Between Fairness and Predictive Accuracy

Improving fairness sometimes requires modifying loss functions, reweighting samples, or removing influential features—all of which may slightly reduce accuracy.

Teams must negotiate between building a highly accurate model versus an ethically reliable one.

Organizations with performance-driven deadlines may deprioritize fairness in favor of short-term gains.

Balancing fairness objectives with business expectations becomes a strategic challenge that demands thoughtful alignment across stakeholders.

4. Ambiguity in Defining What “Fairness” Should Mean

Fairness is not one rigid concept—various definitions exist, such as equal opportunity, demographic parity, predictive equality, and others.

These definitions often conflict, making it impossible to satisfy every criterion simultaneously.

Choosing the right definition depends on the context, legal constraints, and societal values.

This ambiguity makes it challenging to establish fairness goals that satisfy both technical feasibility and ethical expectations.

5. Difficulty in Handling Proxy Variables for Sensitive Attributes

Even when sensitive attributes such as race or gender are removed, models often infer similar information from correlated variables like language patterns, ZIP codes, or device types.

These proxy signals make it difficult to eliminate discrimination completely.

Developers must analyze which features inadvertently encode sensitive information and apply corrective transformations. Detecting proxies becomes tougher as datasets grow in size and complexity.

6. Monitoring Model Fairness After Deployment

Fairness isn’t static—models drift over time as data changes, user behavior evolves, or external conditions shift.

A model that is fair today may become biased in six months without continuous monitoring.

Building real-time fairness dashboards, performance alerts, and review pipelines requires significant engineering resources.

Many organizations lack the infrastructure to track fairness reliably in production environments.

7. Legal, Ethical, and Compliance Constraints

Different regions follow different regulations related to fairness, transparency, and discrimination prevention.

Navigating these laws—such as GDPR, CCPA, or emerging AI safety regulations—can be challenging, especially for global applications.

Teams must balance regulatory requirements with technical capabilities while ensuring documentation and transparency.

Meeting these constraints adds complexity, cost, and administrative effort to the ML lifecycle.

8. Difficulty in Communicating Fairness Trade-Offs to Non-Technical Stakeholders

Business leaders, policymakers, and clients may not fully understand fairness metrics or the consequences of biased predictions.

Translating technical fairness findings into clear language is essential but challenging.

Miscommunication can lead to unrealistic expectations, misinterpretation of risks, or resistance to fairness interventions.

Building awareness across teams is necessary to support ethical ML, yet requires deliberate education and collaboration.