In real-world machine learning and data science projects, datasets are rarely perfect. Missing values and outliers naturally appear due to human errors, system faults, incomplete records, or unexpected behavior in the environment generating the data.

If ignored, these issues distort patterns and weaken a model’s ability to learn meaningful relationships. Handling missing data involves strategies such as deletion, imputation, or predictive filling, depending on the severity and context.

Outlier treatment focuses on understanding whether extreme values represent noise or meaningful rare events before applying transformations, trimming, or robust statistical methods.

Both tasks are essential during preprocessing because they directly influence model reliability, accuracy, and fairness.

If not addressed carefully, machine learning algorithms may produce biased outputs, unstable predictions, or erroneous insights.

Proper handling requires a balance between statistical reasoning and domain understanding, ensuring that data remains consistent without losing valuable information.

Modern workflows emphasize using automated techniques but still rely on analyst judgment to ensure that corrections reflect the true structure of the data.

Importance Handling Missing Data and Outliers

The following points explain the importance of managing missing values and outliers in data preprocessing.

1. Missing Data Treatment Preserves Model Integrity

Dealing with incomplete entries prevents algorithms from misinterpreting gaps as meaningful signals.

When missing values remain unchecked, models may skip important observations, leading to biased parameter estimates or unstable gradient updates during training.

Proper imputation ensures data continuity by filling gaps using mean, median, model-based estimates, or nearest-neighbor approaches.

These methods help maintain feature distributions without significantly distorting the original patterns. In high-stakes applications like healthcare, finance, and forecasting, preserving integrity is crucial because even small inconsistencies can propagate into large predictive errors.

By addressing missingness early, practitioners ensure a consistent learning environment for the model. This strengthens both interpretability and generalizability.

2. Outlier Handling Improves Model Stability and Predictive Power

Outliers can drastically influence statistical measures and cause sensitive models—such as linear regression or k-means clustering—to behave unpredictably.

Managing them prevents skewed parameter estimates, unstable loss functions, and poor convergence.

Techniques like winsorization, log transformations, Z-score filtering, and IQR-based trimming help reduce extreme distortions while safeguarding the underlying relationships.

Outlier analysis also reveals whether unusual points signal anomalies worth investigating rather than simply noise.

This dual perspective—correction vs. insight—supports more accurate, robust modeling. Stable datasets lead to smoother optimization paths and higher-quality predictions across different machine learning contexts.

3. Ensures Fair and Unbiased Results Across Different Data Segments

Missing data and outliers often cluster within specific groups—age ranges, geographic locations, transaction types, or device categories.

If treated carelessly, imputation or removal may disproportionately affect some subpopulations, introducing unintended biases.

Handling these issues systematically ensures each segment retains equitable representation, which is crucial for fairness-sensitive domains.

Maintaining balanced distributions supports better learning, prevents overfitting to “cleaner” groups, and helps models perform consistently across diverse cases.

As organizations increasingly rely on automated decision systems, fairness in preprocessing becomes a central requirement rather than an optional step.

4. Supports More Reliable Feature Engineering

Feature engineering becomes far more effective when missing values and outliers are already addressed.

Clean data enables the creation of transformed variables—such as ratios, aggregates, or temporal features—without introducing artificial spikes or gaps.

When values are missing, engineered features may contain undefined operations like division by zero or unintended null propagation.

Outliers can also distort scaling techniques such as normalization or standardization, causing engineered features to misrepresent original behavior.

By resolving these issues early, engineers can design more expressive variables that better capture real-world patterns. Ultimately, the quality of features improves significantly when preprocessing is thorough.

5. Enhances Model Interpretability and Feature Importance Accuracy

Missing data and extreme values often confuse interpretation tools such as SHAP, LIME, or permutation importance.

If rare anomalies dominate certain regions of the feature space, the model may exaggerate the impact of those variables, leading analysts to misjudge their importance. Cleaning the dataset ensures interpretability reflects true relationships instead of artifacts.

This allows stakeholders to clearly understand why predictions were made, reinforcing trust and transparency.

With well-maintained data, explanations become more stable, consistent, and aligned with domain expectations. Accurate interpretability is essential in fields requiring audits or compliance validation.

6. Strengthens Cross-Dataset Consistency in Large Projects

In large-scale or multi-source datasets, different data sources may contain varied missingness patterns or inconsistent outlier definitions.

Addressing these issues helps harmonize datasets so that merged or integrated data maintains uniform statistical structure.

This consistency becomes crucial when training models across multiple markets, regions, or user groups.

Without alignment, a model may learn conflicting patterns or overfit to one dataset’s quirks.

Proper handling supports smoother integration workflows, reduces preprocessing conflicts, and creates a unified analytical foundation.

As organizations rely on increasingly large datasets, this consistency elevates overall performance and scalability.

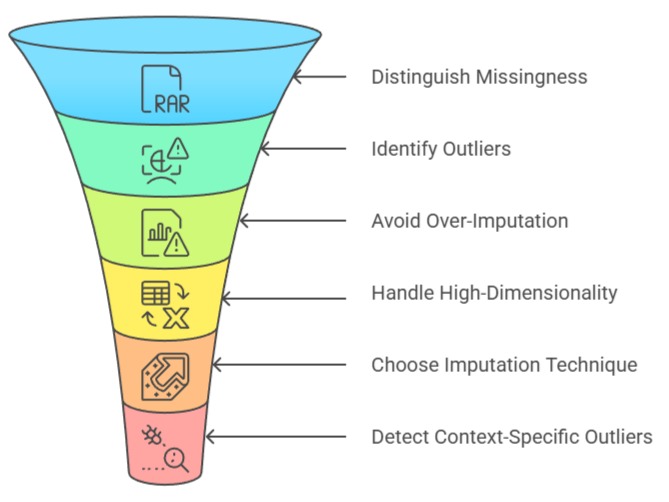

Challenges

1. Distinguishing Missingness Mechanisms (MCAR, MAR, MNAR)

Determining why values are missing is often difficult and requires careful statistical assessment.

Data may be missing completely at random, dependent on other variables, or linked to the missing value itself.

Misidentifying the mechanism can lead to incorrect imputation strategies that distort relationships or create misleading trends.

For Example, filling income-related missing data with averages may hide important socioeconomic differences.

Understanding these mechanisms takes time, domain expertise, and exploratory analysis. Without correct diagnosis, any downstream preprocessing steps may compromise the model.

2. Identifying True Outliers vs. Meaningful Rare Events

A major challenge involves deciding whether extreme points represent noise or valuable indicators of rare but important behavior.

For instance, unusually high transaction amounts might signal fraud rather than error.

Automatically removing such points may eliminate crucial insights, especially in anomaly detection tasks.

Analysts must balance statistical thresholds with contextual interpretation to avoid throwing away useful information.

This decision-making process becomes more complex in high-dimensional datasets where multivariate outliers are harder to detect visually or manually. Incorrect handling may weaken the model or misrepresent patterns.

3. Avoiding Over-Imputation and Distribution Distortion

While imputation is helpful, excessive filling can make data appear artificially smooth and remove natural variability.

Algorithms may then learn relationships that do not exist in real scenarios.

Over-imputation also risks copying information from other features, inflating correlations or diminishing model contrast.

Maintaining a realistic distribution is challenging, especially when missingness is extensive.

The goal is to restore usability without fabricating patterns that mislead the learning process. Achieving this balance requires iterative checking, validation, and sometimes multiple imputation techniques.

4. Handling High-Dimensional Data Where Outliers Are Hidden

High-dimensional datasets make outlier detection significantly harder because unusual points may not appear extreme in any single dimension but behave differently in combined feature space.

Traditional methods like Z-scores or boxplots fail to capture these multivariate patterns.

Techniques such as Mahalanobis distance or isolation forests become necessary, but they require more computation and careful parameter tuning.

Analysts must also interpret complex interactions to determine whether these multivariate outliers have meaningful context.

This complexity makes the process resource-intensive and more prone to misclassification. Managing such data requires advanced statistical and algorithmic insight.

5. Choosing the Right Imputation Technique Without Overfitting

Imputation methods must balance simplicity and predictive power. Advanced approaches—like regression imputation, multiple imputation, or model-based filling—can unintentionally inject hidden leakage, causing models to perform unrealistically well during training.

Simpler approaches may preserve fairness but lose detail. Selecting the right strategy becomes challenging because the best method depends on dataset size, feature relationships, and domain-specific implications.

Incorrect choices may inflate performance metrics while reducing real-world generalizability. Analysts must test multiple approaches and validate them carefully to avoid overfitting through imputation.

6. Detecting Context-Specific Outliers That Follow Domain Logic

Some values appear extreme statistically but make perfect sense in domain applications.

For example, a sudden spike in website traffic during a festival or a rare disease case count in epidemiology may be expected.

Treating these as statistical outliers and removing them can erase valuable domain patterns. Identifying contextual outliers requires subject matter expertise and continuous communication with domain teams.

Purely algorithmic detection is insufficient because it lacks situational awareness. Balancing statistical rules with real-world context becomes a persistent challenge, especially in dynamic environments.