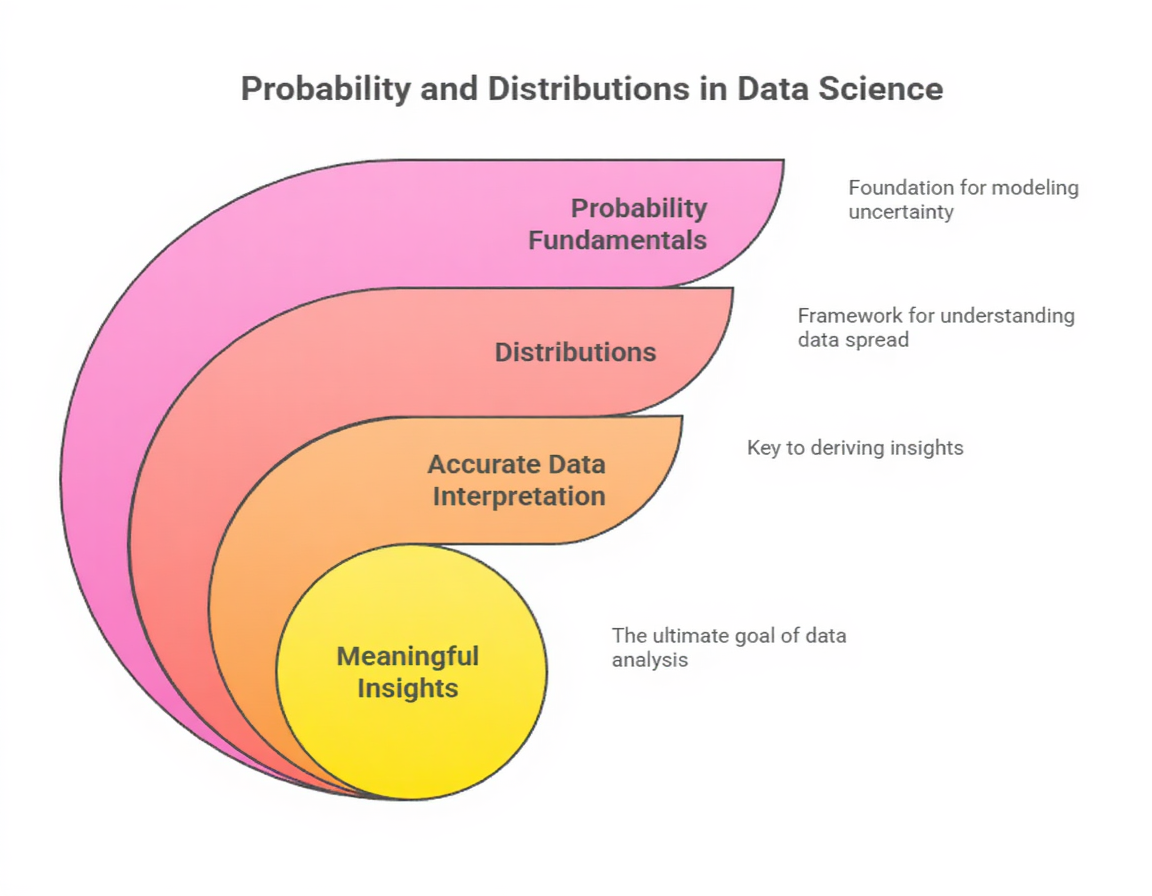

Probability provides the mathematical framework for understanding uncertainty, randomness, and the likelihood of events—core ideas that appear constantly in machine learning.

Every time a probabilistic model makes a prediction, it is in effect estimating the probability that an input belongs to a particular class or follows a specific pattern.

Fundamental concepts such as random variables, probability rules, sample spaces, conditional probability, and independence form the basis for understanding how algorithms behave under different data conditions.

Distributions—such as Normal, Bernoulli, Binomial, Poisson, and Exponential—describe how values in a dataset are spread.

These distributions help data scientists decide which algorithms are appropriate, how to simulate data, and how to interpret model outputs.

Whether it’s noise modeling, uncertainty estimation, or Bayesian reasoning, probability acts as the backbone of intelligent decision-making in data science.

Examples

1. Conditional Probability: Used in Naive Bayes to compute the probability that a text belongs to a category, given the words it contains.

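2. Normal Distribution: Several ML methods rest on Gaussian assumptions. For example, inference in linear regression assumes normally distributed residuals (not features), and Gaussian Naive Bayes models each feature as normal within a class.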

3. Bernoulli Distribution: Used for binary outcomes like spam vs non-spam or fraud vs normal.

4. Poisson Distribution: Models the number of events that occur in a fixed interval, such as service requests per hour.

5. Uniform Distribution: Commonly used for random weight initialization in neural networks.
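
As a rough illustration of the distributions listed above, the sketch below draws samples using only Python's standard library and compares sample means with the theoretical ones. The parameter values (p = 0.3, a Poisson rate of 4, and so on) and the helpers `sample_bernoulli` and `sample_poisson` are hypothetical choices for this example, not values from any particular dataset.

```python
import math
import random
import statistics

rng = random.Random(0)  # seeded so the run is repeatable

def sample_bernoulli(p):
    """Return 1 with probability p, else 0."""
    return 1 if rng.random() < p else 0

def sample_poisson(lam):
    """Draw one Poisson(lam) count using Knuth's multiplication method."""
    threshold = math.exp(-lam)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count

n = 10_000
normal_draws = [rng.gauss(0.0, 1.0) for _ in range(n)]
bernoulli_draws = [sample_bernoulli(0.3) for _ in range(n)]
poisson_draws = [sample_poisson(4.0) for _ in range(n)]
uniform_draws = [rng.uniform(-0.5, 0.5) for _ in range(n)]

# Sample means should land close to the theoretical means: 0, 0.3, 4, 0.
for name, draws in [("normal", normal_draws), ("bernoulli", bernoulli_draws),
                    ("poisson", poisson_draws), ("uniform", uniform_draws)]:
    print(f"{name:9s} mean = {statistics.fmean(draws):.3f}")
```

In practice a numerical library would usually handle the sampling; the hand-written Poisson sampler is here only to keep the example dependency-free.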

Importance

The following points outline why probability is critical for uncertainty modeling and inference.

1. Quantifies Uncertainty in Predictions

Probability enables data scientists to quantify the uncertainty present in predictions, inputs, and outcomes.

Models rarely give definitive answers; instead, they output likelihoods that events will occur.

Understanding probability helps interpret these likelihoods correctly, preventing misinterpretation of model outputs.

Many ML decisions, such as classification thresholds or risk assessments, depend on how uncertainty is measured.

Probability also supports robust decision-making when data is incomplete or noisy. Without this framework, model behavior would appear unpredictable and difficult to justify.
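
To make the point about classification thresholds concrete, here is a minimal sketch. It assumes a model that emits a raw score, converted to a probability with a sigmoid; the scores and the 0.8 threshold are hypothetical values chosen for illustration.

```python
import math

def sigmoid(z):
    """Map a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def classify(prob, threshold=0.5):
    """Turn a predicted probability into a hard label."""
    return 1 if prob >= threshold else 0

scores = [-2.0, -0.4, 0.3, 1.5]  # hypothetical raw model scores
probs = [sigmoid(z) for z in scores]

default_labels = [classify(p) for p in probs]                   # threshold 0.5
cautious_labels = [classify(p, threshold=0.8) for p in probs]   # flag only confident cases

for z, p, d, c in zip(scores, probs, default_labels, cautious_labels):
    print(f"score={z:+.1f}  prob={p:.3f}  label@0.5={d}  label@0.8={c}")
```

The same probabilities yield different decisions depending on the threshold, which is why the threshold should reflect the relative cost of false positives and false negatives.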

2. Forms the Basis of Many Core Machine Learning Algorithms

Algorithms like Naive Bayes, Hidden Markov Models, Bayesian Networks, GMMs, and probabilistic classifiers rely heavily on probability theory.

These models calculate likelihoods, posterior probabilities, or conditional relationships to make predictions.

Even models that are not explicitly probabilistic often rely on randomness during training, for example through stochastic gradient descent or random weight initialization.

Understanding probability allows developers to fine-tune these algorithms more effectively.

It also clarifies why certain methods perform better in particular data conditions. Thus, probability is deeply embedded in ML architecture and algorithmic thinking.
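
As one example of a probability-based algorithm, the following is a minimal sketch of a multinomial Naive Bayes text classifier with add-one (Laplace) smoothing. The toy corpus, the labels, and the function names `train` and `posterior` are assumptions made for this illustration; a production system would normally use a tested library implementation.

```python
import math
from collections import Counter

# Hypothetical toy corpus: (tokens, label)
training_data = [
    (["win", "money", "now"], "spam"),
    (["free", "money", "offer"], "spam"),
    (["meeting", "at", "noon"], "ham"),
    (["project", "meeting", "notes"], "ham"),
]

def train(data):
    """Count labels and per-label word occurrences."""
    label_counts = Counter()
    word_counts = {}
    vocab = set()
    for tokens, label in data:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(tokens)
        vocab.update(tokens)
    return label_counts, word_counts, vocab

def posterior(tokens, label_counts, word_counts, vocab):
    """Return P(label | tokens) for every label, using Laplace smoothing."""
    total_docs = sum(label_counts.values())
    log_scores = {}
    for label, n_docs in label_counts.items():
        total_words = sum(word_counts[label].values())
        log_score = math.log(n_docs / total_docs)  # log prior
        for token in tokens:
            # Add-one smoothing keeps unseen words from zeroing the product.
            likelihood = (word_counts[label][token] + 1) / (total_words + len(vocab))
            log_score += math.log(likelihood)
        log_scores[label] = log_score
    # Normalize in log space (subtract the max for numerical stability).
    max_log = max(log_scores.values())
    unnormalized = {k: math.exp(v - max_log) for k, v in log_scores.items()}
    z = sum(unnormalized.values())
    return {k: v / z for k, v in unnormalized.items()}

model = train(training_data)
result = posterior(["free", "money"], *model)
print(result)
```

The classifier multiplies a prior by per-word likelihoods and normalizes, which is Bayes' rule under the "naive" assumption that words are conditionally independent given the label.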

3. Helps Interpret and Choose Appropriate Distributions for Features

Real-world data is rarely arbitrary; although individual values are random, they often follow recognizable statistical patterns.

Knowing distributions allows analysts to recognize whether values follow a bell curve, show skewness, or represent rare events.

This understanding guides preprocessing choices such as normalization, log transformation, or standardization.

Certain algorithms expect particular distributions, and mismatched assumptions can impair accuracy.

Identifying the right distribution also helps simulate synthetic data for testing or modeling rare conditions. Overall, distribution knowledge enhances data understanding and model suitability.
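
The effect of a log transformation on a skewed feature can be sketched as follows. The data here is synthetic (log-normal draws standing in for something like incomes or session durations), and the `skewness` helper is a simple population-style estimate written for this example.

```python
import math
import random
import statistics

def skewness(values):
    """Skewness as the mean cubed z-score (population form)."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return statistics.fmean([((v - mean) / std) ** 3 for v in values])

rng = random.Random(42)
# Hypothetical right-skewed feature, e.g. incomes or session durations.
raw = [rng.lognormvariate(0.0, 1.0) for _ in range(5_000)]
logged = [math.log(v) for v in raw]

skew_raw = skewness(raw)
skew_logged = skewness(logged)
print(f"skewness before log transform: {skew_raw:.2f}")
print(f"skewness after log transform:  {skew_logged:.2f}")
```

A skewness near zero after the transform suggests the feature is now roughly symmetric, which tends to suit methods that assume bell-shaped inputs or residuals.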

4. Supports Reliable Decision-Making Through Conditional Relationships

Conditional probability describes how the likelihood of one event changes given that another event has occurred. In machine learning, this concept appears in feature relevance assessment, causal analysis, and probability-based classifiers.

Understanding dependencies between variables helps avoid incorrect assumptions, such as treating correlated features as independent.

Conditional reasoning also aids anomaly detection, as certain behaviors only appear unusual under specific contexts.

By analyzing these conditional patterns, models become more robust and context-aware.
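
The definition P(A | B) = P(A and B) / P(B) can be computed directly from counts. The sketch below uses a made-up transaction log with two hypothetical flags, `is_foreign` and `is_fraud`, and also checks whether the two events look independent.

```python
# Hypothetical transaction log: (is_foreign, is_fraud)
records = (
    [(True, True)] * 30 + [(True, False)] * 170 +
    [(False, True)] * 20 + [(False, False)] * 780
)

n = len(records)
p_fraud = sum(fraud for _, fraud in records) / n
p_foreign = sum(foreign for foreign, _ in records) / n
p_both = sum(foreign and fraud for foreign, fraud in records) / n

# Conditional probability: P(fraud | foreign) = P(fraud and foreign) / P(foreign)
p_fraud_given_foreign = p_both / p_foreign

# Under independence, P(fraud and foreign) would equal P(fraud) * P(foreign).
independent = abs(p_both - p_fraud * p_foreign) < 1e-9

print(f"P(fraud)           = {p_fraud:.3f}")
print(f"P(fraud | foreign) = {p_fraud_given_foreign:.3f}")
print(f"independent?         {independent}")
```

In this toy data the fraud rate among foreign transactions is three times the overall rate, so treating the two flags as independent would discard useful context.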

5. Enables Better Generalization and Error Analysis

Probability distributions help estimate how well a model will perform on unseen data. Concepts such as sampling distributions, variance, and likelihood functions allow analysts to assess model uncertainty, bias, and overfitting tendencies.

They also enable practitioners to compute error probabilities, confidence levels, and prediction intervals.

These measures provide a more realistic understanding of model reliability instead of relying solely on point estimates.

By grounding evaluation in probability, generalization becomes measurable rather than speculative.
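
One simple way to attach uncertainty to a reported accuracy is a normal-approximation (Wald) confidence interval. This is a sketch under the assumption that test examples are independent; the counts are hypothetical, and other interval methods (such as Wilson or bootstrap intervals) may behave better for small samples or accuracies near 0 or 1.

```python
import math

def accuracy_interval(n_correct, n_total, z=1.96):
    """Normal-approximation (Wald) interval for accuracy; z=1.96 gives ~95%."""
    acc = n_correct / n_total
    half_width = z * math.sqrt(acc * (1 - acc) / n_total)
    return acc, max(0.0, acc - half_width), min(1.0, acc + half_width)

# Hypothetical test results: same accuracy, different test-set sizes.
small = accuracy_interval(90, 100)
large = accuracy_interval(9_000, 10_000)
print("n=100:    acc=%.3f, interval=(%.3f, %.3f)" % small)
print("n=10000:  acc=%.3f, interval=(%.3f, %.3f)" % large)
```

Both runs report 90% accuracy, but the interval for the larger test set is ten times narrower, which is the kind of difference a point estimate alone hides.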

6. Provides Tools for Simulation and Synthetic Data Generation

Random sampling from known distributions enables simulation of various scenarios, especially when real-world data is limited or costly to collect.

Techniques such as Monte Carlo simulation rely on repeated random sampling to estimate outcomes under uncertain conditions.

In ML, synthetic sampling supports class balancing (SMOTE), augmentation, and stress-testing models against unusual patterns.

These simulations help evaluate model robustness and behavior in extreme conditions. With probability, analysts can mimic real-world events without needing massive datasets.
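
A minimal Monte Carlo sketch: estimating the probability that two fair dice sum to at least 10, a case where the exact answer (6/36) is known and can be used to check the simulation. The function name `estimate_probability` and the trial count are arbitrary choices for this example.

```python
import random

def estimate_probability(n_trials, seed=0):
    """Estimate P(sum of two fair dice >= 10) by repeated random sampling."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_trials):
        total = rng.randint(1, 6) + rng.randint(1, 6)
        if total >= 10:
            hits += 1
    return hits / n_trials

exact = 6 / 36  # outcomes (4,6), (5,5), (5,6), (6,4), (6,5), (6,6)
estimate = estimate_probability(100_000)
print(f"exact = {exact:.4f}, Monte Carlo estimate = {estimate:.4f}")
```

The same pattern (simulate, count, divide) carries over to problems where no closed-form answer exists, with the error shrinking roughly in proportion to one over the square root of the number of trials.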

7. Essential for Bayesian Thinking and Parameter Updating

Bayesian statistics uses probability to update beliefs as new information becomes available.

This idea powers algorithms such as Bayesian Optimization, Bayesian Neural Networks, and Variational Inference.

Bayesian methods are especially valuable when working with small datasets or when interpretability is crucial.

They allow models to express uncertainty directly in their parameters, as full distributions, rather than only in their final outputs.

Understanding Bayes’ theorem helps practitioners incorporate prior knowledge, refine predictions, and create adaptable models as new data arrives.
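
Bayesian updating can be sketched with the Beta-Bernoulli conjugate pair, where the posterior has a closed form. The prior Beta(2, 8) and the click counts below are hypothetical numbers chosen for illustration.

```python
def update_beta(alpha, beta, successes, failures):
    """Conjugate update: Beta prior + Bernoulli observations -> Beta posterior."""
    return alpha + successes, beta + failures

def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)

# Hypothetical prior belief about a click-through rate: Beta(2, 8), mean 0.2.
alpha, beta = 2, 8
print(f"prior mean: {beta_mean(alpha, beta):.3f}")

# Data arrives in batches of (clicks, non-clicks); each posterior becomes the next prior.
batches = [(3, 7), (12, 28), (40, 60)]
for clicks, misses in batches:
    alpha, beta = update_beta(alpha, beta, clicks, misses)
    print(f"after {clicks + misses:3d} more observations: mean = {beta_mean(alpha, beta):.3f}")
```

With little data the estimate stays close to the prior; as observations accumulate, the data dominates and the posterior mean moves toward the observed rate.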

8. Helps Understand Noise, Randomness, and Variability in Data

Data often contains randomness from measurement errors, user behavior, environmental conditions, or system limitations.

Probability distributions help model this noise instead of ignoring it. Recognizing how randomness influences predictions leads to better preprocessing, smoothing techniques, and filtering strategies.

It also helps differentiate meaningful patterns from mere fluctuations.

Managing randomness effectively improves stability and performance across nearly all ML algorithms. Probability therefore transforms chaos into mathematical structure.
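
As a small illustration of separating signal from noise, the sketch below adds Gaussian noise to a slow sine wave and then applies a centered moving average. The signal shape, the noise level, and the window size are all assumptions for this example; real data may call for a different filter.

```python
import math
import random

rng = random.Random(7)

# Hypothetical setup: a slow sine wave observed with additive Gaussian noise.
n = 500
signal = [math.sin(2 * math.pi * t / 250) for t in range(n)]
noisy = [s + rng.gauss(0.0, 0.5) for s in signal]

def moving_average(values, window):
    """Centered moving average; the window shrinks near the edges."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed

def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

smoothed = moving_average(noisy, window=11)
print(f"MSE of noisy signal:    {mse(noisy, signal):.4f}")
print(f"MSE of smoothed signal: {mse(smoothed, signal):.4f}")
```

Averaging neighboring points cancels much of the independent noise while leaving the slowly varying signal largely intact, at the cost of some blurring if the window is too wide.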