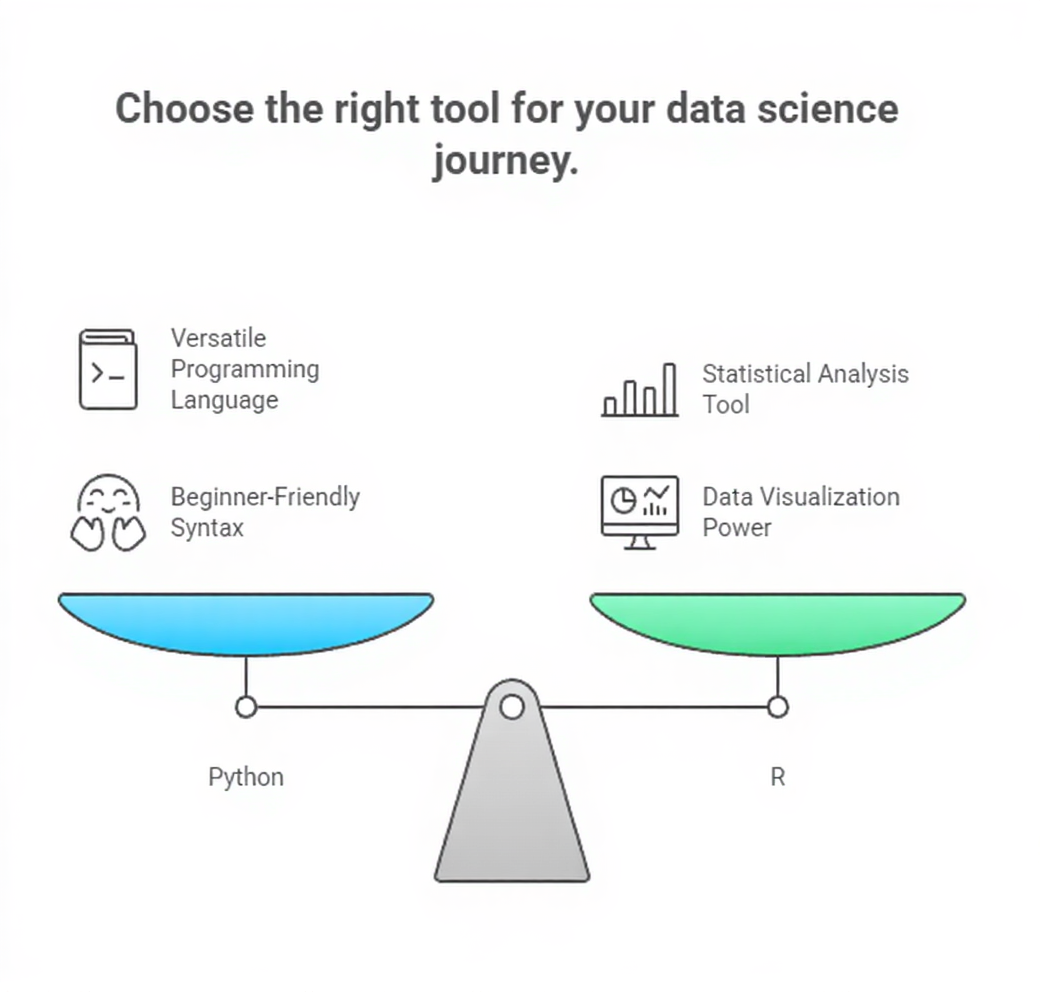

Python and R are two of the most widely used programming languages in data science due to their simplicity, flexibility, and extensive support for analytical workflows.

Python is known for its clear syntax, large ecosystem of machine learning libraries, and suitability for building end-to-end data applications—from data preprocessing to deploying AI systems.

In contrast, R was originally designed for statistical computing, making it exceptionally strong for exploratory data analysis, visualization, and mathematical modeling.

Both languages offer built-in data structures, rich package ecosystems, and a wide range of tools for handling tasks such as data wrangling, numerical computing, feature engineering, and algorithm implementation.

Python relies on libraries such as NumPy, pandas, Matplotlib, scikit-learn, and TensorFlow, while R utilizes packages like dplyr, ggplot2, tidyr, caret, and data.table.

In machine learning and data science, beginners often start with Python because of its general-purpose nature, strong industry adoption, and compatibility with deep learning frameworks.

R, however, remains a preferred choice among statisticians and researchers who need advanced statistical modeling and highly customizable, publication-quality graphics.

Why Python and R Programming Matter

1. Core Syntax and Readability

Python’s beginner-friendly syntax lets learners focus on problem-solving rather than on language complexity.

Its keywords and indentation-based blocks read much like plain English, which makes code for data transformations, conditional statements, and iteration logic easier to follow.

R, while slightly more specialized, offers intuitive operators and functions tailored to statistical tasks, enabling analysts to explore datasets efficiently. Both languages are dynamically typed, so variables do not need explicit type declarations.
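A short Python sketch can make the points about readable iteration, conditional logic, and dynamic typing concrete. The readings list, the -999.0 error marker, and the `clean_mean` helper are hypothetical and exist only for illustration.

```python
# Hypothetical sensor readings: None marks a missing value, -999.0 a logging error
readings = [12.5, None, 15.0, -999.0, 14.2]


def clean_mean(values, sentinel=-999.0):
    """Return the mean of valid readings, skipping None and the sentinel value."""
    valid = [v for v in values if v is not None and v != sentinel]
    if not valid:
        return None  # nothing usable to average
    return sum(valid) / len(valid)


average = clean_mean(readings)

# Dynamic typing: no declarations, and the same name can be rebound to another type
summary = average                    # a float here
summary = f"mean = {summary:.1f}"    # now a str
print(summary)                       # prints "mean = 13.9"
```

The filter condition reads almost like the sentence describing it ("keep values that are not missing and not the error code"). This is the kind of readability the section refers to.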

For data science workflows, this simplicity accelerates experimentation and debugging.

Clear syntax also fosters better collaboration among team members, especially in environments where reproducibility and clarity are crucial.

2. Essential Data Structures for Analysis

Python includes lists, tuples, dictionaries, and sets, which allow flexible representation of data before converting it into analytical formats like pandas DataFrames.

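R provides vectors, matrices, lists, and data frames. Vectors and matrices hold values of a single type and are built for fast numerical computation, while lists and data frames can combine elements or columns of different types for statistical analysis.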

These structures serve as foundational elements for building machine learning datasets, enabling tasks like indexing, slicing, and reshaping.

Their flexibility supports feature engineering, merging datasets, and handling missing values.

Understanding how these structures behave—mutable vs. immutable, homogeneous vs. heterogeneous—is vital for writing efficient analytical code.

Mastering them makes ML workflows considerably simpler to build.
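The following sketch shows how Python's core structures behave and how a list of dictionaries is converted into a pandas DataFrame. It assumes pandas is installed. The records, city names, and sales figures are made-up sample data.

```python
import pandas as pd

# A mutable, heterogeneous list of records (one dict per row); values are hypothetical
records = [
    {"id": 1, "city": "Lagos", "sales": 120.0},
    {"id": 2, "city": "Lima", "sales": None},   # missing value
    {"id": 3, "city": "Oslo", "sales": 95.5},
]
records.append({"id": 4, "city": "Lima", "sales": 80.0})  # lists can grow in place

# Tuples are immutable: item assignment raises TypeError
point = (3.0, 4.0)
try:
    point[0] = 5.0
    tuple_is_mutable = True
except TypeError:
    tuple_is_mutable = False

# Sets keep only unique values
unique_cities = {r["city"] for r in records}

# Convert to an analytical table; None becomes NaN in the numeric "sales" column
df = pd.DataFrame(records)
print(df.dtypes)
print("missing sales:", df["sales"].isna().sum())
```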

3. Working With Data Using Libraries and Packages

Modern data science relies heavily on external libraries that simplify complex tasks.

Python’s pandas provides efficient table operations, time-series manipulation, and chainable transformation pipelines.

R’s dplyr and tidyr streamline filtering, grouping, and cleaning operations through expressive syntax.

These libraries largely replace manual loops with vectorized operations, which typically run faster and produce cleaner code.
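The pandas sketch below contrasts an explicit loop with a vectorized column expression and then chains a filter, a group-by, and an aggregation. It assumes pandas is installed. The table contents and the `units >= 4` threshold are arbitrary. In R, the same filter, group, and summarise chain would typically use dplyr's `filter()`, `group_by()`, and `summarise()`.

```python
import pandas as pd

# Hypothetical sales table
df = pd.DataFrame({
    "region": ["north", "south", "north", "east", "south"],
    "units": [10, 4, 7, 3, 12],
    "price": [2.5, 3.0, 2.5, 4.0, 3.0],
})

# Loop version: what vectorization replaces
revenue_loop = [u * p for u, p in zip(df["units"], df["price"])]

# Vectorized version: one column-wise expression, no explicit Python loop
df["revenue"] = df["units"] * df["price"]

# Filter rows, aggregate per group, and sort the result
summary = (
    df[df["units"] >= 4]
    .groupby("region", as_index=False)["revenue"]
    .sum()
    .sort_values("revenue", ascending=False)
)
print(summary)
```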

In machine learning projects, such tools help transform raw data into structured formats ready for modeling.

Their consistent function names and modular design enhance productivity, especially when handling large datasets or automating routine tasks.

4. Visualization Techniques for Insight Discovery

Python offers Matplotlib, Seaborn, and Plotly for visualizing patterns in data, making it easier to interpret trends and evaluate model output.

R is renowned for ggplot2, which employs a layered approach to building charts, allowing deep customization and aesthetically rich plots.

Visualization plays a critical role in diagnosing issues such as skewed distributions, outliers, or patterns of missing data before training models.

Both languages support interactive graphics that help stakeholders explore insights more intuitively.

These tools also allow exporting charts for reports, dashboards, and presentations within data-driven workflows.
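This Matplotlib sketch illustrates the diagnostic use described above. A histogram reveals skew, a box plot flags outliers, and `savefig` exports the figure. It assumes NumPy and Matplotlib are installed. The log-normal "income" data is synthetic. The figure is rendered to an in-memory PNG here, but passing a file path such as `"report.png"` to `savefig` writes a file instead.

```python
import io

import matplotlib
matplotlib.use("Agg")  # non-interactive backend: render without opening a window
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(seed=0)
incomes = rng.lognormal(mean=10.0, sigma=0.8, size=1_000)  # synthetic, right-skewed

fig, (ax_hist, ax_box) = plt.subplots(1, 2, figsize=(9, 3.5))
ax_hist.hist(incomes, bins=40)
ax_hist.set_title("Distribution (right skew)")
ax_hist.set_xlabel("income")
ax_box.boxplot(incomes)
ax_box.set_title("Points beyond the whiskers")
fig.tight_layout()

# Export for a report or dashboard (here into memory rather than a file)
buffer = io.BytesIO()
fig.savefig(buffer, format="png", dpi=100)
plt.close(fig)
png_bytes = buffer.getvalue()

# A quick numeric check that agrees with the plot: skewed right means mean > median
print(np.mean(incomes) > np.median(incomes))
```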

5. Numerical Computation and Statistical Functions

Python’s NumPy provides vectorized array operations, including the matrix multiplication and linear algebra routines at the core of many machine learning algorithms.

R ships with built-in statistical functions for probability calculations, hypothesis testing, and regression modeling, such as dnorm(), t.test(), and lm().

These capabilities form the computational backbone of supervised and unsupervised learning tasks.

Efficient numerical computation not only improves runtime performance but also helps in model tuning, optimization, and simulation.

Whether dealing with gradient descent, covariance matrices, or performance metrics, these libraries provide well-tested, efficient implementations that keep code short.
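As a concrete case, the NumPy sketch below fits a linear regression in two ways. The first uses the closed-form linear-algebra routine `np.linalg.lstsq`. The second uses vectorized batch gradient descent on mean squared error. It then computes a covariance matrix with `np.cov`. The data is synthetic, and the learning rate and iteration count are illustrative choices, not tuned values.

```python
import numpy as np

rng = np.random.default_rng(seed=42)
n = 200
X = np.column_stack([np.ones(n), rng.normal(size=n)])  # intercept column + one feature
true_w = np.array([2.0, -3.0])
y = X @ true_w + rng.normal(scale=0.1, size=n)         # synthetic targets with noise

# Closed-form least squares via a linear-algebra routine
w_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)

# Batch gradient descent on mean squared error, fully vectorized
w = np.zeros(2)
learning_rate = 0.1
for _ in range(500):
    grad = (2.0 / n) * X.T @ (X @ w - y)  # gradient of MSE with respect to w
    w -= learning_rate * grad

# 2x2 covariance matrix of the feature and the target
cov = np.cov(X[:, 1], y)
print(w_lstsq, w, cov, sep="\n")
```

Both approaches should recover weights close to `true_w`. The closed form is preferable for small linear problems. Gradient descent is shown because the same update rule underlies training for many larger models.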

6. Introduction to Machine Learning Frameworks

Python integrates seamlessly with machine learning libraries such as scikit-learn for classical algorithms and TensorFlow or PyTorch for deep learning.

These frameworks provide prebuilt models, utilities for training pipelines, and tools for evaluating performance.

R offers packages like caret, mlr3, and randomForest, enabling statisticians to build predictive models quickly.

Understanding how these frameworks are structured—estimators, transformers, parameter grids—prepares learners for more advanced topics like hyperparameter tuning or cross-validation.

Their documentation and strong community support make them excellent entry points for model experimentation.
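The scikit-learn sketch below illustrates the estimator, transformer, and parameter-grid structure. `StandardScaler` (a transformer) is chained with `LogisticRegression` (an estimator) in a `Pipeline`, and `GridSearchCV` tunes the regularization strength with 5-fold cross-validation. It assumes scikit-learn is installed. It uses the bundled iris dataset, and the model choice and grid values are arbitrary examples.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Transformer (scaling) followed by an estimator (classifier)
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Grid keys follow the "<step name>__<parameter>" naming convention
param_grid = {"clf__C": [0.1, 1.0, 10.0]}

search = GridSearchCV(pipe, param_grid, cv=5)  # cross-validates each candidate
search.fit(X_train, y_train)

test_accuracy = search.score(X_test, y_test)
print(search.best_params_, round(test_accuracy, 3))
```

Because scaling sits inside the pipeline, it is refit on each training fold during cross-validation, which keeps test-fold statistics from leaking into preprocessing.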

7. Script Organization and Modular Programming

Both languages support writing modular code using scripts, functions, and packages.

Python encourages users to break workflows into reusable components, improving maintainability for large machine learning projects.

R similarly allows creating custom functions and scripts, enabling repeatable analyses and clean project structures.

This modular approach is crucial when building pipelines that involve data loading, cleaning, modeling, and evaluation steps.

Organizing code effectively helps avoid redundancy, reduces errors, and enhances collaboration in team-based data science environments.
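A minimal sketch of the modular style described above splits a toy workflow into load, clean, fit, and evaluate functions that one entry point composes. All function names and the tiny dataset are hypothetical. The "model" is a deliberately simple least-squares line through the origin, standing in for a real estimator.

```python
from statistics import mean


def load_data():
    """Hypothetical loader; a real project might read a CSV file or a database table."""
    return [
        {"x": 1.0, "y": 2.1},
        {"x": 2.0, "y": None},  # missing target
        {"x": 3.0, "y": 6.2},
        {"x": 4.0, "y": 7.9},
    ]


def clean(rows):
    """Drop rows whose target is missing."""
    return [r for r in rows if r["y"] is not None]


def fit(rows):
    """Least-squares slope for y = slope * x: sum(x*y) / sum(x**2)."""
    sxy = sum(r["x"] * r["y"] for r in rows)
    sxx = sum(r["x"] ** 2 for r in rows)
    return sxy / sxx


def evaluate(slope, rows):
    """Mean absolute error of the fitted line."""
    return mean(abs(r["y"] - slope * r["x"]) for r in rows)


def run_pipeline():
    """Compose the steps; each one can be tested or swapped independently."""
    rows = clean(load_data())
    slope = fit(rows)
    return slope, evaluate(slope, rows)


if __name__ == "__main__":
    slope, mae = run_pipeline()
    print(f"slope={slope:.3f}, MAE={mae:.3f}")
```

Keeping each step in its own function means a team member can replace `load_data` or `fit` without touching the rest of the pipeline.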

8. Integration With Notebooks and Development Tools

Python and R are widely supported in interactive notebook environments such as Jupyter Notebook and JupyterLab, and many R users also work in RStudio, which supports R Markdown and Quarto notebooks.

These tools allow embedding code, explanations, charts, and outputs in a single workspace, making them ideal for exploratory analysis.

Notebooks make it easier to track each transformation step, visualize intermediate results, and share insights with others.

Their cell-based execution model lets users rerun a single step and inspect its output without re-executing the entire analysis.

They are extensively used in Kaggle competitions, academic research, and professional machine learning workflows.