Vectors and matrices are the mathematical foundation for how data is represented and manipulated in data science systems.

Learners gain an intuitive understanding of vectors as data points and matrices as structured collections of data used in transformations and computations.

This knowledge is essential for grasping algorithms in machine learning, optimization, and deep learning.

Vector

A vector is an ordered collection of numerical values that represents a point or direction in a multi-dimensional space.

In data science, a vector usually corresponds to a single data instance, where each number in the vector is a feature describing that instance.

For example, a customer can be represented as a vector containing attributes such as age, income, or spending score.

In machine learning models, vectors also represent weights, embeddings, gradients, and probability outputs.

Vectors give algorithms a consistent numerical encoding, so models can compare samples, classify them, and detect patterns efficiently.

Examples

1. A feature vector for a loan applicant: [credit_score = 720, age = 35, income = 60000]

2. A word embedding vector in NLP (e.g., 300-dimensional representation of a word)

3. A gradient vector used during backpropagation in neural networks
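The first example above can be written as a NumPy array. This is a minimal sketch: the feature order and the variable names `feature_names` and `applicant` are assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical feature order; a real pipeline would fix this order once
# and reuse it for every applicant.
feature_names = ["credit_score", "age", "income"]
applicant = np.array([720.0, 35.0, 60000.0])

print(applicant.shape)  # (3,) -> a vector with three features

# Pair each value with the feature it describes.
for name, value in zip(feature_names, applicant):
    print(f"{name}: {value}")
```

The array itself stores only numbers, so the meaning of each position has to be tracked separately, here by the list of names.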

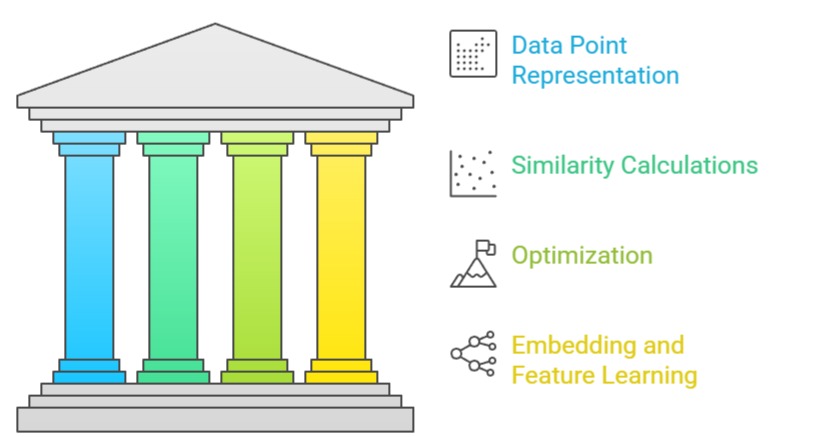

Key Importance Points

1. Foundation for Representing Individual Data Points

Vectors make it possible to represent complex entities—such as images, text snippets, or user profiles—in a structured numerical format. Each value inside a vector corresponds to a measurable property that captures essential characteristics of the instance.

Using vectors allows models to perform mathematical comparisons, calculate distances, and analyze variations across samples.

This representation becomes especially powerful when dealing with high-dimensional datasets, enabling algorithms to operate consistently across thousands of features.

Without a shared vector format, each model would need its own ad hoc way of handling numerical inputs, which would be inefficient and inconsistent.

2. Critical for Similarity, Distance, and Clustering Calculations

Many machine learning methods rely on calculating distances between vectors to group similar items.

Algorithms such as K-Means, K-Nearest Neighbors, and hierarchical clustering depend on vector-based distance metrics like Euclidean or cosine distance.

These metrics help models determine how close or far apart two samples are in feature space.

By using vectors, clustering and retrieval systems can quickly identify related data points—even in extremely large datasets.

This capability is vital in recommendation systems, anomaly detection, and many real-world analytics tasks.
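The two distance metrics named above can be sketched in a few lines of NumPy. The helper names `euclidean_distance` and `cosine_distance` and the sample points are illustrative assumptions, not part of any particular library.

```python
import numpy as np

def euclidean_distance(a, b):
    """Straight-line distance between two vectors."""
    return float(np.linalg.norm(a - b))

def cosine_distance(a, b):
    """1 - cosine similarity; depends on direction, not on length."""
    cos_sim = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
    return float(1.0 - cos_sim)

a = np.array([3.0, 4.0])
b = np.array([6.0, 8.0])    # same direction as a, twice the length
c = np.array([-4.0, 3.0])   # perpendicular to a

print(euclidean_distance(a, b))  # 5.0
print(cosine_distance(a, b))     # ~0.0, because a and b point the same way
print(cosine_distance(a, c))     # ~1.0, because a and c are perpendicular

# Nearest-neighbor lookup: find the row of `points` closest to `query`.
points = np.array([[0.0, 0.0], [3.0, 4.0], [10.0, 10.0]])
query = np.array([2.0, 3.0])
distances = np.linalg.norm(points - query, axis=1)
nearest_index = int(np.argmin(distances))
print(nearest_index)  # 1
```

Euclidean distance treats `a` and `b` as far apart because their lengths differ, while cosine distance treats them as identical in direction. Which metric fits better depends on whether magnitude carries meaning in the data.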

3. Essential for Optimization Through Gradients

During model training, gradients—vectors containing partial derivatives—indicate how each parameter should adjust to reduce error.

These gradient vectors guide optimization algorithms such as Gradient Descent or Adam in updating model weights.

By tracking how loss changes with respect to each parameter, models refine their predictions over time.

This vector-based optimization process is core to how neural networks, logistic regression, and many modern algorithms learn from data.

Understanding gradient vectors allows practitioners to interpret convergence behavior and adjust learning strategies effectively.
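As a rough illustration of the update rule, the sketch below runs plain gradient descent on a mean squared error loss for a tiny linear model. The data, the learning rate of 0.1, and the step count are assumptions picked so the example converges. Adam and other optimizers use the same gradient vector but scale the step differently.

```python
import numpy as np

# Tiny noiseless dataset: y = X @ true_w, so the best weights are known.
X = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [1.0, 1.0]])
true_w = np.array([2.0, -1.0])
y = X @ true_w

def mse_loss(w):
    residual = X @ w - y
    return float(np.mean(residual ** 2))

def mse_gradient(w):
    # Vector of partial derivatives of the loss with respect to each weight.
    residual = X @ w - y
    return 2.0 / X.shape[0] * (X.T @ residual)

w = np.zeros(2)          # initial guess
learning_rate = 0.1      # assumed value; too large a step would diverge
for step in range(500):
    w = w - learning_rate * mse_gradient(w)

print(w)            # close to [2, -1]
print(mse_loss(w))  # close to 0
```

Each entry of the gradient says how the loss changes when the matching weight increases, so stepping against it lowers the loss.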

4. Forms the Basis for Embedding and Feature Learning

In natural language processing, computer vision, and recommendation systems, embeddings represent complex objects as dense, meaningful vectors.

For instance, words that share semantic relationships appear closer in embedding space. Image embeddings capture patterns like shapes and textures.

These learned vector representations compress raw data into a format models can easily process.

This vector space organization also enables analogical reasoning, similarity search, and improved classification performance. Embeddings show how vectors help models understand relationships beyond raw data.
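A similarity search over embeddings can be sketched as below. The three-dimensional vectors are hand-made toy values, not output from a trained model, and `most_similar` is a hypothetical helper. Real embeddings usually have hundreds of dimensions.

```python
import numpy as np

# Toy embedding table: one row per word (made-up values for illustration).
words = ["king", "queen", "apple"]
E = np.array([
    [0.9, 0.8, 0.1],
    [0.9, 0.7, 0.2],
    [0.1, 0.2, 0.9],
])

# Normalize each row to unit length so a dot product equals cosine similarity.
E_unit = E / np.linalg.norm(E, axis=1, keepdims=True)

def most_similar(word):
    """Return the other word whose embedding has the highest cosine similarity."""
    idx = words.index(word)
    sims = E_unit @ E_unit[idx]   # similarity to every word in one product
    sims[idx] = -np.inf           # exclude the query word itself
    return words[int(np.argmax(sims))]

print(most_similar("king"))   # queen
```

Normalizing once and then using a single matrix-vector product is a common way to score a query against a whole vocabulary at once.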

Matrix

A matrix is a rectangular arrangement of numbers organized in rows and columns. In data science, matrices typically represent entire datasets or weight configurations for machine learning models.

Each row often corresponds to a sample, while each column represents a feature. Matrices allow models to process multiple data instances simultaneously through efficient operations like matrix multiplication.

These operations are heavily optimized in libraries such as NumPy, enabling fast computations essential for large-scale analytics, deep learning, and scientific computing.

Examples

1. A dataset with 10,000 samples and 50 features stored as a 10,000 × 50 matrix

2. A neural network weight matrix connecting one layer to the next

3. A covariance matrix used in PCA or statistical analysis
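The first and third examples can be sketched together: a 10,000 × 50 data matrix and its covariance matrix. The values are random placeholders from a seeded generator, used only to show the shapes.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Placeholder dataset: 10,000 samples (rows) by 50 features (columns).
X = rng.normal(size=(10_000, 50))
print(X.shape)  # (10000, 50)

# rowvar=False tells NumPy that columns are the variables (features).
cov = np.cov(X, rowvar=False)
print(cov.shape)  # (50, 50)
```

The covariance matrix has one row and one column per feature, so its size depends on the number of features and not on the number of samples.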

Key Importance Points

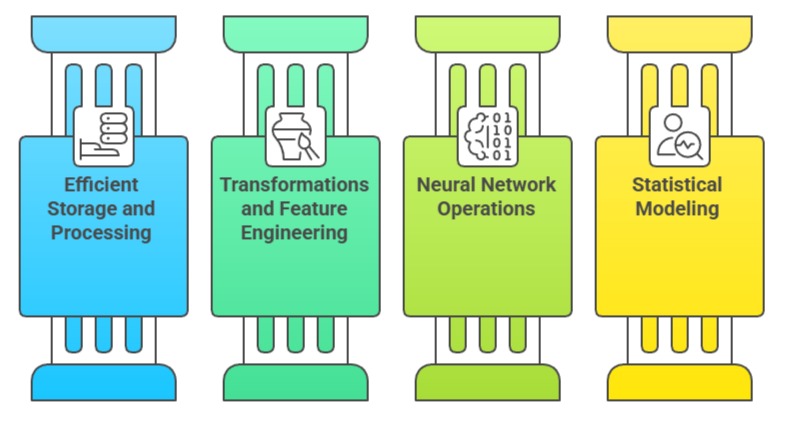

1. Enables Efficient Storage and Processing of Large Datasets

Matrices allow thousands or millions of samples to be stored in a compact, structured form.

Each row represents an observation, so a model can process an entire dataset in a single call. Matrix operations in libraries such as NumPy are vectorized: the work runs in compiled routines, which is typically much faster than an equivalent explicit loop in Python.

This is crucial when training models on large-scale data, such as image collections or log data from online platforms.

Proper matrix structuring also ensures compatibility with machine learning frameworks, improving pipeline efficiency and reducing memory overhead.
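To make the contrast with loops concrete, the sketch below computes a weighted score for every row twice: once with nested Python loops and once with a single matrix-vector product. The function names and random data are assumptions for illustration, and no timing is claimed here. Measuring both with `timeit` on your own machine would show the actual gap.

```python
import numpy as np

rng = np.random.default_rng(seed=0)
X = rng.normal(size=(1000, 20))   # 1,000 samples, 20 features
w = rng.normal(size=20)           # one weight per feature

def scores_loop(X, w):
    """Weighted sum of each row, computed element by element."""
    out = np.empty(X.shape[0])
    for i in range(X.shape[0]):
        total = 0.0
        for j in range(X.shape[1]):
            total += X[i, j] * w[j]
        out[i] = total
    return out

def scores_vectorized(X, w):
    """Same computation expressed as one matrix-vector product."""
    return X @ w

# Both versions agree up to floating-point rounding.
print(np.allclose(scores_loop(X, w), scores_vectorized(X, w)))  # True
```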

2. Core to Transformations and Feature Engineering

Techniques like scaling, rotation, projection, and normalization rely heavily on matrix operations.

For instance, PCA uses covariance matrices and eigenvectors to project data into a new space with fewer dimensions.

These transformations uncover hidden patterns and relationships that might not be visible in raw data.

By using matrices for such transformations, data scientists can improve model performance, reduce noise, and highlight meaningful structure within datasets.

Matrix-based transformations form the backbone of modern feature engineering workflows.
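The PCA step described above can be sketched with the covariance matrix and its eigenvectors. The data is synthetic, with a third feature made nearly redundant on purpose, and keeping two components is an arbitrary choice for the example. Library implementations such as scikit-learn's typically use SVD instead, which gives equivalent directions.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Synthetic data: 200 samples, 3 features, third feature almost a copy of the first.
X = rng.normal(size=(200, 3))
X[:, 2] = X[:, 0] + 0.1 * X[:, 2]

# 1. Center each feature at zero.
X_centered = X - X.mean(axis=0)

# 2. Covariance matrix of the features (3 x 3).
cov = np.cov(X_centered, rowvar=False)

# 3. Eigen decomposition; eigh suits symmetric matrices and returns
#    eigenvalues in ascending order.
eigvals, eigvecs = np.linalg.eigh(cov)

# 4. Keep the two directions with the largest variance.
order = np.argsort(eigvals)[::-1]
components = eigvecs[:, order[:2]]      # shape (3, 2)

# 5. Project the data into the lower-dimensional space.
X_reduced = X_centered @ components     # shape (200, 2)

explained = eigvals[order[:2]].sum() / eigvals.sum()
print(X_reduced.shape)
print(f"fraction of variance kept: {explained:.3f}")
```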

3. Fundamental to Neural Network Forward and Backward Passes

Neural networks depend on matrix multiplication to compute activations and gradients.

When data passes through a layer, it is multiplied by a weight matrix, producing outputs for the next layer.

During backpropagation, gradients flow backward through further matrix operations, and the optimizer then uses those gradients to update the weights.

These processes repeat hundreds or thousands of times during training. Efficient matrix multiplication is one of the reasons GPUs dramatically speed up deep learning.

Therefore, understanding matrices helps practitioners grasp how neural networks operate internally.
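A single linear layer is enough to show both passes as matrix operations. The sketch below assumes a squared-error loss, no activation function, random placeholder data, and a learning rate of 0.1. Real networks stack many such layers with nonlinearities in between.

```python
import numpy as np

rng = np.random.default_rng(seed=0)
X = rng.normal(size=(4, 3))   # batch of 4 samples, 3 input features
Y = rng.normal(size=(4, 2))   # placeholder targets, 2 outputs per sample
W = rng.normal(size=(3, 2))   # weight matrix: 3 inputs -> 2 outputs
b = np.zeros(2)               # bias vector

def forward(X, W, b):
    # Forward pass: the whole batch is handled by one matrix multiplication.
    return X @ W + b

def loss_fn(pred, Y):
    # Half the squared error, averaged over the batch.
    return 0.5 * float(np.mean(np.sum((pred - Y) ** 2, axis=1)))

pred = forward(X, W, b)
loss = loss_fn(pred, Y)

# Backward pass: gradients of the loss with respect to each quantity.
grad_pred = (pred - Y) / X.shape[0]   # dLoss/dpred, shape (4, 2)
grad_W = X.T @ grad_pred              # dLoss/dW, shape (3, 2)
grad_b = grad_pred.sum(axis=0)        # dLoss/db, shape (2,)

# One gradient descent update.
learning_rate = 0.1
W_new = W - learning_rate * grad_W
b_new = b - learning_rate * grad_b
new_loss = loss_fn(forward(X, W_new, b_new), Y)
print(loss, new_loss)  # the loss after the update should be lower
```

Note that `grad_W` has the same shape as `W`, which is what lets the update be written as a simple subtraction.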

4. Supports Advanced Statistical Modeling and Data Analysis

Matrices are essential for computing relationships between variables, such as covariance and correlation structures.

Statistical methods like multivariate regression use matrix algebra to estimate coefficients that best fit the data.

Decomposition techniques (e.g., SVD, QR, eigen decomposition) help identify principal directions, stabilize computations, and optimize complex models.

These matrix-based tools enhance interpretability, reveal data dependencies, and improve the reliability of analytical outcomes.

Many classical and modern models, from linear regression to PCA, are defined directly in terms of these matrix operations and could not be computed efficiently without them.
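As one example of matrix algebra in regression, the sketch below estimates coefficients by least squares in two ways: with NumPy's `lstsq` solver and directly from the SVD of the data matrix. The synthetic data, the noise level, and the `true_coef` values are assumptions made up for the example.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Synthetic regression problem with known coefficients and small noise.
X = rng.normal(size=(100, 3))
true_coef = np.array([1.5, -2.0, 0.5])
y = X @ true_coef + 0.01 * rng.normal(size=100)

# Option 1: library least-squares solver.
coef_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)

# Option 2: solve via the SVD, X = U @ diag(s) @ Vt.
# The least-squares solution is V @ diag(1/s) @ U.T @ y.
U, s, Vt = np.linalg.svd(X, full_matrices=False)
coef_svd = Vt.T @ ((U.T @ y) / s)

print(coef_lstsq)
print(coef_svd)   # matches coef_lstsq up to rounding
```

Going through the SVD avoids forming the product of the data matrix with its own transpose, which is one reason decompositions are described as stabilizing computations.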