Data structures form the foundation of Python programming and are essential for handling, organizing, and manipulating data efficiently in machine learning workflows.

They provide flexible ways to store values, arrange elements, and retrieve information during preprocessing, feature engineering, and model development.

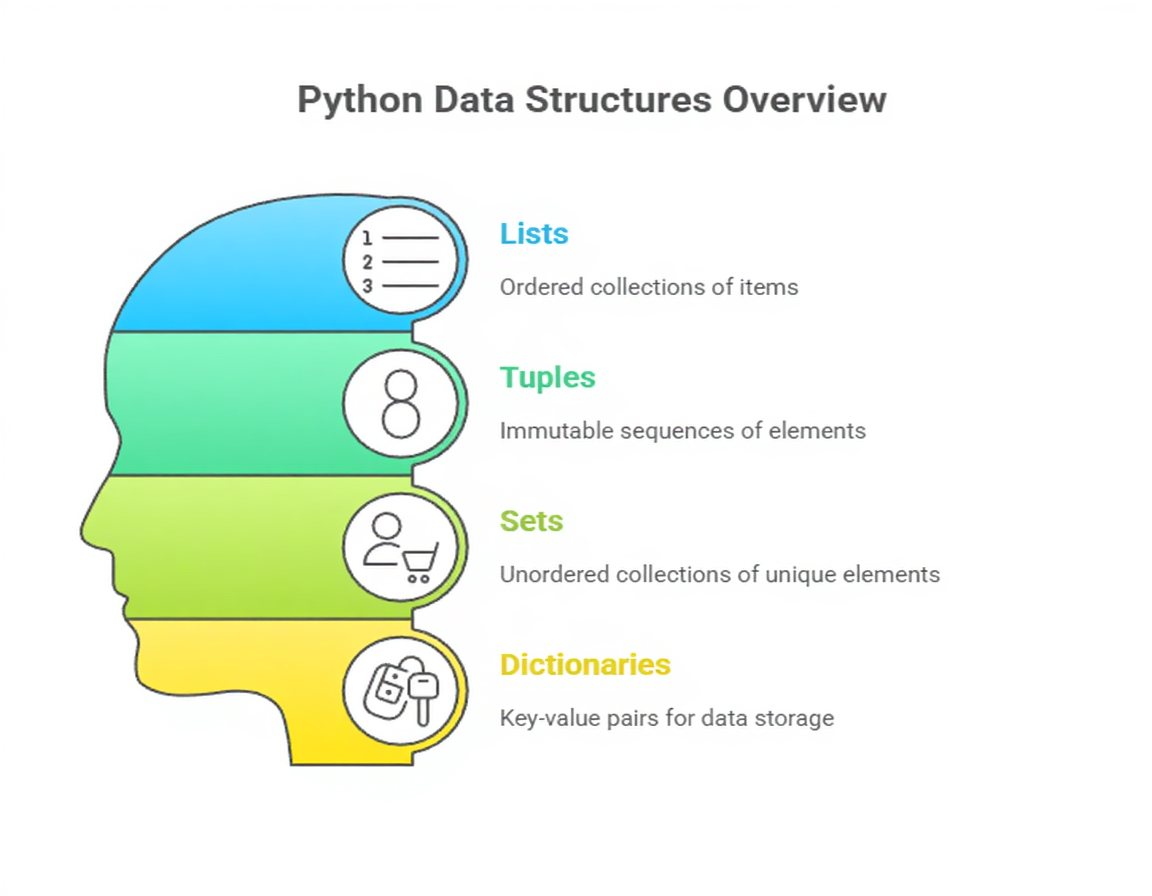

Python offers built-in structures—lists, tuples, dictionaries, and sets—each optimized for specific types of operations.

These structures allow developers to work with heterogeneous data, maintain ordered collections, group key–value relationships, or store unique items, depending on analytical needs.

Beyond built-in types, data science relies heavily on structures provided by third-party libraries, such as NumPy arrays and pandas DataFrames, which offer high-performance numerical computation and tabular data manipulation.

Understanding how these structures behave, how they manage memory, and how they support vectorized operations helps learners build efficient machine learning pipelines.

Mastering Python data structures ensures that large datasets can be sliced, filtered, aggregated, and transformed smoothly, enabling seamless movement from raw data to model-ready formats.

These structures play a crucial role at every stage of ML workflows—from ingesting data to generating predictions.

Importance of Data Structures

1. Lists as Versatile, Dynamic Containers

Python lists are flexible sequences capable of storing items of mixed data types, making them particularly useful during early data exploration.

Their dynamic nature allows elements to be added, removed, or replaced without restrictions, providing adaptability when handling irregular or evolving datasets.

Lists support slicing, iteration, sorting, and concatenation—operations frequently used during preprocessing tasks. In ML workflows, lists often hold raw records, model outputs, split datasets, or temporary results.

Despite their usefulness, lists are inefficient for heavy numerical computation: every element is a separate Python object, and arithmetic must run element by element in the interpreter. This is why numeric data usually moves into arrays later. Still, lists remain indispensable for general-purpose data handling.
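The list operations described above can be sketched as follows. The values in `records` are made up for illustration and do not come from a real dataset.

```python
# Hypothetical raw records with mixed types, as might appear during exploration.
records = [3.5, "n/a", 7, 2.25]

records.append(10)        # add an element at the end
records.remove("n/a")     # drop the first element equal to "n/a"
records[0] = 4.0          # replace an element in place

numeric = sorted(records)     # sorted() returns a new list; records is unchanged
first_two = numeric[:2]       # slicing copies a sub-range
combined = first_two + [99]   # concatenation builds another new list
```

Note that `append`, `remove`, and index assignment change the list in place, while `sorted`, slicing, and `+` each produce a new list.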

2. Tuples for Fixed, Immutable Groupings

Tuples function as ordered collections whose values cannot be modified once created.

This immutability makes them reliable for storing values that should remain unchanged throughout execution, such as hyperparameter sets, coordinate pairs, or configuration details.

Tuples typically use slightly less memory than equivalent lists, which can help in functions that process many small, fixed-length records.

Their hashability also allows tuples to act as dictionary keys, enabling structured indexing in data science tasks.

Because they enforce stability, tuples help avoid unintended alterations during analytical workflows.
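A minimal sketch of both properties, immutability and hashability, is shown below. The hyperparameter values and validation scores are invented for the example.

```python
# Hypothetical hyperparameter set: (learning_rate, max_depth)
params = (0.1, 5)

# Item assignment on a tuple raises TypeError.
try:
    params[0] = 0.2
    modified = True
except TypeError:
    modified = False

# Tuples of hashable elements are hashable, so they can serve as dict keys.
scores = {(0.1, 5): 0.91, (0.01, 3): 0.87}   # made-up validation scores
best = max(scores, key=scores.get)           # key with the highest score
```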

3. Dictionaries for Key–Value Mapping

Dictionaries enable fast retrieval of values through unique keys, making them ideal for storing structured information without relying on position-based indexing.

They are widely used when handling datasets containing labeled attributes, metadata, or mapping relationships.

In data science applications, dictionaries frequently hold mappings like category encodings, column transformations, model parameters, or experiment logs.

Their flexible structure supports nesting, allowing the creation of complex hierarchical data formats.

Efficient lookup operations significantly accelerate tasks that involve frequent searching, such as feature lookups or configuration access.
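As an illustration, a category encoding and a nested configuration might look like the sketch below. The labels and the `config` layout are assumptions chosen for the example, not a required format.

```python
# Hypothetical categorical labels to encode as integers.
labels = ["cat", "dog", "cat", "bird"]

# Assign each new label the next free integer, in order of first appearance.
encoding = {}
for label in labels:
    encoding.setdefault(label, len(encoding))

encoded = [encoding[label] for label in labels]

# Dictionaries can nest to describe hierarchical settings.
config = {"model": {"type": "tree", "params": {"max_depth": 3}}}
depth = config["model"]["params"]["max_depth"]
```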

4. Sets for Managing Unique and Unordered Data

Sets store unique elements without maintaining order, making them valuable when the objective is to eliminate duplicates or perform fast membership testing.

Common use cases include cleaning raw datasets, ensuring unique identifiers, or checking whether certain values appear in a feature column.

Sets also support mathematical operations such as union, intersection, and difference, which become useful when comparing categories or filtering matched groups.

Their hash-based implementation enables rapid lookups, providing time-saving advantages in large-scale data processing.

Although sets cannot store unhashable objects like lists, they remain an efficient tool for maintaining distinct values.
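The sketch below shows deduplication, membership testing, and the set operations mentioned above. The identifier values are hypothetical.

```python
# Hypothetical record identifiers from two data splits.
train_ids = [101, 102, 102, 103]
test_ids = [103, 104]

unique_train = set(train_ids)               # duplicates collapse
overlap = unique_train & set(test_ids)      # intersection: ids in both splits
only_train = unique_train - set(test_ids)   # difference
all_ids = unique_train | set(test_ids)      # union
has_104 = 104 in unique_train               # fast membership test

# Lists are unhashable, so they cannot be set elements.
try:
    bad = {[1, 2]}
    list_allowed = True
except TypeError:
    list_allowed = False
```

A non-empty `overlap` between training and test identifiers is one simple signal that the splits share records.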

5. NumPy Arrays for High-Performance Numerical Computing

NumPy arrays form the backbone of numerical processing in machine learning because they store elements of a single data type in contiguous memory blocks and support vectorized operations.

This layout lets whole-array operations run in compiled code instead of explicit Python loops, enabling the fast matrix computations required for algorithms like logistic regression, PCA, or neural networks.

Arrays support broadcasting, reshaping, indexing, and advanced mathematical functions that are essential for preparing model inputs.

Their ability to handle large multidimensional datasets efficiently makes them ideal for feature matrices, image processing tasks, and numerical simulations.

NumPy arrays significantly outperform lists in computation-heavy environments.
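A minimal sketch of vectorization and broadcasting, assuming NumPy is installed: the small feature matrix `X` is invented, and column-wise standardization is used only as a familiar example.

```python
import numpy as np

# Hypothetical feature matrix: 3 samples, 2 features.
X = np.array([[1.0, 10.0],
              [2.0, 20.0],
              [3.0, 30.0]])

mean = X.mean(axis=0)   # per-column mean, shape (2,)
std = X.std(axis=0)     # per-column standard deviation, shape (2,)

# Broadcasting stretches the (2,) vectors across all 3 rows; no loop needed.
X_std = (X - mean) / std

# Reshaping changes the view of the data without copying the values here.
flat = X.reshape(-1)    # shape (6,)
```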

6. pandas Series and DataFrames for Tabular Data Handling

The pandas library introduces two key structures—Series and DataFrames—which are indispensable in data science.

A Series represents a labeled one-dimensional column, while a DataFrame holds multiple Series arranged into rows and columns.

These structures provide optimized functions for filtering, aggregating, grouping, and cleaning data.

DataFrames mirror the layout of typical machine learning datasets, making them easy to integrate with modeling libraries.

Their built-in handling of missing values, date-time processing, and categorical encoding streamlines data preparation.

They support seamless file I/O, enabling smooth movement between CSV, Excel, SQL, or JSON formats.
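The following sketch, assuming pandas is installed, shows a Series, a DataFrame, missing-value handling, and a grouped aggregation. The column names and values are made up for illustration.

```python
import pandas as pd

# Hypothetical table with one missing price.
df = pd.DataFrame({
    "city": ["A", "B", "A", "B"],
    "price": [10.0, None, 20.0, 30.0],
})

price = df["price"]   # selecting one column yields a Series

# Fill the missing value with the column mean (mean skips missing entries).
df["price"] = df["price"].fillna(df["price"].mean())

# Group rows by city and aggregate each group.
mean_by_city = df.groupby("city")["price"].mean()
```

Mean imputation is only one possible strategy; the right choice depends on the dataset.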

7. Understanding Mutability and Its Impact on ML Workflows

Different Python structures exhibit different mutability behaviors, influencing how variables are passed, modified, and stored during computation.

Lists, dictionaries, and sets are mutable, meaning functions can alter their contents directly, sometimes producing unintended side effects.

Tuples and strings are immutable, which gives predictable behavior during transformations. NumPy arrays and pandas DataFrames, by contrast, are mutable by default, so in-place operations on them call for the same care as with lists.

In machine learning projects where multiple copies of data are processed (training, validation, augmentation), knowing when a structure changes in place helps prevent data leakage and inconsistent preprocessing.

This understanding helps developers maintain clean and reliable pipelines.
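The side effect described above can be sketched with two hypothetical helper functions, `scale_in_place` and `scale_copy`, which are written here only for illustration.

```python
def scale_in_place(values, factor):
    """Multiply every element of a list, modifying the caller's list."""
    for i in range(len(values)):
        values[i] *= factor

def scale_copy(values, factor):
    """Return a new scaled list and leave the input untouched."""
    return [v * factor for v in values]

train = [1.0, 2.0, 3.0]

scaled = scale_copy(train, 10)
unchanged_after_copy = train == [1.0, 2.0, 3.0]   # original is intact

alias = train                # a second name for the same list, not a copy
scale_in_place(alias, 10)    # this also changes `train`
```

Passing `list(train)` or `train.copy()` to an in-place function is one way to protect the original when a copy is intended.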

8. Choosing the Right Structure for Efficient Data Processing

Selecting an appropriate data structure can significantly improve runtime performance, memory usage, and code clarity.

Lists are ideal for general sequencing tasks, dictionaries excel at label-based retrieval, sets shine in uniqueness checks, and NumPy arrays dominate numerical operations. Meanwhile, pandas DataFrames are unmatched for tabular analysis.

Matching structure capabilities to the task—whether iteration-heavy, math-oriented, or label-dependent—reduces bottlenecks and ensures smooth integration with ML frameworks.

Well-chosen structures enhance reproducibility and reduce the overall engineering effort required for model development.

9. Memory Management and Internal Representation

Python data structures operate using distinct memory models that influence speed, flexibility, and scalability in machine learning tasks.

Lists store references to objects scattered across memory, offering flexibility but resulting in overhead when dealing with large numeric collections.

NumPy arrays, however, store data in contiguous blocks, allowing processors to perform vectorized operations efficiently.

Dictionaries use hash tables internally, giving average-case constant-time key lookups at the cost of extra memory for the table itself.

Understanding these internal mechanisms helps data scientists anticipate performance trade-offs, especially when processing large datasets or running repetitive transformations.

This awareness enables informed decisions regarding when to switch structures for better optimization.
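A rough way to see the difference, assuming CPython and NumPy: `sys.getsizeof` reports only an object's own footprint, so the list total below adds the size of each float object it references. The exact byte counts vary by Python version and platform.

```python
import sys
import numpy as np

n = 1000
py_list = [float(i) for i in range(n)]
arr = np.arange(n, dtype=np.float64)

# The list object holds references; each float is a separate object elsewhere.
list_own_bytes = sys.getsizeof(py_list)
list_total_bytes = list_own_bytes + sum(sys.getsizeof(x) for x in py_list)

# The array stores raw 8-byte values back to back in one buffer.
array_data_bytes = arr.nbytes
is_contiguous = arr.flags["C_CONTIGUOUS"]
```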

10. Nested Data Structures for Complex Data Modeling

Real-world machine learning tasks often involve layered or hierarchical data, such as JSON logs, multi-level categories, or embeddings with metadata.

Python supports deeply nested structures—like lists of dictionaries or dictionaries containing lists—that allow complex information to be grouped intuitively.

Such nesting is essential for tasks like representing model configurations, storing experiment results, or organizing raw inputs from APIs.

While powerful, nested structures require careful handling to avoid confusion or excessive depth.

Proper indexing techniques and clear structuring strategies ensure these collections remain readable and manageable. These nested designs allow ML pipelines to store diverse and interdependent information efficiently.
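As a sketch, a list of dictionaries can hold experiment results. The field names (`name`, `params`, `metrics`) and all values are hypothetical.

```python
# Hypothetical experiment log: a list of dictionaries containing dicts and lists.
experiments = [
    {"name": "run_1", "params": {"lr": 0.1}, "metrics": {"acc": [0.70, 0.82]}},
    {"name": "run_2", "params": {"lr": 0.01}, "metrics": {"acc": [0.65, 0.88]}},
]

# Chain indexes to reach nested values: last recorded accuracy of each run.
final_acc = {e["name"]: e["metrics"]["acc"][-1] for e in experiments}
best_run = max(final_acc, key=final_acc.get)

# .get() with a default avoids a KeyError for optional nested fields.
momentum = experiments[0]["params"].get("momentum", 0.0)
```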

11. Performance Optimization Through Vectorization

While built-in Python structures are flexible, they fall short when handling large-scale numerical operations due to interpreted execution.

Libraries such as NumPy and pandas enhance performance through vectorization, replacing explicit loops with optimized low-level routines.

Vectorized operations dramatically reduce runtime for tasks like standardization, distance computation, and matrix transformations.

Choosing the right structure, especially switching from lists to arrays for numeric work, can reduce runtime by orders of magnitude on large inputs.

This shift is crucial in machine learning experiments where repeated transformations are necessary.

Understanding how vectorization interacts with data structures allows practitioners to design pipelines that scale smoothly.
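The sketch below computes the same Euclidean distances twice, once with an explicit loop and once with a vectorized expression, assuming NumPy is installed. The points are invented, and no timing is claimed here since speedups depend on data size and hardware.

```python
import math
import numpy as np

# Hypothetical 2-D points and a query point.
points = np.array([[0.0, 0.0], [3.0, 4.0], [6.0, 8.0]])
query = np.array([0.0, 0.0])

# Explicit loop: one Python-level iteration per point and per coordinate.
loop_dist = []
for p in points:
    loop_dist.append(math.sqrt(sum((a - b) ** 2 for a, b in zip(p, query))))

# Vectorized: subtraction, squaring, and summing run over whole arrays at once.
vec_dist = np.sqrt(((points - query) ** 2).sum(axis=1))
```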

12. Data Cleaning and Preprocessing With Structural Tools

Many cleansing operations in ML—including removing duplicates, normalizing formats, filtering invalid entries, and handling missing values—depend heavily on the underlying data structure.

Sets make deduplication trivial, while dictionaries support fast validation of category mappings.

DataFrames extend these capabilities with column-wise operations, offering highly efficient mechanisms for replacing null values, standardizing text, or encoding labels.

Each structure plays a different role in shaping raw datasets into consistent and model-ready formats.

Utilizing these strengths ensures preprocessing becomes structured, predictable, and significantly faster. Well-organized structures ultimately reduce model errors and improve downstream feature engineering.
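A small cleaning pass might combine these tools as in the sketch below, assuming pandas is installed. The column names, raw values, and the `mapping` dictionary are all hypothetical.

```python
import pandas as pd

# Hypothetical raw data with inconsistent text, a duplicate, and a missing label.
raw = pd.DataFrame({
    "label": [" Yes", "no ", "YES", None],
    "value": [1.0, 2.0, 1.0, 4.0],
})

clean = raw.copy()                                        # keep the raw data intact
clean["label"] = clean["label"].str.strip().str.lower()   # standardize text
clean = clean.dropna(subset=["label"]).drop_duplicates()  # drop invalid and repeated rows

# A dictionary defines the valid categories; a set difference finds strays.
mapping = {"yes": 1, "no": 0}
unknown = set(clean["label"]) - set(mapping)

clean["target"] = clean["label"].map(mapping)             # encode labels
```

Checking `unknown` before calling `map` matters because unmapped labels would otherwise become missing values without raising an error.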

13. Interoperability With Machine Learning Frameworks

Popular machine learning libraries rely on specific types of data structures for seamless training and prediction.

Scikit-learn estimators accept array-like inputs such as NumPy arrays and pandas DataFrames, and most of them expect the features to be numeric.

Deep learning frameworks like TensorFlow and PyTorch work with tensors, which extend array functionality with GPU acceleration; NumPy arrays are typically converted to tensors before training.

Dictionaries may hold model configurations or hyperparameters, while lists manage batches or collections of predictions.

Understanding which structure aligns with which framework reduces conversion overhead and prevents shape-related errors.

Effective interoperability enables smooth model experimentation and deployment.
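Checking shapes and dtypes before handing data to a framework catches many of these errors early. The sketch below, assuming NumPy and pandas are installed, uses made-up column names (`f1`, `f2`, `y`) and stops short of calling any modeling library.

```python
import numpy as np
import pandas as pd

# Hypothetical dataset: two feature columns and one target column.
df = pd.DataFrame({
    "f1": [1.0, 2.0, 3.0],
    "f2": [4.0, 5.0, 6.0],
    "y": [0, 1, 0],
})

X = df[["f1", "f2"]].to_numpy()   # 2-D feature matrix: (n_samples, n_features)
y = df["y"].to_numpy()            # 1-D target vector: (n_samples,)

# Many estimators expect matching first dimensions for X and y.
shapes_match = X.shape[0] == y.shape[0]
```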

14. Custom Data Structures for Specialized Tasks

Although Python’s built-in structures cover most needs, advanced machine learning problems sometimes require tailor-made structures.

Developers may implement custom classes to represent graphs, trees, or caching mechanisms, depending on the algorithmic requirements.

For example, decision tree implementations may use nested dictionaries, while graph-based models can benefit from adjacency lists.

These custom structures provide better control over functionality, memory usage, and representation of algorithm-specific data.

Learning how to build on Python's built-in structures empowers developers to create more optimized, domain-specific solutions. This adaptability is particularly valuable in research or production environments.
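As one possible sketch of a custom structure, the hypothetical `Graph` class below stores an undirected graph as an adjacency list and traverses it breadth-first. It is an illustration, not a reference implementation.

```python
from collections import defaultdict, deque

class Graph:
    """Undirected graph stored as an adjacency list (node -> list of neighbors)."""

    def __init__(self):
        self._adj = defaultdict(list)

    def add_edge(self, u, v):
        # Record the edge in both directions because the graph is undirected.
        self._adj[u].append(v)
        self._adj[v].append(u)

    def bfs(self, start):
        """Return nodes in breadth-first order starting from `start`."""
        seen = {start}
        order = []
        queue = deque([start])
        while queue:
            node = queue.popleft()
            order.append(node)
            for neighbor in self._adj[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        return order

g = Graph()
g.add_edge("a", "b")
g.add_edge("a", "c")
g.add_edge("b", "d")
order = g.bfs("a")
```

The class combines three built-in or standard-library structures: a dictionary for the adjacency list, a set for visited nodes, and a deque as the queue.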

15. Error Handling and Data Integrity With Structures

Data structures not only store information but also enforce rules that protect data integrity throughout a pipeline.

Tuples prevent unintended modification, making them ideal for sensitive values or fixed configurations.

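Dictionaries raise a KeyError when a missing key is accessed with square brackets, helping detect discrepancies early in preprocessing; the get method returns a default value instead, so it should be used only when a missing key is acceptable.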

pandas can raise errors or emit warnings for some risky operations, such as assignments that may act on a copy rather than on the original DataFrame, although the exact behavior depends on the pandas version.

Using these structures wisely creates safer, more predictable analytical workflows. Maintaining integrity at each step helps avoid model bias, silent data corruption, and inaccurate analysis outcomes.
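The dictionary behavior can be sketched as a simple validation step. The `encoding` mapping and the observed categories are hypothetical.

```python
# Hypothetical category encoding and values observed in new data.
encoding = {"red": 0, "green": 1}
observed = ["red", "blue"]

# Square-bracket access fails loudly on unknown categories.
missing = []
for category in observed:
    try:
        encoding[category]
    except KeyError:
        missing.append(category)

# .get() never raises; it returns the supplied default instead.
fallback = encoding.get("blue", -1)
```

Collecting the unknown categories in `missing` makes the discrepancy visible, whereas a silent fallback value could hide it.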