Evaluating machine learning models is a cornerstone of building reliable, trustworthy, and high-performing systems.

Without proper evaluation, even the most advanced algorithm may lead to misleading conclusions or poor real-world outcomes.

Evaluation metrics quantify how closely a model's predictions match the true values, while validation techniques estimate how well the model generalizes beyond the data it was trained on.

As datasets grow in complexity and tasks vary—from predicting customer behavior to identifying medical conditions—choosing the right evaluation strategy becomes critical.

These tools safeguard against issues like overfitting, underfitting, the pitfalls of imbalanced data, and misleadingly optimistic performance estimates.

Evaluation Metrics

Below are the major evaluation metrics used in machine learning, each with a note on why it matters and where it applies.

1. Accuracy

Accuracy matters because it provides a quick snapshot of how often the model predicts correctly across all classes.

It is useful during early experimentation since it helps determine whether the model has learned basic patterns or is simply guessing.

However, accuracy alone can be misleading when data is imbalanced, since the metric may look high even if one class is poorly recognized.

Therefore, accuracy works best when classes are equally represented and performance needs a general assessment. It serves as a starting point before moving to more advanced metrics.
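The imbalance pitfall described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `accuracy` helper and the 95/5 class split are assumptions chosen for the example.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

# Hypothetical imbalanced data: 95 negatives (0) and 5 positives (1).
y_true = [0] * 95 + [1] * 5
# A "model" that always predicts the majority class.
y_pred = [0] * 100

acc = accuracy(y_true, y_pred)
print(acc)  # 0.95
```

The score looks strong even though not a single positive case was recognized, which is why accuracy should be paired with other metrics on imbalanced data.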

2. Precision

Precision is crucial where the cost of false positives is high, ensuring that when the model predicts a positive class, it is likely correct.

This is especially important in fraud checks, spam detection, or medical screening where incorrect alerts create unnecessary consequences.

Precision tells developers how trustworthy the model's positive predictions are: it is the fraction of predicted positives that are actually positive, TP / (TP + FP).

By emphasizing correctness among predicted positives, it reveals whether the model is overly eager in labeling certain cases.

This metric becomes essential when reducing noise in predictions matters.
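As a rough sketch, precision can be computed by counting true positives and false positives. The `precision` helper, the label lists, and the choice to return 0.0 when nothing is predicted positive are all assumptions made for this example.

```python
def precision(y_true, y_pred, positive=1):
    """Share of predicted positives that are truly positive: TP / (TP + FP)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if p == positive and t == positive)
    fp = sum(1 for t, p in pairs if p == positive and t != positive)
    if tp + fp == 0:
        return 0.0  # assumed convention when no positives are predicted
    return tp / (tp + fp)

# Hypothetical labels: the model flags five cases, three of them correctly.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 1, 0]

p = precision(y_true, y_pred)
print(p)  # 0.6
```

Two of the five alerts are false alarms, so precision is 0.6 regardless of how many real positives were missed.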

3. Recall

Recall becomes important in tasks where missing a positive case poses serious risks, such as disease detection or identifying security threats.

It shows how effectively the model captures all relevant positive instances from the dataset. A model with high recall ensures very few critical cases slip through unnoticed.

This metric guides practitioners in recognizing sensitivity toward positive outcomes. When safety or completeness of detection is the primary goal, recall carries more weight than precision.
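A minimal sketch of recall follows the same counting pattern, this time with false negatives in the denominator. The `recall` helper, the example labels, and the 0.0 fallback when there are no actual positives are assumptions for illustration.

```python
def recall(y_true, y_pred, positive=1):
    """Share of actual positives that the model found: TP / (TP + FN)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp + fn == 0:
        return 0.0  # assumed convention when there are no actual positives
    return tp / (tp + fn)

# Hypothetical labels: four real positives, one of which is missed.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 1, 0]

r = recall(y_true, y_pred)
print(r)  # 0.75
```

One of the four real positives slips through, so recall is 0.75 no matter how many false alarms the model raises.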

4. F1-Score

The F1-Score combines precision and recall into a single measure, ensuring that both types of model errors are considered together.

It prevents a model from appearing good simply because one metric is artificially high while the other is weak.

This balanced view makes F1-Score particularly valuable in imbalanced datasets where accuracy may hide model weaknesses.

It also simplifies comparison between models or tuning configurations. As the harmonic mean of precision and recall, the F1-Score weights the two equally and stays low unless both are reasonably high.
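The way F1 resists one inflated metric can be seen by comparing it with a plain average. This is a sketch under stated assumptions: the helper name `f1_from_precision_recall` and the precision and recall values are hypothetical.

```python
def f1_from_precision_recall(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention when both inputs are zero
    return 2 * precision * recall / (precision + recall)

# Hypothetical model: every alert is correct, but 90% of positives are missed.
precision, recall = 1.0, 0.1

arithmetic_mean = (precision + recall) / 2
f1 = f1_from_precision_recall(precision, recall)

print(round(arithmetic_mean, 3))  # 0.55
print(round(f1, 3))               # 0.182
```

The arithmetic mean would make this model look mediocre but usable, while the harmonic mean stays close to the weaker of the two values.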

5. MSE (Mean Squared Error)

MSE is vital in regression because it highlights how far predictions deviate from true values, with stronger emphasis on larger mistakes.

This helps practitioners detect instances where the model drastically fails to capture patterns.

When precise predictions are essential—such as price forecasting or engineering measurement tasks—MSE reveals whether the model is producing unacceptable errors.

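Its sensitivity pushes models to avoid extreme deviations. Because errors are squared, MSE is most useful when large errors are disproportionately costly. Note that its value is expressed in squared units of the target, which makes it harder to interpret directly.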
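The emphasis on large mistakes can be illustrated with two sets of predictions that have the same total absolute error. The helper name and the numbers below are assumptions chosen to keep the arithmetic easy to follow.

```python
def mean_squared_error(y_true, y_pred):
    """Average of squared differences between true and predicted values."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

y_true = [10.0, 20.0, 30.0, 40.0]
small_misses = [12.0, 18.0, 32.0, 38.0]  # every prediction is off by 2
one_big_miss = [10.0, 20.0, 30.0, 48.0]  # one prediction is off by 8

mse_small = mean_squared_error(y_true, small_misses)
mse_big = mean_squared_error(y_true, one_big_miss)
print(mse_small)  # 4.0
print(mse_big)    # 16.0
```

Both prediction sets are off by 8 in total, yet the single large miss produces an MSE four times higher.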

6. MAE (Mean Absolute Error)

MAE offers an intuitive representation of average prediction errors, making it easier to interpret in real-world units.

It weights each error in proportion to its size rather than squaring it, so large deviations are not amplified. This makes it more robust for tasks where outliers naturally occur.

MAE focuses on overall consistency rather than penalizing occasional large misses.

This characteristic helps assess whether the model typically stays within an acceptable error range. Its simplicity makes it a good choice when errors need to be reported in the same units as the target.
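A short sketch shows how MAE reports the typical miss without amplifying a single large error. The helper name and the data are hypothetical.

```python
def mean_absolute_error(y_true, y_pred):
    """Average of absolute differences, in the same units as the target."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

y_true = [10.0, 20.0, 30.0, 40.0]
small_misses = [12.0, 18.0, 32.0, 38.0]  # every prediction is off by 2
one_big_miss = [10.0, 20.0, 30.0, 48.0]  # one prediction is off by 8

mae_small = mean_absolute_error(y_true, small_misses)
mae_big = mean_absolute_error(y_true, one_big_miss)
print(mae_small)  # 2.0
print(mae_big)    # 2.0
```

MAE rates the two prediction sets identically because it only looks at the average size of the error, not at how the error is distributed.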

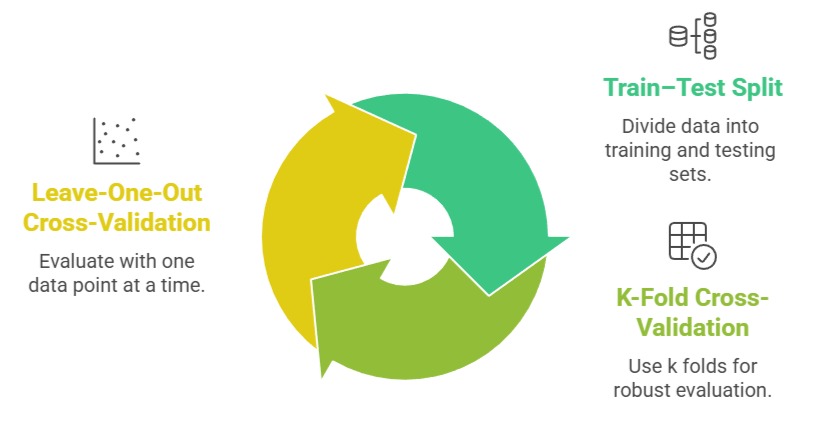

Validation Techniques

1. Train–Test Split

The train–test split provides a straightforward way to estimate how a model performs on unseen data while keeping computational costs low.

It helps identify early signs of overfitting by showing discrepancies between training and testing accuracy.

This method is effective when dealing with large datasets where a single representative split is sufficient.

Stratified splitting keeps class proportions the same in the training and test sets, which makes the estimate more reliable on imbalanced data. The train–test split remains a fundamental step in most ML workflows.
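A stratified split can be sketched with the standard library alone. This is an illustrative version under stated assumptions: the `stratified_split` helper, its parameters, and the 80/20 label mix are hypothetical, and in practice a library routine would normally be used instead.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx), splitting each class separately."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)

    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)  # randomize within the class
        n_test = round(len(indices) * test_fraction)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)

# Hypothetical labels: 80 negatives and 20 positives.
labels = [0] * 80 + [1] * 20
train_idx, test_idx = stratified_split(labels, test_fraction=0.2, seed=42)

print(len(train_idx), len(test_idx))     # 80 20
print(sum(labels[i] for i in test_idx))  # 4 positives in the test set
```

Because each class is split on its own, the test set keeps the original 20% share of positives instead of depending on the luck of a single shuffle.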

2. K-Fold Cross-Validation

K-Fold Cross-Validation enhances reliability by testing the model across multiple data partitions instead of relying on one split.

This approach reduces the dependence on any single random split and provides a more stable view of model performance. Every sample is used for validation exactly once and for training in the remaining k - 1 folds, making the most of limited datasets.

Higher k values leave more data for training in each fold but require more model fits, which increases computational cost; k = 5 and k = 10 are common choices. This technique is widely trusted for comparing models objectively.
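The partitioning behind K-Fold can be sketched as an index generator. This is a minimal version under stated assumptions: the `k_fold_indices` helper and its parameters are hypothetical, and model training and scoring are left out.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs for k shuffled folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val_idx = folds[i]
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, val_idx

splits = list(k_fold_indices(10, k=5, seed=1))
for train_idx, val_idx in splits:
    print(len(train_idx), len(val_idx))  # 8 2 on every fold
```

A model would be fitted on `train_idx` and scored on `val_idx` in each iteration, with the k scores then averaged into a single estimate.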

3. Leave-One-Out Cross-Validation (LOOCV)

LOOCV offers the most exhaustive evaluation by using every data point as a separate test case.

It is highly beneficial when datasets are very small and losing even a few samples would weaken training.

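Although computationally heavy, since the model is trained once per sample, the technique yields nearly unbiased performance estimates. These estimates can, however, have high variance, because the training sets overlap almost completely from one iteration to the next.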

LOOCV reveals how sensitive a model is to individual samples, highlighting whether predictions shift drastically across points.

This makes it especially valuable in scientific or medical datasets.
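The LOOCV loop can be sketched with a deliberately simple stand-in model. Everything here is an assumption made for illustration: the `loocv_mae` helper, the four data points, and the "predict the mean of the training values" baseline that takes the place of a real model.

```python
def loocv_mae(values):
    """LOOCV for a mean-predictor baseline; returns (mae, per_point_errors)."""
    if len(values) < 2:
        raise ValueError("LOOCV needs at least two samples")
    errors = []
    for i, held_out in enumerate(values):
        train = values[:i] + values[i + 1:]   # all samples except one
        prediction = sum(train) / len(train)  # "fit" the baseline
        errors.append(abs(held_out - prediction))
    return sum(errors) / len(errors), errors

# Hypothetical tiny dataset of target values.
values = [2.0, 4.0, 6.0, 8.0]
mae, per_point_errors = loocv_mae(values)

print(round(mae, 3))                            # 2.667
print([round(e, 3) for e in per_point_errors])  # [4.0, 1.333, 1.333, 4.0]
```

The per-point errors show which samples the model struggles with when they are held out, which is the sensitivity to individual samples described above.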