NumPy and pandas are the two most essential Python libraries for modern data science, shaping the workflow from raw data ingestion to model-ready transformation.

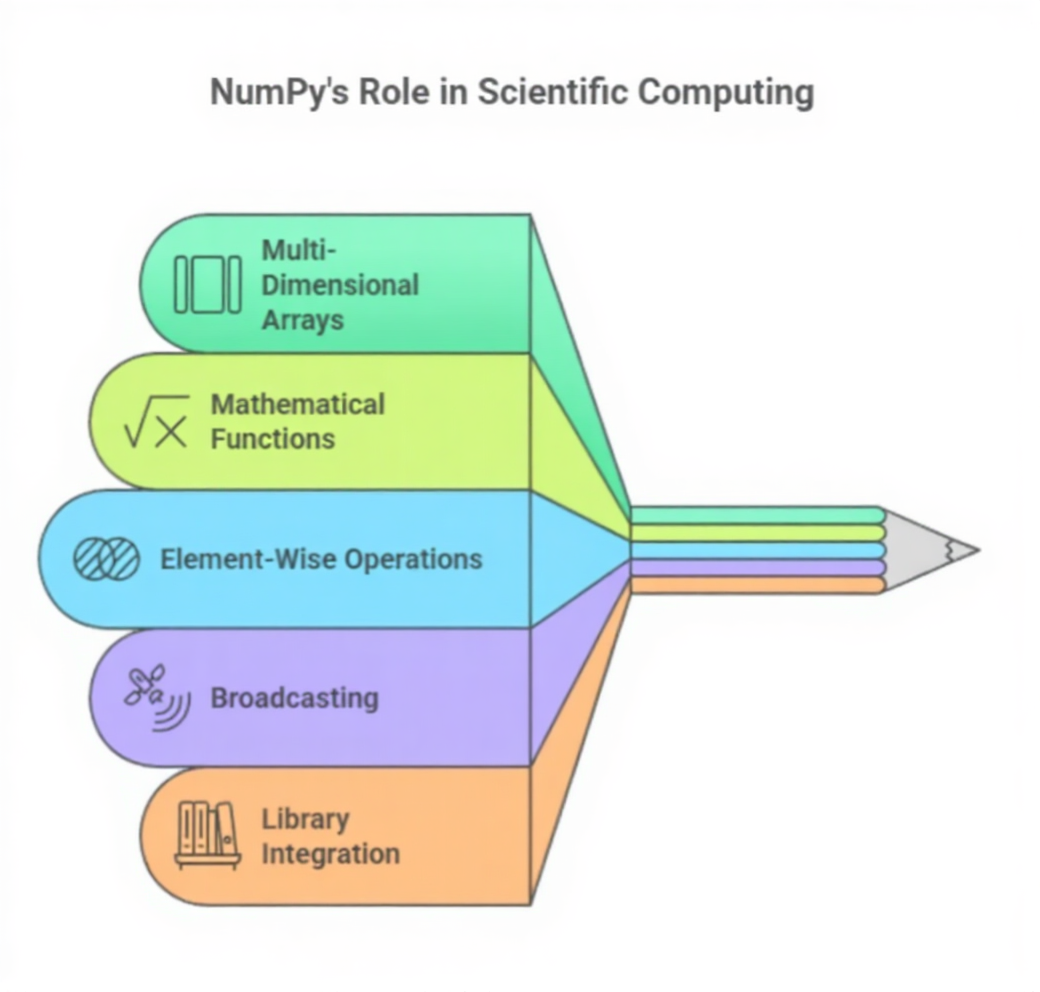

NumPy provides the mathematical backbone for nearly all machine learning operations, offering high-performance multidimensional arrays that execute numerical computations far more efficiently than native Python lists.

Its vectorized operations apply a calculation to an entire array in a single call rather than element by element, enabling rapid processing of arrays, matrices, and tensors. Most ML algorithms, from linear regression to deep neural networks, depend on this kind of computation.

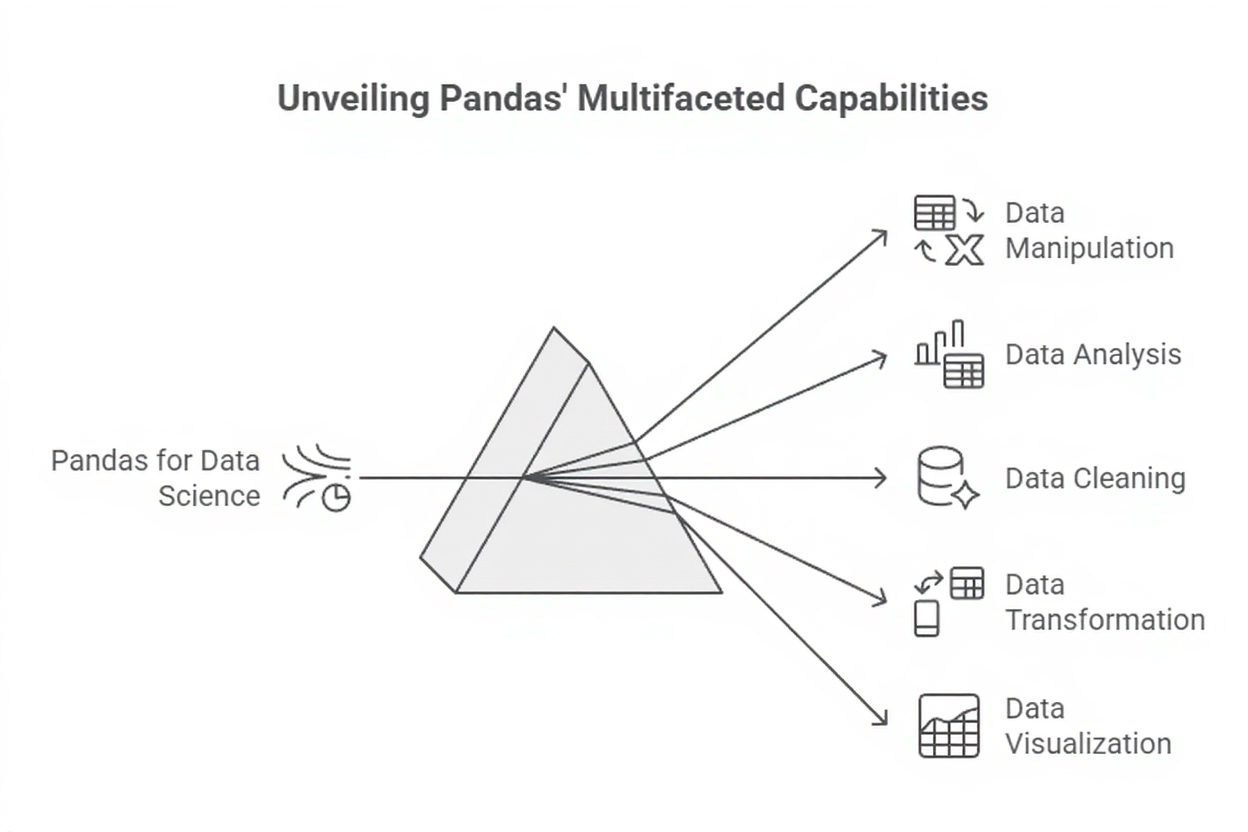

Pandas, on the other hand, serves as the primary tool for managing structured, table-like datasets.

Built on top of NumPy, pandas introduces Series and DataFrame structures that simplify data loading, cleaning, transformation, merging, and aggregation.

With its expressive indexing system, built-in handling of missing values, and intuitive functions, pandas enables data scientists to explore datasets quickly and prepare them for modeling with minimal code.

NUMPY

1. Foundation for Numerical and Scientific Computing

NumPy delivers optimized numerical operations that run significantly faster than standard Python loops by leveraging vectorization and contiguous memory storage.

This optimization allows operations on large matrices and arrays to complete efficiently, which is essential in machine learning tasks that require repeated mathematical transformations.

NumPy also underpins many scientific computing libraries, including pandas, SciPy, and scikit-learn, and deep learning frameworks interoperate with its arrays.

Because ML models depend heavily on linear algebra—dot products, matrix decompositions, tensor manipulations—NumPy becomes indispensable in implementing or understanding algorithmic behavior.

Without NumPy, numerical operations in Python would be slow, limiting model experimentation and large-scale analytics.
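The linear algebra operations mentioned above can be sketched briefly. This is a minimal illustration only; the matrix `A` and vector `x` are made-up values, not data from any particular model.

```python
import numpy as np

# Hypothetical 3x2 matrix and 2-element vector, chosen only for illustration.
A = np.array([[2.0, 0.0],
              [1.0, 3.0],
              [0.0, 1.0]])
x = np.array([1.0, -1.0])

# Matrix-vector product: each output entry is the dot product of a row of A with x.
y = A @ x

# Singular value decomposition, one of the matrix decompositions NumPy provides.
U, s, Vt = np.linalg.svd(A, full_matrices=False)

# Multiplying the factors back together recovers A up to floating-point error.
A_rebuilt = U @ np.diag(s) @ Vt
```

Here `y` evaluates to `[2., -2., -1.]`, and `A_rebuilt` matches `A` to within rounding.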

2. Support for Multidimensional Arrays

NumPy’s ndarray provides a flexible way to store and manipulate data in multiple dimensions, making it ideal for representing images, time-series sequences, and tabular feature matrices.

This structure allows developers to reshape, stack, slice, and broadcast data effortlessly, operations frequently used during feature engineering.

The ability to handle millions of values in tightly packed memory blocks enhances performance during model training.

Machine learning algorithms that require tensor operations—like dot products, convolutions, or distance calculations—depend on the structure and efficiency of these arrays.

NumPy ensures that complex transformations remain consistent and computationally feasible across ML pipelines.
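As a rough sketch of the reshape, slice, stack, and broadcast operations described in this section, consider a small batch of image-like arrays. The shapes and the name `images` are assumptions made for the example.

```python
import numpy as np

# Hypothetical batch of 4 grayscale "images", each 2x3 pixels.
images = np.arange(24, dtype=np.float64).reshape(4, 2, 3)

# Reshape: flatten each image into one row of a feature matrix.
flat = images.reshape(4, -1)                         # shape (4, 6)

# Slice: first two images, all rows, last pixel column only.
last_col = images[:2, :, -1]                         # shape (2, 2)

# Stack: append a second batch along the first axis.
stacked = np.concatenate([images, images], axis=0)   # shape (8, 2, 3)

# Broadcast: the per-pixel mean has shape (2, 3) and is subtracted
# from every image in the batch without an explicit loop.
centered = images - images.mean(axis=0)
```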

3. Vectorization for High-Speed Computation

Vectorized operations replace slow, explicit loops with low-level optimized routines written in C, dramatically improving execution speeds.

This capability is crucial when preparing datasets with hundreds of thousands of rows, where element-wise operations must be applied efficiently.

Vectorization ensures that calculations such as normalization, feature scaling, distance computation, and activation functions run smoothly without manual iteration.

In machine learning workflows where the same transformation is applied repeatedly across epochs or batches, vectorization reduces runtime bottlenecks.

NumPy thus enables researchers to experiment faster and iterate models more frequently.
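To make the contrast concrete, the sketch below standardizes the same synthetic data twice: once with an explicit Python loop and once with whole-array operations. The function names `zscore_loop` and `zscore_vectorized` are hypothetical, and the data is randomly generated.

```python
import numpy as np

rng = np.random.default_rng(0)
values = rng.normal(loc=50.0, scale=10.0, size=100_000)

def zscore_loop(x):
    """Standardize with explicit Python iteration (slow reference version)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    std = var ** 0.5
    return [(v - mean) / std for v in x]

def zscore_vectorized(x):
    """Same computation expressed as whole-array operations."""
    return (x - x.mean()) / x.std()

slow = np.array(zscore_loop(values))
fast = zscore_vectorized(values)
```

Both versions produce the same result up to floating-point rounding. Timing them (for example with `timeit`) typically shows the vectorized form running much faster, though the exact ratio depends on the machine.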

4. Foundation for Many Advanced ML Libraries

NumPy serves as the computational foundation for libraries such as SciPy and scikit-learn, which build directly on its arrays.

Deep learning frameworks such as TensorFlow and PyTorch implement their own tensor types and gradient engines, but they offer NumPy-like array semantics and convert to and from NumPy arrays.

Without NumPy's speed and shared array format, these higher-level libraries would be much harder to combine in a single workflow.

This foundational role ensures that learners who master NumPy can transition more easily into deep learning workflows.

It acts as a common thread connecting multiple ML ecosystems. In essence, NumPy builds the mathematical backbone that supports modern AI development.

5. Vectorized Operations for Large-Scale Scientific Tasks

NumPy’s vectorized operations allow users to apply complex transformations over huge datasets without explicit loops.

This capability is particularly useful in simulations, numerical modeling, and large-scale experiments.

Because operations occur in compiled C code, runtime performance remains consistently high.

Vectorization also helps reduce programming errors since fewer instructions are manually written.

The clarity it introduces leads to neater, more maintainable scientific scripts. In high-performance computing scenarios, this advantage becomes even more significant. Thus, vectorization elevates both productivity and computational throughput.
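A simple simulation shows the idea. The sketch below estimates pi by Monte Carlo sampling without a Python loop; the sample count and seed are arbitrary choices for the example.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 1_000_000

# Draw n random points in the unit square in one call.
points = rng.random((n, 2))

# Boolean array: True where a point falls inside the quarter circle of radius 1.
inside = (points ** 2).sum(axis=1) <= 1.0

# The fraction of points inside approximates pi / 4.
pi_estimate = 4.0 * inside.mean()
```

With a million samples the estimate usually lands within a few thousandths of pi, and the whole computation is three array expressions.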

6. Strong Integration with Visualization Tools

NumPy arrays feed seamlessly into Matplotlib, Plotly, seaborn, and other visualization tools.

This integration simplifies tasks such as plotting trends, generating histograms, or visualizing model predictions.

Since most plotting libraries accept NumPy arrays directly, the workflow becomes intuitive for data scientists.

The consistency of shape and dimension handling ensures fewer mismatches during visual analysis.

Visualizing data early often reveals patterns that guide better preprocessing or feature engineering.

This synergy between NumPy and visualization frameworks accelerates exploratory data analysis. As a result, data moves from computation to plot without intermediate conversion steps.

Challenges of NumPy

The following points highlight the major limitations of NumPy when working with real-world datasets.

1. Limited Handling of Heterogeneous Data

NumPy arrays require uniform data types, which can be restrictive when working with datasets containing mixed formats like strings, categories, and numbers.

This limitation forces developers to convert data into homogeneous numerical formats before processing, adding extra steps to the workflow.

Handling missing values is also awkward: floating-point arrays can use np.nan, but integer and string arrays have no built-in missing-value marker, so developers fall back on sentinel values or masked arrays.

When dealing with real-world datasets that include varied attribute types, these restrictions make pandas more suitable than NumPy alone.

Despite its numerical power, NumPy is less intuitive for raw data exploration and mixed-format processing.
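The two limitations above can be seen in a few lines. This is an illustrative sketch with made-up values, not a recommended way to store mixed data.

```python
import numpy as np

# Mixed Python values are coerced to one common dtype, here a string type.
mixed = np.array([1, "red", 3.5])
mixed_kind = mixed.dtype.kind          # "U" means Unicode string

# Float arrays can mark missing values with NaN, but ordinary reductions propagate it.
ages = np.array([25.0, np.nan, 40.0])
plain_mean = ages.mean()               # NaN
clean_mean = np.nanmean(ages)          # ignores the NaN entry

# Integer arrays have no NaN at all: the assignment below raises ValueError.
ints = np.array([1, 2, 3])
try:
    ints[0] = np.nan
    int_accepts_nan = True
except ValueError:
    int_accepts_nan = False
```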

2. Less User-Friendly for Initial Data Examination

While NumPy excels in computation, it lacks built-in features for descriptive analysis such as labeled columns, easy filtering conditions, or human-readable summaries.

Developers must manually implement indexing logic or rely on additional libraries for tasks that pandas performs natively.

This creates friction when performing exploratory data analysis or interpreting dataset structure.

Because machine learning projects rely heavily on fast initial exploration, NumPy’s lower-level interface may slow down this stage unless combined with pandas.
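The friction is easiest to see in a filter. In the sketch below, column meanings exist only in the programmer's head (or in hand-written constants such as `AGE` and `INCOME`, which are hypothetical names for this example).

```python
import numpy as np

# Columns are identified by position only: 0 = age, 1 = income (assumed layout).
data = np.array([[25, 40_000],
                 [32, 52_000],
                 [47, 88_000]], dtype=np.float64)
AGE, INCOME = 0, 1

# Filter rows with a boolean mask built from one positional column.
over_30 = data[data[:, AGE] > 30]

# Aggregate another positional column of the filtered rows.
mean_income_over_30 = over_30[:, INCOME].mean()
```

The code works, but nothing in `data` itself records which column is which, so a reordered input silently changes the result.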

Practical Examples Using NumPy

1. Preparing a Feature Matrix for Machine Learning

NumPy is often used to convert raw values into numerical arrays that act as inputs to ML algorithms.

For example, after loading data, categorical features may be encoded numerically and combined with continuous attributes into a single ndarray.

This matrix allows operations like normalization, standardization, or polynomial feature creation to be executed quickly.

The resulting array is then passed directly into scikit-learn models, which rely heavily on NumPy for internal operations.

This workflow ensures consistency and computational speed throughout the pipeline.
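One possible way to assemble such a matrix with NumPy alone is sketched below. The feature names `colors` and `sizes` are invented for the example, and one-hot encoding is just one of several encoding choices.

```python
import numpy as np

# Hypothetical raw features: one categorical, one continuous.
colors = np.array(["red", "green", "red", "blue"])
sizes = np.array([1.0, 2.0, 3.0, 4.0])

# np.unique returns the sorted categories and, for each row, its category index.
categories, codes = np.unique(colors, return_inverse=True)

# One-hot encode by selecting rows of an identity matrix.
one_hot = np.eye(len(categories))[codes]

# Standardize the continuous feature to zero mean and unit variance.
sizes_std = (sizes - sizes.mean()) / sizes.std()

# Combine everything into a single 2-D feature matrix.
X = np.column_stack([one_hot, sizes_std])
```

The resulting `X` has shape `(4, 4)`: three one-hot columns followed by the standardized size.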

2. Performing Matrix Operations for Linear Regression

Linear regression relies on matrix multiplication to compute predictions and gradients. NumPy provides optimized functions like dot() and matmul(), along with broadcasting, that make these calculations efficient even on large datasets.

These operations are critical during both model training and evaluation phases.

Implementing linear regression from scratch becomes manageable with NumPy because of its intuitive matrix manipulation abilities.

This example illustrates how essential the library is for understanding the inner workings of ML algorithms.
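A from-scratch version might look like the following sketch. It fits synthetic data with plain gradient descent on mean squared error and then checks the result against `np.linalg.lstsq`. The learning rate, iteration count, and true coefficients are arbitrary assumptions for the demonstration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples = 200

# Synthetic data: y = 2*x1 - 3*x2 + 0.5 plus a little noise (assumed values).
X = rng.normal(size=(n_samples, 2))
true_w = np.array([2.0, -3.0])
true_b = 0.5
y = X @ true_w + true_b + rng.normal(scale=0.01, size=n_samples)

# Append a column of ones so the intercept is learned as an extra weight.
Xb = np.column_stack([X, np.ones(n_samples)])

w = np.zeros(3)
learning_rate = 0.1
for _ in range(500):
    residual = Xb @ w - y                         # predictions minus targets
    grad = 2.0 * Xb.T @ residual / n_samples      # gradient of mean squared error
    w -= learning_rate * grad

# Closed-form least-squares solution for comparison.
w_lstsq, *_ = np.linalg.lstsq(Xb, y, rcond=None)
```

After training, `w` should be close to `[2.0, -3.0, 0.5]` and agree with `w_lstsq`.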

PANDAS

1. Structured Data Handling Through DataFrames

pandas introduces the DataFrame, a highly intuitive structure that resembles a spreadsheet or database table.

This makes it the most convenient tool for loading, inspecting, and manipulating structured data commonly used in machine learning.

Developers can quickly filter rows, select columns, compute aggregates, and perform group-based analysis.

These capabilities allow rapid exploration and quality checks before modeling. Because ML relies on clean, well-organized data, pandas becomes the starting point for nearly every workflow involving CSV, Excel, SQL, or JSON files.
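The basic operations listed above can be sketched on a tiny table. The column names and values are made up for illustration.

```python
import pandas as pd

# Hypothetical sales table.
df = pd.DataFrame({
    "city": ["Oslo", "Oslo", "Lima", "Lima", "Pune"],
    "year": [2022, 2023, 2022, 2023, 2023],
    "sales": [100, 120, 80, 95, 60],
})

recent = df[df["year"] == 2023]                       # filter rows by condition
subset = df[["city", "sales"]]                        # select columns by name
total_sales = df["sales"].sum()                       # aggregate a column
mean_by_city = df.groupby("city")["sales"].mean()     # group-based analysis
```

Each step refers to columns by label, which keeps the intent readable even as the table grows.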

2. Rich Functionality for Cleaning and Preprocessing

Real-world datasets often contain duplicates, missing values, inconsistent formats, or noisy text. pandas offers specialized functions such as dropna(), fillna(), astype(), and apply() that allow users to clean data systematically.

It simplifies tasks like merging tables, reshaping data, handling time-series, and encoding categorical values.

These operations form the backbone of feature engineering.

The efficiency and expressiveness of pandas accelerate preprocessing, reducing the time required to prepare model-ready datasets.
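A short cleaning pass using the four functions named above might look like this. The table `raw` is invented, and the choices made here (dropping rows without a city, filling income with the median) are illustrative assumptions rather than general recommendations.

```python
import numpy as np
import pandas as pd

# Hypothetical messy input: numbers stored as text, a missing income, untidy city names.
raw = pd.DataFrame({
    "age": ["34", "41", "29", "38"],
    "income": [52000.0, np.nan, 61000.0, 48000.0],
    "city": [" oslo", "Lima ", "lima", None],
})

# dropna: discard rows that have no city.
clean = raw.dropna(subset=["city"]).copy()

# fillna: replace the missing income with the median of the remaining rows.
clean["income"] = clean["income"].fillna(clean["income"].median())

# astype: convert text digits to integers.
clean["age"] = clean["age"].astype(int)

# apply: normalize whitespace and capitalization with a custom function.
clean["city"] = clean["city"].apply(lambda s: s.strip().title())
```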

3. Seamless Integration With Other ML Libraries

pandas is fully compatible with NumPy and scikit-learn, enabling smooth transitions from data exploration to model training.

Because DataFrames can be easily converted into NumPy arrays, they act as a bridge between raw data and machine learning algorithms.

This interoperability ensures that workflows remain fluid and reduces the overhead of manual conversions. Tools such as scikit-learn pipelines depend on this compatibility to maintain consistent preprocessing steps.
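The bridge from DataFrame to array is a single method call. The sketch below uses made-up columns and stops at the NumPy arrays.

```python
import pandas as pd

# Hypothetical labeled dataset.
df = pd.DataFrame({
    "height": [1.6, 1.8, 1.7],
    "weight": [60.0, 82.0, 71.0],
    "label": [0, 1, 1],
})

# Feature matrix and target vector as plain NumPy arrays.
X = df[["height", "weight"]].to_numpy()   # shape (3, 2), float64
y = df["label"].to_numpy()                # shape (3,)
```

Arrays like `X` and `y` are the form that a scikit-learn estimator's `fit` method typically receives, although many estimators also accept the DataFrame directly.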

4. Built-In Time-Series Intelligence

Pandas excels in handling time-indexed data, offering powerful capabilities such as resampling, frequency conversion, lag creation, and rolling statistics.

These features make it invaluable for forecasting tasks, sensor monitoring, financial analysis, and real-time analytics. Time-based indexing brings clarity to patterns like seasonality, volatility, and trend shifts.

Analysts can effortlessly align irregular timestamps, fill missing intervals, or aggregate values.

This reduces complex temporal manipulation to simple method calls. Pandas essentially transforms raw chronological data into structured insights. For any time-dependent ML pipeline, its time-series tools are indispensable.
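Resampling, lag creation, and rolling statistics can be sketched on a short series. The timestamps and readings below are invented for the example.

```python
import pandas as pd

# Six hypothetical sensor readings, evenly spaced 12 hours apart over three days.
idx = pd.date_range("2024-01-01 00:00", "2024-01-03 12:00", periods=6)
readings = pd.Series([10.0, 12.0, 11.0, 15.0, 14.0, 18.0], index=idx)

# Resample: aggregate the readings into one mean value per calendar day.
daily_mean = readings.resample("D").mean()

# Lag feature: the previous reading, aligned to the current timestamp.
lag_1 = readings.shift(1)

# Rolling statistic: mean over a sliding window of three readings.
rolling_mean = readings.rolling(window=3).mean()
```

The daily means come out as 11.0, 13.0, and 16.0. The first lag value and the first two rolling values are NaN because no earlier data exists to fill them.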

5. Efficient Methods for Handling Messy Data

Pandas provides robust mechanisms for tackling missing, inconsistent, or duplicated records. Functions like fillna(), dropna(), replace(), and duplicated() simplify complex cleaning operations.

Real-world datasets almost always contain noise, and pandas reduces the effort needed to prepare them for modeling.

Its flexible datatypes allow seamless handling of numeric, categorical, text, and date fields in one structure.

This holistic support shortens preprocessing cycles significantly.

Cleaner data tends to improve model accuracy and reliability. As a result, pandas becomes a core tool for turning chaotic data into structured formats.
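As an illustration of duplicated() and replace(), the sketch below removes an exact duplicate row and converts placeholder codes. The sentinel values `-1.0` and `"N/A"` are assumptions about how a hypothetical source system marks missing data.

```python
import numpy as np
import pandas as pd

# Hypothetical records with one exact duplicate and two placeholder codes.
df = pd.DataFrame({
    "id": [1, 2, 2, 3],
    "status": ["ok", "N/A", "N/A", "ok"],
    "score": [0.9, -1.0, -1.0, 0.7],
})

# duplicated: flag rows that repeat an earlier row exactly.
dup_mask = df.duplicated()

# Keep only the first occurrence of each row.
deduped = df.drop_duplicates().copy()

# replace: turn the numeric sentinel into a real missing value,
# and the text placeholder into an explicit category.
deduped["score"] = deduped["score"].replace(-1.0, np.nan)
deduped["status"] = deduped["status"].replace("N/A", "unknown")
```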

6. Easy Conversion Between Data Formats

Pandas integrates smoothly with external file formats such as CSV, JSON, Excel, SQL databases, Parquet, and more. Its I/O functions enable quick loading and exporting of datasets with a single command.

This convenience helps analysts interact with diverse data sources without switching tools.

It also supports large-scale data ingestion pipelines in enterprise environments.

Such format flexibility makes pandas suitable for both small projects and full-scale ETL processes.

The ability to merge multiple sources ensures a unified and consistent dataset for analysis. Ultimately, pandas simplifies the journey from raw data to model-ready inputs.
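A round trip through two formats can be sketched without touching the file system by using in-memory buffers. In practice the same functions accept file paths; the small table here is invented.

```python
import io
import pandas as pd

df = pd.DataFrame({"name": ["a", "b"], "value": [1, 2]})

# Export to CSV text, then load it back.
csv_text = df.to_csv(index=False)
from_csv = pd.read_csv(io.StringIO(csv_text))

# Export to JSON (one object per row), then load it back.
json_text = df.to_json(orient="records")
from_json = pd.read_json(io.StringIO(json_text))
```

Both `from_csv` and `from_json` contain the same rows and columns as the original `df`.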

Challenges of Pandas

Below is the list of challenges that arise when using pandas for large and complex datasets.

1. Memory Usage With Extremely Large Datasets

pandas stores data in memory, which becomes problematic when dealing with massive datasets that exceed system capacity. Operations like joins and group operations can cause significant memory spikes.

Machine learning teams working with high-volume data may need alternatives like Dask, PySpark, or chunked processing.

While pandas is excellent for mid-sized data, its limitations become apparent in large-scale production environments.
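Chunked processing, one of the alternatives mentioned above, can be sketched with the `chunksize` parameter of `pd.read_csv`. The data below is generated in memory so the example is self-contained; a real use would point at a large file on disk, and the chunk size of 250 is an arbitrary choice.

```python
import io
import pandas as pd

# Stand-in for a large CSV file: a single column holding the integers 1..1000.
csv_text = "value\n" + "\n".join(str(i) for i in range(1, 1001))

total = 0
row_count = 0

# With chunksize set, read_csv yields DataFrames of at most 250 rows each,
# so only one chunk needs to be in memory at a time.
for chunk in pd.read_csv(io.StringIO(csv_text), chunksize=250):
    total += chunk["value"].sum()
    row_count += len(chunk)

mean_value = total / row_count
```

The running totals give the same mean (500.5) as loading everything at once, while keeping peak memory bounded by the chunk size.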

2. Performance Limitations for Complex Loops

Although pandas supports vectorized operations, using explicit loops or applying custom Python functions can slow processing dramatically.

If data scientists are not careful, certain operations may run far slower than optimized NumPy equivalents.

Understanding which operations are vectorized and which are not becomes crucial. This challenge pushes developers to write efficient transformations or use specialized functions.
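The difference is visible even in a tiny example. Both lines below compute the same revenue column, but the first calls a Python function once per row while the second is a single vectorized expression. The column names are hypothetical.

```python
import pandas as pd

df = pd.DataFrame({"price": [10.0, 20.0, 30.0], "qty": [1, 2, 3]})

# Row-wise apply: a Python function is invoked for every row.
row_wise = df.apply(lambda row: row["price"] * row["qty"], axis=1)

# Vectorized: one column-level multiplication.
vectorized = df["price"] * df["qty"]
```

On three rows the cost is negligible. On large tables the row-wise form is usually far slower, so it is worth reserving `apply` with `axis=1` for logic that cannot be expressed with column operations.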

Practical Examples Using Pandas

1. Loading and Transforming Real-World Datasets

A typical machine learning workflow begins by loading a CSV file using pd.read_csv(), followed by examining the dataset with functions like head(), info(), and describe().

Missing values can be imputed, categorical columns encoded, and irrelevant rows filtered.

These steps convert raw data into a structured, clean form ready for modeling. pandas makes such operations quick and readable, reducing the cognitive load on developers.
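The workflow described above can be sketched end to end. The CSV content is embedded as a string so the example runs on its own, and the imputation and filtering rules (median age, mean spend, keeping rows with spend above 50) are assumptions picked for illustration.

```python
import io
import pandas as pd

# Hypothetical CSV with a missing age and a missing spend value.
csv_text = """age,plan,spend
34,basic,20.5
,premium,99.0
29,basic,
51,premium,120.0
"""

df = pd.read_csv(io.StringIO(csv_text))

# Quick inspection of the raw data.
preview = df.head()
summary = df.describe()

# Impute missing values.
df["age"] = df["age"].fillna(df["age"].median())
df["spend"] = df["spend"].fillna(df["spend"].mean())

# Encode the categorical column as indicator columns.
df = pd.get_dummies(df, columns=["plan"])

# Filter out rows considered irrelevant for this (assumed) analysis.
df = df[df["spend"] > 50]
```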

2. Feature Engineering for Predictive Models

pandas supports operations such as binning continuous values, generating time-based features, constructing rolling averages, and computing group-based statistics.

These transformations provide essential signals that improve model accuracy.

For example, creating a new column that captures the difference between two features may help tree-based models detect important relationships. pandas enables these operations in a few lines of code, dramatically speeding up experimentation.
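Several of these transformations are sketched below on a small invented table. The column names, bin edges, and window size are assumptions for the example.

```python
import pandas as pd

# Hypothetical daily prices and costs for two stores.
df = pd.DataFrame({
    "store": ["A", "A", "A", "B", "B", "B"],
    "date": pd.to_datetime(["2024-01-01", "2024-01-02", "2024-01-03"] * 2),
    "price": [10.0, 12.0, 11.0, 20.0, 18.0, 25.0],
    "cost": [6.0, 7.0, 7.0, 15.0, 12.0, 16.0],
})

# Difference between two features.
df["margin"] = df["price"] - df["cost"]

# Binning a continuous value into labeled ranges (right edges inclusive).
df["price_band"] = pd.cut(df["price"], bins=[0, 11, 19, 100],
                          labels=["low", "mid", "high"])

# Time-based feature: day of week, where Monday is 0.
df["day_of_week"] = df["date"].dt.dayofweek

# Group-based statistic broadcast back to every row of the group.
df["store_mean_price"] = df.groupby("store")["price"].transform("mean")

# Rolling average over two consecutive days within each store.
df["price_roll2"] = df.groupby("store")["price"].transform(
    lambda s: s.rolling(2).mean()
)
```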