Artificial Intelligence (AI) and Machine Learning (ML) are transforming industries by enabling systems to learn from data, automate decisions, and uncover insights at a scale beyond human capability.

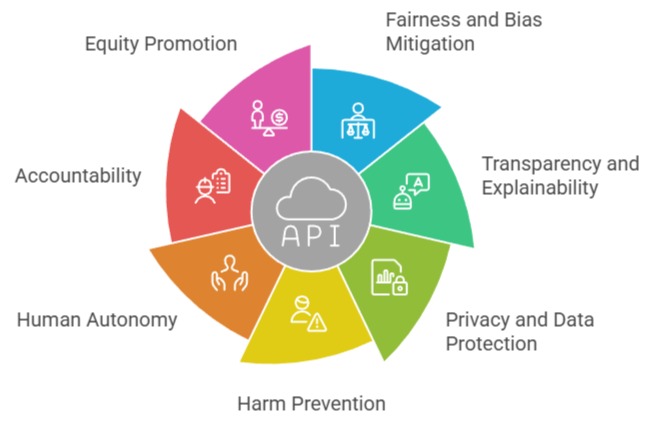

While these technologies bring immense benefits, they also introduce complex ethical challenges that must be understood to ensure responsible and trustworthy use.

As AI becomes more deeply embedded in everyday applications, such as healthcare diagnostics, financial forecasting, hiring systems, autonomous vehicles, security tools, and personalized digital services, the consequences of unethical design, biased data, or uncontrolled deployment can directly affect individuals, organizations, and society.

A major ethical concern arises from bias in data, which can lead to unfair or discriminatory outcomes when models reinforce existing societal inequalities.

Issues of privacy and data protection are also critical, as AI systems often rely on large volumes of personal or sensitive information.

Without proper safeguards, this can lead to misuse, surveillance, or unauthorized access.

Another key challenge is transparency and explainability—many AI models operate as “black boxes,” making it difficult for users or regulators to understand how decisions are made.

Ethical use of AI also requires addressing accountability.

When an AI decision causes harm, it may be unclear who is responsible: the developer, the organization using it, or the model itself.

Additionally, rapid AI adoption raises concerns about job displacement, autonomy, and the potential for misuse, such as deepfakes or automated cyberattacks.

Understanding these ethical implications is essential for building AI systems that are fair, reliable, and aligned with societal values.

By incorporating transparency, fairness, human oversight, and strong governance frameworks, organizations can help ensure that AI and ML technologies contribute positively to individuals and communities.

Importance

1. Ensuring Fairness and Minimizing Algorithmic Bias

AI models often learn biases embedded in real-world data, which can cause them to treat different groups unequally.

Biased hiring recommendations, skewed credit approvals, or unfair medical risk predictions can result when training data lacks diversity or encodes historical discrimination.

Ethical practice requires careful auditing, bias detection tools, and inclusive dataset curation.

Practitioners must also conduct impact assessments to understand how model predictions affect vulnerable groups.

Without such diligence, AI systems risk reinforcing social inequalities rather than reducing them.
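One simple form of bias detection is to compare how often a model gives a positive outcome to each group. The sketch below computes per-group selection rates and the gap between them (often called the demographic parity difference). The group labels, predictions, and function names are illustrative assumptions, not part of any specific auditing tool, and a small gap on one metric does not by itself show that a model is fair.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Return the fraction of positive (1) predictions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in zip(groups, predictions):
        totals[group] += 1
        positives[group] += int(prediction == 1)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(groups, predictions):
    """Return the difference between the highest and lowest selection rate."""
    rates = selection_rates(groups, predictions)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit data: group membership and model decisions (1 = approved).
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
gap = demographic_parity_gap(groups, predictions)
print(rates, gap)
```

In this toy data, group "a" is approved 75% of the time and group "b" 25% of the time, so the gap is 0.5. A gap of that size would normally prompt a closer look at the training data and the features used.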

2. Strengthening Transparency and Explainability in Models

Opaque or “black-box” systems make it difficult for users to understand how predictions are generated.

This becomes problematic when AI influences decisions that directly affect individuals, such as parole rulings or insurance pricing.

Explainability techniques—like feature attribution, interpretable models, or visualization tools—help bridge this gap by revealing the logic underlying model outputs.

Clear explanations enable accountability, foster trust, and help organizations meet regulatory requirements.

Transparency is especially important as AI becomes embedded in mission-critical environments.
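Feature attribution can be illustrated with permutation importance: shuffle the values of one feature and measure how much the model's accuracy drops. The following is a minimal sketch using only the Python standard library. The model `toy_model`, the feature names, and the data are hypothetical and chosen so the result is easy to reason about; production work would typically rely on an established library instead.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows for which the model's output matches the label."""
    correct = sum(model(row) == label for row, label in zip(rows, labels))
    return correct / len(rows)


def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Average accuracy drop when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in rows]
            rng.shuffle(column)
            # Rebuild each row with feature j replaced by a shuffled value.
            shuffled = [row[:j] + (value,) + row[j + 1:]
                        for row, value in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical model: decides on income only and ignores shoe size."""
    income, shoe_size = row
    return int(income > 50)


# Hypothetical rows of (income, shoe_size); labels follow the model's own rule.
rows = [(30, 9), (80, 7), (45, 10), (95, 8), (60, 11), (20, 6)]
labels = [toy_model(row) for row in rows]

importances = permutation_importance(toy_model, rows, labels)
print(importances)
```

Because `toy_model` never reads the second feature, shuffling it cannot change any prediction and its importance is zero, while shuffling income can only hurt accuracy. An explanation of this kind tells a reviewer which inputs a decision actually depends on.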

3. Protecting Privacy and Responsible Data Usage

AI systems frequently rely on large datasets, many of which contain personal or sensitive information.

Ethical data collection requires obtaining meaningful consent, minimizing the amount of personal data stored, and preventing unauthorized access or misuse.

If an AI model inadvertently exposes private information or leaks identifiable patterns, it can harm individuals and violate privacy regulations.

Practices like differential privacy, encryption-based learning (for example, homomorphic encryption or secure multi-party computation), and anonymization help reduce these risks.

Prioritizing privacy ensures that innovation does not occur at the expense of individual rights.
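To make differential privacy more concrete, the sketch below applies the Laplace mechanism to a counting query: noise with scale 1/epsilon is added to the true count, since adding or removing one person changes a count by at most 1. The records, the `is_flagged` predicate, and the epsilon value are assumptions for illustration, and a real deployment would also need to track the total privacy budget across queries.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng):
    """Return a noisy count of matching records.

    A counting query has sensitivity 1, so the Laplace scale is 1 / epsilon.
    Smaller epsilon means more noise and stronger privacy.
    """
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)


def is_flagged(record):
    """Hypothetical predicate: does the record carry a sensitive flag?"""
    return record["flagged"]


# Hypothetical dataset with three flagged records out of five.
records = [
    {"id": 1, "flagged": True},
    {"id": 2, "flagged": False},
    {"id": 3, "flagged": True},
    {"id": 4, "flagged": False},
    {"id": 5, "flagged": True},
]

rng = random.Random(42)
noisy = private_count(records, is_flagged, epsilon=1.0, rng=rng)
print(noisy)
```

Any single noisy answer may be off by a few units, which is what protects individuals, while the average over many independent answers stays close to the true count of 3.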

4. Preventing Harmful Deployment and Misuse of AI Technologies

While AI can be used for good, its capabilities can also be exploited for harmful purposes such as surveillance, disinformation, cyberattacks, or automated discrimination.

Ethical development requires anticipating worst-case scenarios and creating safeguards to prevent malicious use.

This includes setting internal governance standards, monitoring system behavior after deployment, and restricting high-risk functionalities.

By addressing potential misuse early on, organizations can reduce the likelihood of unintended societal consequences.

5. Preserving Human Autonomy and Decision-Making Power

Overreliance on automated predictions can weaken human judgment, especially when individuals defer too easily to machine outputs.

Ethical AI emphasizes the importance of keeping humans in control, particularly in high-stakes domains.

Decision-support systems should clarify when human intervention is necessary and avoid fully automated conclusions unless safety and reliability have been rigorously validated.

Preserving autonomy ensures that AI augments human capabilities rather than replacing critical thinking or moral responsibility.
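One common way to keep humans in control is to route uncertain or high-stakes cases to a reviewer instead of acting on the model output automatically. The sketch below shows that idea under stated assumptions: the function name, the outcome labels, and the 0.9 threshold are hypothetical, and a suitable threshold depends on the domain and on how well calibrated the model's probabilities are.

```python
def route_decision(probability, threshold=0.9, high_stakes=False):
    """Decide whether a prediction may be automated or needs human review.

    probability: model's estimated probability of the positive outcome.
    threshold:   minimum confidence required for an automated decision.
    high_stakes: if True, the case always goes to a human reviewer.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if high_stakes:
        return "human_review"
    # Confidence is how far the model leans toward either outcome.
    confidence = max(probability, 1.0 - probability)
    if confidence < threshold:
        return "human_review"
    return "auto_positive" if probability >= 0.5 else "auto_negative"


print(route_decision(0.97))                    # confident positive
print(route_decision(0.55))                    # uncertain, goes to a person
print(route_decision(0.97, high_stakes=True))  # always reviewed
```

The design choice here is that automation is the exception that must be earned by high confidence and low stakes, so the default path for anything ambiguous is a human decision.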

6. Establishing Accountability for AI-Driven Outcomes

When AI systems make mistakes, it can be unclear who is responsible—the developers, the data providers, or the deploying organization.

Clear accountability frameworks help determine ownership of decisions and establish oversight structures for monitoring performance.

Ethical responsibility includes documenting model design choices, maintaining audit logs, and ensuring that someone can answer for the system’s predictions.

Accountability protects both users and organizations while encouraging more thoughtful system design.
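Audit logging can be as simple as writing one structured record per prediction. The sketch below is one possible shape for such a record, not a standard: the field names, the model version string, and the owner address are hypothetical. It stores a hash of the input instead of the raw features, which keeps the log useful for tracing decisions without copying personal data into it.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_audit_record(model_version, features, prediction, owner):
    """Build a structured audit entry for a single model prediction."""
    # Serialize with sorted keys so the same input always hashes the same way.
    payload = json.dumps(features, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "prediction": prediction,
        "accountable_owner": owner,
    }


# Hypothetical example: one credit decision logged as a JSON line.
record = make_audit_record(
    model_version="credit-risk-1.4.0",
    features={"income": 52000, "tenure_months": 18},
    prediction="approve",
    owner="risk-team@example.com",
)
print(json.dumps(record))
```

Recording the model version and a named owner with every prediction means that, when an outcome is challenged, there is a clear trail showing which system produced it and who can answer for it.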

7. Promoting Social, Economic, and Workforce Equity

AI-driven automation has the potential to reshape labor markets, reduce job opportunities, and deepen economic divides if not handled carefully.

Ethical considerations require evaluating how automation impacts workers and communities, especially those at risk of displacement.

Organizations should invest in re-skilling programs, equitable hiring processes, and responsible automation strategies.

Ethical AI development aims to create long-term societal value rather than prioritizing efficiency at the expense of people’s livelihoods.
