Scikit-learn is one of the most widely used machine learning libraries in Python, offering a clean and consistent interface for implementing algorithms across classification, regression, clustering, and dimensionality reduction.

Its design focuses on simplicity, efficiency, and modularity, making it ideal for beginners and experts alike.

With a uniform API, Scikit-learn allows users to easily load data, train models, optimize hyperparameters, and evaluate performance—all within a few lines of code.

Because the library abstracts complex mathematical operations, learners can focus more on understanding model behavior rather than implementation details.

It also provides integrated tools for preprocessing, feature engineering, and model validation, ensuring that the full ML workflow is covered.

Through its estimator-based architecture, Scikit-learn ensures reliability, reproducibility, and ease of experimentation.

Whether building a predictive model for real-world data or running experiments in a learning environment, Scikit-learn serves as a powerful foundation for executing machine learning pipelines in Python.

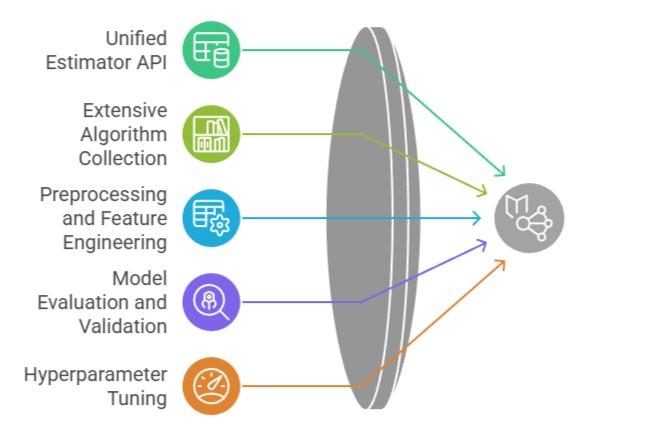

Importance of Implementing ML algorithms with Scikit-learn

1. Unified Estimator API

Scikit-learn’s estimator API enables users to train, fit, transform, and predict using a uniform method structure across all algorithms.

This consistency minimizes the learning curve because once learners understand how one model works, they can operate any other algorithm in the library with similar commands.

The shared syntax ensures that models can be interchanged seamlessly without restructuring code.

This property becomes valuable during model comparison, allowing efficient testing of alternatives such as switching from Logistic Regression to Support Vector Machines.

In educational settings, this clarity enhances comprehension while maintaining coding discipline.

For real projects, it boosts reproducibility and reduces implementation errors. Ultimately, the unified API helps streamline experimentation, testing, and debugging.

Example:

model = LinearRegression().fit(X_train, y_train)

preds = model.predict(X_test)2. Extensive Algorithm Collection

Scikit-learn features a vast set of algorithms covering supervised and unsupervised learning, allowing users to explore multiple modeling strategies without installing additional packages.

It includes popular techniques such as logistic regression, random forests, gradient boosting, k-means, and PCA.

This built-in diversity encourages experimentation across model families to find the most suitable solution for a given dataset.

Learners can evaluate how different algorithms behave with the same input, improving conceptual depth.

In industrial applications, this reduces overhead by eliminating the need to rely on multiple toolchains.

The integrated nature of the library helps maintain consistency and reduces compatibility issues. Teams benefit from having a single, standardized toolkit for multiple ML tasks.

Example:

from sklearn.cluster import KMeans3. Robust Preprocessing and Feature Engineering Tools

Scikit-learn provides utilities for handling missing values, encoding categories, normalizing data, and generating polynomial features.

These preprocessing components are essential because model quality heavily depends on data quality.

The library ensures transformations follow strict, reproducible steps through pipeline structures, preventing data leakage.

This enables users to maintain clean workflows where preprocessing and modeling steps are combined into a single executable sequence.

In real-world scenarios, consistent preprocessing guarantees that production data receives the same treatment as training data.

It also allows quick experimentation with different scaling or encoding strategies to observe their influence on model performance.

Such tools are indispensable for building reliable end-to-end ML systems.

Example:

from sklearn.preprocessing import StandardScaler4. Model Evaluation and Validation Support

Scikit-learn integrates various evaluation tools, including cross-validation, scoring metrics, confusion matrices, and regression error measures.

These utilities help diagnose underfitting, overfitting, or data imbalance issues effectively.

Because evaluation functions follow standardized formats, it becomes easier to compare models on consistent criteria.

This structure encourages rigorous experimentation and eliminates guesswork during assessment.

Furthermore, validation tools such as Stratified K-Fold ensure that model performance is reliable and not dependent on a single train-test split.

By enabling deep insights into model behavior, Scikit-learn helps practitioners make evidence-based decisions.

Example:

from sklearn.model_selection import cross_val_score5. Hyperparameter Tuning Mechanisms

Hyperparameter search tools such as GridSearchCV and RandomizedSearchCV automate the selection of optimal model configurations.

Instead of relying on intuition alone, learners can systematically explore a range of parameters using controlled experiments.

This increases the likelihood of finding high-performing models that generalize well.

Hyperparameter tuning also helps reveal how sensitive models are to settings like regularization strength or decision tree depth.

These tools reduce manual trial-and-error and enforce a more scientific tuning approach.

For production environments, automated search ensures consistent tuning workflows across datasets.

This capability helps bridge the gap between experimentation and deployment.

Example:

GridSearchCV(SVC(), param_grid)